Patrick (Pengcheng) Jiang

@patpcj

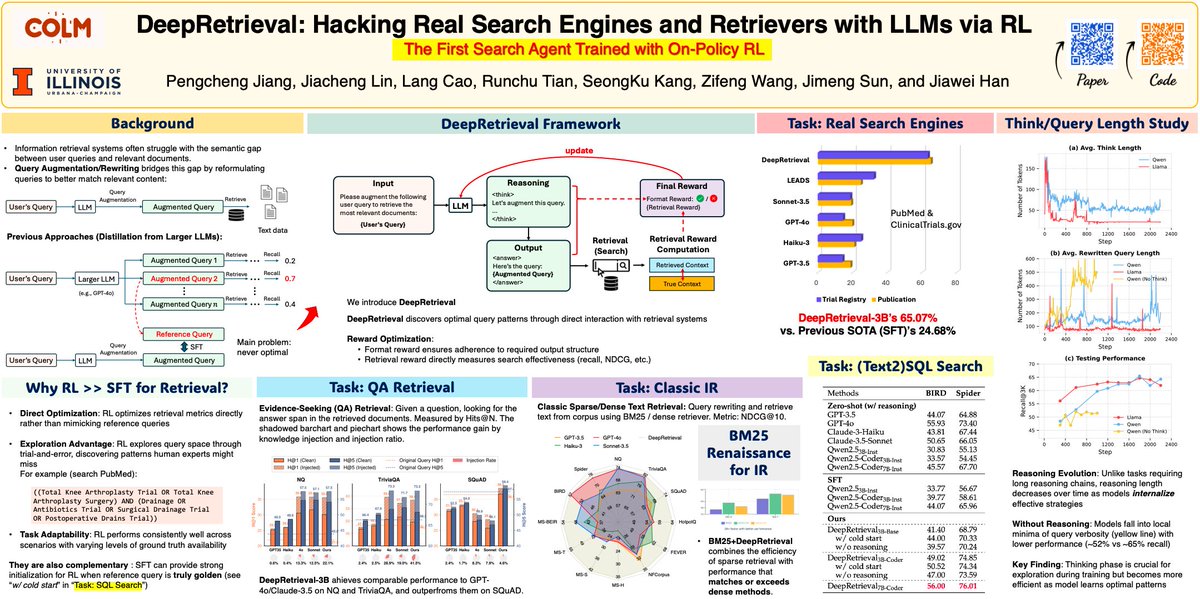

CS PhD @ UIUC; prev: SR @GoogleResearch; research: Agentic AI, Knowledge Indexing, Retrieval; recent work: DeepRetrieval, s3; open to chat

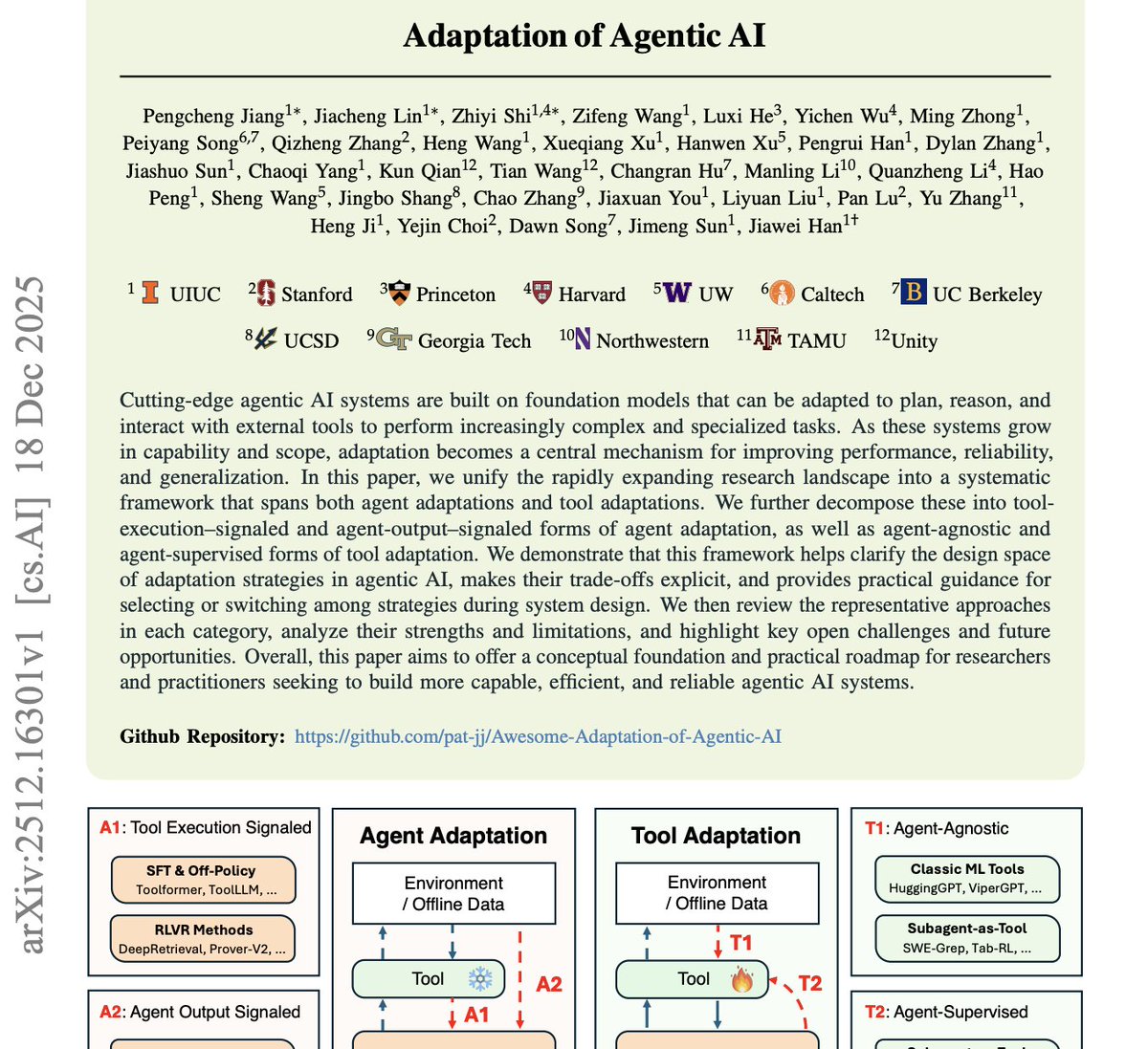

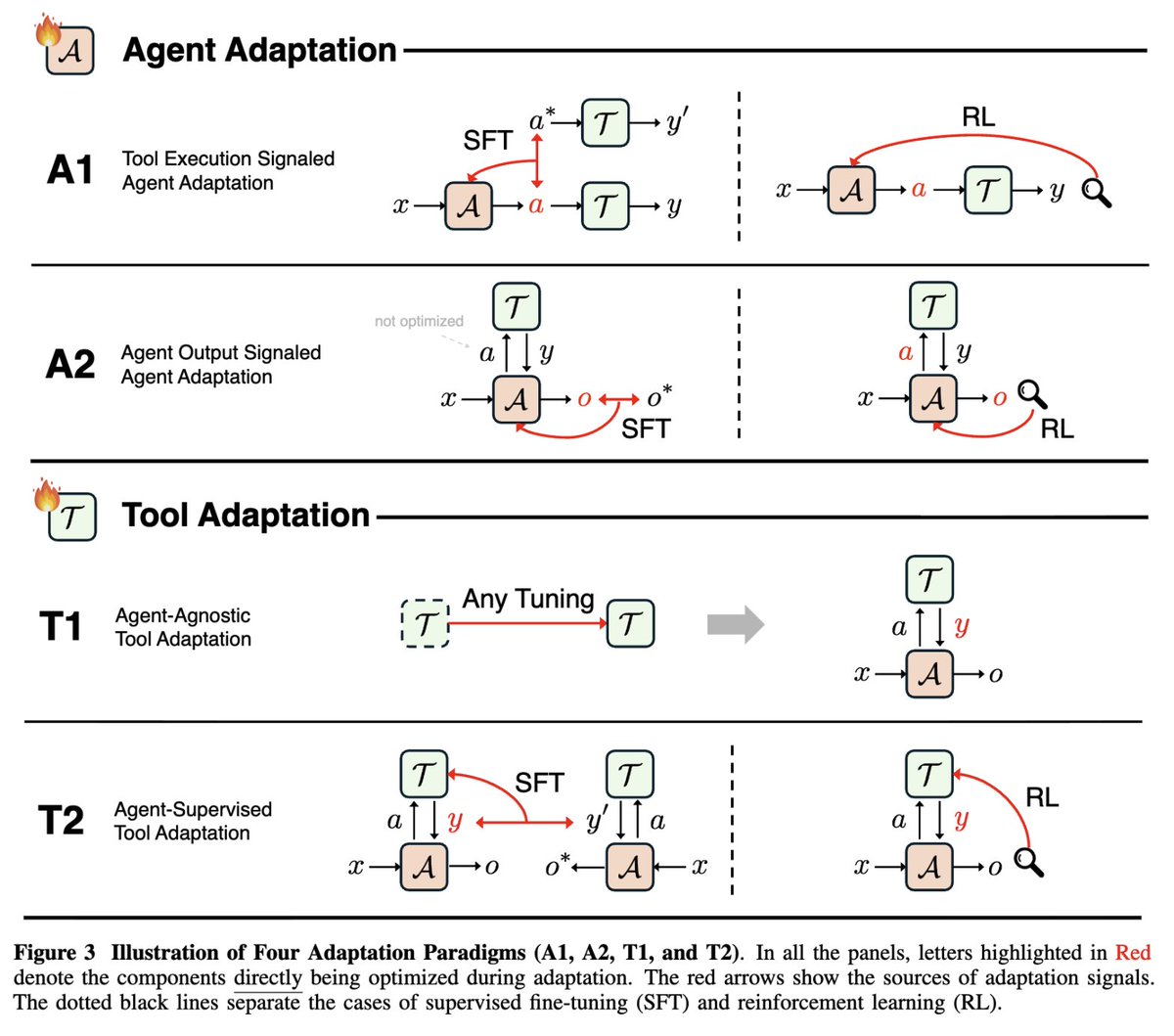

Excited to share our survey "Adaptation of Agentic AI" (led by @patpcj & @jclin808) unifying the rapidly growing landscape of agent adaptation (tool-execution- vs. agent-output-signaled) & tool adaptation (agent-agnostic vs. agent-supervised)! Preprint: github.com/pat-jj/Awesome…

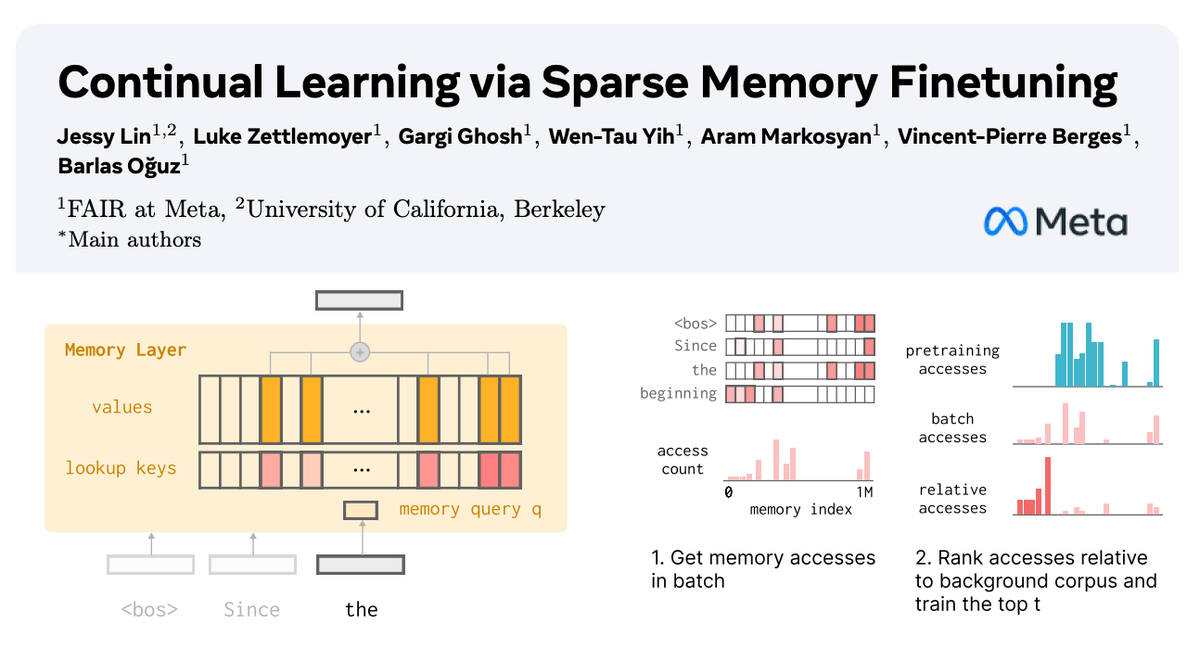

🧠 How can we equip LLMs with memory that allows them to continually learn new things? In our new paper with @AIatMeta, we show how sparsely finetuning memory layers enables targeted updates for continual learning, w/ minimal interference with existing knowledge. While full finetuning and LoRA see drastic drops in held-out task performance (📉-89% FT, -71% LoRA on fact learning tasks), memory layers learn the same amount with far less forgetting (-11%). 🧵:

Introducing SWE-grep and SWE-grep-mini: Cognition’s model family for fast agentic search at >2,800 TPS. Surface the right files to your coding agent 20x faster. Now rolling out gradually to Windsurf users via the Fast Context subagent – or try it in our new playground!