Peter Zalman

@peterzalman

I am crafting great ideas into working products and striving for balance between Design, Product and Engineering.

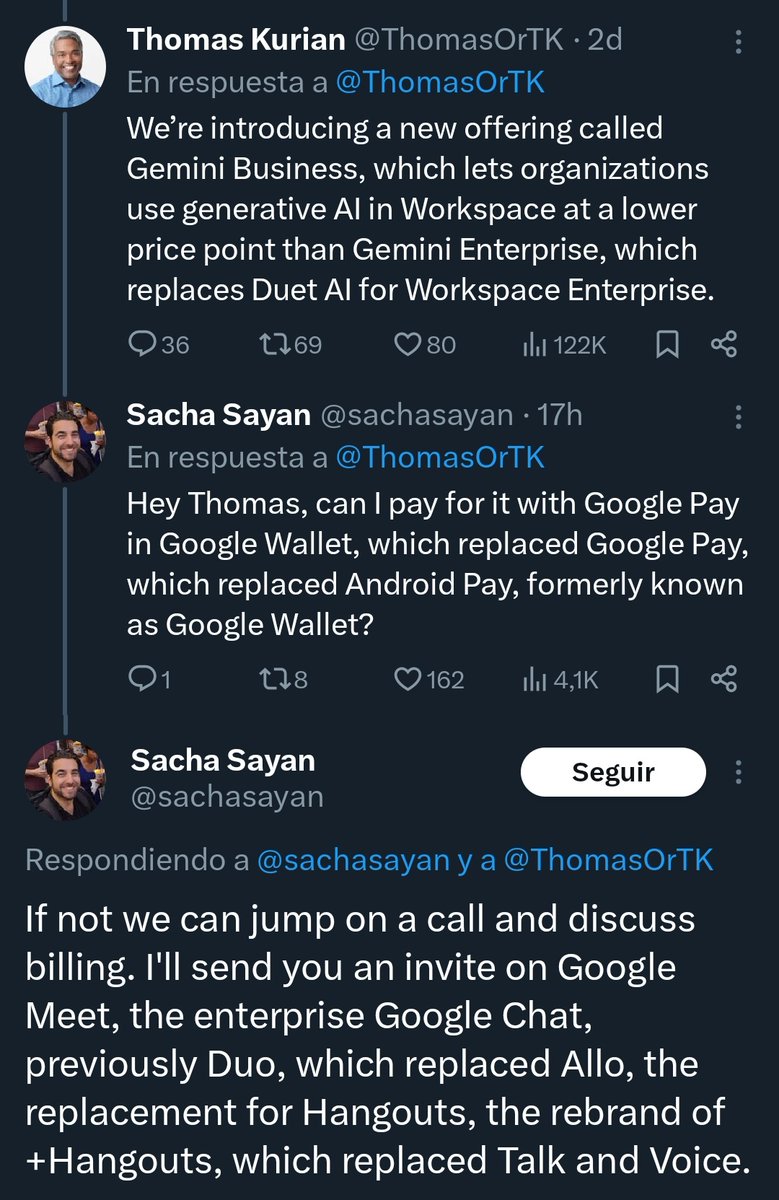

Box CEO Aaron Levie on the AI Adoption Gap

Aaron Levie joins Steven Sinofsky, Martin Casado, and Erik Torenberg to discuss how AI agents will revolutionize work, the growing pains of building software for the agent economy, what Wall Street gets wrong about AI, and more.

- 00:00 Intro
- 00:51 Building software for agents vs. humans
- 02:10 Can non-technical workers actually use AI agents?
- 14:31 CFO/CIO pushback: the real fear of agents doing integration
- 18:39 Treating agents like employees and why it breaks down
- 27:35 Diffusion gap: startups vs. enterprises
- 42:53 What Wall Street gets wrong

@levie @stevesi @martin_casado @eriktorenberg