We’re ready, and we’re very committed to this. 😎

Peter Hizalev

@petrohi

TT-Lang at Tenstorrent. Also retro computing and homebrew electronics.

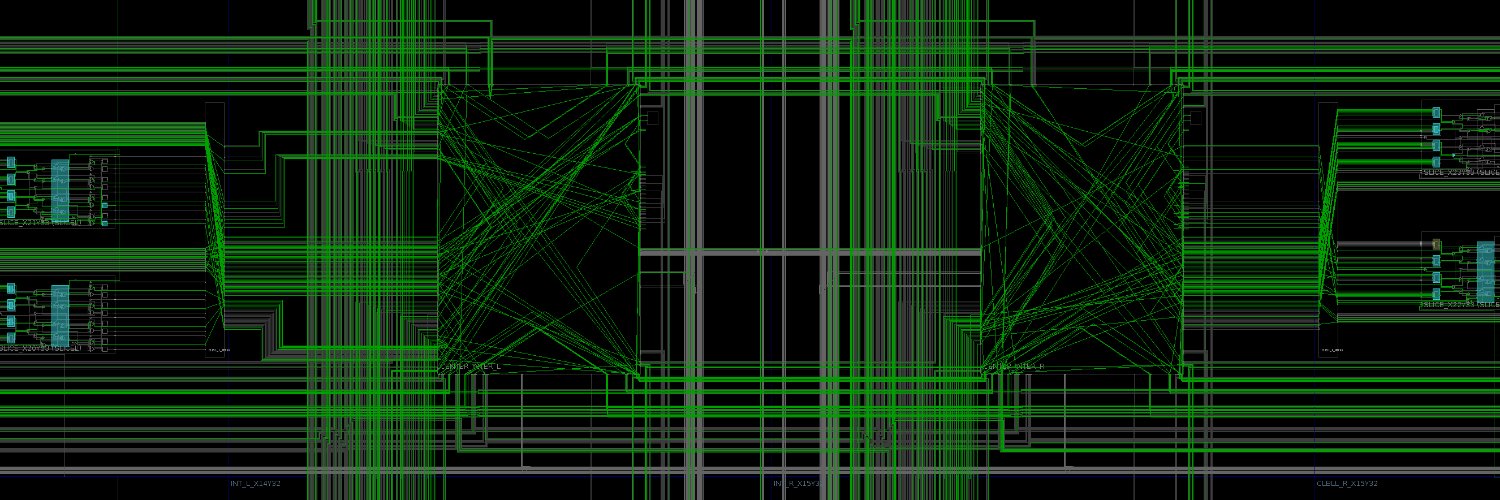

At TT-Deploy, see our latest benchmarks and hear from partners and customers scaling on Tenstorrent. Learn more about Networked AI: our unified compute, memory, and networking in one scale-out architecture. No proprietary interconnects. No rigid workload declarations. Watch it live this Friday, May 1st @ 1:30 PM PDT: tenstorrent.com/deploy

.@tenstorrent will launch its new-gen cluster-scale systems next week, but in the meantime, Jim Keller @jimkxa and Jasmina Vasiljevic @JasminaVas gave me a sneak preview of their video generation demo running on 256 BlackHole chips (spoiler - it's fast): eetimes.com/tenstorrent-pr…

The largest advancement of the CUDA platform since its creation in 2006 is here 👀 Introducing CUDA Tile, a tile-based programming model that provides the ability to write algorithms at a higher level and abstract away the details of specialized hardware, such as tensor cores. Read the technical blog 👉 developer.nvidia.com/blog/focus-on-…