Mehdi (e/λ)@BetterCallMedhi

I genuinely think this might be the most important story I’ve read this year and I need to talk about it

a guy in australia just designed a custom mRNA cancer vaccine for his dying dog using chatGPT and alphafold. he has 0 background in biology and it worked: the tumor shrank by half, the genomics researchers are absolutely stunned & I genuinely think this story is way bigger than people realize

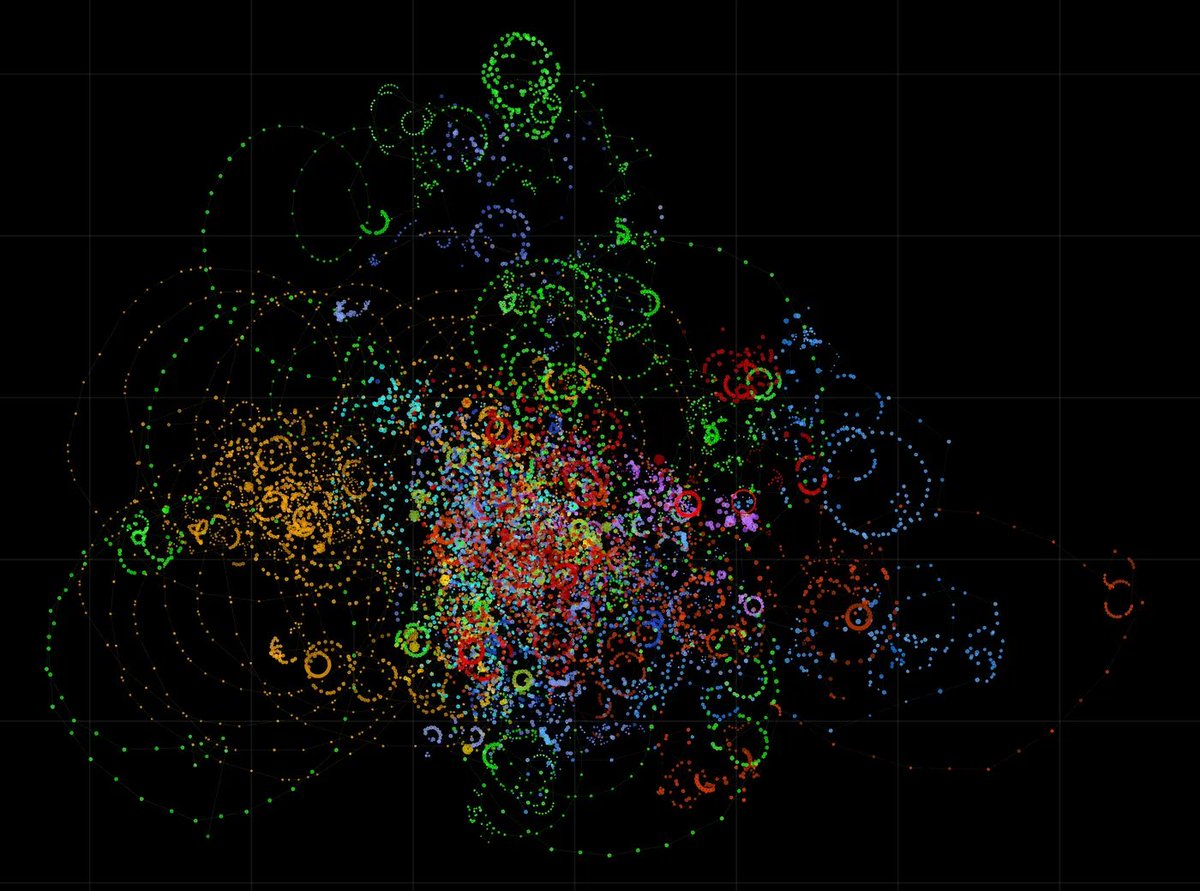

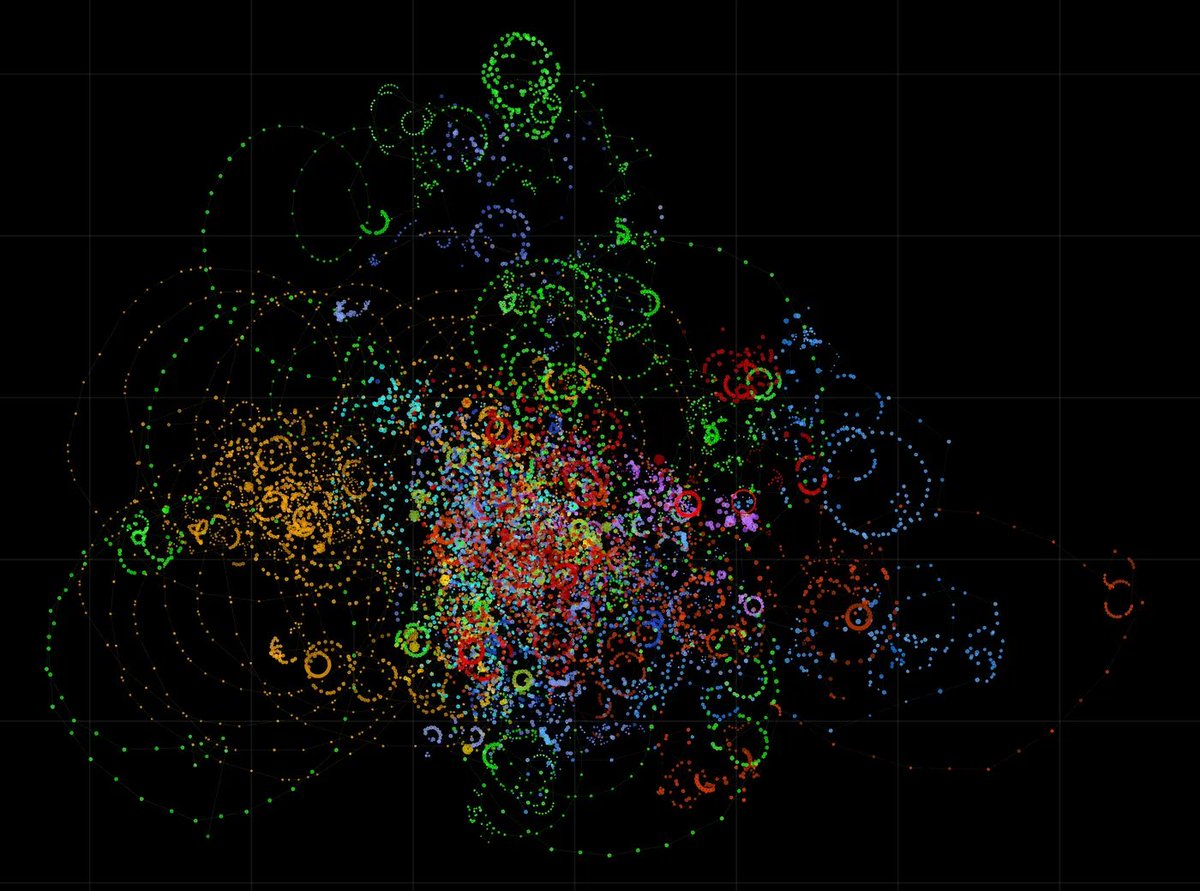

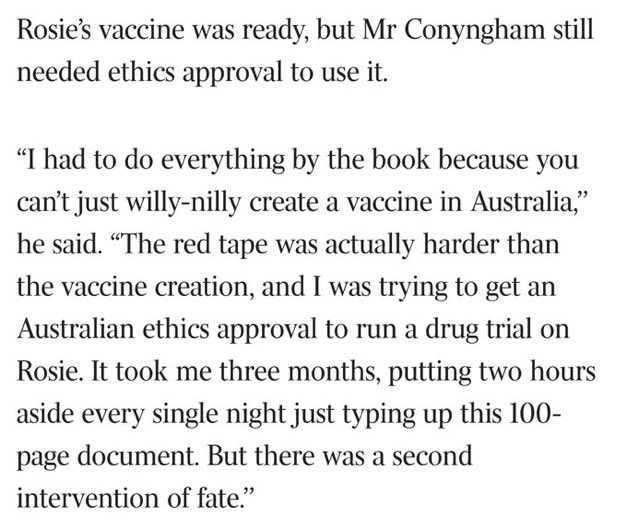

here’s what he actually did: he paid 3000 bucks to get the tumor DNA sequenced, fed the data to chatGPT to identify mutations of interest, then used alphafold to predict the 3D structure of the mutated proteins & find therapeutic targets. then he designed a custom mRNA vaccine targeting the specific neoantigens of his dog’s tumor, all of this from his laptop, & the genomics professor who received the sequencing request initially thought it was a joke
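to make the "find the mutations, then target the neoantigens" step a bit more concrete, here’s a toy python sketch. to be clear, this is not what he actually ran (the story doesn’t say), the function names and sequences are made up for illustration, and it assumes the simplest possible case: a normal and a tumor protein sequence of the same length that differ only by substitutions. it finds the changed residues, then lists every 9-residue peptide that covers a change (9 is a typical length for peptides shown to T cells by MHC class I, and is just an assumption here)

```python
from typing import List, Tuple


def find_point_mutations(normal: str, tumor: str) -> List[Tuple[int, str, str]]:
    """Return (position, normal_residue, tumor_residue) for each substitution.

    Assumption: both sequences are already aligned and have equal length,
    so insertions and deletions are out of scope for this sketch.
    """
    if len(normal) != len(tumor):
        raise ValueError("this sketch only handles substitutions, not indels")
    return [(i, a, b) for i, (a, b) in enumerate(zip(normal, tumor)) if a != b]


def peptide_windows(tumor: str, position: int, length: int = 9) -> List[str]:
    """List every peptide of `length` residues in `tumor` that covers `position`."""
    first_start = max(0, position - length + 1)
    last_start = min(position, len(tumor) - length)
    return [tumor[s:s + length] for s in range(first_start, last_start + 1)]


if __name__ == "__main__":
    # Made-up sequences, not real dog proteins.
    normal_seq = "MKTAYIAKQRQISFVKSHFSRQ"
    tumor_seq = "MKTAYIAKQRLISFVKSHFSRQ"
    for pos, ref, alt in find_point_mutations(normal_seq, tumor_seq):
        print(f"{ref}{pos + 1}{alt}:", peptide_windows(tumor_seq, pos))
```

a real pipeline would then have to score which of those peptides the immune system is actually likely to see, which is the hard part and is not covered here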

a few months later this same professor is looking at the results saying if we can do this for a dog why aren’t we rolling this out to all humans with cancer

and this is where I need you to understand what alphafold actually represents because I’m convinced most people have heard the name without grasping what’s happening underneath:

for decades figuring out the 3D structure of a single protein required months, sometimes years, of X-ray crystallography or cryo-electron microscopy, entire labs dedicated to one molecule. alphafold2 changed this by predicting the structure of virtually every known protein, that’s over 200 million structures, which earned its creators the Nobel prize in chemistry in 2024

but here’s the thing, alphafold 3, released in 2024, went even further: where alphafold 2 predicted the structure of an isolated protein, alphafold 3 predicts interactions between proteins, DNA, RNA, small molecules & ligands in a unified system

basically it models how a drug molecule will bind to a protein target with 50% better accuracy than the best existing tools & it does it in hours instead of years, and that’s exactly what this guy exploited for his dog: he used alphafold to see the 3D shape of the mutated tumor proteins & figure out how an mRNA vaccine could teach the immune system to recognize & destroy them specifically

and look, what fascinates me personally is what this signals for what’s coming next

isomorphic Labs, the alphabet company spun out of deepmind and dedicated to drug discovery, already signed multibillion dollar partnerships with Eli Lilly & Novartis, and the first drugs entirely designed by AI through alphafold3 are expected to enter human clinical trials by end of 2026

we’re talking oncology & immunology candidates that came out of rational design, meaning the AI literally drew the molecule to fit perfectly onto the target instead of screening millions of random compounds like we’ve been doing for 50y

by the way the movement is accelerating way faster than people think: deepmind released the alphafold 3 code for academic use in late 2024 & the scientific community immediately built on top of it, with models like OpenFold3 backed by amazon & Novo Nordisk, startups like recursion developing specialized versions…

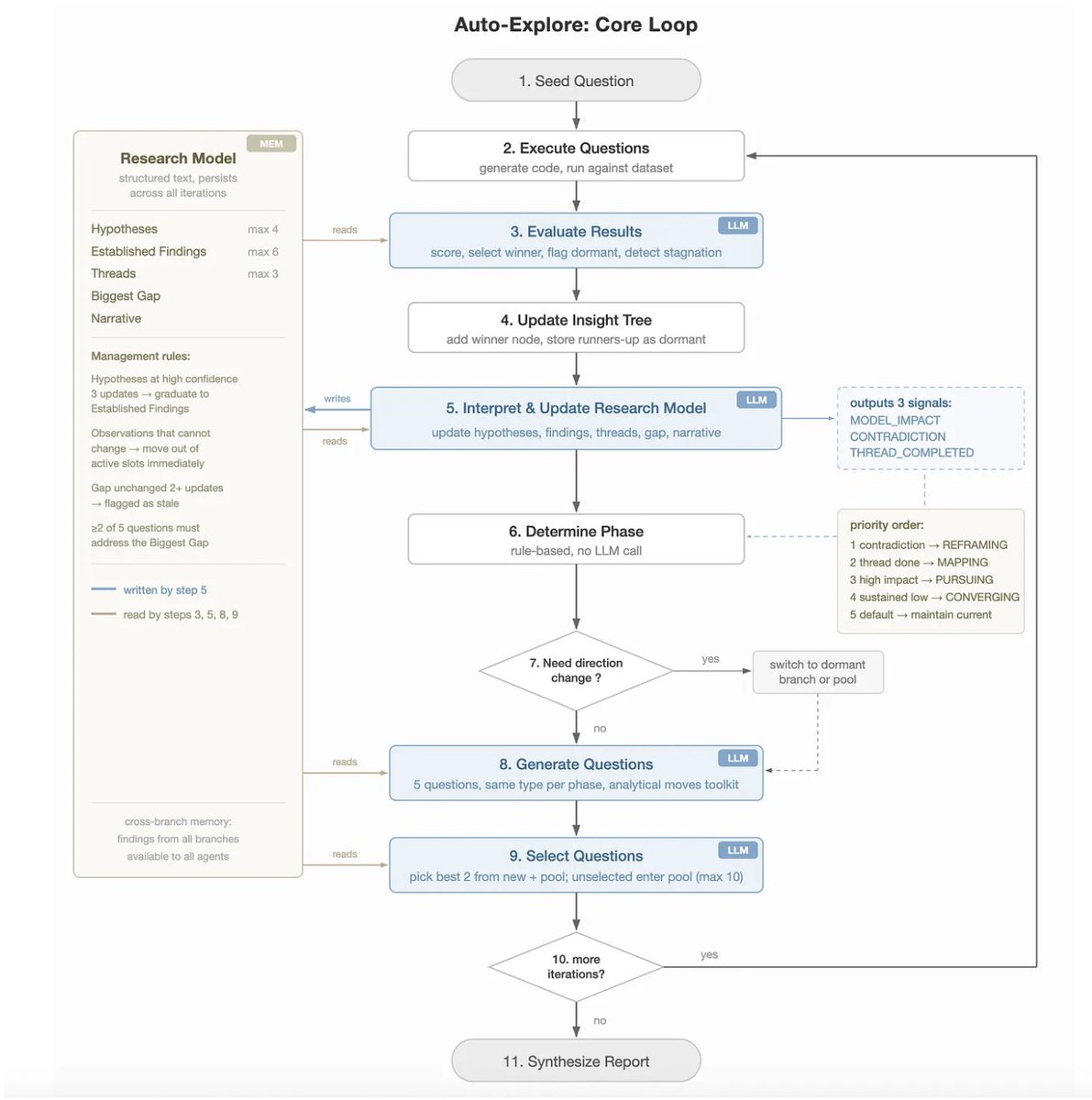

I’m telling you we’re entering the era of the autonomous lab, where AI designs a molecule, robots synthesize it & high-throughput platforms test it with 0 human intervention

I believe the next frontier is temporal modeling: today alphafold predicts the static shape of a molecule, tomorrow we’ll predict how it moves & vibrates over time inside a living cell. & after that come patient digital twins, simulations that predict how your specific genetic variations will affect your response to a given drug, truly personalized medicine at the atomic level

traditionally it takes 15 years & roughly a billion dollars to bring a drug from discovery to market. AI is compressing that cycle at a pace that should terrify every incumbent & what this australian guy just proved is that the entire pipeline (tumor sequencing, target identification, structure prediction, custom vaccine design) can be executed by 1 person with a laptop for a few thousand $$