Pit Schultz

@pitsch

Pit Schultz (DE) is a media artist, theorist and net activist.

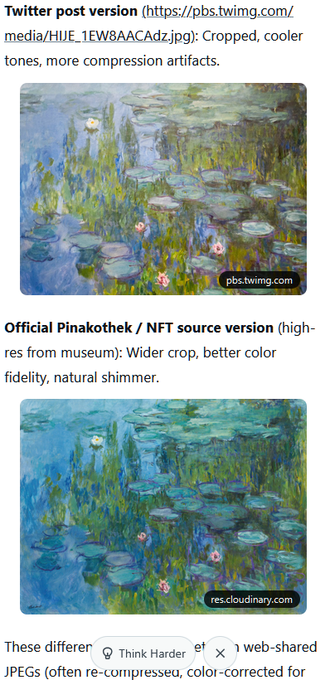

What made the SHL0MS post interesting was not simply that people mistook a real Monet for AI. It was how quickly perception changed the moment the label "AI-generated" appeared. Suddenly the brushwork felt "soulless." The atmosphere became "artificial." People began pointing out algorithmic textures and emotional emptiness in an actual Monet painting.

Susan Sontag wrote in *Against Interpretation* that critics often approach art with the urge to extract meaning before truly encountering the work itself. Every image becomes allegory. Every detail becomes something to decode.

Kafka was endlessly subjected to this. Some read his work as a social allegory of bureaucracy and alienation. Others reduced it to a psychoanalytic fear of the father. Religious readings turned his characters into symbols of divine judgment and salvation.

Interpretation itself is not the problem. It can deepen understanding and reshape the past. But when interpretation overtakes experience, the artwork begins to disappear beneath explanation. That is why Sontag wrote, "In place of a hermeneutics we need an erotics of art."

The SHL0MS post revealed something uncomfortable. Many people were no longer looking at the painting itself. They were looking at the category surrounding it. The label determined the experience before the image even had the chance to speak.

Perhaps the real problem is not AI art, but our growing inability to encounter an artwork without immediately trying to classify, decode, and intellectually dominate it. Was the painting truly worse once people believed it was AI? Or did interpretation arrive before seeing ever could?

@SHL0MS @Jediwolf

Good morning to everyone whose brain hasn’t been infected by Foucault, Derrida, et al.

What happens when you post a real Monet and say it’s AI? The coolest art social experiment I’ve seen in a while. Thank you @SHL0MS

people in cryptoart wouldn't know art if it sat on their face

Continual learning is bottlenecked by realistic evaluations. Introducing FutureSim, which replays real-world events in the temporal order they occurred. We benchmark frontier agents at updating predictions about how our world evolves, in native harnesses like Codex, Claude Code