Rasheed Posts

5.9K posts

@rasheedpostx

Building a new SaaS project.

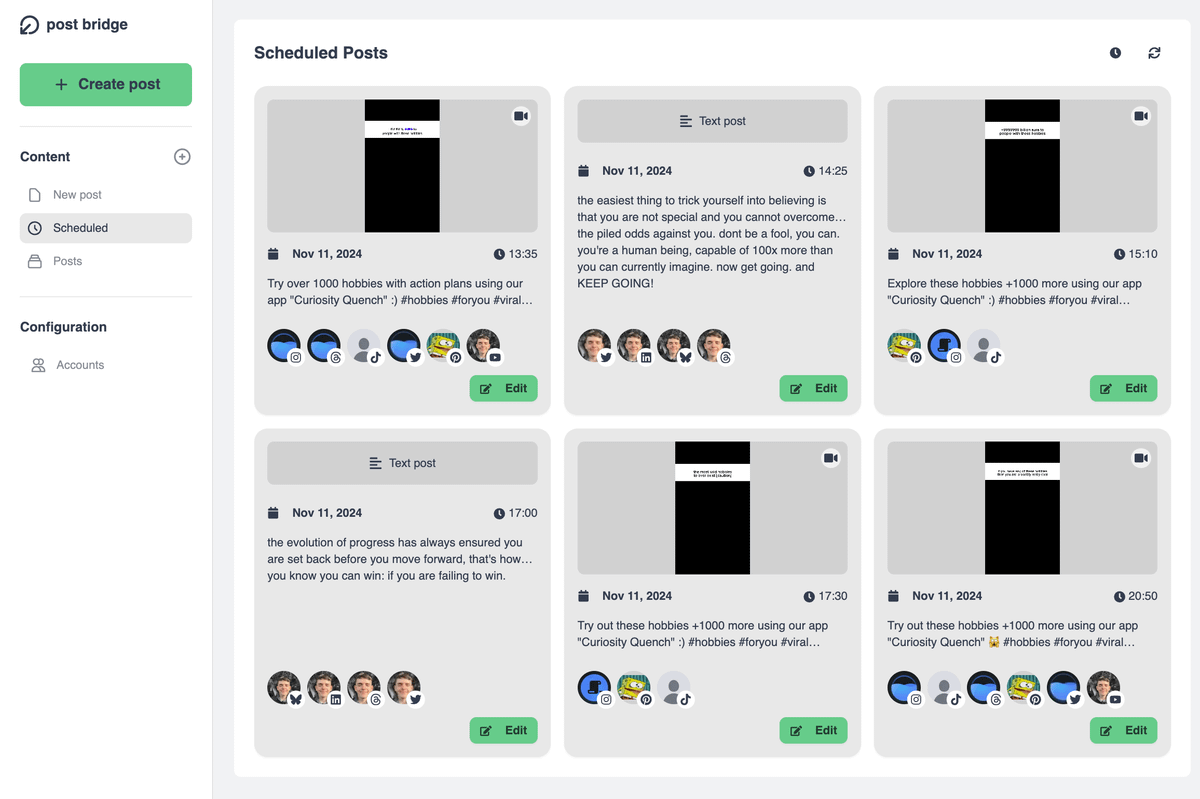

So I think the new X algorithm f*cked my account, and maybe yours too. Elon and Nikita said they were going to stop this, but I don't think they did: the new algorithm is clearly showing your content based on your IP geolocation.

The proof:
Left: 3 months of stats
Right: 7 weeks of stats

I went from 30% US traffic over the last 3 months (in fact, I had 50% months ago) to 40% Spanish traffic, even though I was writing exactly the same type of content this week, in English.

It seems they just want non-US people to be unable to reach that audience. It's as plain as that.

So, so sad. Three years of working hard to build a US audience by providing value to you all, and now a f*cking algorithm is going to destroy all that. Come on, X. What the hell does it matter where you post from if the content is good?

Nice day for a drive eh.

We're big fans of open source. I actually just put up a few PRs to improve prompt cache efficiency for OpenClaw specifically. This is more about engineering constraints. Our systems are highly optimized for one kind of workload, and to serve as many people as possible with the most intelligent models, we are continuing to optimize that. When you use an API key or overages it should still work. The issue was just subs. If you still want to cancel, we're giving full refunds. We know not everyone realized this isn't something we support, and this is an attempt to make it clear and explicit.

Digging into reports, most of the fastest burn came down to a few token-heavy patterns. Some tips:

• Sonnet 4.6 is the better default on Pro. Opus burns roughly twice as fast. Switch at session start.
• Lower the effort level or turn off extended thinking when you don't need deep reasoning. Switch at session start.
• Start fresh instead of resuming large sessions that have been idle ~1h.
• Cap your context window, since long sessions cost more: `CLAUDE_CODE_AUTO_COMPACT_WINDOW=200000`

We're rolling out more efficiency improvements, so make sure you're on the latest version. If a small session is still eating a huge chunk of your limit in a way that seems unreasonable, run /feedback and we'll investigate.
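For the context-window tip, here is a minimal shell sketch of setting the variable for one session. The variable name and value come from the post. That Claude Code reads it from the environment at startup, and that the CLI is launched as `claude`, are assumptions here.

```shell
# Set the auto-compact window named in the post for this shell session.
# Assumption: Claude Code reads this variable from the environment at startup.
export CLAUDE_CODE_AUTO_COMPACT_WINDOW=200000

# Confirm the value is set before starting a fresh session.
echo "CLAUDE_CODE_AUTO_COMPACT_WINDOW=${CLAUDE_CODE_AUTO_COMPACT_WINDOW}"

# Then start a new session (assumed launch command, left commented out):
# claude
```

Exporting in the current shell only affects sessions started from that shell. Adding the `export` line to a shell profile would make it persistent, if that is what you want.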

We're aware people are hitting usage limits in Claude Code way faster than expected. Actively investigating, will share more when we have an update!