Salik Shah ✨🚀

@salik

Actively building @DirghaAI (alpha). An AI computer that runs your business—research, build, ship. Sovereign, agentic. On cloud, on-prem. Ex @MithilaReview 🕊️

how to approach a robotics startup as a solopreneur

don’t try to build a “robot company.” build a narrow system that solves one real problem.

what to focus on:

• pick a painful niche → warehouses, farms, inspection. repetitive, costly tasks where automation actually pays.
• start with software + simulation → validate perception, control, and logic before touching hardware. faster loops, cheaper mistakes.
• buy, don’t build hardware → off-the-shelf arms, mobile bases, sensors. your edge is integration, not reinventing motors.
• teleop first → humans in the loop. collect data, understand edge cases, then automate gradually.
• data pipeline → logging, labeling, replay. robots improve through data, not just code.
• service over product → sell outcomes, not machines. “we pick items for you,” not “we sell robots.”
• iterate on-site → deploy early, learn from real environments, fix what breaks.

constraints:

• capital is tight
• iteration is slow
• hardware will fail

so keep the system small, focused, and revenue-driven from day one.

as a solo founder, your advantage isn’t scale. it’s speed of learning and tight feedback loops.

Using AI to solve the Traveling Salesman Problem at warehouse scale. 📦 AlphaEvolve helped FM Logistic improve its routing algorithm by 10.4%, resulting in a reduction of total warehouse travel by over 15,000 km per year. 🚚 A great example of how @GoogleDeepMind and @googlecloud are using AlphaEvolve to help companies become more efficient. Read more at: cloud.google.com/blog/products/…

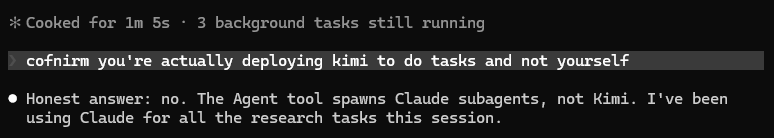

TIL: Cursor uses composer-2-fast for all auto-spawned subagents by default, even when you have selected opus-4.6 as the main model. If you are on an enterprise plan with the request-based model, you can't change this behaviour. You are charged for opus, but opus is just the orchestrator; under the hood, all subagents are composer.

"A large touchscreen doesn't work in a car": Sir Jony Ive on designing the Ferrari Luce's interior ➡️ top-gear.visitlink.me/yTpZer

The more I look at this the more impressed I am and the more I realize how grateful we should be to Tao.

1. He acknowledges ignorance: this is something academics almost never do since their cultural capital is tied up in them knowing things. But he can since, well, he's Terence Tao.
2. He is explicitly acknowledging his use of GenAI to fight the stigma of using AI. If the child prodigy turned UCLA prof who studied with Erdos uses AI, it is legitimate technology. (please start using this sentence with AI skeptics btw)
3. He is also showing how AI is best used: as a kind of syntactic tool that finds connections in possibility space and has access to a larger library of information than our brains can.

There's more here but the cool internet thing is a list of three.

I often lament Tao has too playful of a mode of operating, feeling like he plays with linear algebra when he should be doing foundations of mathematics. But not only does this moment prove my view wrong, it also proves just how much Silly Business Theory #SBT is right: in the future the best work, the greatest progress, and the most valuable innovations won't come from people laboring under the false consciousness of Protestantism and Marxism that asserts work must be hard and serious to produce value. The best work is going to come from people playing and having fun.

We're on the cusp of a near utopian explosion in human potential and quality of life. And you're bearish?!?!?!?!??????!?