salomechen

33.3K posts

Elon Musk has used SpaceX as a kind of piggy bank over the last two decades, turning to the company as a financial tool to get loans and bolster his struggling companies, according to an examination by The New York Times. nyti.ms/4w8dInZ

美国数学家Richard Hamming是图灵奖得主,计算机先驱,纠错码之父。 他说自己很早在Los Alamos见过Feynman费曼、Oppenheimer奥本海默等。他承认自己当时很嫉妒,凭什么大家都是物理人,你们这些人是大牛人? 他后来在贝尔实验室Bell Labs继续观察Shannon香农、这些人,为什么有些人做到了,而其他人只是差点做到了? “运气”只能解释一半。具体做成哪一个题,当然有运气。 Hamming自己也承认,他和Shannon香农同在贝尔实验室Bell Labs,同一时期一个做coding theory,一个做information theory,确实有运气成分。 但Einstein爱因斯坦、Shannon香农这类人可以反复做出好东西。一次可以说是撞上了,反复撞上,就要看准备工作、胆量和选择了。 机会会飘过很多人身边,但只有少数人已经在脑子里预留了接口。 他讲,重要问题不是结果影响听起来多大。比如时间旅行、传送、反重力,结果影响当然巨大,但手里没有合理的入口,也只能供人幻想。 一个真正该上的科题,要同时有分量和入口,如果做成,它会改变一些东西;而你现在又能找到一条可以攻进去的路。 很多聪明人输在这里。他们每天忙忙碌碌,设计问题很精致,方法很专业,可心里其实知道,这些东西就算做完,也很难通向更大的东西。 Hamming每周五中午以后给自己留“Great Thoughts Time”,只聊大问题,比如计算机会怎样改变科学? 这听起来像偷懒,其实是给自己留10%的雷达时间。 你如果一周五天都在处理眼前小事,很容易把效率误认为方向。你会变成一个很勤奋、很可靠、很会交付的人,然后十年后发现,自己一直在小问题上越做越熟。 Hamming还有一个观察: 关着门工作的人,完全隔绝噪音,短期内很舒服,貌似产出更高;长期看,你会错过那些不成体系、没法写进报告、但能告诉你“问题变了”的信号。 而开着门工作的人,虽然经常被打断,但更可能知道世界的新动向。一流工作需要深度,也需要暴露在真实问题流里。 他讲,成名后的危险,也很像今天的创业者、研究者和内容创作者。 一旦做出一个大东西,人会不愿意再种小种子,只想一上来就抱大树。Hamming说Shannon香农在信息论之后,可能就被“下一次必须同样伟大”这件事困住了。 早期的伟大而会把人冻住。因为你不愿意再做那些小、丑、未成形、别人看不上的起点。 但大东西通常就是从这种小起点长出来的。 还有一点很多人不爱听。 做出来还不够,你要会把它讲出去。 Hamming说科学家讨厌“sell”这个词,觉得好东西应该自然被世界看见。可现实是,所有人都在忙自己的事。你写得不清楚,讲得不清楚,会议上不敢开口,别人就会翻过去。 所以表达不是包装,是研究的一部分。 一个想做一流工作的人,至少要会三种表达: 写清楚,在正式场合里讲清楚,在混乱的场合里也能讲清楚。 很多“事后诸葛亮”三周后写报告证明自己早就看对了,但时过境迁了。 才华放晚了也会变成旁白。 把“伟大”从天赋、环境、运气这些大词拽回到具体的日常动作: 你有没有固定时间想大问题。 你手里有没有10到20个真正重要、且可能进攻的问题。 你遇到一个机会时,能不能立刻看出它碰到了你哪一个老问题。 你做一个项目时,有没有顺手把它变成一类问题的方法,而不止交一个答案。 你有没有把自己的缺点拿来当借口。 你会不会和大的体系合作,借力秘书、同事、老板、听众、组织流程打战役,而不是一生耗在小型战斗里。 讲真,你可以说自己缺运气,缺资源,缺年轻,缺老板支持。那你有没有准备好?有没有选对问题?有没有留出想大问题的时间?有没有勇气押上去,把成果讲到别人愿意停下来听? 说到最后,很多所谓怀才不遇,可能只是长期没有管理自己。

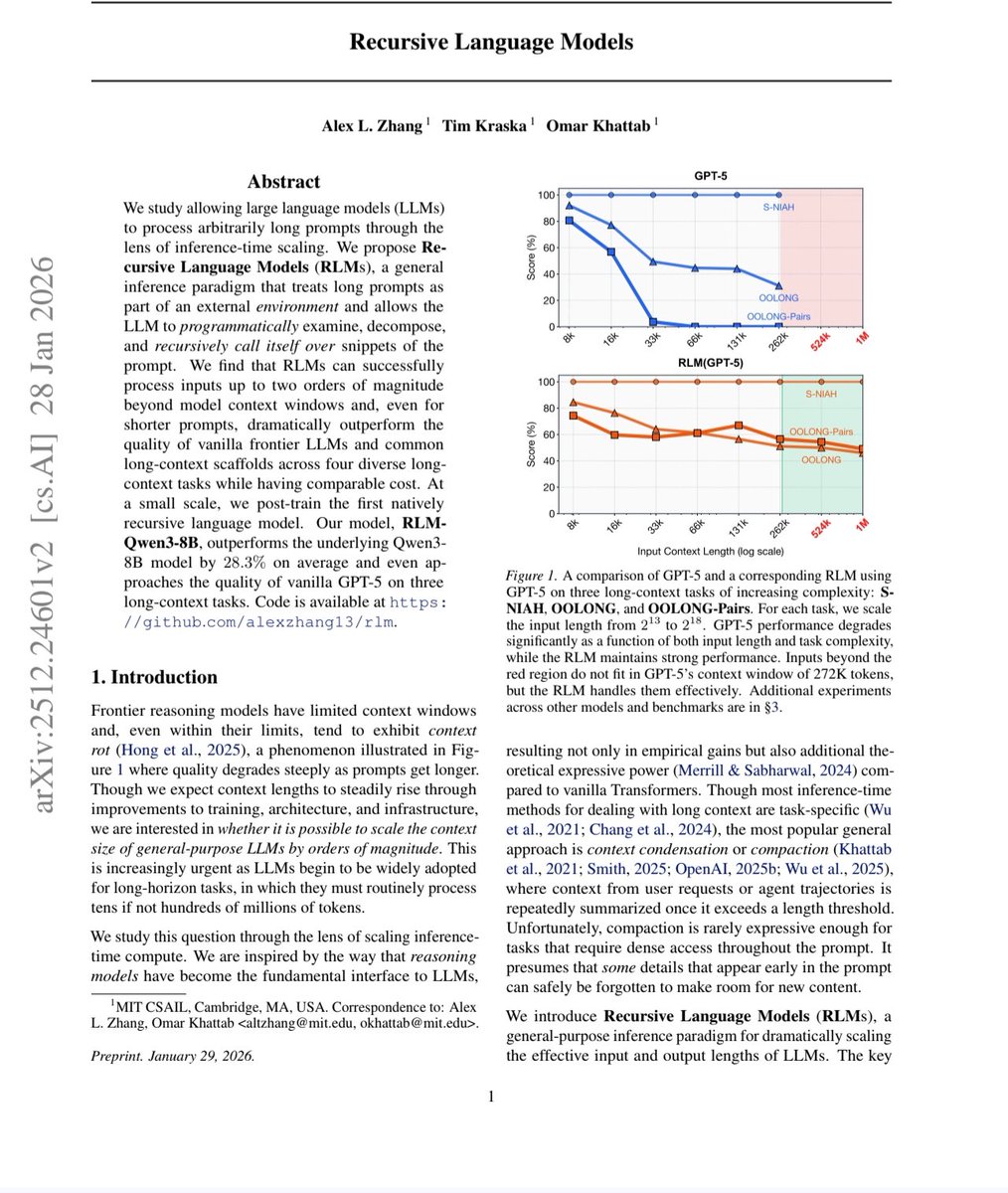

MIT just made every AI company's billion dollar bet look embarrassing. They solved AI memory. Not by building a bigger brain. By teaching it how to read. The paper dropped on December 31, 2025. Three MIT CSAIL researchers. One idea so obvious it hurts. And a result that makes five years of context window arms racing look like the wrong war entirely. Here is the problem nobody solved. Every AI model on the planet has a hard ceiling. A context window. The maximum amount of text it can hold in working memory at once. Cross that line and something ugly happens — something researchers have a clinical name for. Context rot. The more you pack into an AI's context, the worse it performs on everything already inside it. Facts blur. Information buried in the middle vanishes. The model does not become more capable as you feed it more. It becomes more confused. You give it your entire codebase and it forgets what it read three files ago. You hand it a 500-page legal document and it loses the clause from page 12 by the time it reaches page 400. So the industry built a workaround. RAG. Retrieval Augmented Generation. Chop the document into chunks. Store them in a database. Retrieve the relevant ones when needed. It was always a compromise dressed up as a solution. The retriever guesses which chunks matter before the AI has read anything. If it guesses wrong — and it does, constantly — the AI never sees the information it needed. The act of chunking destroys every relationship between distant paragraphs. The full picture gets shredded into fragments that the AI then tries to reassemble blindfolded. Two bad options. One broken industry. Three MIT researchers and a deadline of December 31st. Here is what they built. Stop putting the document in the AI's memory at all. That is the entire idea. That is the breakthrough. Store the document as a Python variable outside the AI's context window entirely. Tell the AI the variable exists and how big it is. Then get out of the way. When you ask a question, the AI does not try to remember anything. It behaves like a human expert dropped into a library with a computer. It writes code. It searches the document with regular expressions. It slices to the exact section it needs. It scans the structure. It navigates. It finds precisely what is relevant and pulls only that into its active window. Then it does something that makes this recursive. When the AI finds relevant material, it spawns smaller sub-AI instances to read and analyze those sections in parallel. Each one focused. Each one fast. Each one reporting back. The root AI synthesizes everything and produces an answer. No summarization. No deletion. No information loss. No decay. Every byte of the original document remains intact, accessible, and queryable for as long as you need it. Now here are the numbers. Standard frontier models on the hardest long-context reasoning benchmarks: scores near zero. Complete collapse. GPT-5 on a benchmark requiring it to track complex code history beyond 75,000 tokens — could not solve even 10% of problems. RLMs on the same benchmarks: solved them. Dramatically. Double-digit percentage gains over every alternative approach. Successfully handling inputs up to 10 million tokens — 100 times beyond a model's native context window. Cost per query: comparable to or cheaper than standard massive context calls. Read that again. One hundred times the context. Better answers. Same price. The timeline of the arms race makes this sting harder. GPT-3 in 2020: 4,000 tokens. GPT-4: 32,000. Claude 3: 200,000. Gemini: 1 million. Gemini 2: 2 million. Every generation, every company, billions of dollars spent, all betting on the same assumption. More context equals better performance. MIT just proved that assumption was wrong the entire time. Not slightly wrong. Fundamentally wrong. The entire premise of the last five years of context window research — that the solution to AI memory was a bigger window — was the wrong answer to the wrong question. The right question was never how much can you force an AI to hold in its head. It was whether you could teach an AI to know where to look. A human expert handed a 10,000-page archive does not read all 10,000 pages before answering your question. They navigate. They search. They find the relevant section, read it deeply, and synthesize the answer. RLMs are the first AI architecture that works the same way. The code is open source. On GitHub right now. Free. No license fees. No API costs. Drop it in as a replacement for your existing LLM API calls and your application does not even notice the difference — except that it suddenly works on inputs it used to fail on entirely. Prime Intellect — one of the leading AI research labs in the space — has already called RLMs a major research focus and described what comes next: teaching models to manage their own context through reinforcement learning, enabling agents to solve tasks spanning not hours, but weeks and months. The context window wars are over. MIT won them by walking away from the battlefield. Source: Zhang, Kraska, Khattab · MIT CSAIL · arXiv:2512.24601 Paper: arxiv.org/abs/2512.24601 GitHub: github.com/alexzhang13/rlm

How Anthropic’s product team moves faster than anyone else I sat down with @_catwu, Head of Product for Claude Code at @AnthropicAI, to get a peek into their unprecedented shipping pace, how AI is changing the PM role, and how to be the right amount of AGI-pilled. We discuss: 🔸 How Anthropic’s shipping cadence went from months to weeks to days 🔸 The emerging skills PMs need to develop right now 🔸 Why you should build products that don't work yet—then wait for the model to catch up 🔸 Why a 95% automation isn't really an automation 🔸 Cat’s most underrated AI skill (introspection) 🔸 What Cat actually looks for when hiring PMs now (hint: it's not traditional PM skills) Listen now 👇 youtu.be/PplmzlgE0kg

说个暴论,AI界的iPhone时刻可能就要到来了。 OpenRouter上最近杀出来一个匿名模型,把所有Agent开发者都打懵了。 它叫Elephant Alpha,没有发布会,没有营销通稿,连开发者是谁都不知道😂 纯靠用户口口相传,一周就冲到了平台日活前十,token使用量暴增377%。 我自己测了三天,结论是这他么才是2026年AI该有的样啊! 速度快到离谱,不是那种一个字一个字蹦的慢输出,是你刚敲完回车,一整段带注释的代码直接完整输出,体感和Grok 4 Fast差不多,但代码质量高一个档次。 最夸张的是智效比,同一份任务,完全相同的输出质量,它的token消耗是Claude Opus的一半,GPT-5.4的三分之一,账单是真的肉眼可见地往下掉。 它不是啥全能思考型模型,更像一个纯粹到极致的执行机器,跨十几个文件找Bug,256K上下文稳如狗,一点不丢引用。 几十页合同直接转成结构化的条款表,会议记录转待办,群聊转摘要,网页转初稿,所有你不想干的脏活累活,它干得又快又好。 唯一要注意的是它不会帮你脑补,指令越清晰,约束越明确,输出质量越爆炸,模糊的需求很容易得到平庸的结果,也不适合复杂的多步长链规划,知识时效性需要自己注入上下文。 最反直觉的地方来了,以前我们总觉得,什么活儿都得用最好的旗舰模型 ,但实际上你每天80%的工作,根本不需要Claude或者GPT的深度思考能力,你只是需要一个东西,能准确、快速、便宜地把事做完。 现在OpenClaw和Hermes社区已经形成了标准玩法,Claude管整体规划和架构设计,只调用一次。 Elephant管分步执行、局部修复、批量生成,跑一百次,整体效率翻三倍,成本直接砍到原来的十分之一甚至更低。 这才是Agent经济真正的突破口啊,以前Agent跑不起来,不是因为不够聪明,是调用一次太贵,延迟太高😟 当执行层的成本趋近于零的时候,所有自动化才真正变得可行。 更有意思的是匿名模型这个趋势,以后最好用的模型,可能都不是大厂发布会吹的那些。 OpenRouter的盲测机制,让模型纯靠真实使用数据说话,没有品牌溢价,没有营销滤镜,谁好用谁就会被用户用脚投票选出来。 社区普遍猜测这是某国产大厂的马甲在全球盲测,也侧面说明中国AI在推理优化赛道已经跑在了前面。 现在它还在盲测期,完全免费,256K上下文,32K输出,函数调用,结构化输出,全开放。 OpenRouter和Kilo Code上都能用,建议兄弟们把所有日常重复任务都切过去,把省下来的预算,留给真正需要深度推理的场景。 Elephant Alpha 是不是下一个ChatGPT 呢,我觉得不是,它更像是AI从偶尔炫技变成每天省事省钱的基础设施。 所以别再用大炮打蚊子了,模型分层才是2026年AI玩家的核心竞争力啊, 咱们工作流里有哪些活儿,其实根本不需要用旗舰模型🌚 #AI #大模型 #OpenRouter #ElephantAlpha #开发者 #Agent

Anthropic just pulled Claude Code from the Pro plan. Pro users wanting it need Max now. $100/month minimum. 5x jump. I'm on Max 20x so I'm fine. Flagging for anyone on Pro who's about to find out. No announcement. Just a pricing page edit.

Holy shit, trump's Labor Secretary, Lori Chavez-Deremer has just resigned in shame after allegations of misconduct, including asking staffers to "bring her wine." This whole regime is drunk on power, and UNFIT.