Samuel Castillo

A 27B model is #2 on pinch-bench. You'd need $150,000 in GPU hours to train this from scratch (base + post-training): basically 1-2 weeks on 256 H100s. That is not unreasonable: you'd need 540B tokens for pre-training and a bit more for post-training. None of this is crazy.
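The arithmetic behind that estimate can be sanity-checked with a rough sketch. Only the 27B parameters, 540B tokens, and 256 H100s come from the post. Everything else is an assumption made for illustration: a dense model, the common 6 × parameters × tokens FLOP rule of thumb, an H100 dense BF16 peak of about 989 TFLOP/s, 40% model FLOPs utilization, and a rental price of $2 per GPU-hour. The function name `estimate_training` is hypothetical.

```python
# Back-of-envelope pre-training cost. Values marked "assumption" are NOT from the post.
PEAK_FLOPS = 989e12        # assumption: H100 SXM dense BF16 peak, in FLOP/s
MFU = 0.40                 # assumption: fraction of peak actually sustained
PRICE_PER_GPU_HOUR = 2.00  # assumption: USD rental price per H100-hour


def estimate_training(params, tokens, num_gpus,
                      peak_flops=PEAK_FLOPS, mfu=MFU,
                      price=PRICE_PER_GPU_HOUR):
    """Return (gpu_hours, wall_clock_days, cost_usd) for one pre-training run."""
    # Assumption: dense transformer, ~6 FLOPs per parameter per token
    # (forward + backward pass).
    total_flops = 6 * params * tokens
    gpu_seconds = total_flops / (peak_flops * mfu)
    gpu_hours = gpu_seconds / 3600
    days = gpu_hours / num_gpus / 24
    cost = gpu_hours * price
    return gpu_hours, days, cost


if __name__ == "__main__":
    # 27B parameters, 540B tokens, 256 GPUs: the figures quoted in the post.
    gpu_hours, days, cost = estimate_training(27e9, 540e9, 256)
    print(f"{gpu_hours:,.0f} GPU-hours, {days:.1f} days, ${cost:,.0f}")
```

Under these assumptions, pre-training alone comes out to roughly ten days on 256 GPUs and a bit over $120,000, which leaves room for post-training inside the quoted $150,000 and 1-2 weeks. The result scales linearly with the assumed price and inversely with the assumed utilization, so it is an order-of-magnitude check, not a quote.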

🚀 Introducing Nemotron-Cascade 2 🚀

Just 3 months after Nemotron-Cascade 1, we're releasing Nemotron-Cascade 2: an open 30B MoE with 3B active parameters, delivering best-in-class reasoning and strong agentic capabilities.

🥇 Gold Medal-level performance on IMO 2025, IOI 2025, and ICPC World Finals 2025:

• Capabilities once thought achievable only by frontier proprietary models (e.g. Gemini Deep Think) or frontier-scale open models (i.e. DeepSeek-V3.2-Speciale-671B-A37B).

• Remarkably high intelligence density with 20× fewer parameters.

🏆 Best-in-class across math, code reasoning, alignment, and instruction following:

• Outperforms the latest Qwen3.5-35B-A3B (2026-02-24) and the even larger Qwen3.5-122B-A10B (2026-03-11).

🧠 Powered by Cascade RL + multi-domain on-policy distillation:

• Significantly expands Cascade RL across a much broader range of reasoning and agentic domains than Nemotron-Cascade 1, while distilling from the strongest intermediate teacher models throughout training to recover regressions and sustain gains.

🤗 Model + SFT + RL data: 👉 huggingface.co/collections/nv…

📄 Technical report: 👉 research.nvidia.com/labs/nemotron/…

Customize Speech-to-text for Healthcare (in real time)

Transcribing medical conversations requires systems that continually adapt to newly developed and approved medications, tests, and procedures. Furthermore, there are more than 135 medical specialties, each with its own vocabulary to learn. General-purpose systems are simply not useful in these settings.

A popular method for continual adaptation is to fine-tune general-purpose speech-to-text models on evolving vocabularies. However, this requires frequent production deployments with significant updates, potentially leading to excessive time-to-market delays and engineering overhead.

The newly improved Argmax Custom Vocabulary feature enables developers to customize speech-to-text in real time in a self-serve fashion:

- Updating the system vocabulary is a configuration change, not a model or system update.
- Each medical specialty can easily configure its own vocabulary, so behavior can be customized at scale and at a fine granularity.
- Accuracy surpasses medical-domain fine-tuned models in many cases, thanks to precision-targeted vocabularies. (Numbers in replies)

As a concrete example, here is how Argmax performs on a file that vocalizes all medications approved by the FDA in 2025.

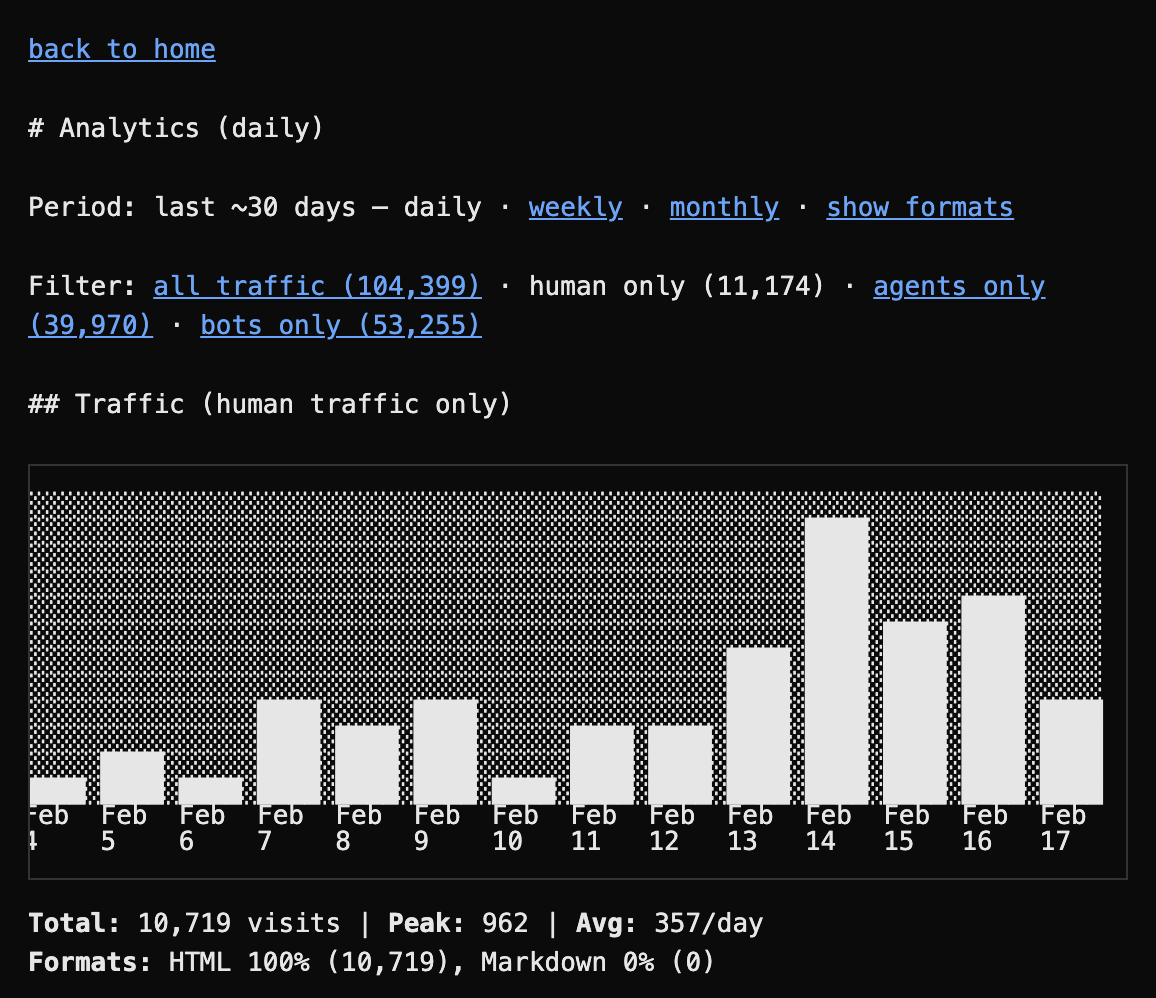

I think QMD is one of my finest tools. I use it every day because it's the foundation of all the other tools I build for myself. It is a local search engine that lives and executes entirely on your computer, both for you and for agents. github.com/tobi/qmd

PSA - I've heard from two different people at @OpenAI that these regressions are not intentional, and they have folks in engineering investigating the problem. I shared two repro threads with them to confirm the problem.