Stijn 'Vioo' Diependaele

@sdiepend

Freelance DevOps Engineer. Building: https://t.co/SPTuvS2qJD and https://t.co/nrEBDhpBVS. Broad interest in the things of life. https://t.co/RiHVi5bZfw

One common issue with personalization in all LLMs is how distracting memory seems to be for the models. A single question from 2 months ago about some topic can keep coming up as some kind of a deep interest of mine with undue mentions in perpetuity. Some kind of trying too hard.

This man is a billionaire and was removing labels with a hair dryer personally, you're simply not grinding hard enough

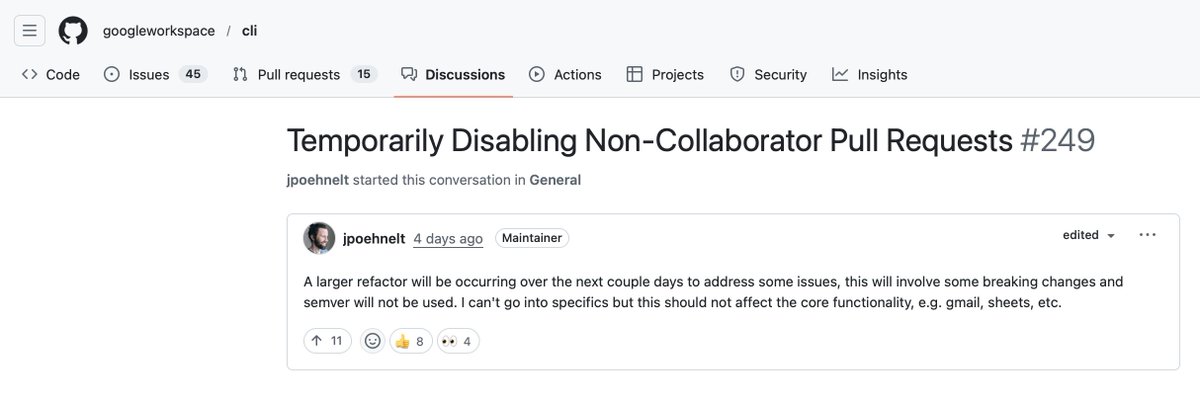

Google just dropped an official CLI for Gmail, Drive, Calendar, Sheets, and more, complete with skills and an MCP server 👌

Released today: /loop. /loop is a powerful new way to schedule recurring tasks, for up to 3 days at a time. E.g. “/loop babysit all my PRs. Auto-fix build issues and when comments come in, use a worktree agent to fix them”. E.g. “/loop every morning use the Slack MCP to give me a summary of top posts I was tagged in”. Let us know what you think!

This is what happens when you don’t have enough “no people” on your team. There’s 1 million yes men, but you need someone that’s gonna say no. Nobody felt confident enough to say “hey we shouldn’t release this. It looks weird.” Common sense consultancy group

I think we are witnessing the biggest explosion in software creation in history. New website creation is up 40% year on year. New iOS apps are up nearly 50%. GitHub code pushes in the US jumped 35% and in the UK around 30%. All of these metrics were flat for years before late 2024. The entire graph looks like a hockey stick. You no longer need a six month runway and a dev team to ship something real. We see this in our metrics as well! People who never wrote a line of code are building and launching apps. The barrier to building software just disappeared. What matters now is knowing what to build and the taste to build it right.

Anthropic's own researchers just proved that using AI to learn new skills makes you 17% worse at them. and the part nobody's reading is more important than the headline.
the paper is called "How AI Impacts Skill Formation." randomized experiment. 52 professional developers. real coding tasks with a Python library none of them had used before. half got an AI assistant. half didn't.
the AI group scored 17% lower on the skills evaluation. Cohen's d of 0.738, p=0.010. that's a real effect. and here's what makes it sting: the AI group wasn't even faster. no significant speed improvement. they learned less AND didn't save time.
but the viral framing of "AI bad for learning" misses what actually matters in this paper. the researchers watched screen recordings of every single participant. they identified 6 distinct patterns of how people use AI when learning something new. 3 of those patterns preserved learning. 3 destroyed it. the gap between them is enormous.
participants who only asked AI conceptual questions scored 86% on the evaluation. participants who delegated everything to AI scored 24%. same tool. same task. same time limit. the difference was cognitive engagement.
the highest-scoring AI users actually outperformed some of the no-AI group. they asked "why does this work" instead of "write this for me." they generated code then asked follow-up questions to understand it. they used AI as a thinking partner, not a replacement for thinking.
the lowest-scoring group did what most people do under deadline pressure: pasted the prompt, copied the output, moved on. they finished fastest. they learned almost nothing.
and here's the finding that should concern every engineering manager alive: the biggest score gap was on debugging questions. the skill you need most when supervising AI-generated code is the exact skill that atrophies fastest when you let AI do the work.
the control group made more errors during the task. they hit bugs. they struggled with async concepts. they got frustrated. and that struggle is precisely what built their understanding. errors aren't obstacles to learning. they ARE learning. removing them with AI removes the mechanism that creates competence.
participants in the AI group literally said afterward they wished they'd "paid more attention" and felt "lazy." one wrote "there are still a lot of gaps in my understanding." they could feel the hollowness of having completed something without understanding it. that's not a productivity win. that's debt.
this paper isn't an argument against using AI. it's an argument against using AI unconsciously. Anthropic publishing research showing their own product can inhibit skill formation is the kind of intellectual honesty the industry needs more of.
the practical takeaway is simple: if you're learning something new, use AI to ask questions, not to skip the work. the struggle is the product.

Just went on Bluesky and like 40-50% of what I saw was just people posting screenshots of tweets from here and dunking on them there. You almost never see the reverse. Twitter is still the center.

Today, we share our AI doctor for the first time. The future is an AI that knows more about your body than any human ever could. 247 commits. 140,000 lines of code. Months of engineering. Here it is:

pro tip: give claude aws credentials and just let him do whatever he needs. read logs, re-deploy something, update something. he'll get shit done