sigmoid (@sigmoidwtf)

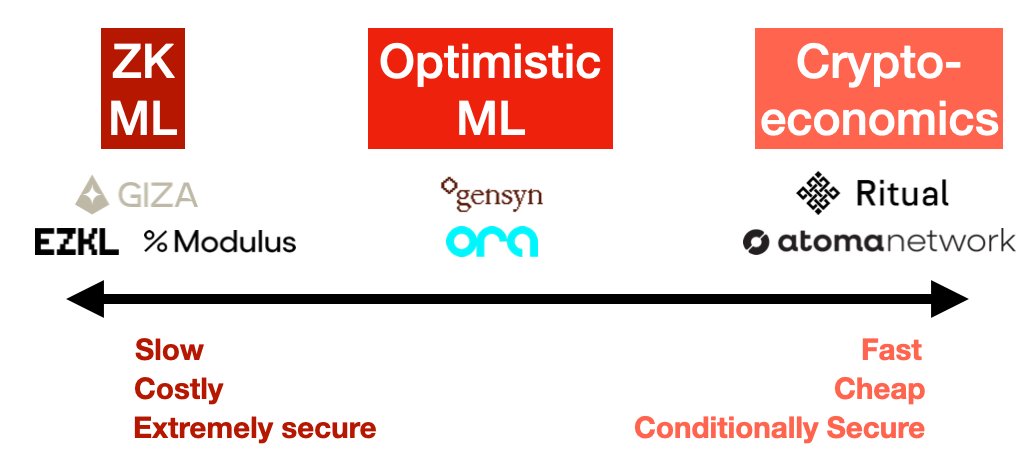

Decentralized AI is the start of a decade-long societal shift. Democratizing access to training data and computational power, rewarding model developers and users for their contributions, and ensuring safe, censorship-resistant access to these powerful models all clearly have their merits.

Several high-quality teams, such as @ritualnet, @opentensor, @marlinprotocol, @autonolas, @sentient_agi, and @morpheusAIs, are working on making open-source AI a reality.

However, there are a few key challenges:

1) AI networks are usually more complex than standard PoS blockchains, with more node types and roles.

2) Every AI network requires participation from a decentralized community. These participants or node runners need to have devops knowledge, access to compute and potentially even ML knowledge to be competitive on such networks. This increases the barrier to entry for these AI protocols.

3) Token holders may be required to bridge from one chain to another, convert one token to another, and then lock up their tokens to secure these networks, giving up access to liquidity in the process.

Sigmoid is here to change that.

Sigmoid acts as an integrated coordination layer between AI networks, node operators, and potential node owners who want to run infrastructure for AI networks. It abstracts away the complexity of running these networks: node operators offer their expertise in running nodes on AI networks, and users who want such nodes pay for them. We call these users node owners.

For example, teams like @architex_ai, @ionet, @akashnet, @AethirCloud and @nirmaanai can use Sigmoid to provide distribution for their node operation services. Node owners don't need to worry about payments or quality of service, because Sigmoid acts as the coordination layer between them and their service providers.

Now, the natural question is: why would you trust Sigmoid? Sigmoid itself is a decentralized blockchain built using the Cosmos SDK, with security bootstrapped using ETH on @eigencloud.

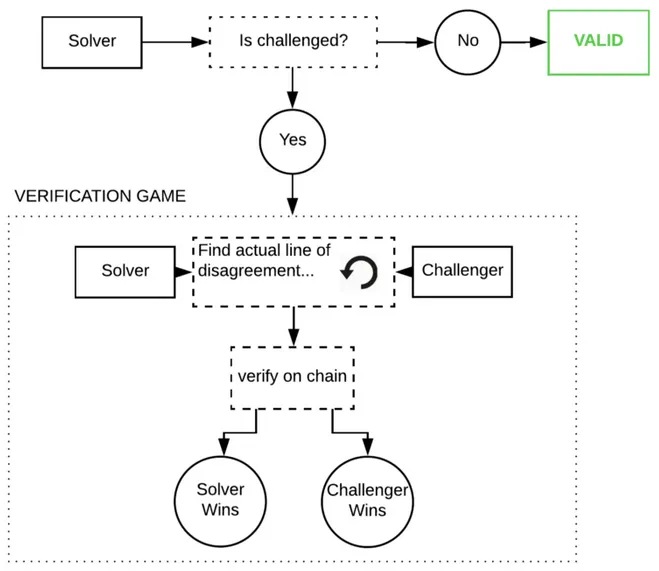

Payments from node owners are streamed to node operators only while the operators comply with certain quality of service requirements. This makes Sigmoid a trustless coordination layer between node owners and operators. Over time, Sigmoid's goal is to abstract away all of the complexity of running crypto-specific node infrastructure so that competent folks from AI can contribute to large decentralized AI networks as node operators and domain experts. We, as the crypto community, can support them by providing capital.
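
To make the idea of QoS-gated payment streaming concrete, here is a minimal Python sketch. It is not Sigmoid's actual implementation: the `EpochReport` fields, the thresholds, the per-epoch rate, and the escrow model are all hypothetical, chosen only to illustrate releasing funds epoch by epoch when a node meets its service requirements.

```python
from dataclasses import dataclass


@dataclass
class EpochReport:
    """Hypothetical per-epoch quality-of-service measurement for one node."""
    uptime: float       # fraction of the epoch the node was reachable (0.0 to 1.0)
    missed_tasks: int   # tasks the node failed to complete during the epoch


def meets_qos(report, min_uptime=0.99, max_missed_tasks=0):
    """Assumed QoS rule: enough uptime and no more than the allowed missed tasks."""
    return report.uptime >= min_uptime and report.missed_tasks <= max_missed_tasks


def stream_payments(escrow, rate_per_epoch, reports):
    """Release `rate_per_epoch` from the owner's escrow for each compliant epoch.

    Amounts are integers (smallest token units). Returns a tuple
    (paid_to_operator, remaining_escrow).
    """
    paid = 0
    for report in reports:
        if escrow < rate_per_epoch:
            break  # escrow exhausted, stop streaming
        if meets_qos(report):
            escrow -= rate_per_epoch
            paid += rate_per_epoch
        # Non-compliant epochs release nothing; the funds stay in escrow.
    return paid, escrow


reports = [EpochReport(1.0, 0), EpochReport(0.95, 0), EpochReport(0.995, 0)]
paid, remaining = stream_payments(escrow=300, rate_per_epoch=100, reports=reports)
```

In this toy run the second epoch falls below the assumed uptime threshold, so the operator is paid for two of the three epochs and the rest remains with the node owner.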

Sigmoid goes beyond node orchestration, though. We understand that liquidity is a critical component of any crypto network, and decentralized AI networks are no different. Sigmoid allows users to permissionlessly stake their AI tokens from one unified user interface and receive sigLST tokens in return. As large AI networks launch, Sigmoid will integrate them all to become the staking hub for AI projects. Through its monitoring of node operators, it can guarantee quality of service for stakers, and the capital efficiency of sigLSTs serves as an extra incentive for users to come and stake with Sigmoid.
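
For readers new to liquid staking tokens, the sketch below shows one common accounting model in Python: a share-based pool where the LST's redemption value grows as staking rewards accrue. This is an assumption for illustration only. The post does not say how sigLSTs are priced, and the `LiquidStakingPool` class and its methods are hypothetical.

```python
class LiquidStakingPool:
    """Hypothetical share-based liquid staking pool (integer token units)."""

    def __init__(self):
        self.total_staked = 0  # underlying AI tokens held by the pool
        self.total_lst = 0     # liquid staking tokens in circulation

    def stake(self, amount):
        """Deposit `amount` underlying tokens and return the LST minted."""
        if amount <= 0:
            raise ValueError("amount must be positive")
        if self.total_lst == 0:
            minted = amount  # first deposit mints 1:1
        else:
            # Mint pro rata so existing holders are not diluted.
            minted = amount * self.total_lst // self.total_staked
        self.total_staked += amount
        self.total_lst += minted
        return minted

    def add_rewards(self, amount):
        """Staking rewards raise the backing without minting new LST."""
        self.total_staked += amount

    def redeem_value(self, lst_amount):
        """Underlying tokens that `lst_amount` LST is currently worth."""
        if self.total_lst == 0:
            return 0
        return lst_amount * self.total_staked // self.total_lst


pool = LiquidStakingPool()
first = pool.stake(1000)    # early staker
pool.add_rewards(100)       # rewards accrue to the pool
second = pool.stake(1100)   # later staker pays the higher exchange rate
```

Under this model the LST stays transferable while the underlying tokens remain locked, which is where the capital efficiency comes from.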

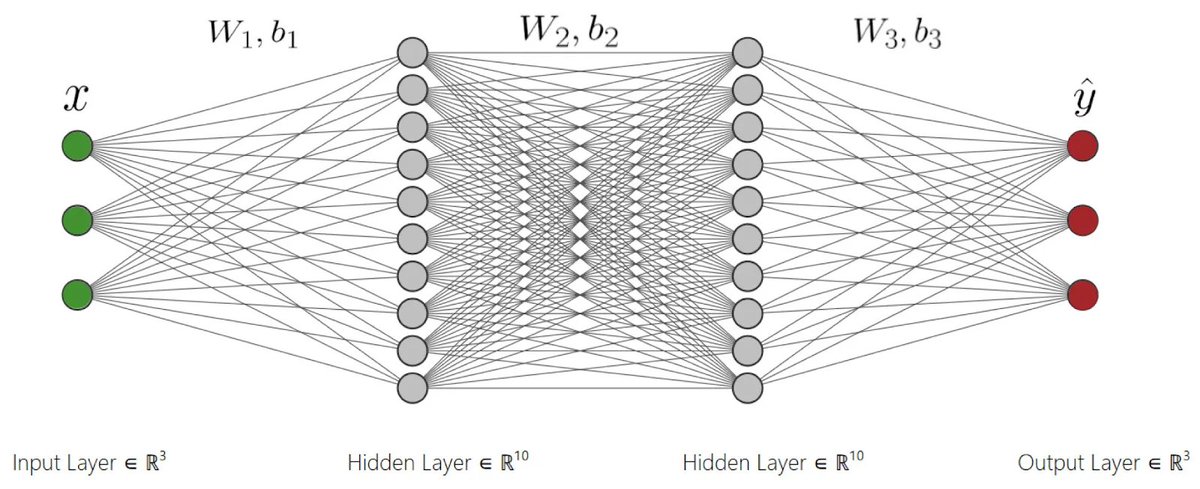

Sigmoid uses a threshold signature scheme (TSS) to enable secure signing and management of assets on each AI network. This is especially important since many large AI networks don't have smart contracts enabled and aren't expected to have a native DeFi economy.

If you are a cryptography wizard interested in building a high-performance MPC network that can scale to a large number of nodes, DM us now!