Someshwaran Mohankumar

@som23x

Developer Advocate @elastic! Open-source enthusiast, love to collaborate and share knowledge. I enjoy coding, troubleshooting, and blogging Tech!

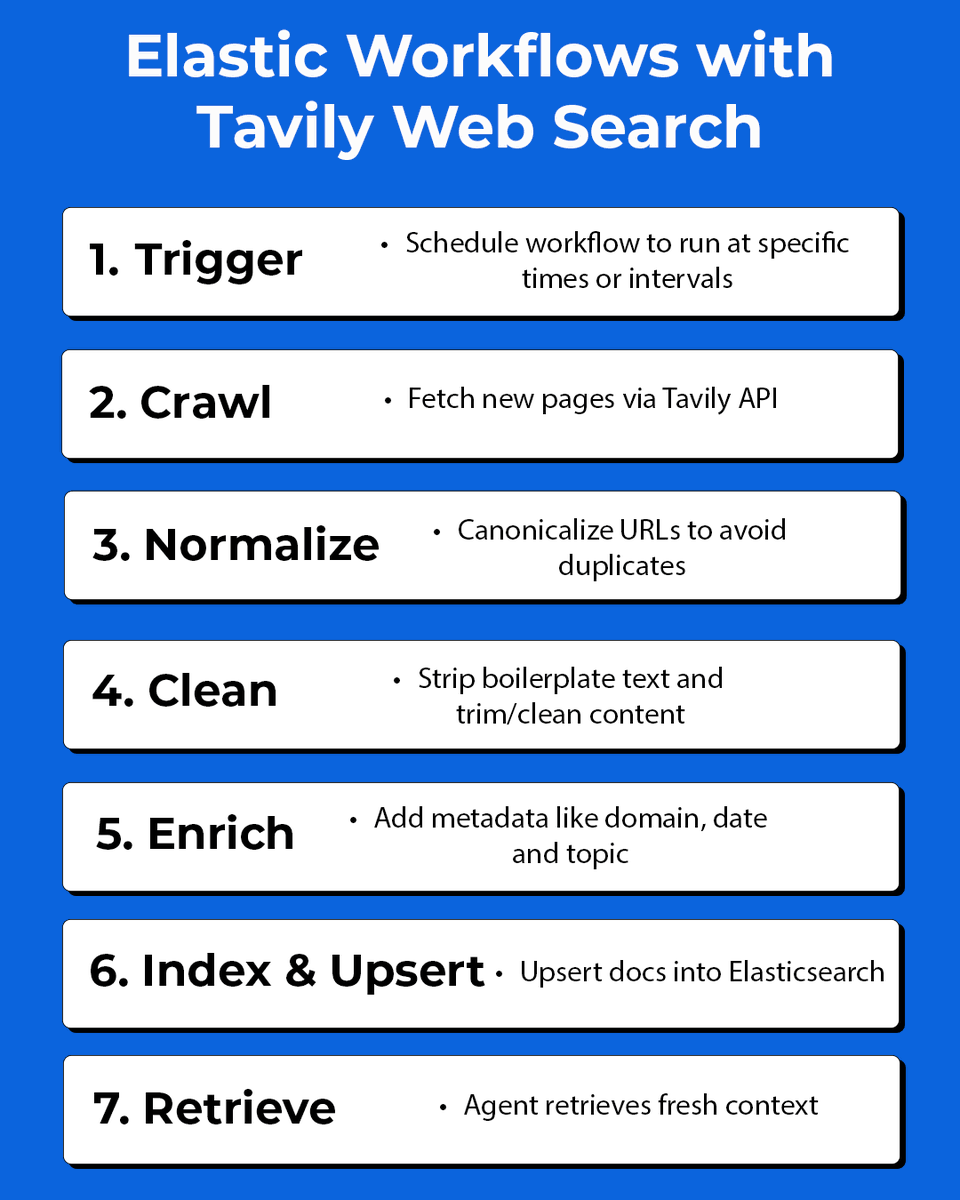

We are making AutoOps free for every self-managed cluster as a direct investment in the Elasticsearch community. This provides the full diagnostic layer previously reserved for commercial tiers, allowing SREs and Admins to move from reactive troubleshooting to proactive health management.

Stop hunting for root causes. AutoOps correlates metadata every 10 sec to identify bottlenecks and provides the exact Elasticsearch commands to fix them.

• Proactive Stability: Identifies shard imbalances and JVM spikes before they trigger an incident.

• Secure & Metadata-based: Your data never leaves your environment. We only analyze cluster metadata.

• No new monitoring clusters to maintain: Connect via Cloud Connect in ~5 minutes.

Move from reactive troubleshooting to expert-level feedback.

AutoOps: go.es.io/46scf0n

Read the Blog: go.es.io/3OBjzAH