John P

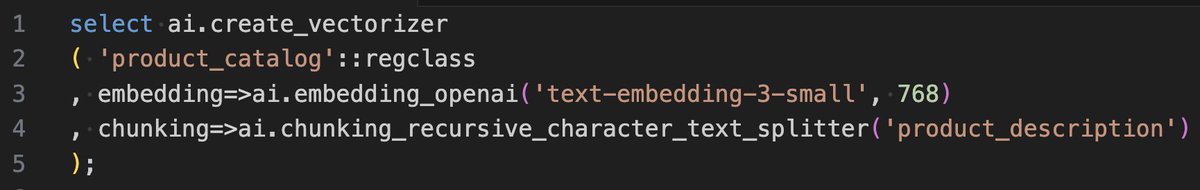

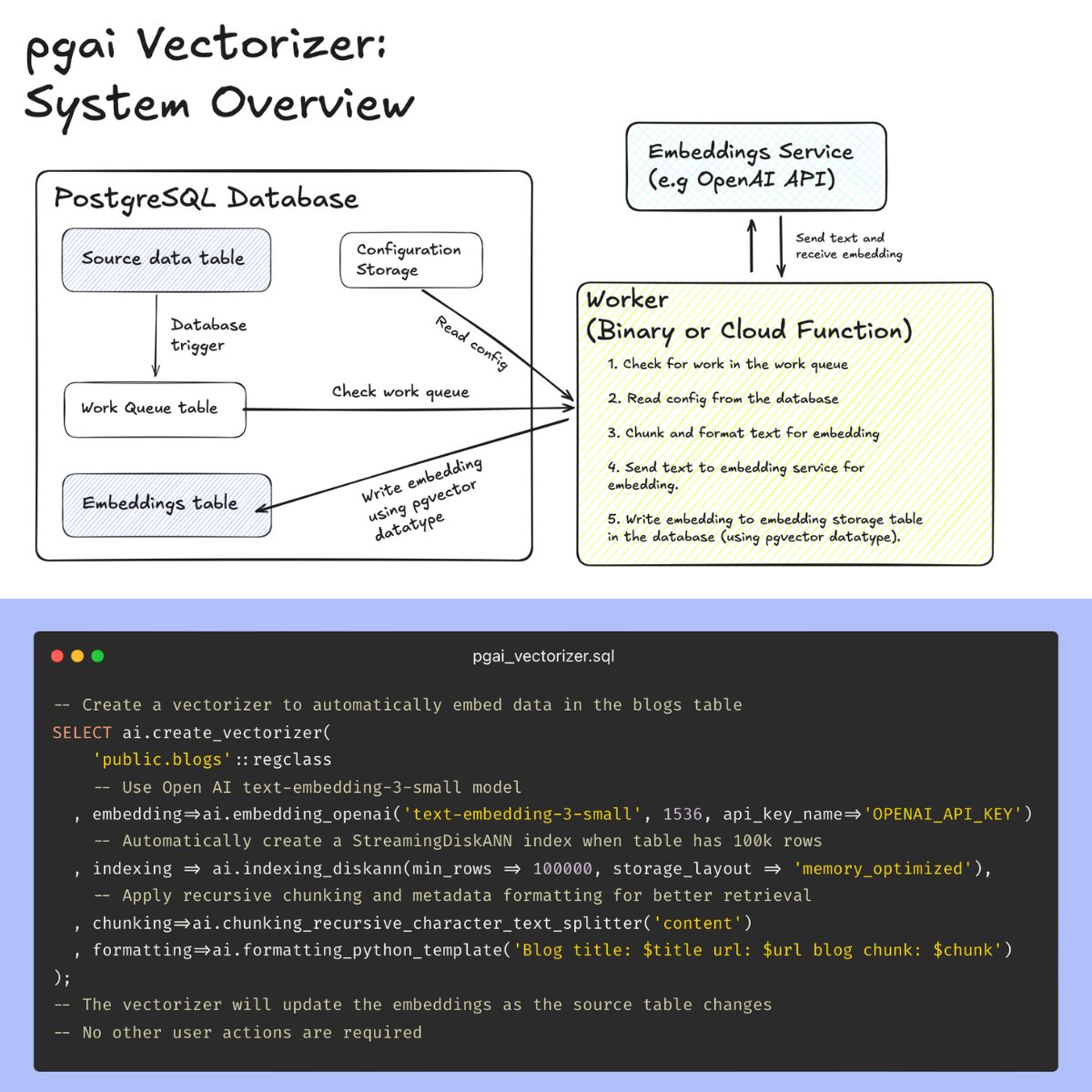

VECTOR DATABASES ARE THE WRONG ABSTRACTION.

Here’s a better way: introducing pgai Vectorizer, a new open-source PostgreSQL tool that automatically creates and syncs embeddings with source data, just like a database index.

❌ Why vector databases fail

Vector databases treat embeddings as independent data, divorced from the source data they are created from, rather than what they truly are: derived data. This pitfall means that many AI projects that start out as simple vector search implementations inevitably evolve into a complex orchestra of monitoring, synchronization, and firefighting.

😓 Keeping embeddings in sync is hard

To avoid stale embeddings, engineering teams have to build and maintain a maze of ETL pipelines, juggle multiple databases (vector DB, metadata store, lexical search), and manage complex queuing systems for updates. Add monitoring for data drift, alert systems for stale results, and validation checks across systems, and you have a brittle infrastructure that inevitably breaks down, leading to stale embeddings and wasted engineering hours.

What if you could just use Postgres instead?

✅ Pgai Vectorizer: Vector embeddings as database indexes

Pgai Vectorizer treats embeddings like database indexes. It automatically creates, updates, and maintains embeddings as your data changes. Just like an index, the database handles all the complexity: syncing, versioning, and cleanup happen automatically. This means no manual tracking, zero maintenance burden, and the freedom to rapidly experiment with different embedding models and chunking strategies without building new pipelines.

🤔 Why did we build pgai Vectorizer?

Our team at @timescaledb built pgai Vectorizer because many developers regard PostgreSQL as the “Swiss army knife” of databases: it can handle everything from vectors and text data to JSON documents.
We think an “everything database” like PostgreSQL is the way to eliminate the nightmare of managing multiple databases, making it the ideal home for vectorizers and the foundation for AI applications.

⚙️ How does pgai Vectorizer work?

Check out the attached code snippet: it takes just 6 lines of SQL to put your embedding creation pipeline on autopilot with pgai Vectorizer! Under the hood, pgai Vectorizer checks for modifications to the source table (inserts, updates, and deletes) and asynchronously creates and updates vector embeddings in an external worker.

🧑‍💻 Sounds exciting! How can I get started?

Pgai Vectorizer is open source under the PostgreSQL license and free to use on any PostgreSQL database. You can find installation instructions on the pgai GitHub repository (see end of post). It’s also available as a managed service in Timescale’s PostgreSQL cloud platform.

📚 Learn more

[1] Pgai GitHub repo: github.com/timescale/pgai
[2] Technical explainer post: timescale.com/blog/vector-da…

Share this post with your followers to let them know about pgai Vectorizer, and comment with your reactions and questions.
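
For readers who want the "under the hood" idea in concrete terms, here is a minimal Python sketch of the general pattern described above: changes to source rows (inserts, updates, deletes) are recorded, and a separate worker later brings the embeddings back in line. This is not pgai Vectorizer's code or API. `SourceTable`, `EmbeddingStore`, `run_worker`, and `toy_embed` are hypothetical names made up for illustration, and the hash-based "embedding" is only a stand-in for a real model.

```python
import hashlib
from collections import deque
from dataclasses import dataclass, field


def toy_embed(text: str, dim: int = 4) -> list[float]:
    """Hypothetical stand-in for an embedding model: a deterministic hash-based vector."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [byte / 255.0 for byte in digest[:dim]]


@dataclass
class SourceTable:
    """Toy source table that records which rows changed (assumed design, not pgai's)."""
    rows: dict[int, str] = field(default_factory=dict)
    pending: deque = field(default_factory=deque)  # ids of rows awaiting a sync

    def upsert(self, row_id: int, text: str) -> None:
        # Inserts and updates both store the text and queue the row for re-embedding.
        self.rows[row_id] = text
        self.pending.append(row_id)

    def delete(self, row_id: int) -> None:
        # Deletes are queued too, so the derived embedding can be cleaned up.
        self.rows.pop(row_id, None)
        self.pending.append(row_id)


@dataclass
class EmbeddingStore:
    """Derived data: one vector per source row id."""
    vectors: dict[int, list[float]] = field(default_factory=dict)


def run_worker(table: SourceTable, store: EmbeddingStore) -> int:
    """Drain the change queue, embedding live rows and dropping vectors for deleted ones."""
    processed = 0
    while table.pending:
        row_id = table.pending.popleft()
        text = table.rows.get(row_id)
        if text is None:
            store.vectors.pop(row_id, None)  # row is gone, so remove its embedding
        else:
            store.vectors[row_id] = toy_embed(text)  # (re)compute from current text
        processed += 1
    return processed


if __name__ == "__main__":
    table, store = SourceTable(), EmbeddingStore()
    table.upsert(1, "Postgres as a vector store")
    table.upsert(2, "A row that will be deleted")
    run_worker(table, store)   # embeddings now exist for rows 1 and 2

    table.upsert(1, "Postgres as an everything database")
    table.delete(2)
    run_worker(table, store)   # row 1 is re-embedded, row 2's vector is removed
    print(sorted(store.vectors))
```

The point of the sketch is the shape, not the details: writes to the source data never compute embeddings themselves, they only record that something changed, and the worker is the single place where derived vectors are created, refreshed, or removed.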