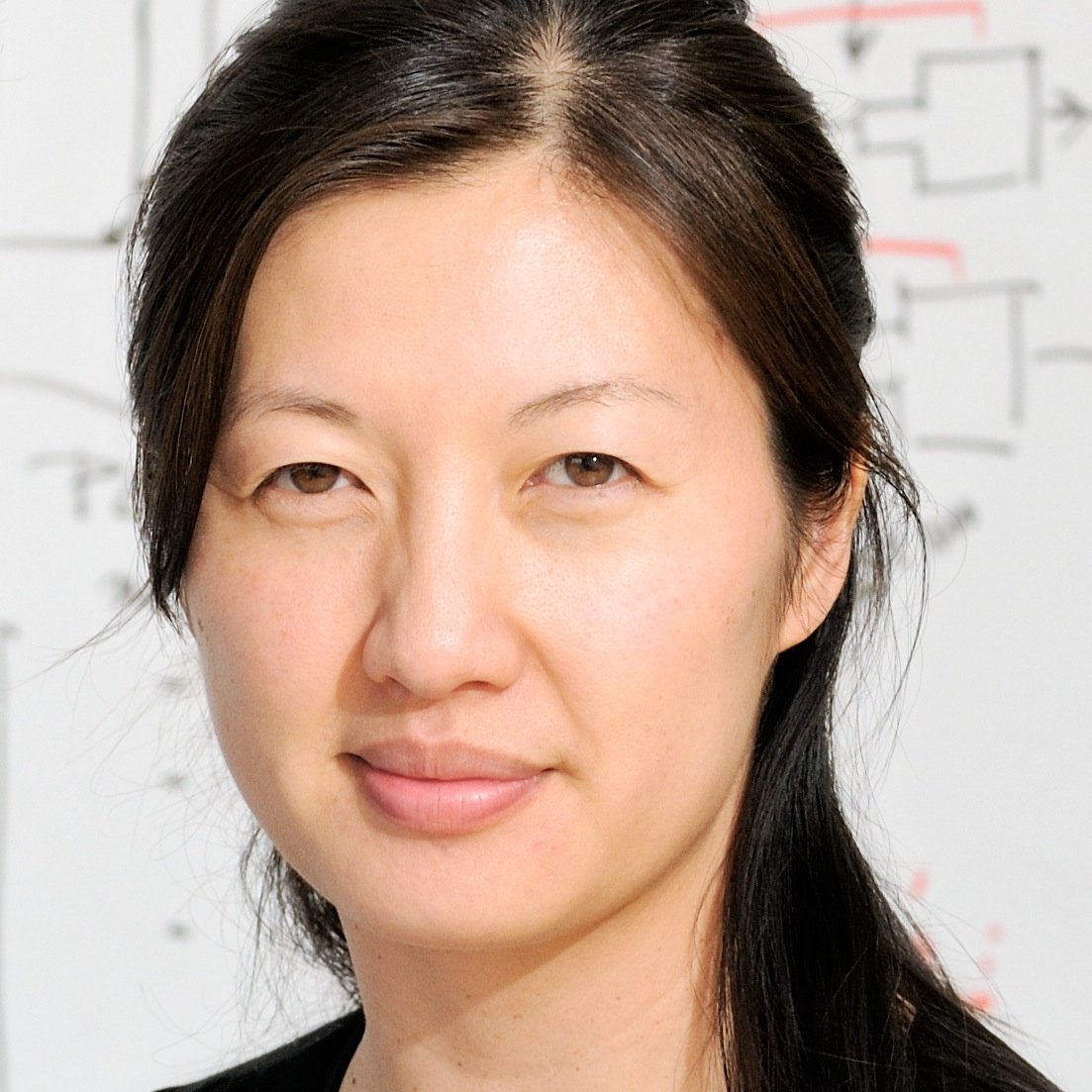

Weinan Sun

@sunw37

Neuroscience, Artificial Intelligence, and Beyond. Assistant professor, Neurobiology and Behavior @CornellNBB

There was a nice time when researchers talked about various ideas quite openly on Twitter (before they disappeared into the gold mines :)). My guess is that you can get quite far even in the current paradigm by introducing a number of memory ops as "tools" and throwing them into the mix in RL. E.g. current compaction and memory implementations are crappy, early first examples that were somewhat bolted on, but both can be fairly easily generalized and made part of the optimization as just another tool during RL. That said, neither of these is fully satisfying, because people are clearly capable of some weight-based updates (my personal suspicion: mostly during sleep). So there should be even more room for more exotic approaches to long-term memory that do change the weights, but exactly how is not obvious. This is a lot more exciting, but also more into the realm of research outside the established prod stack.

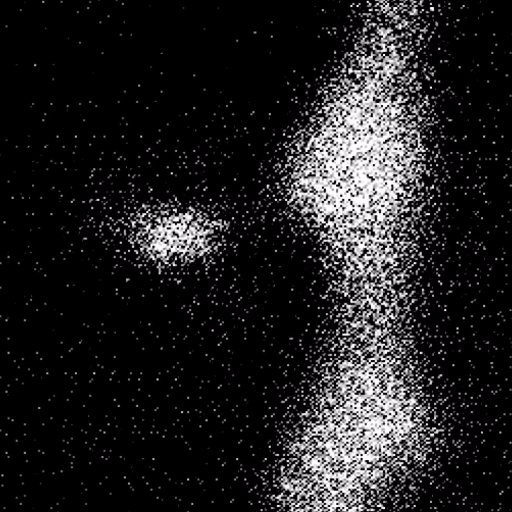

🚀 Looking to hire a light-sheet microscopy scientist to help us design, build, and optimize the next-gen platform that will power mammalian connectomics at scale. If you’re excited to join a fast-paced team doing high-impact science, take a look: jobs.lever.co/convergentrese… 🔬⚙️

"Now, a neural network can approximate... or it can understand. Be the spiral, my friend."

@E11BIO is excited to unveil PRISM technology for mapping brain wiring with simple light microscopes. Today, brain mapping in humans and other mammals is bottlenecked by accurate neuron tracing. PRISM uses molecular ID codes and AI to help neurons trace themselves.

We discovered a new cell barcoding approach exceeding comparable methods by more than 750x. This is the heart of PRISM. We integrated this capability with microscopy and AI image analysis to automatically trace neurons at high resolution and annotate them with molecular features.

This is a key advance towards economically viable brain mapping - 95% of costs stem from neuron tracing. It is also an important step towards democratizing neuron tracing for everyday neuroscience. Solving these problems is critical for curing brain disorders, building safer and human-like AI, and even simulating brain function.

In our first pilot study, we acquired a unique dataset in mouse hippocampus. Barcodes improved the accuracy of tracing genetically labelled neurons by 8x – with a clear path to 100x or more. They also permit tracing across spatial gaps – essential for mitigating tissue section loss in whole-brain scaling. Using molecular annotation, we uncover an intriguing feature of synaptic organization, demonstrating how PRISM can be used for systematic discovery 🧵