
SweepAI

216 posts


@sweepai

Sweep is the AI coding assistant built for JetBrains. Download at https://t.co/7ZK0kiDANr.

San Francisco · Joined March 2023

7 Following · 1.4K Followers

SweepAI retweeted

New edit prediction providers just dropped in Zed: @_inception_ai, @sweepai, @ollama, and @GitHub Copilot NES.

We also simplified adding new providers. If there's a model you want to use in Zed, open a PR.

zed.dev/blog/edit-pred…


SweepAI retweeted

🚀 We just shipped v0.222!

Edit prediction now supports multiple providers: @github Copilot's Next Edit Suggestions, @ollama, @mistralai's Codestral, @sweepai, and @_inception_ai's Mercury Coder.

zed.dev/blog/edit-pred…


SweepAI retweeted

using this in nvim and damn, it's really good. this is on an m1 16gigs so

SweepAI @sweepai

Introducing Sweep Next-Edit, an open-weights 1.5B model trained to predict your next edit. Sweep Next-Edit outperforms models 4x its size while being small enough to run locally. We're open-sourcing the weights so the community can build fast, privacy-preserving autocomplete for every IDE.


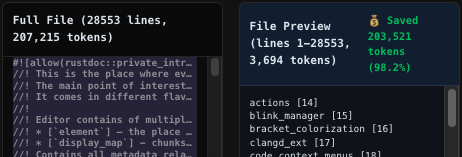

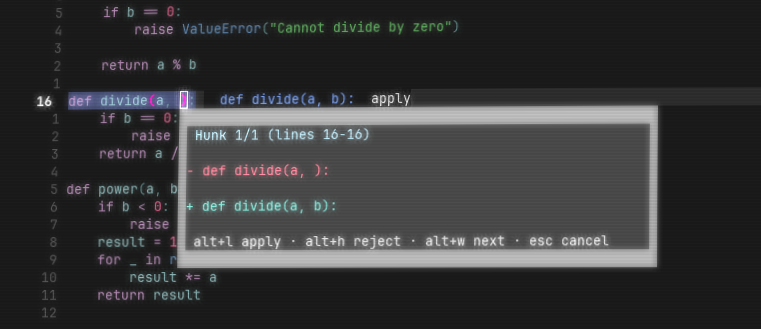

Results after implementing in Sweep Agent:

- 90% reduction in token usage for files >2,000 lines

- Faster response times (fewer tokens = lower latency)

- Reduced context rot

Try it in our JetBrains plugin: plugins.jetbrains.com/plugin/26860-s…

And read the full blog here: blog.sweep.dev/posts/read-file


We optimized the outline format for token efficiency.

Our initial format looked like this (15 tokens):

[...5 nested items, read lines 298-558 for details]

We found this format to be 30% more token efficient across the entire outline (9 tokens):

(5 children) [298:558]

For Zed's editor.rs (207k tokens), our outline is only 3.7k tokens - a 98.2% reduction.
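The figures above can be sanity-checked with a few lines of Python. This is a minimal sketch, not Sweep's code: the function names `verbose_summary`, `compact_summary`, and `reduction_percent` are hypothetical, chosen only to reproduce the two summary strings quoted above and the reduction arithmetic. Token counts depend on the tokenizer, so the sketch takes them as given rather than recomputing them.

```python
def verbose_summary(child_count: int, start: int, end: int) -> str:
    """Initial per-node summary format, as quoted in the post."""
    return f"[...{child_count} nested items, read lines {start}-{end} for details]"


def compact_summary(child_count: int, start: int, end: int) -> str:
    """Shorter per-node summary format, as quoted in the post."""
    return f"({child_count} children) [{start}:{end}]"


def reduction_percent(original_tokens: float, reduced_tokens: float) -> float:
    """Percentage of tokens saved, rounded to one decimal place."""
    return round(100 * (1 - reduced_tokens / original_tokens), 1)


print(verbose_summary(5, 298, 558))
print(compact_summary(5, 298, 558))

# editor.rs: 207k tokens in the full file, 3.7k tokens in the outline.
print(reduction_percent(207_000, 3_700))  # 98.2
```

Note that the single line shown goes from 15 to 9 tokens, which is a larger saving than the 30% quoted for the whole outline; presumably most outline lines are not collapsed-children summaries, so the average gain is smaller.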


We decreased token usage for ultra-large files by 90%.

When navigating large files, coding agents read by start and end line numbers, so they either miss context (range too narrow) or waste tokens (range too wide).

We used the JetBrains project structure to return structural outlines for large files, letting agents navigate precisely.
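To make the idea concrete, here is a rough sketch of a structural outline. It is an assumption-laden stand-in: Sweep builds its outline from the JetBrains project structure, whereas this example uses Python's standard `ast` module on Python source only, and the `outline` function and its output layout are hypothetical. It lists each class and function with its line range so that an agent could request just the range it needs.

```python
import ast

# Node types treated as outline entries in this sketch.
DEFS = (ast.ClassDef, ast.FunctionDef, ast.AsyncFunctionDef)


def outline(source: str) -> list[str]:
    """Return one line per class/function: name, nested-definition count, line range."""
    lines: list[str] = []

    def visit(node: ast.AST, depth: int) -> None:
        # Only definitions that are direct children are listed; definitions
        # hidden inside if/try blocks are ignored to keep the sketch short.
        for child in ast.iter_child_nodes(node):
            if not isinstance(child, DEFS):
                continue
            nested = [c for c in ast.iter_child_nodes(child) if isinstance(c, DEFS)]
            kind = "class" if isinstance(child, ast.ClassDef) else "def"
            summary = f" ({len(nested)} children)" if nested else ""
            lines.append(
                f"{'  ' * depth}{kind} {child.name}{summary} "
                f"[{child.lineno}:{child.end_lineno}]"
            )
            visit(child, depth + 1)

    visit(ast.parse(source), 0)
    return lines


SAMPLE = '''class Editor:
    def open(self, path):
        return path

    def save(self):
        pass


def main():
    pass
'''

for line in outline(SAMPLE):
    print(line)
# class Editor (2 children) [1:6]
#   def open [2:3]
#   def save [5:6]
# def main [9:10]
```

The outline grows with the number of definitions rather than the number of lines, which is why very large files benefit the most.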


@dingchilling @continuedev We'll have something for you in VSCode soon. If you're using JetBrains, you can try: plugins.jetbrains.com/plugin/26860-s…


@sweepai can i use it with @continuedev out of the box with ollama? what does the config file look like for this model?


Sweep 0.5B is out! It beats 7B models in autocomplete performance while being 14x smaller.

huggingface.co/sweepai/sweep-…


SweepAI retweeted

local sweep next-edit implemented in neovim as a plugin, icl my laptop is ass with the 1.5b so developing this plugin was very annoying and i really want to use local inference instead of their api, so i might pause on this till a smaller model is out, but it's still really cool.

SweepAI @sweepai

Introducing Sweep Next-Edit, an open-weights 1.5B model trained to predict your next edit. Sweep Next-Edit outperforms models 4x its size while being small enough to run locally. We're open-sourcing the weights so the community can build fast, privacy-preserving autocomplete for every IDE.


@sabir_huss50540 @ycombinator No, but they're really good for code completion


@sweepai @ycombinator Can next edit models replace big LLMs?


@Mualim_dev @sijiramakun we have some sample code here to run the model: huggingface.co/sweepai/sweep-…


@forwarddeploy Thank you Umesh! Can't wait for more IDEs to experience our autocomplete


Download the model here: huggingface.co/sweepai/sweep-…

JetBrains users can try it now in the Sweep plugin:

plugins.jetbrains.com/plugin/26860-s…

Building for VSCode, Neovim, or your own editor? We'd love to see what you build.


Training pipeline:

1. SFT on ~100k examples from popular permissively-licensed repos (4hrs on 8xH100)

2. RL for 2000 steps with tree-sitter parse checking + size regularization

RL fixed edge cases SFT couldn't, like when the model generates code that doesn't parse or produces overly verbose outputs.
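As an illustration of how a parse check and a size penalty might be combined into one RL reward, here is a minimal sketch. Everything in it is an assumption rather than Sweep's actual reward: the `reward` function, its weights, and the exact-match base score are hypothetical, and Python's built-in `ast.parse` stands in for tree-sitter so the example runs with the standard library alone.

```python
import ast


def parses(code: str) -> bool:
    """Stand-in for a tree-sitter parse check: True if the code is valid Python."""
    try:
        ast.parse(code)
        return True
    except (SyntaxError, ValueError):
        return False


def reward(
    predicted: str,
    target: str,
    parse_penalty: float = 1.0,  # hypothetical weight
    size_weight: float = 0.01,   # hypothetical weight per extra character
) -> float:
    """Score a predicted edit against the target edit.

    Base score: 1.0 for an exact match, else 0.0.
    Parse check: subtract parse_penalty if the prediction does not parse.
    Size regularization: subtract a penalty for each character beyond the target length.
    """
    score = 1.0 if predicted == target else 0.0
    if not parses(predicted):
        score -= parse_penalty
    extra_chars = max(0, len(predicted) - len(target))
    score -= size_weight * extra_chars
    return score


target = "total = a + b\n"
exact = reward("total = a + b\n", target)
broken = reward("total = (a + b\n", target)
verbose = reward("total = a + b\nprint(total)\nprint(a, b)\n", target)

# The exact match scores highest; unparseable and padded outputs score lower.
print(exact > verbose > broken)
```

Under these assumed weights, a prediction that fails to parse is penalized more heavily than one that is merely too long, which matches the two failure modes the post says RL addressed.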
