Tess Hegarty 🔸

2.6K posts

@thegartsy

AI development is inherently political & social. PhD @Stanford | formerly @MIT | 🔸 10% Pledger

I didn’t see the doc yet - but I do think the best “don’t panic” people don’t rly do interviews because most of the best arguments against doomers (who I think are very logical except with regards to their own branding) essentially come down to opinions on the nature of life that the vast majority of people will not like or be ok with. Like I’ve had people make very strong arguments to me about why zero care should be spent addressing doomer concerns, and it basically comes down to things like human life isn’t particularly special in the context of intelligence, or the philosophies of the people building AI are based on such and such superior cultural approach that I trust more than the current one, etc etc. Obviously that’s extremely basic, but I think the reason we don’t hear these arguments in public is because they tend to end up being like: well, a bunch of ppl r gna be poor in the short term and it’ll be awful and it’s gna be a bad bottleneck time, or the cybernetic system deserves priority over individuals, hence a certain amount of suffering, death, and merging and possibly even extinction is ok. I don’t see these types of arguments ever risking being subjected to actual debate or rigor from the opposition. It’s pretty crazy there aren’t more well documented, well planned, earnest formal debates between the best doomers and the best optimists with fact checking - and mebe it’s not a totally formal debate cuz I want people to debate their side and I want optimists to have to stand up to the most meticulous scrutiny, and if it still stands then awesome. Same for doomers, idk. It’s insane that it never actually comes down to being person v person. There’s almost no reason to do any more docs or have any more discussions if we can’t do that, because it’s just ppl yelling unchallenged arguments back and forth. This is too long, sorry.

@MichaelSteidel You spot a difference between these two signs?

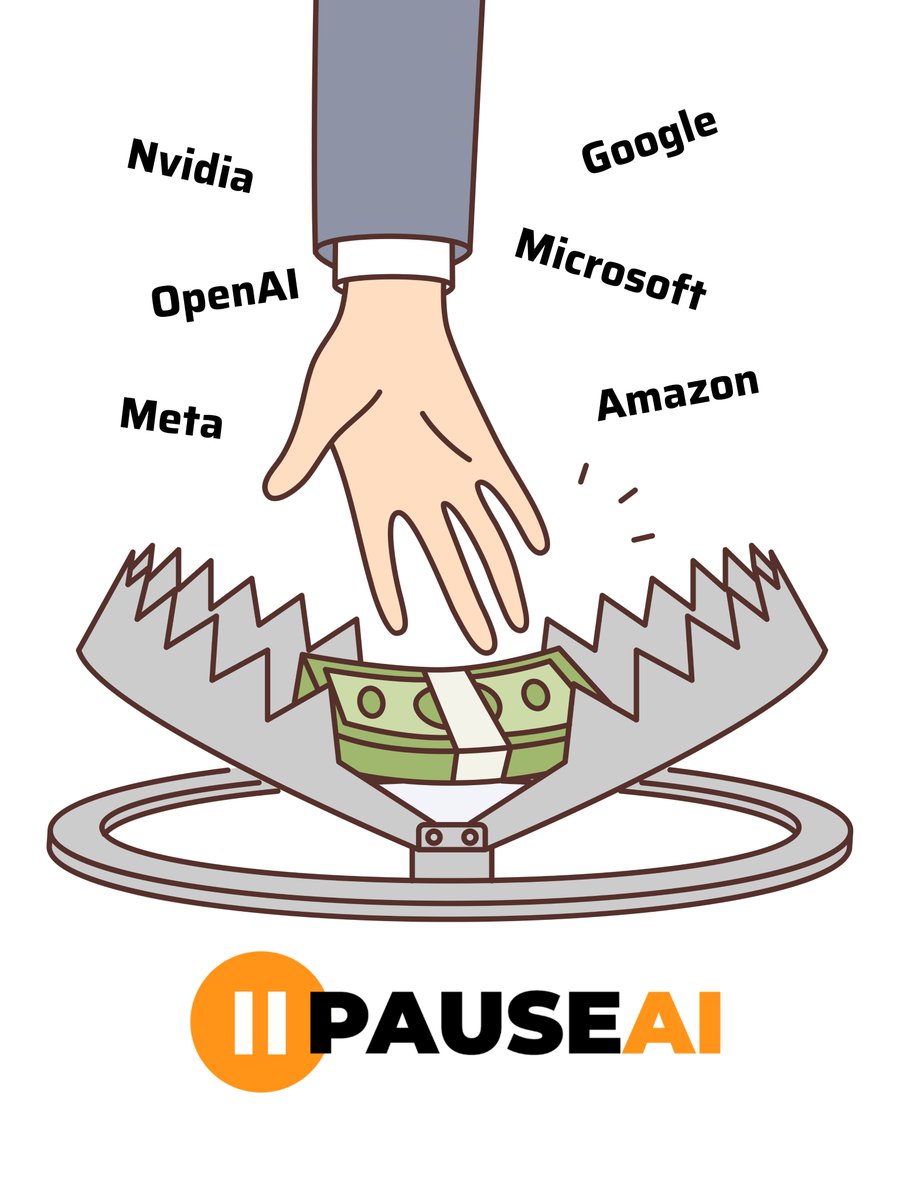

Let’s not end up like the frog getting boiled alive. Try to feel every degree by which the temperature of the water increases. Pay attention. Follow the money. Ask the big questions. Don’t stop demanding answers.

On our way to OpenAI!

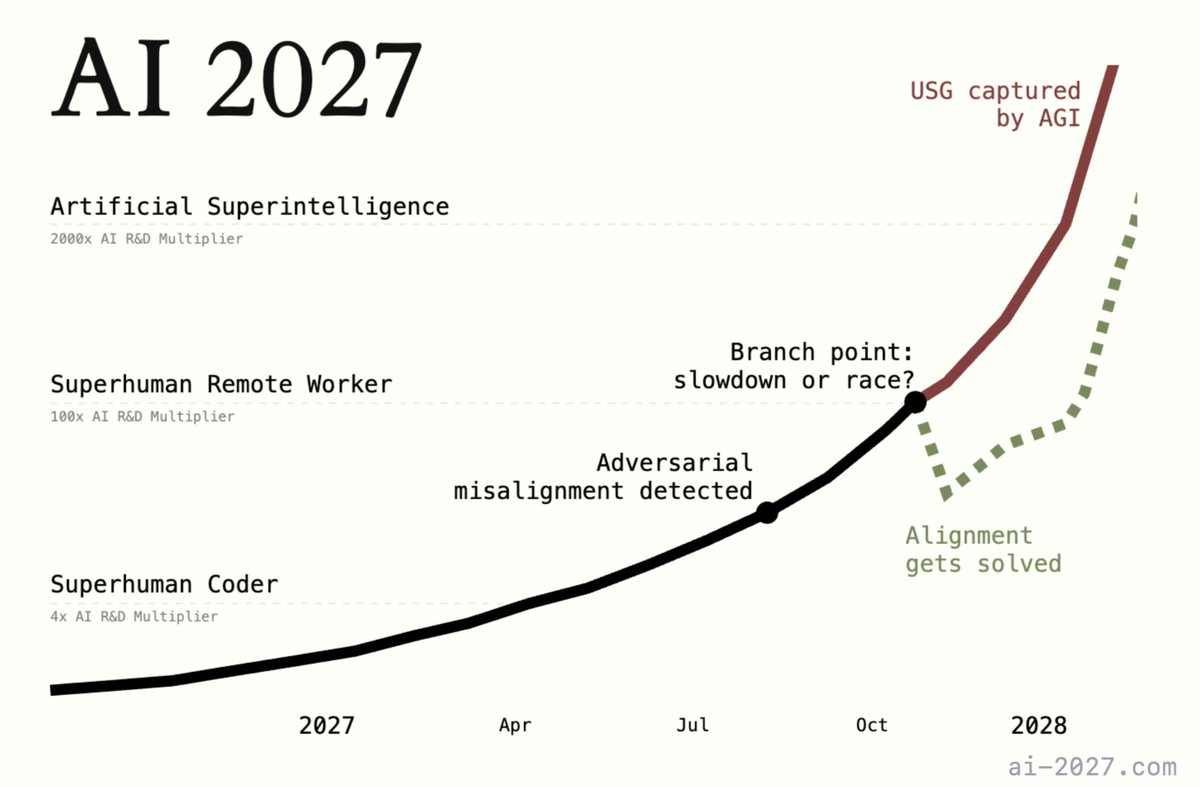

"How, exactly, could AI take over by 2027?" Introducing AI 2027: a deeply-researched scenario forecast I wrote alongside @slatestarcodex, @eli_lifland, and @thlarsen

AI springs from California. Thank you, @CAgovernor Newsom, for recognizing the opportunity and responsibility we all share to enable small entrepreneurs and academia – not big tech – to dominate. gov.ca.gov/2024/09/29/gov…

A new study endorsed by @Yoshua_Bengio finds that the risk management practices of most major AI labs are "very weak." xAI came in last, with a 0/5 rating. time.com/7026972/safera…