Thomas Morselt

2.1K posts

@thomasmorselt

Translates data & AI into more revenue, more efficient processes, and valuable insights.

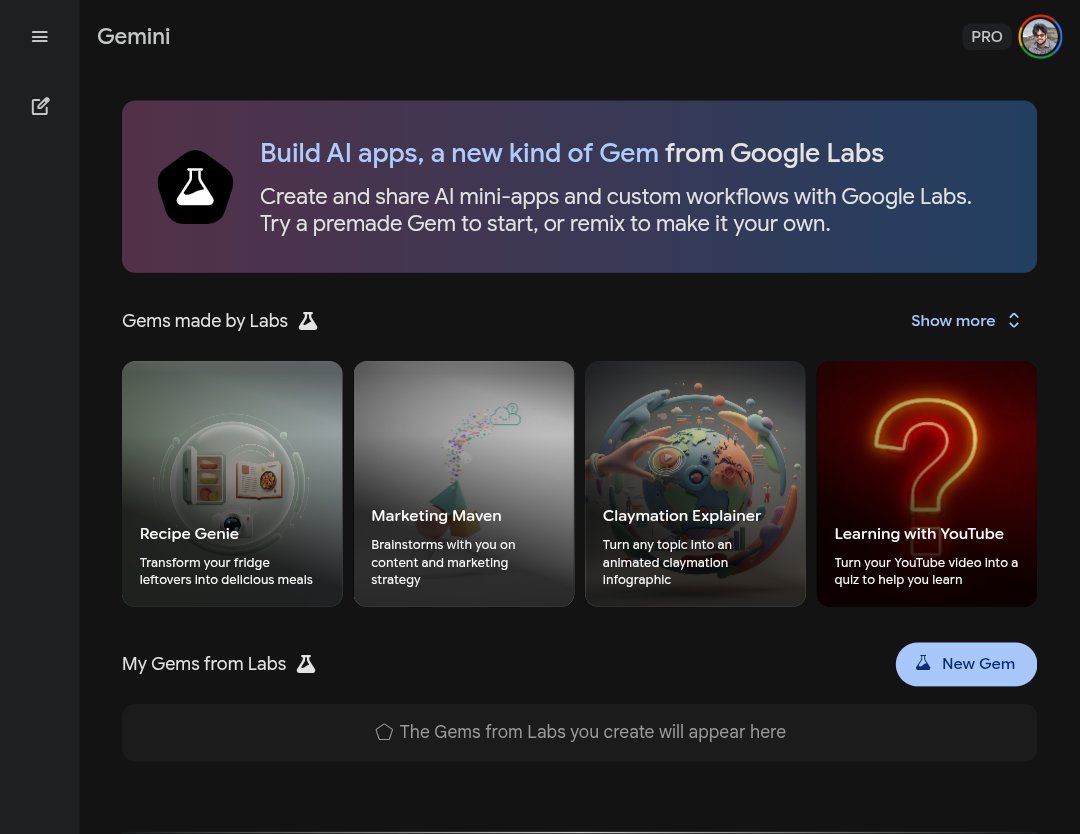

Opal, meet @Geminiapp. 🤝 We’ve now brought Opal, our tool for building AI-powered mini apps, directly into the Gemini web app as an experimental Gem. You can find the Opal Gem in your Gems manager and start creating reusable mini apps to unlock even more customized Gemini experiences. Go forth and customize! Learn more here: blog.google/technology/goo…

We're taking the next big step with Researcher. With Computer Use, it can now securely browse the open and gated web to find hard-to-locate information—even across hundreds of sites—and handle multi-step tasks to uncover insights, take action, and create richer reports.

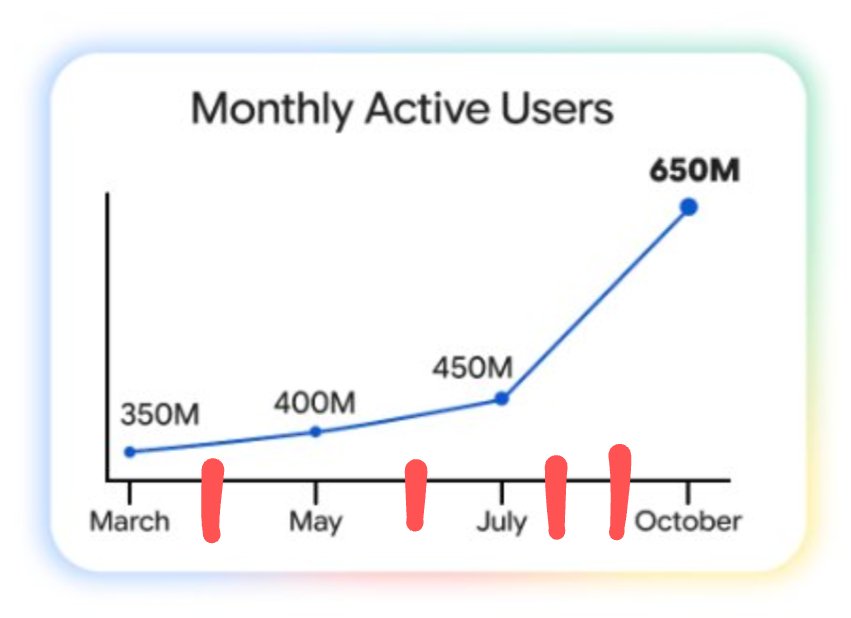

This is how I feel about vibe coding. Any project I try that has any kind of complication starts with an immediate burst of progress. Things are amazing and it feels like a superpower. Then, as I add more complexity, things crash to a halt.
The only projects I think I can create are ones that fall in this "vibe zone": prototypes, UIs, products, proofs of concept, interactions, anything that's simple and has low complexity. The tools are able to make things that fit in that slot. But everything falls to pieces as that complexity curve increases.
And the problem is that any good product design process has increasing complexity. A basic prototype turns into a good prototype as soon as it has layered interactions, transitions, good affordances, hover states, the 1000 tiny little details that make something feel correct and real.
The benefit of vibe coding is supposed to be that you move fast and can whip things out, letting AI do all the work for you. The problem is that it loses steam as soon as the necessary complexity is added. It keeps redoing itself, rewriting code, and affecting unrelated things, which causes other issues. Once you add that complexity, every vibe coding session quickly turns into a whack-a-mole bug-bashing session.
I'm not sure what the solution to this is. With traditional prototyping the solution is to duplicate, add more complexity, create more frames/scenes, tweak, fork, etc. With vibe coding, however, one little prompt can destroy literally everything. There's a stage where I end up walking on prompt eggshells, trying not to give it too much or too little context so that it doesn't go rogue and break everything.
There are only a few exceptions to this: @cursor and @framer. I can make great progress with Cursor, give it narrow context, and I have to approve the edits that it makes. This feels like a correct workflow. The problem is, I can't see the thing that it's making because it's an IDE, not a visual environment. Yes, I can create local builds and refresh my browser and all that kind of stuff. But the visual aspect is totally lost from the coding experience. It's a developer tool.
Framer gets this right because it only allows narrow updates within a single component on the page. Yes, it's limiting because it can only do a single thing at once, but at least it's not trying to create the entire page from scratch and manage it all through a prompt interface.
These seem like the right approaches.
@Cursor: Allow the AI to edit anything, but let the user approve those edits and see them in context.
@Framer: Allow the AI to only narrowly edit a single file or component, to keep complexity to a minimum and reduce catastrophic edits.
I'm optimistic that tools like @Figma, @Lovable, @Bolt, and @V0 can make cool prototypes, but I just keep running into walls when it comes to doing anything more than a basic interaction prototype. They need to do less, IMO. Hopeful that those tools add more controls along the same lines as Cursor and Framer.
I'll also add that this is similar to how we do it with @Basedash chart generation. But we're not a vibe tool in the normal sense, so the parallels are a little harder to draw.

@btibor91 @koltregaskes Agent mode is now available for the Teams plan in America via the iOS app.

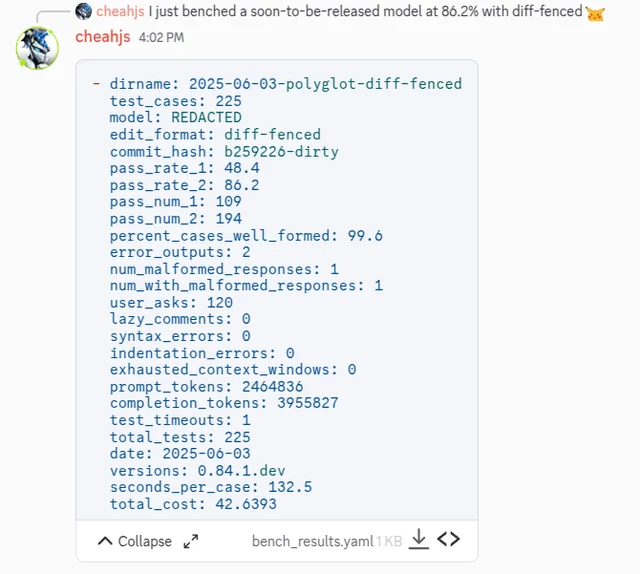

Gemini 2.5 Pro GA version scores 86.2 on aider polyglot

Can’t believe I’m about to say this…but today’s announcements make me want to switch back to Android. Google’s AI is what Apple Intelligence needed to be. @Google can you hook me up? 🤣

@ai_for_success @OpenAI You mean "big thing" right 🙈