Tim Davison ᯅ

1.7K posts

@timd_ca

Scientist building CellWalk • Apple Design Awards Finalist • CEO @ Ako Biotica • visionOS

Calgary, Canada • Joined September 2008

1.2K Following • 4.9K Followers

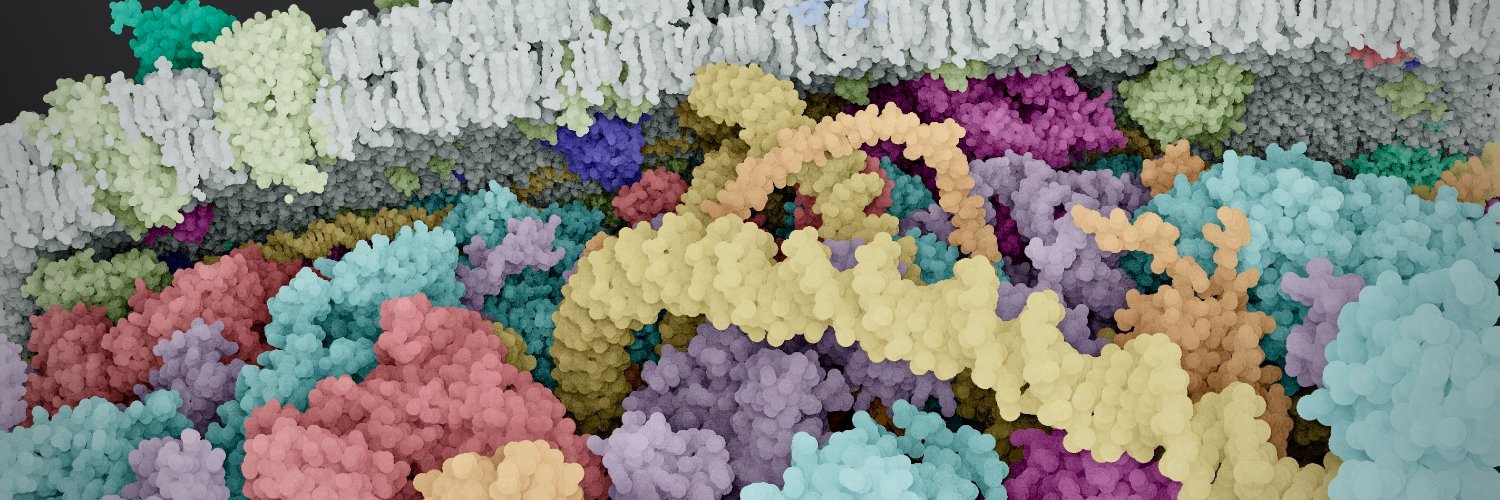

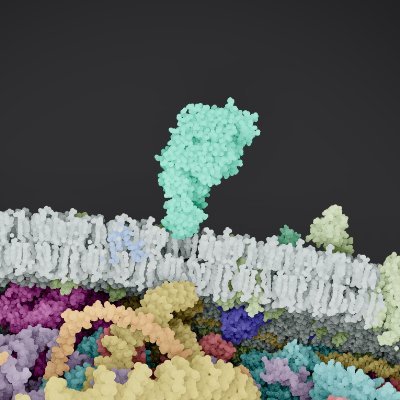

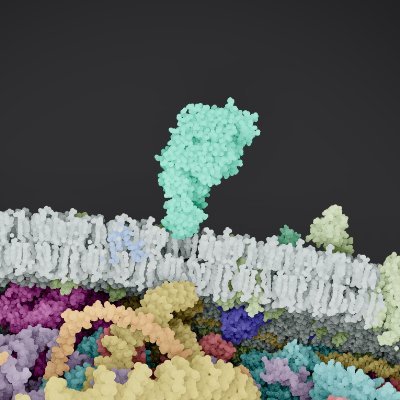

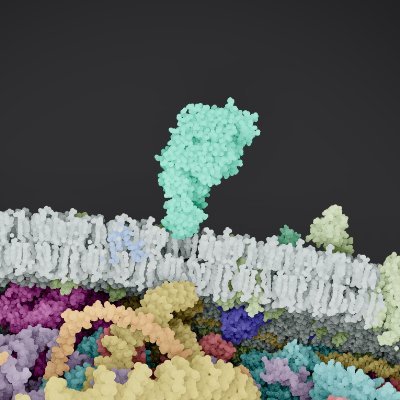

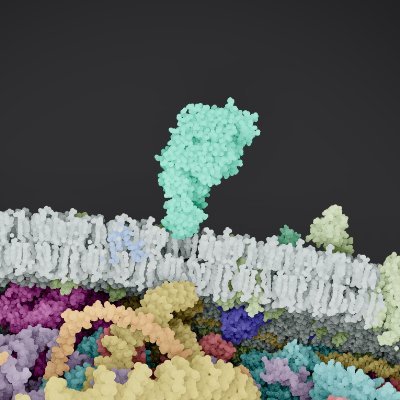

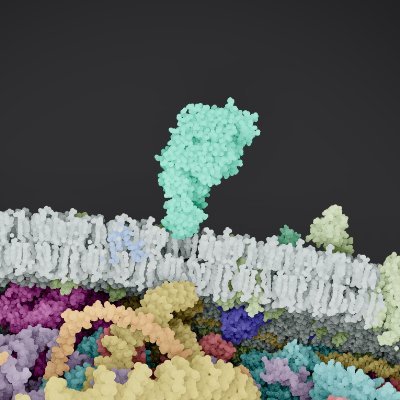

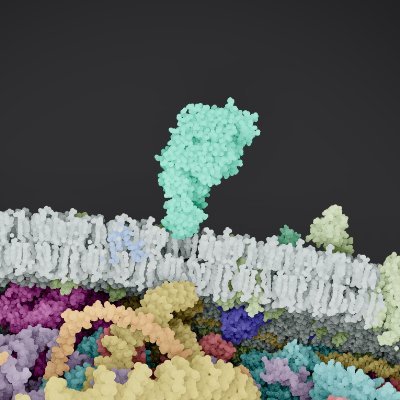

@mode7studio Based on real protein structures. It's an integrative biological model.

@timd_ca Is it a real protein, or did someone draw that up? Looks cool, regardless.

We're shipping an update to CellWalk soon that walks through this question

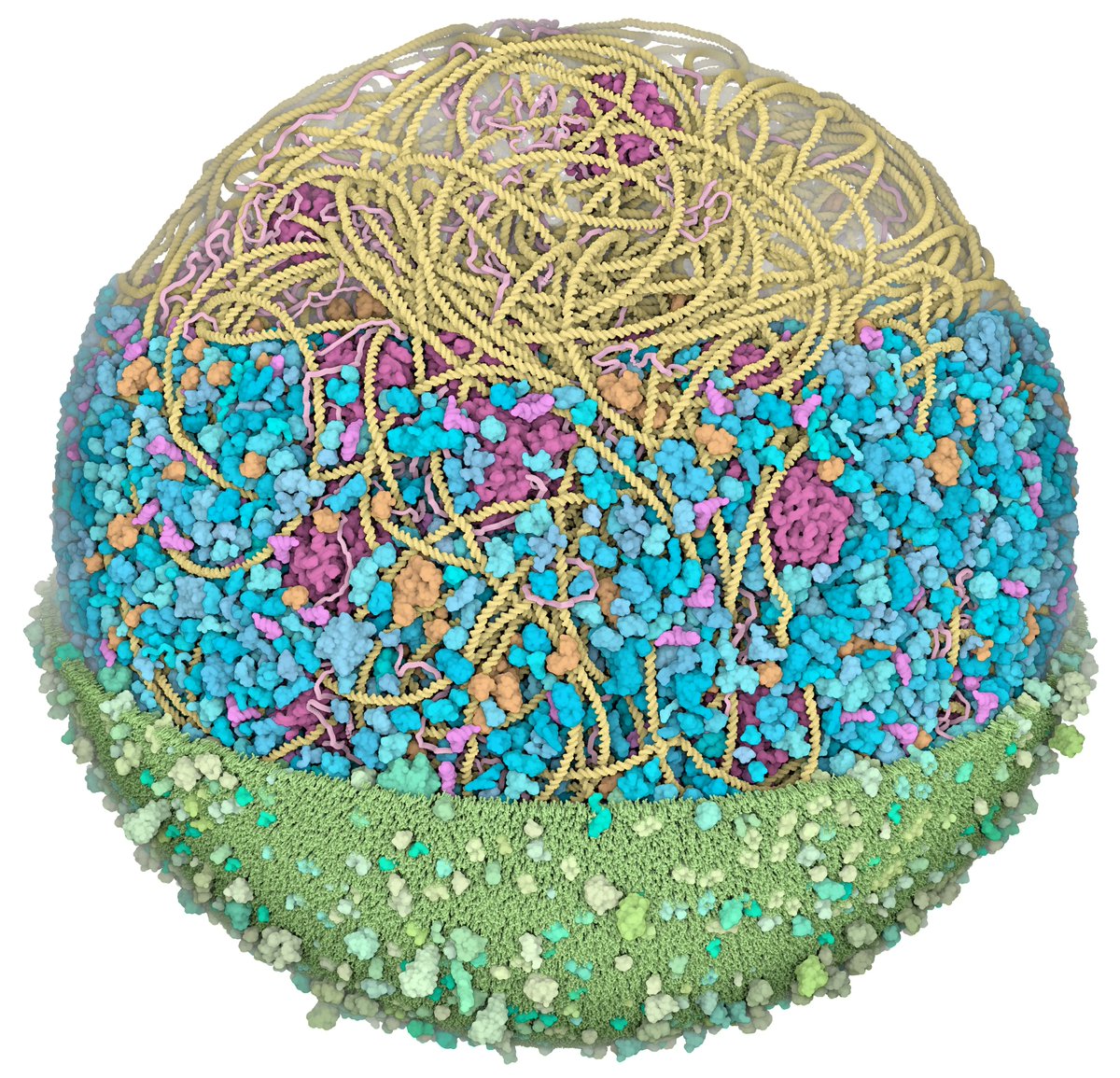

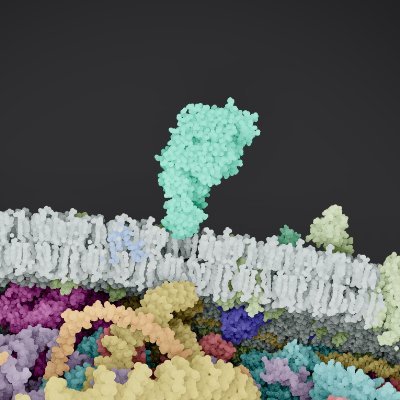

We're looking at the inside of a neuron. The lavender sacs are filled with chemical messages (neurotransmitters). When the neuron fires, they fuse with the outer membrane, turn inside out, and dump their contents onto the next neuron, which gets activated in turn.

Kind of amazing actually.

@Morzignis_Zero yea, we can't do this with electron microscopes unfortunately, so the best we can do right now is build integrative biological models

@timd_ca Lmao. No that isn't. That is some dumbass fake shit.

@entropic_d3ath @matlabdogboy RNA polymerase and ribosomes are the coolest Turing machines

@matlabdogboy @timd_ca Indeed! Computing and Turing machines are among the best things to happen to us, and they have also confused our thinking so much!!

@worldly_bee We do! It's on the App Store (this model is still in beta)

cellwalk.ca

@timd_ca do you have a site with more of these? Such a cool way to understand biology

@McdarghZach Yes, it's an illustrative integrative model of the active zone of a presynaptic bouton. Vesicle pool top, piccolo and bassoon in the middle, other cytoplasmic proteins peeled back, and fused/fusing vesicles bottom. Small piece of a full bouton (few billion atoms).

@timd_ca I love this. Gently. Can't wait to see how you evolve this over time. Very curious to watch how you adapt.

@EasternDaylight We do! They are .cif/pdb files, but we're not releasing them under an open source license at this time :)

@MatthewZ73671 Will add in the next version. Carrying some synaptic vesicle precursors down the axon

@timd_ca Where are the kinesin atlas dudes walking down the microtubules?

@NikoMcCarty The best way is on iPhone and iPad (Mac version coming soon). The neuron/synapse model is in beta; I can send you a TestFlight invite if you're interested.

@timd_ca What are the best ways for people to experience this? Is there a browser view + some kind of Apple Vision view?

Why isn't there a 3Blue1Brown for biology?

For context, 3Blue1Brown is a YouTube channel, founded by Grant Sanderson, that publishes videos about math. Sanderson built an "animation engine," called manim, to help create these videos; it's a Python library that uses code to render smooth animations.

So why is nobody making highly visual, explanatory videos for biology the way 3Blue1Brown does for mathematics, where each video explains a concept using a consistent visual aesthetic? I think there are at least three plausible explanations:

1. Biology demands a larger visual palette than math. Whereas many different ideas in math can be explained using a small number of symbols (charts, equations, shapes), maybe biology just requires a larger array of symbols. Showing a kinesin protein walk on a microtubule demands a completely different set of elements compared to, say, the evolution of a species. Perhaps this makes it harder to create visuals for biology.

I'm not sure this holds up to scrutiny. Math is arguably as broad as biology. 3Blue1Brown has made videos on everything from Bayes' theorem to the Hilbert curve and how Bitcoin works, and all of them share the same visual aesthetic.

2. Biology doesn't have a rich history of visual ideas, so maybe it's harder to align on an aesthetic. Graphs and geometric shapes are many centuries old, and mathematicians consciously draw on these historical norms and conventions. A line chart looks like a line chart regardless of how it's styled. Biology, though, has no such "fixed" visual language, so it takes more effort to create each new visual.

Maybe there's merit to this idea? Everyone draws a chromosome differently, for example; some people might show all 23 pairs at once, or zoom into a single locus, or abstract the entire chromosome down to a few letters. Biology operates across so many orders of magnitude that choosing the scale at which to convey an idea is itself part of the creative act, and there's no inherited convention telling anybody which scale to pick.

3. Maybe it takes too long to build visuals in biology, or the technical bar is too high? If you want to show how an enzyme works at the molecular level, for example, you'd need to understand PyMOL, Blender, etc. Iteration is slow, and the skill set needed to build one type of visual, like how molecules bind, won't necessarily apply to higher-order ideas, like evolution.

This bottleneck is collapsing with AI tools, though. Claude now works directly in Blender and Adobe products, for example, so iterations will be much faster. Maybe we'll see a 3Blue1Brown-esque creator emerge for biology? I'm not sure.

I'm hoping to write about these ideas, so if you have feedback (or reject my claims entirely) please let me know! I'd be keen to hear from you.

> Painting by David Goodsell, whose visual aesthetic has transformed how people think about molecular biology.

@dreamwieber That tree is so perfect for sitting under and coding
