Autistic people who specced into manually-learned social skills get oracle-zoned rather than passing for allistic. You generally get seen as a useful and interesting font of mysterious wisdom, not as a fellow normal person who should be socially included.

people are trying to argue with this and it’s literally the truth. a kilogram of beef requires over 15,000 litres of water to produce. a vegan who uses chatgpt every day is living a more sustainable lifestyle than someone who regularly eats beef while boycotting AI.

Millionaire Tax Already Working: tinyurl.com/4sm3azwb

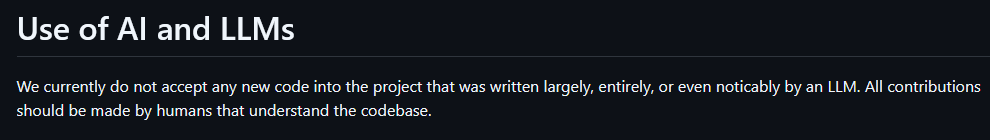

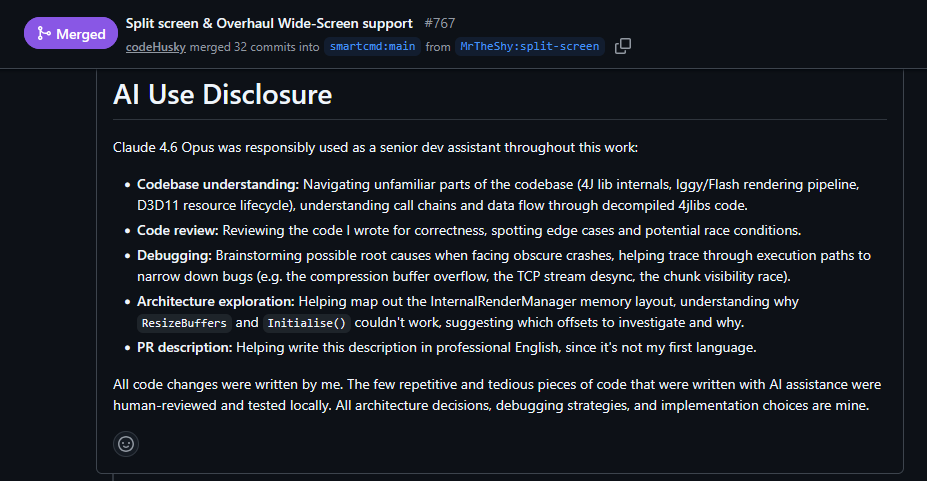

to the people contributing to t3.codes could you please... stop?

- do not file a 9k loc pr that adds 7 features on top of what you advertised in the title.
- ANY ui change should have before / after screenshots, idk how this isn't common knowledge.
- and for the ones adding providers, i'd just wait for @jullerino / @theo to add them.

if you want to make things right:

- first open an issue with something you'd like changed / added with clear examples of how it'll work / look, also attach examples from other open source apps that do that specific thing well
- something as simple as changing the wording "open pr" to "view pr"
- removing a stray dot in the ui for the working animation

and most important of all... make it easy to review