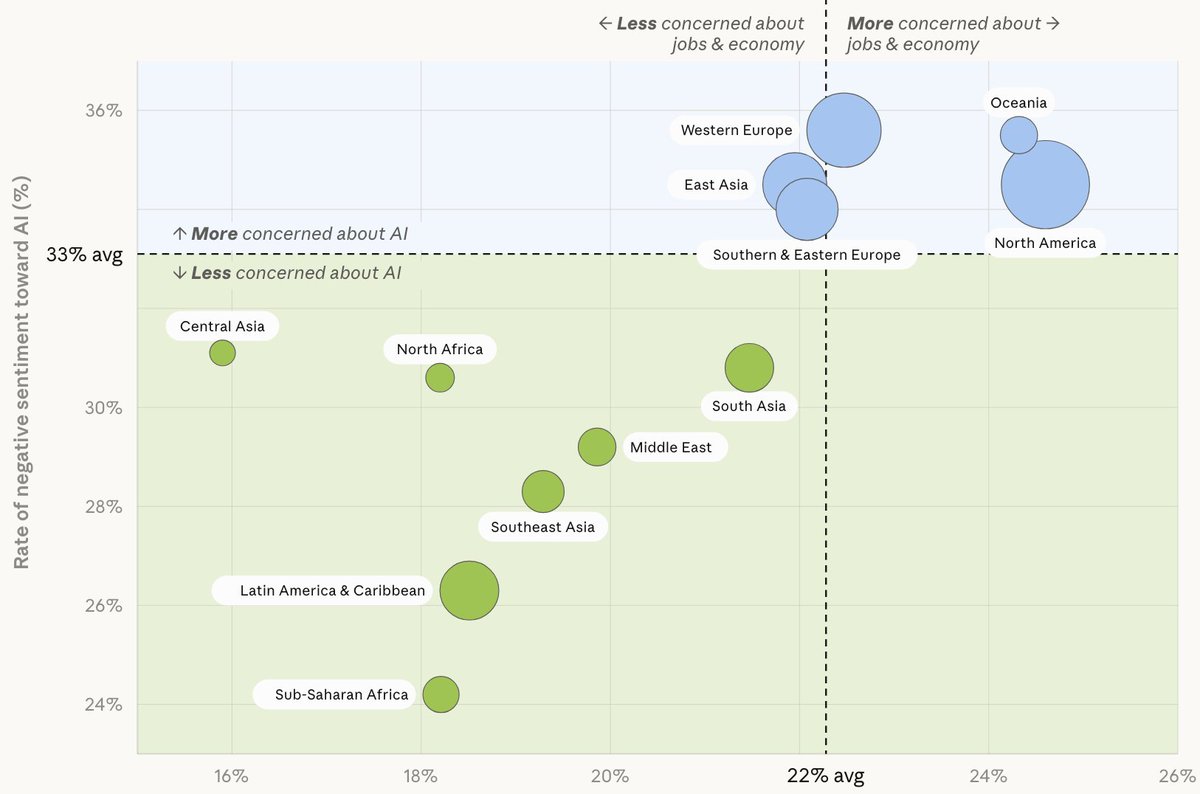

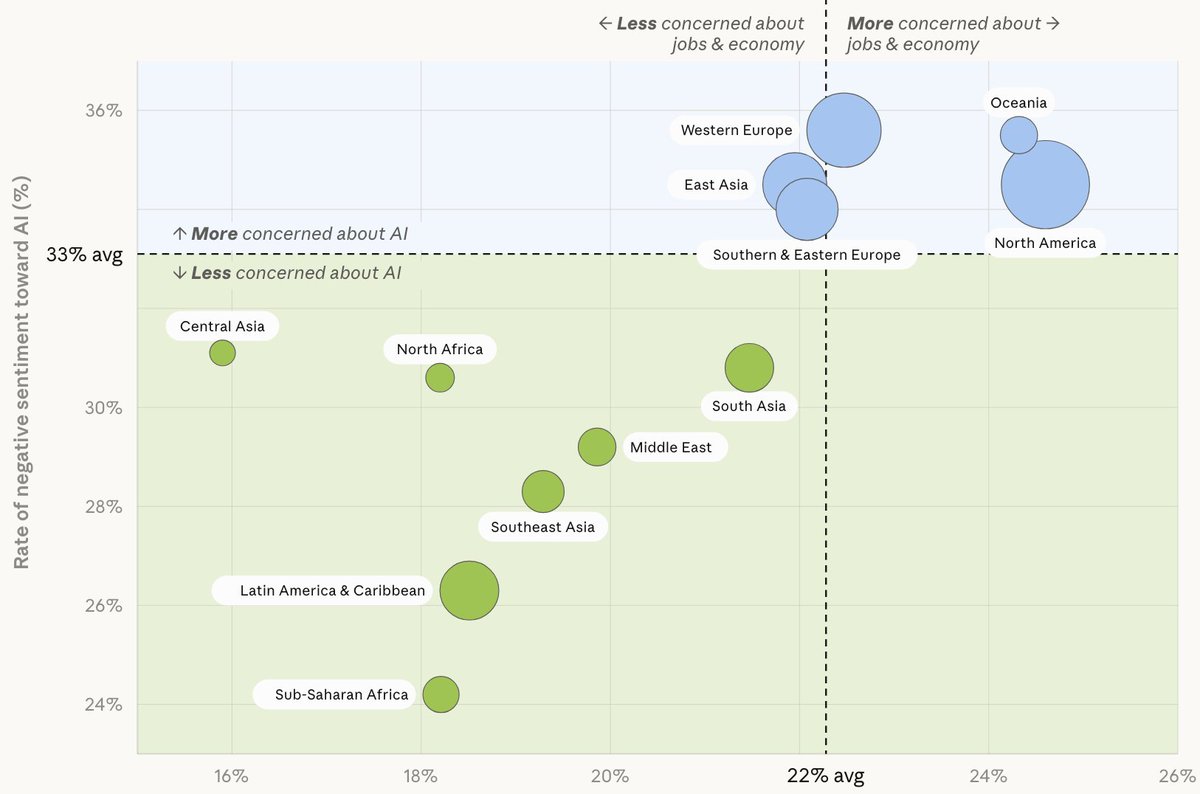

We invited Claude users to share how they use AI, what they dream it could make possible, and what they fear it might do. Nearly 81,000 people responded in one week—the largest qualitative study of its kind. Read more: anthropic.com/features/81k-i…

Wade Dorrell

22.1K posts

@waded

Quality, human factors, software, gardening, denominators, late-stage dadops. Ex MSFT, data-driven startups. Idaho lifer, Lego, LLAP, LLTBTB.

@DBurkland @pbeisel It’s in testing right now. Wide release in a few weeks.

I didn’t think this would stimulate as much conversation as it did. Most people are okay with waiting 10-15 minutes for 10-20% charge because it gives them a chance to grab food, use the bathroom, etc. Does Supercharging need to be as fast as pumping gas?

Everyone commenting on this video is talking about FSD. NO, it was not FSD, it was Autopilot. Very different driving behaviours. And if you don’t know what the difference is, you are not qualified to provide analysis on the video. $TSLA

Tesla fans using the “4-second disengagement” as a gotcha are missing the forest for the trees. Yes, the driver was technically in control of the vehicle at the moment of impact. But she was in control because FSD was already failing by driving too fast ahead of this sharp turn: it was heading straight into a concrete barrier at highway speed with no sign of correcting. Everyone who has frequently used FSD or Autopilot and paints this 4-second disengagement as a “gotcha” moment is being disingenuous, and that includes Elon Musk. I have tens of thousands of miles on FSD, and I’ve experienced the system coming too fast into a turn at least half a dozen times. We’ve said this before and we’ll keep saying it: the problem with FSD isn’t what happens when the driver is paying attention and the system works. The problem is what happens when the system gives you every reason to trust it, and then suddenly doesn’t work. The driver has to recognize the failure, assess the situation, decide on a correction, and physically execute it, all in less time than the system needs to create the danger. Musk and Tesla’s propagandists can point to the logs all they want. The video shows what actually matters: FSD approaching a standard highway curve at full speed with zero indication it was going to navigate it. That’s the failure. Everything that happened after, including the panicked disengagement, is a consequence of that failure. The framing that this was “manual driving, not FSD” is technically true for the final 4 seconds and deeply dishonest about the full sequence of events. It’s exactly the kind of liability shell game that courts are increasingly rejecting, as that $243 million verdict makes clear. Tesla created the system, sold it as “Full Self-Driving,” and profits from the ambiguity. At some point, it has to own the consequences.

I normally don't bother armchair quarterbacking these situations. But watching the Tesla Stans come into the comments is funny. Elon said Autopilot (FSD?) was disengaged 4s before the impact, but if you watch the video, the car looks like it was already going way too fast at that point. Am I missing something?

Amazon recently won a preliminary injunction against Perplexity’s agentic browser, blocking it from accessing Amazon accounts even when users authorized the agent. The opinion is heavily CFAA-based and could have big implications for AI agents and platform liability if it survives on the merits. I, for one, don't want to see the CFAA's scope overexpanded, particularly to something like agent handoffs. But a lot depends on how broadly the court draws the line at the merits stage.