Siyin Wang @ICLR 2026

@wang_siyin

PhD Student at Fudan University #NLProc #LLM

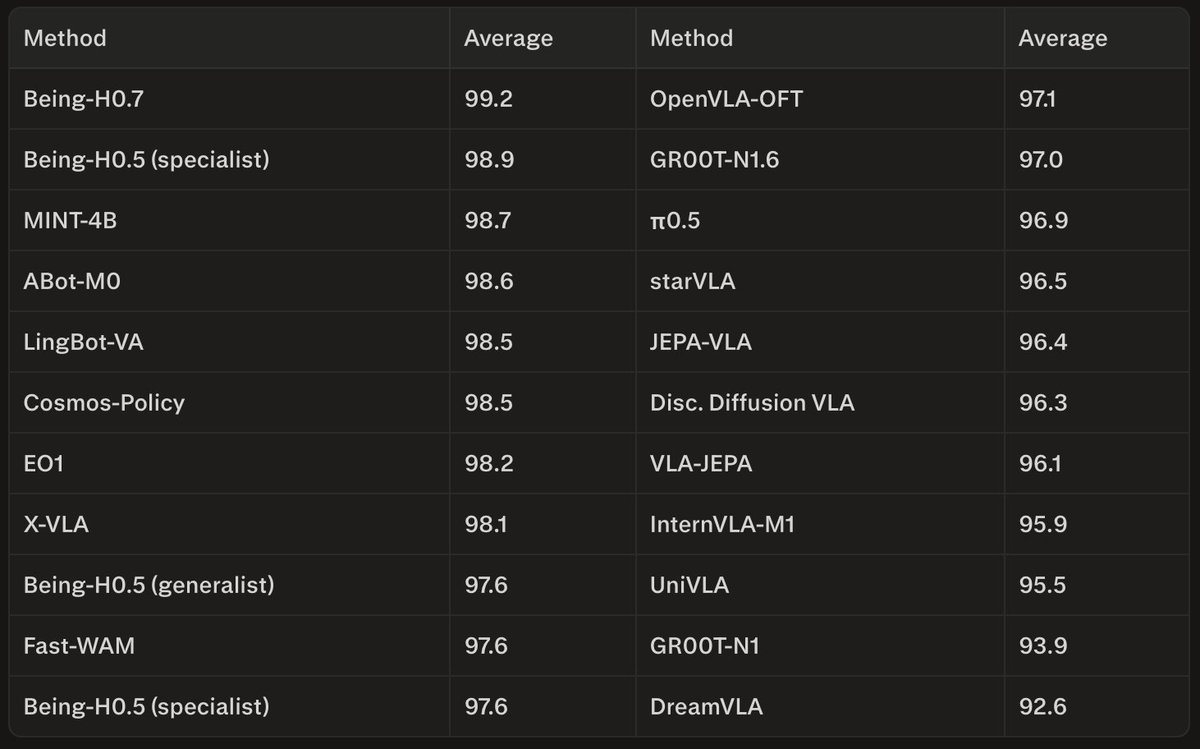

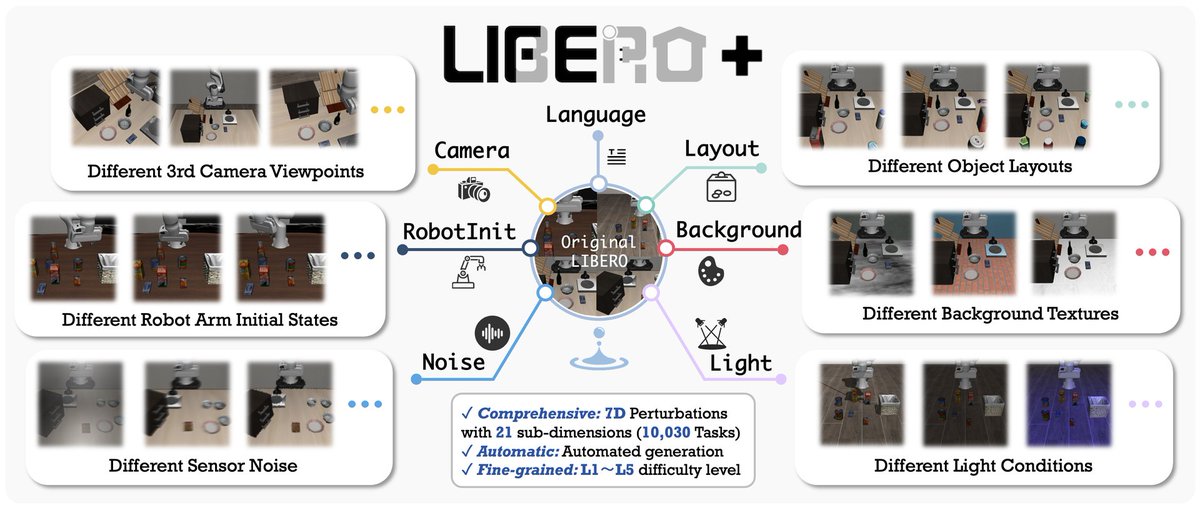

🤯 Shocking findings from our new LIBERO-Plus benchmark for VLA robustness! 💡 Key Insight: High LIBERO scores ≠ strong models. 🔗 Paper: huggingface.co/papers/2510.13… 🌐 Page: sylvestf.github.io/LIBERO-plus 💻 Code: github.com/sylvestf/LIBER… ⭐ Star us & 🚀 upvote! #VLA #Robotics 1/8

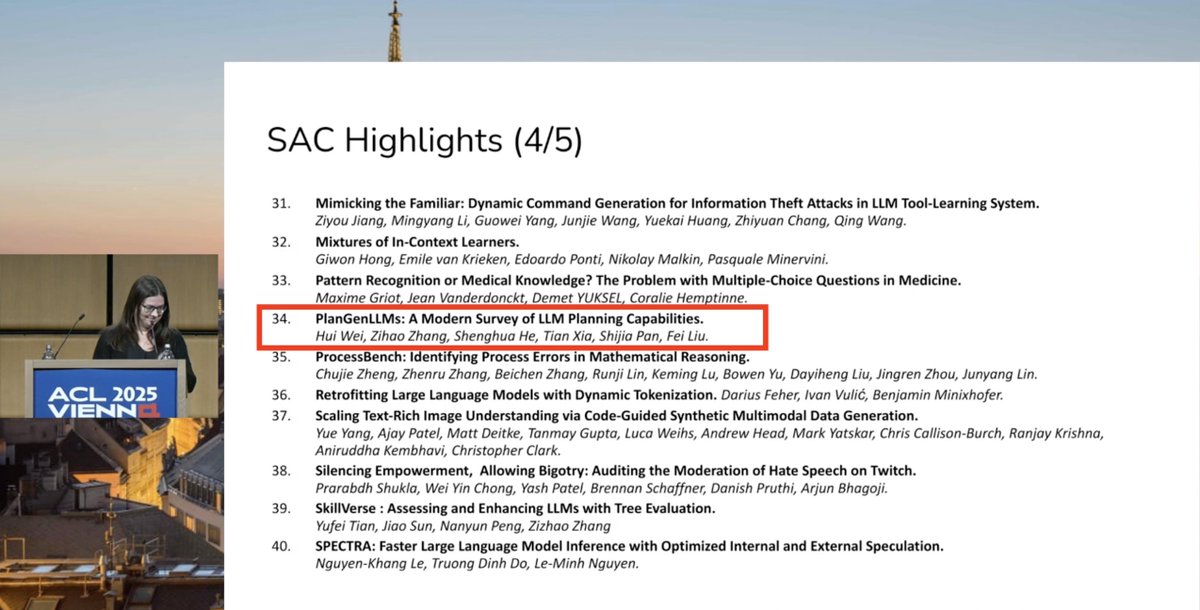

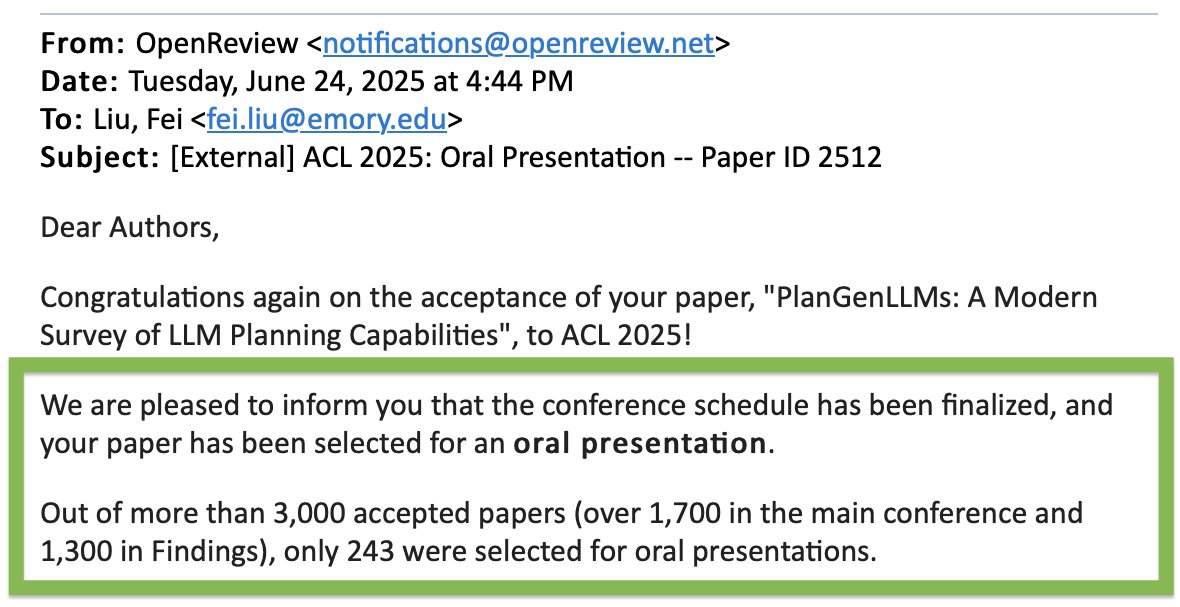

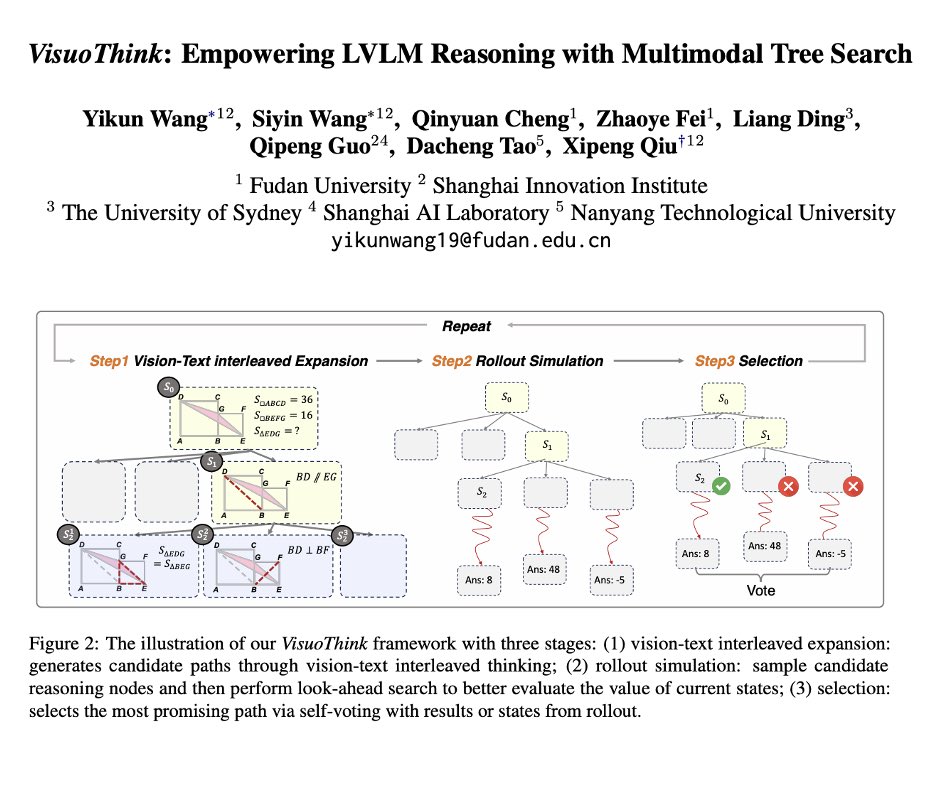

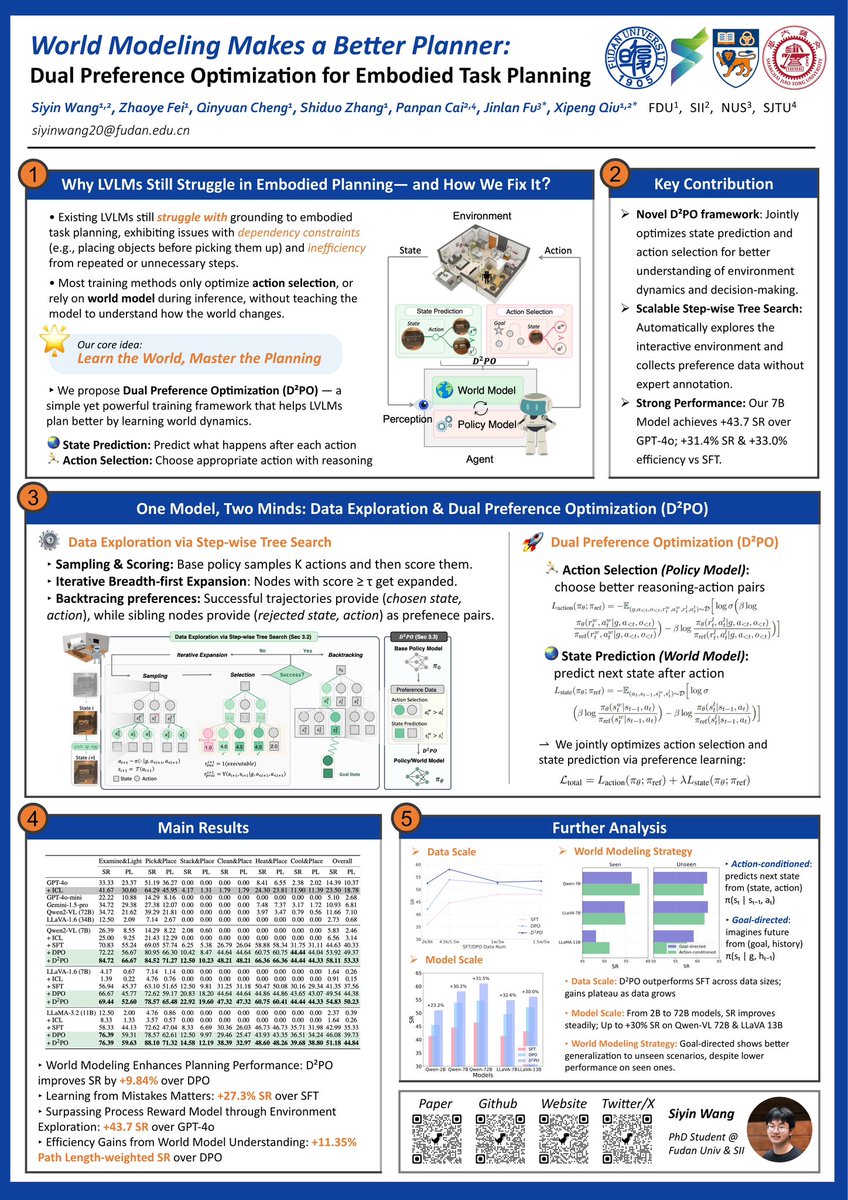

Thrilled to share our TWO papers accepted to #ACL2025 Main Conference! 🥳🎉 🎨VisuoThink: Empowering LVLM Reasoning with Multimodal Tree Search 🌏World Modeling Makes a Better Planner: Dual Preference Optimization for Embodied Task Planning #AI #MultimodalLearning #worldmodel

🎙️ Come try MOSS-TTSD! When we first heard our AI voices naturally chatting and even interrupting each other, the shock was indescribable. This isn't cold TTS anymore - it's dialogue with real warmth. Try it online! huggingface.co/spaces/fnlp/MO…

🚀 New work: OpenMOSS Embodied Planner-R1 - A step toward AI self-improvement in interactive planning! We've developed an RL framework where LLMs learn to plan through autonomous environmental exploration - no human demonstrations needed. 🤖 🧵 Thread below 👇

VLMs for embodied agents just got a major upgrade. Introducing World-Aware Planning Narrative Enhancement (WAP) — a framework that gives vision-language models true environmental understanding for complex, long-horizon tasks. Key upgrades: 🧠 Visual modeling 📐 Spatial reasoning 🔧 Functional abstraction 🗣️ Syntactic grounding

Learning about World Models, Understanding, Modeling and Scaling at @iclr_conf this morning proved not quite realistic! Shouldn’t the organizers have guessed this would be pretty popular in 2025?

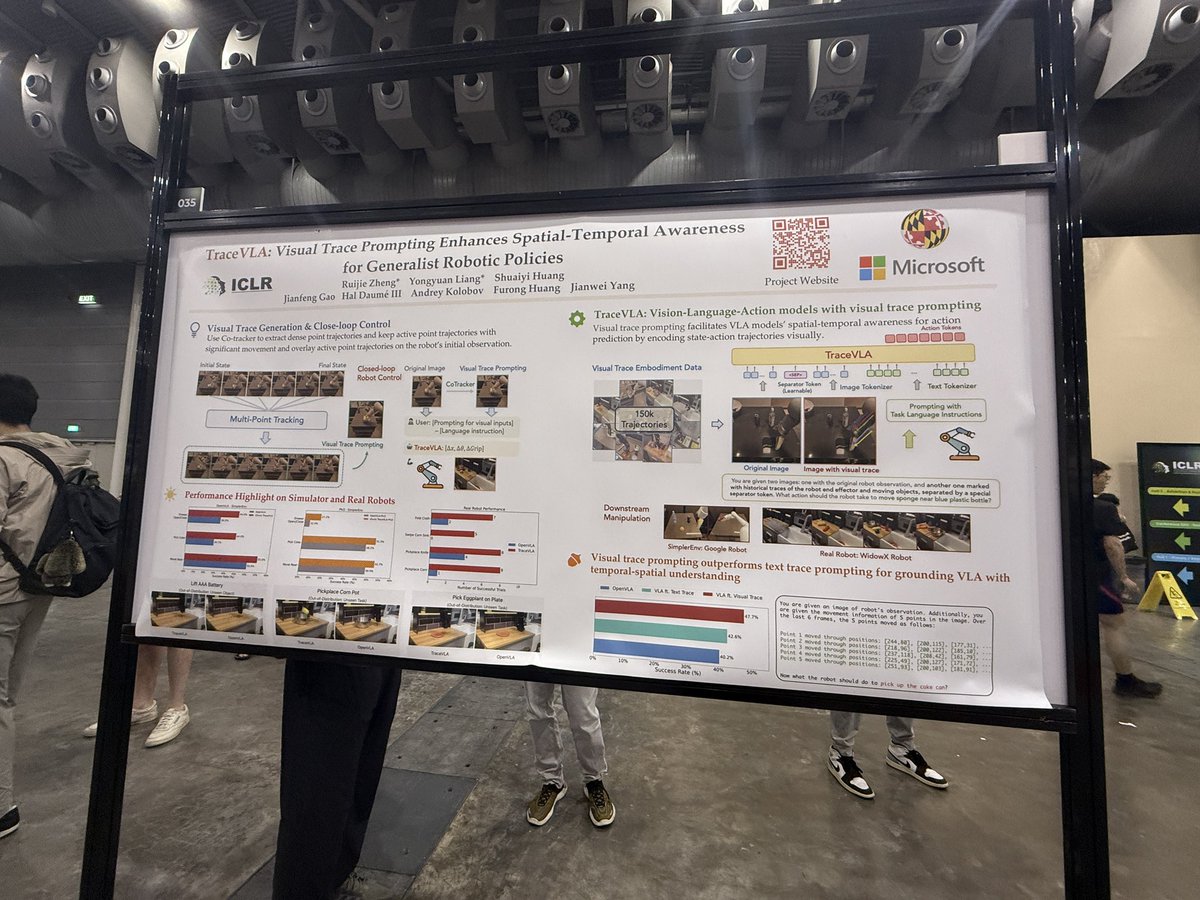

Introducing TraceVLA: a fully open-source Vision-Language-Action model reimagining spatial-temporal awareness: tracevla.github.io ✨ 3.5x gains on real robots, SOTA in simulation 💡 Fine-tunes on just 150K trajectories ⚡ Compact 4B model = 7B performance