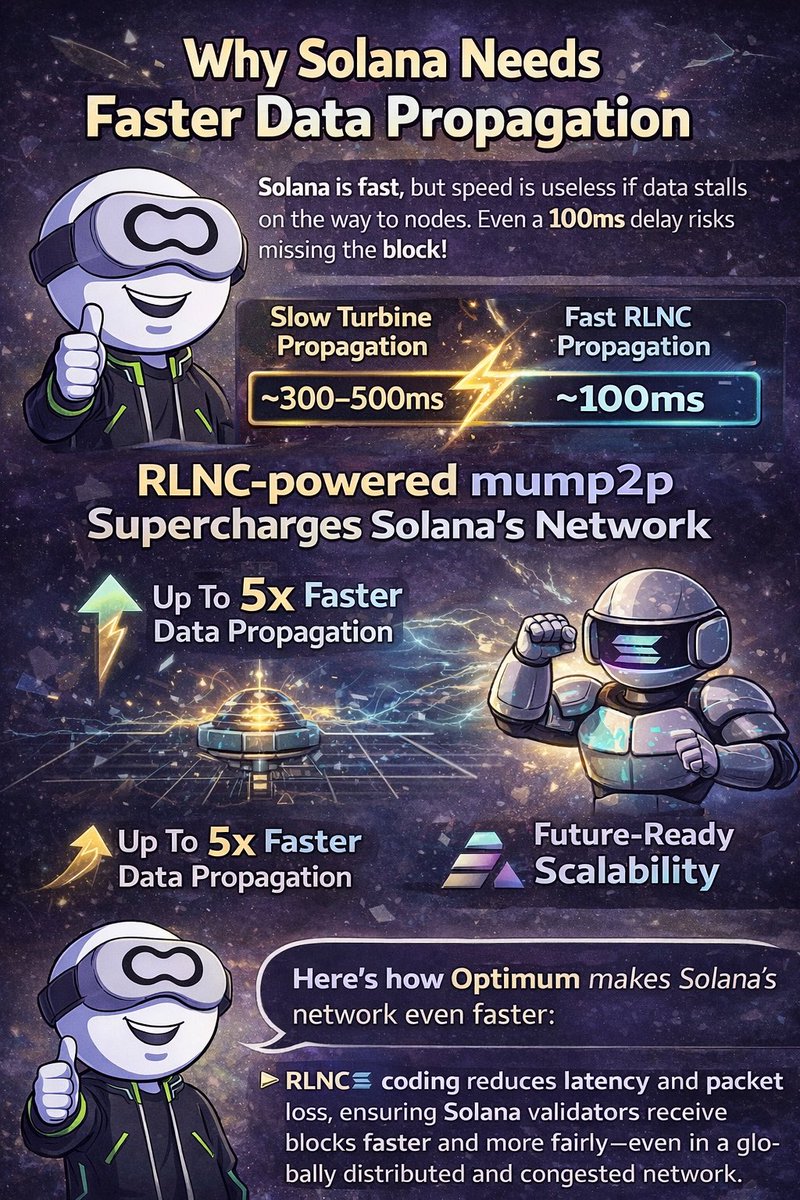

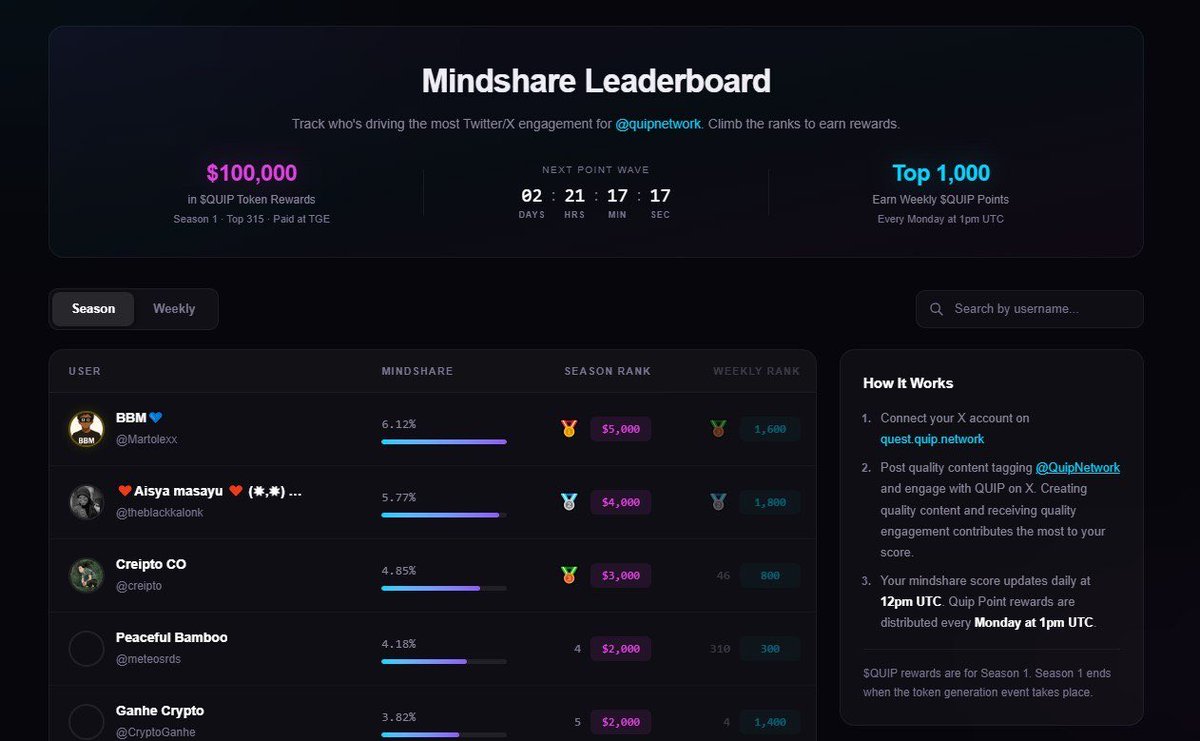

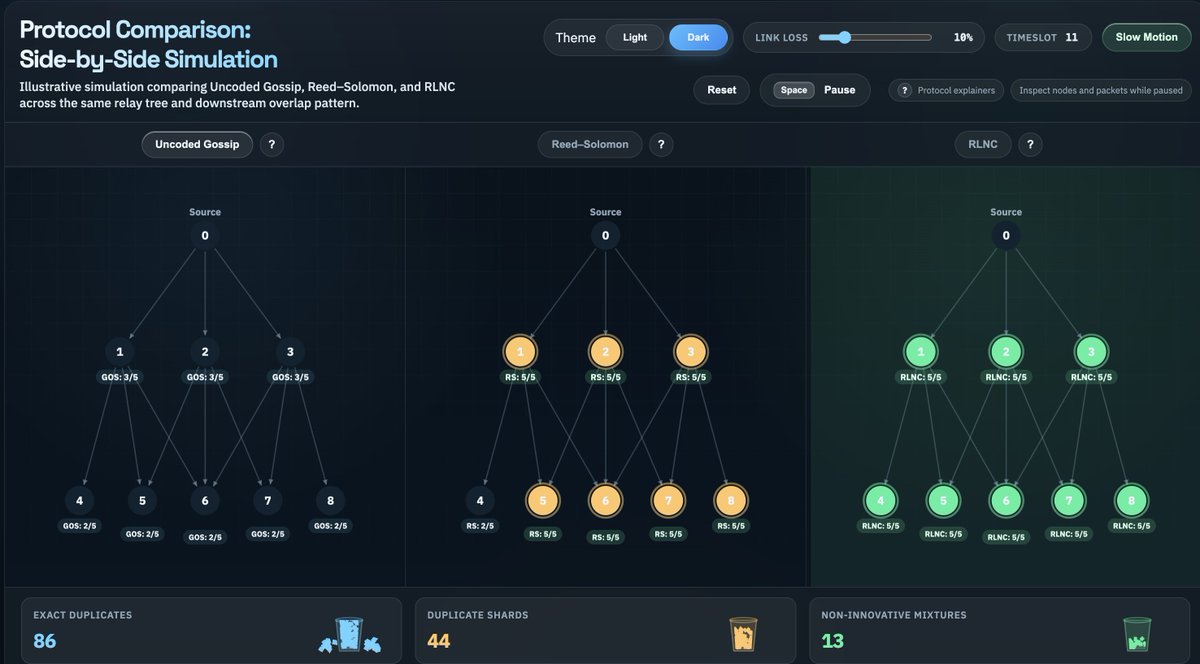

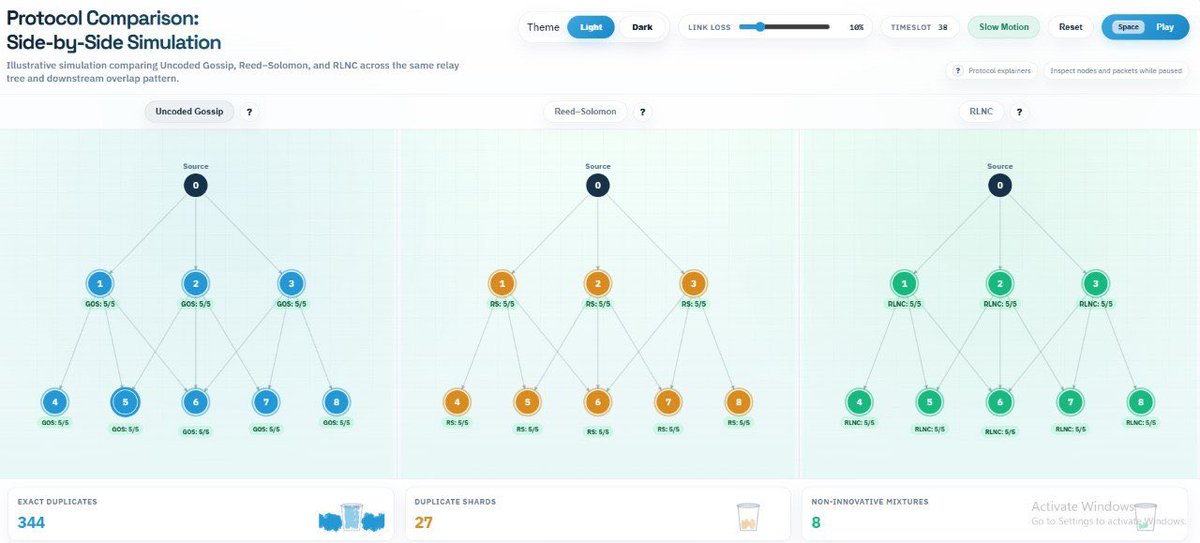

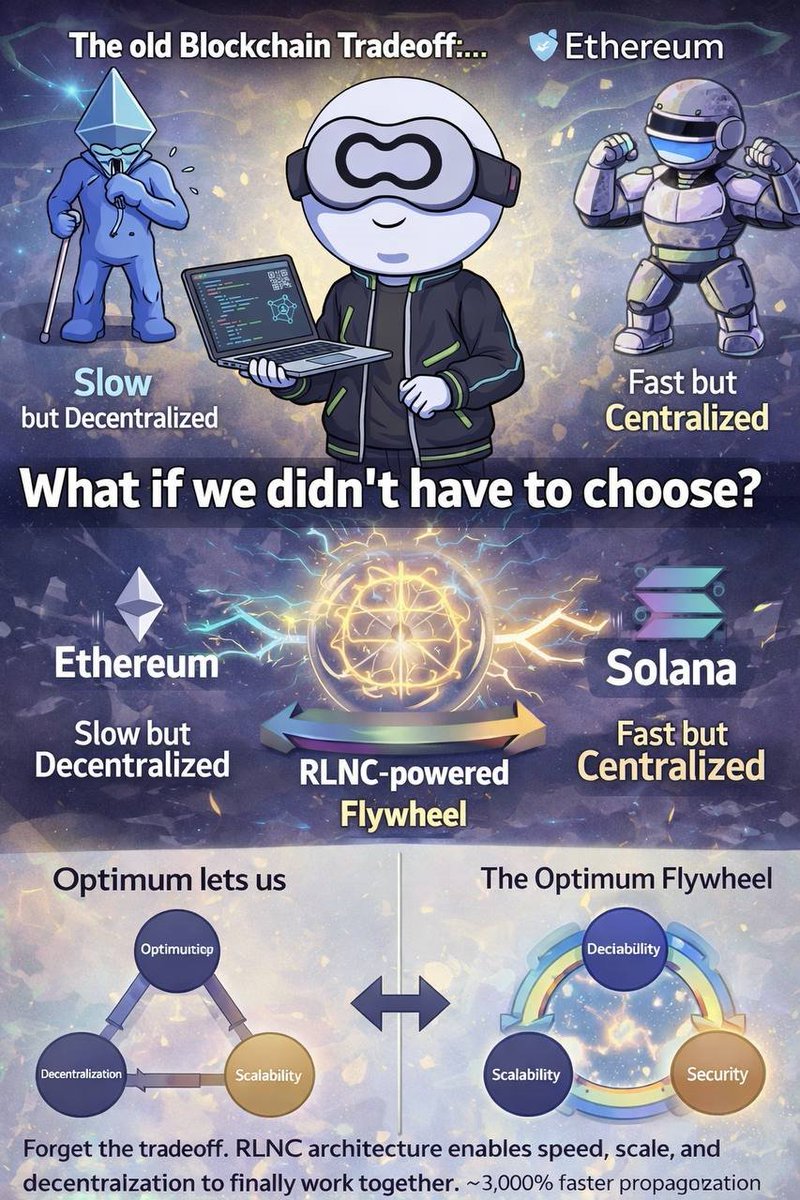

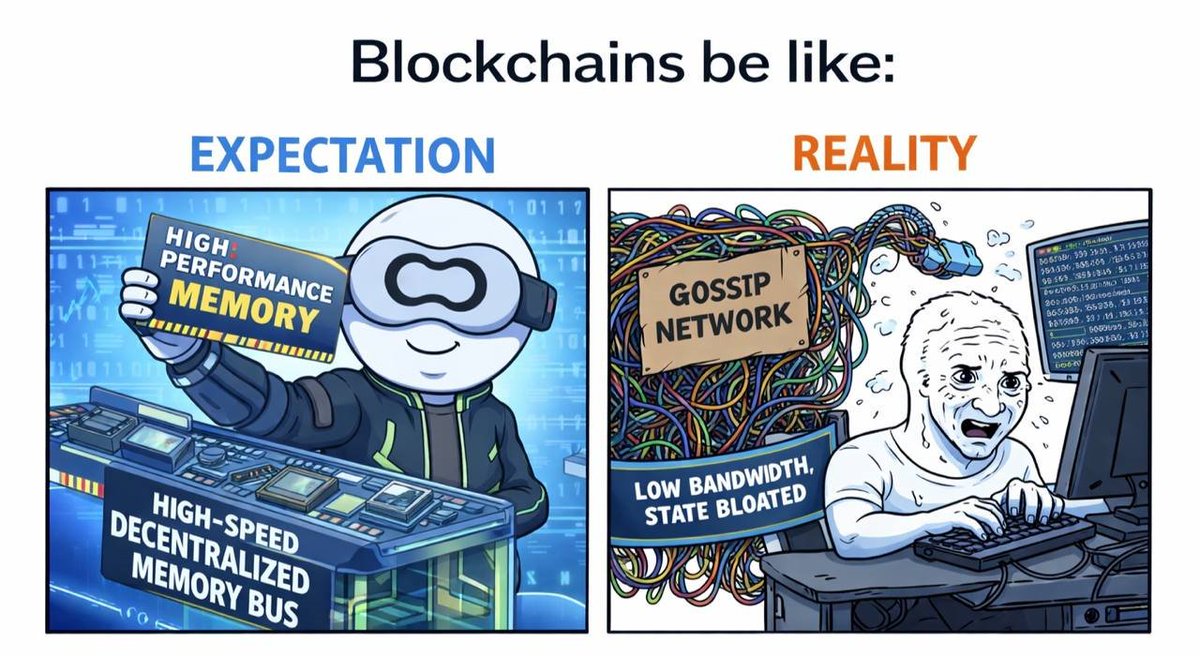

Most blockchains focus on execution and consensus. But one critical layer is often overlooked: memory.

That’s the problem @get_optimum is tackling. Optimum is building the first decentralized, high-performance memory layer for Web3: infrastructure designed to dramatically improve how blockchain data is moved, stored, and propagated across networks.

Instead of relying on inefficient gossip protocols and heavy node replication, Optimum uses Random Linear Network Coding (RLNC), a technology developed at MIT, to encode and transmit blockchain data more efficiently.

The result:

• Faster block propagation
• Lower bandwidth usage
• Higher validator performance
• Better scalability for L1 and L2 networks

One of the project’s first products, OptimumP2P, acts like a memory bus for blockchains, helping networks move data faster and more reliably across nodes.

And the ecosystem around Optimum is already gaining traction:

• $11M seed round led by 1kx, with participation from Animoca, Spartan, Robot Ventures, and others
• Partnerships with major validator operators such as Kiln, Everstake, and P2P.org

While many projects focus on applications, Optimum is building core infrastructure. If blockchains are the world computer, then Optimum is building its missing memory layer.
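
For readers curious what RLNC looks like in practice, here is a minimal sketch of the general idea, not Optimum's or OptimumP2P's actual implementation. It assumes the simplest possible field, GF(2), where "random linear combination" just means XOR-ing a random subset of chunks; real deployments typically use a larger field such as GF(2^8). The names `encode`, `decode`, and the sample `block` are hypothetical and chosen only for illustration.

```python
import random


def encode(chunks, rng):
    """Build one coded packet: a random XOR combination of equal-length chunks.

    Returns (coeffs, payload), where coeffs[i] is 1 if chunk i was mixed in.
    """
    k = len(chunks)
    coeffs = [rng.randint(0, 1) for _ in range(k)]
    if not any(coeffs):
        coeffs[rng.randrange(k)] = 1  # skip the useless all-zero combination
    payload = bytes(len(chunks[0]))
    for c, chunk in zip(coeffs, chunks):
        if c:
            payload = bytes(a ^ b for a, b in zip(payload, chunk))
    return coeffs, payload


def decode(packets, k):
    """Recover the k original chunks by Gauss-Jordan elimination over GF(2).

    Returns None if the received packets do not yet span all k chunks.
    """
    rows = [(list(c), bytes(p)) for c, p in packets]
    for col in range(k):
        # Find a not-yet-used row with a 1 in this column.
        pivot = next((i for i in range(col, len(rows)) if rows[i][0][col]), None)
        if pivot is None:
            return None  # rank deficient: need more packets
        rows[col], rows[pivot] = rows[pivot], rows[col]
        p_coeffs, p_payload = rows[col]
        # Clear this column from every other row.
        for i in range(len(rows)):
            if i != col and rows[i][0][col]:
                r_coeffs, r_payload = rows[i]
                rows[i] = (
                    [a ^ b for a, b in zip(r_coeffs, p_coeffs)],
                    bytes(a ^ b for a, b in zip(r_payload, p_payload)),
                )
    return [rows[i][1] for i in range(k)]


# Hypothetical 16-byte "block" split into k = 4 chunks of 4 bytes each.
k = 4
block = b"block-data-0123!"
size = len(block) // k
chunks = [block[i * size:(i + 1) * size] for i in range(k)]

rng = random.Random(0)
received = []
decoded = None
while decoded is None:
    # Any coded packet can help; the receiver does not need specific ones.
    received.append(encode(chunks, rng))
    decoded = decode(received, k)

assert b"".join(decoded) == block
```

The point of the sketch: a node does not have to wait for any particular chunk. Any set of coded packets that is linearly independent and covers all `k` chunks is enough to rebuild the block, which is the property that makes coded propagation attractive compared with forwarding whole blocks through gossip.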