wen tao ten thou

@wentaotenthou

Vanta Subnet 8 Bull

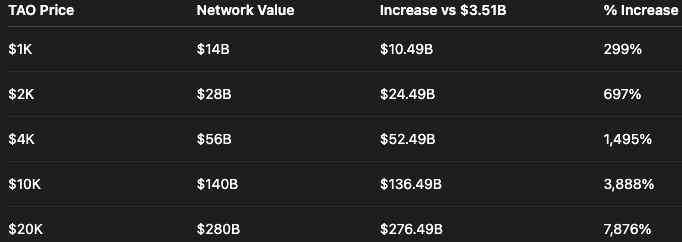

While exploring the bittensor subnets, one idea kept getting clearer to me: @leoma_ai - Subnet 99 is not trying to be “just another bittensor subnet.” It is aiming to become the video generation company that proves bittensor can compete with the biggest closed AI labs.
Thanks to @MarkCreaser, CEO of DSV and investor in @leoma_ai - Subnet 99, and @vex020900, CTO of Vex|Rendix, for taking me through the vision. Also, huge respect to the rendix team for building the leoma subnet. After hearing the direction directly, it became very clear to me why this matters.
One thing I want to highlight is vex. The CTO of leoma is a giga brain in AI. From what I have seen, his technical knowledge is on another level, and I believe his skill, vision, and execution will be a major reason leoma can actually build what it is aiming for.
Today, @leoma_ai - Subnet 99 miners are training and fine-tuning wan 2.2, one of the strongest open-source video generation models in the world, but that is only the starting point.
During the call, the team shared that leoma is preparing an initial free-version launch on April 30. This is not the final vision. It is the first public product step: showing the community that leoma has built the infrastructure to sync with the top miner’s model and turn subnet competition into a real user-facing product. That is what matters most, because a subnet should not only produce emissions. It should produce a product.
The real goal is much bigger: a 25B+ leoma-native video generation base model, which will be built by the rendix team, released as open source, and then improved through bittensor’s miner competition.
This is where things become serious. Building a 25B+ video model will cost millions of dollars. GPUs, training infrastructure, datasets, storage, inference optimization, evaluation, and scaling are not small expenses. This is the level of infrastructure normally reserved for google, openai, xai, runway, and other giant AI labs.
That is why I believe funding leoma’s infrastructure matters.
⇢ Without serious infrastructure, there is no serious model.
⇢ Without serious model training, there is no real challenge against closed-company SOTA.
⇢ Without subnets willing to take that level of challenge, bittensor will never prove its full potential.
So yes, I will help support leoma’s infrastructure push. This is not just a show of support, but a way to help give leoma the resources it needs to build the 25B+ base model, support miner fine-tuning, scale evaluation, and move toward a real video generation product.
Remember, no bittensor subnet has ever pushed past the largest open-source models in its field and gone directly after closed-company SOTA; leoma is aiming to do exactly that.
⇢ Not by writing another roadmap.
⇢ Not by pretending decentralization alone is enough.
⇢ But by combining capital, infrastructure, miners, validators, and open-source development around one objective: THE BEST MODEL WINS.
If leoma can deliver video quality competitive with closed AI companies while offering generation at 3x–5x lower than market cost, that is not just a win for leoma. That is a proof-of-concept moment for all of $TAO.
Imagine a bittensor subnet producing video quality that competes with the biggest closed labs, while being cheaper, open, and continuously improved by miners. That is the kind of subnet bittensor needs. That is the kind of subnet that changes the narrative.
⇢ I am not promising that @leoma_ai - Subnet 99 becomes the top subnet overnight.
⇢ I am not promising they beat every closed AI lab tomorrow.
⇢ But I believe this is one of the most important attempts happening on bittensor right now.
TIME WILL TELL. But if leoma pulls this off, it will not just be a game changer for leoma. It will be a game changer for bittensor.
To all $TAO holders: I strongly suggest you take a serious look at leoma.
Read their papers, study the vision, and understand why this subnet could matter for the future of bittensor: leoma.ai/Leoma_Whitepap… leoma.ai/Leoma_Litepape…

Last week at the @YumaGroup Summit I had the opportunity to present on The State of Bittensor. That presentation is in the thread below. If you choose to read it, I'd ask that you keep the following three things in mind:
1. This is just one guy's view of what was the most relevant for a 25-minute talk; a difficult filter for such a dynamic industry
2. The slides were designed to supplement a talk; I've done my best to replicate what I recall of the talk in the accompanying X posts
3. The topic of the Summit was "The Tipping Point" - a candid assessment of what could lead to Bittensor's breakout success and what evidence we see of that today - which also thematically anchored this presentation
Let's dive in:

Venice Uncensored 1.2 is now live. Developed with @dphnAI, this model delivers the most uncensored version of Mistral 24B. Upgraded with vision support, a 4x larger context window, and stronger tool-use capabilities. Trained on Bittensor Subnet 4 @TargonCompute.

We've started working with some Bittensor subnets. The new Venice Uncensored 1.2 model was tuned on Targon/subnet4.

Manako × @PwC_France We've formed an alliance to bring physical AI to enterprise at global scale. PwC France will lean on Manako's Business Operations World Model, powered by @webuildscore, to turn their clients' existing camera networks into real-time systems of action. 1/5

“The bomb he dropped at the end:
- New algorithm for training "arbitrarily large" models on Bittensor
- Targeted to drop in the next week or two
- Positioning to go beyond what Templar did with dense 72B models
- Jon: "to actually compete, like if you look at xAI, they're training 10 trillion parameter models now... we need to sort of cross that chasm... I think there's a way to do it"”
And just like that, Templar will be just an old war story.

One team can't build the best AI. So we stopped trying. We built an open arena instead. Here's what happened👇