will ye

1.6K posts

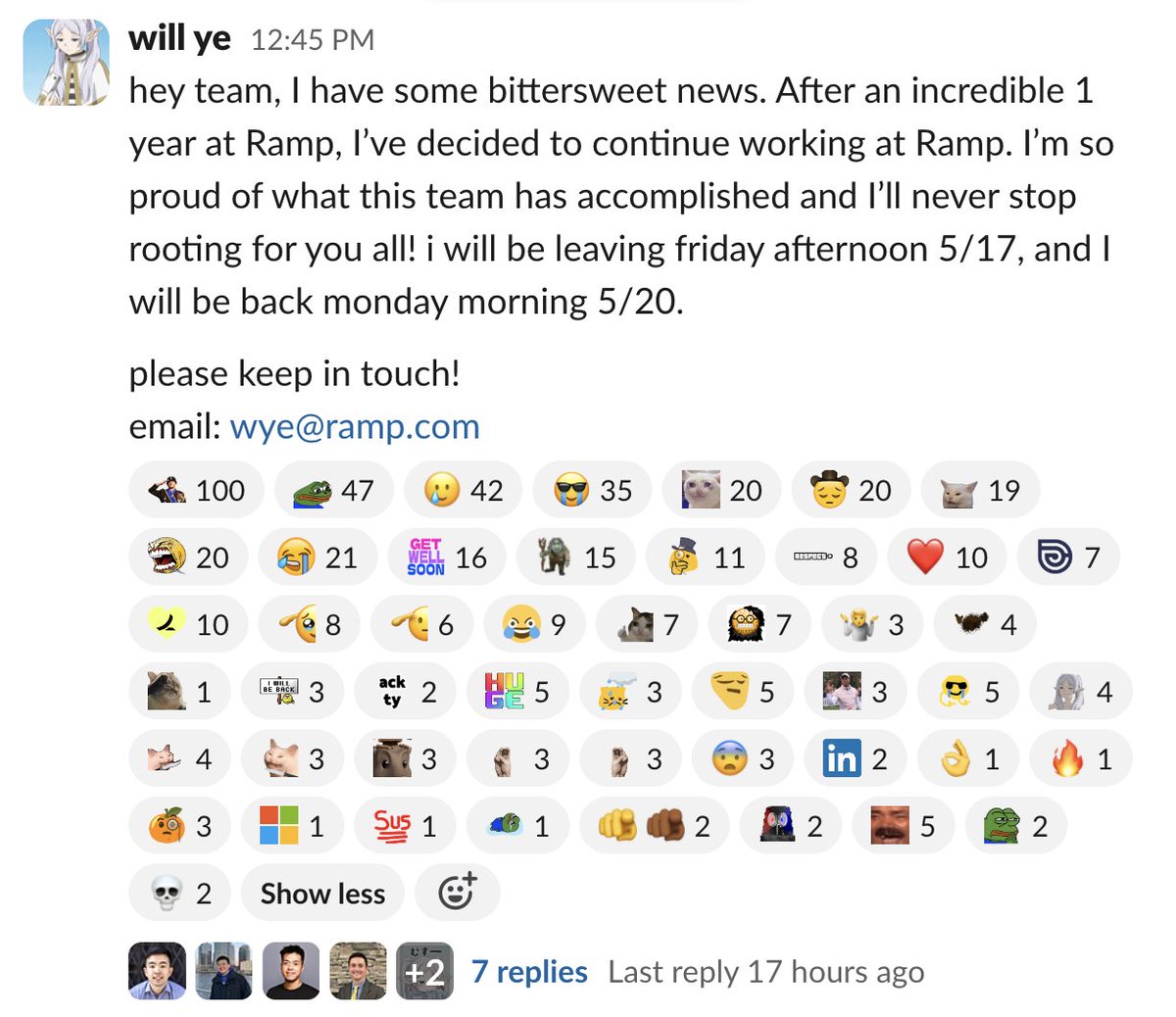

@will__ye

member of whimsical staff, ex-ramp applied ai

sf is so weird what do you mean theres a tinned fish party

Meet GC

Ever wanted to NAME A STREET? We’re auctioning off the naming rights to an actual alley in San Francisco. Highest bidder can name it whatever they want. Ends Tuesday at 1pm PT

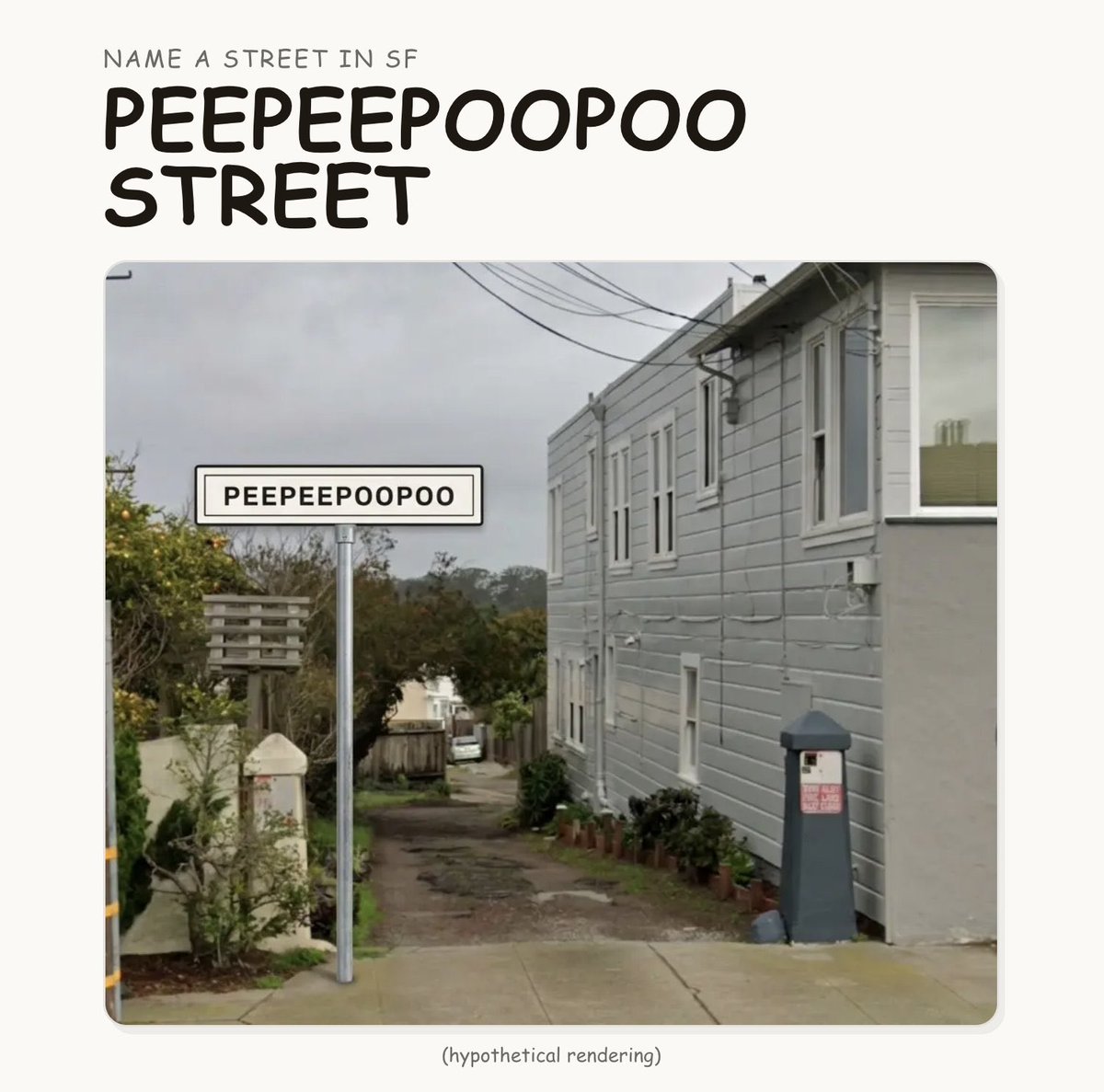

the naming rights for a street in san francisco are being auctioned off. but the top bidders are corporations naming it after themselves. lame! to fight this, we're crowdfunding PEEPEEPOOPOO STREET. less tech billboards in sf, more whimsymaxxing! we only have 4 days to do something really stupid. peepeepoopoostreet.com

I officially founded my first startup called Komorebi Labs LLC! Komorebi (木漏れ日) is a Japanese word describing sunlight that filters through the leaves of trees.

He finally found his match 💚 @waltergoat