Pinned Tweet

Xpicker2

2.4K posts

@xpickr

Automatically pick a random winner from any X post 🤖 the X picker 🔥 Follow us for a bonus point. AI acceleration incoming.

Joined August 2022

552 Following, 7.1K Followers

Xpicker2 retweeted

Open Source's big problem.

Last night I went to a Y Combinator party in San Francisco and met an entrepreneur who is making a top Open Source AI model.

He told me it is very hard to make money in open source. Yeah, it is cool being popular, he told me, but figuring out how to make a business out of it is proving to be very difficult.

The Chinese are pounding the price into the ground with their open source models. Which makes it tough.

In the old world of Open Source you could make money through consulting, services, etc., like Red Hat did.

But in this new world, he told me, it's much harder to make a good business out of it.

Is anyone making a good business out of open source?

What would your advice be to the businesses that are trying to support Open Source?

Xpicker2 retweeted

@xpickr 2. Games, giveaways & contests

Right now it's raining 😁

Xpicker2 retweeted

@iruletheworldmo @karpathy @steipete @kris @dabit3 @PeterDiamandis @beffjezos @EXM7777 Haha reposted from 3 months ago.

Sometimes upgraded to frequently.

Xpicker2 retweeted

the best 19 accounts to follow in decentralized AI:

@karpathy = LLMs king

@steipete = built openclaw

@kris = AI + crypto literally

@iruletheworldmo = sometimes alpha

@dabit3 = leaped to full AI tech

@PeterDiamandis = futuristic AI vision

@beffjezos = AI & thermo

@EXM7777 = AI ops + systems king

@eptwts = AI money twitter king

@godofprompt = prompt king

@vasuman = AI agents king

@AmirMushich = AI ads king

@elder_plinius = 👀 rabbit hole

@0xROAS = AI UGCs king

@xpickr = engagement + AI giveaways

@AlexFinn = someone said he'll repost

@testingcatalog = news + wen new pfp

@kloss_xyz = systems architecture

@Hesamation = AI/ML king

follow them all and learn.

Who'd we miss?!

Xpicker2 retweeted

Oh interesting, that was exactly 1 year ago.

MOG pfp May 2025 ✅

Added @sama to the list. Took a year.

I hope the trend doesn't come back?

@iruletheworldmo

@garrytan

@koltregaskes

@beffjezos

@jordihays

Xpicker2 retweeted

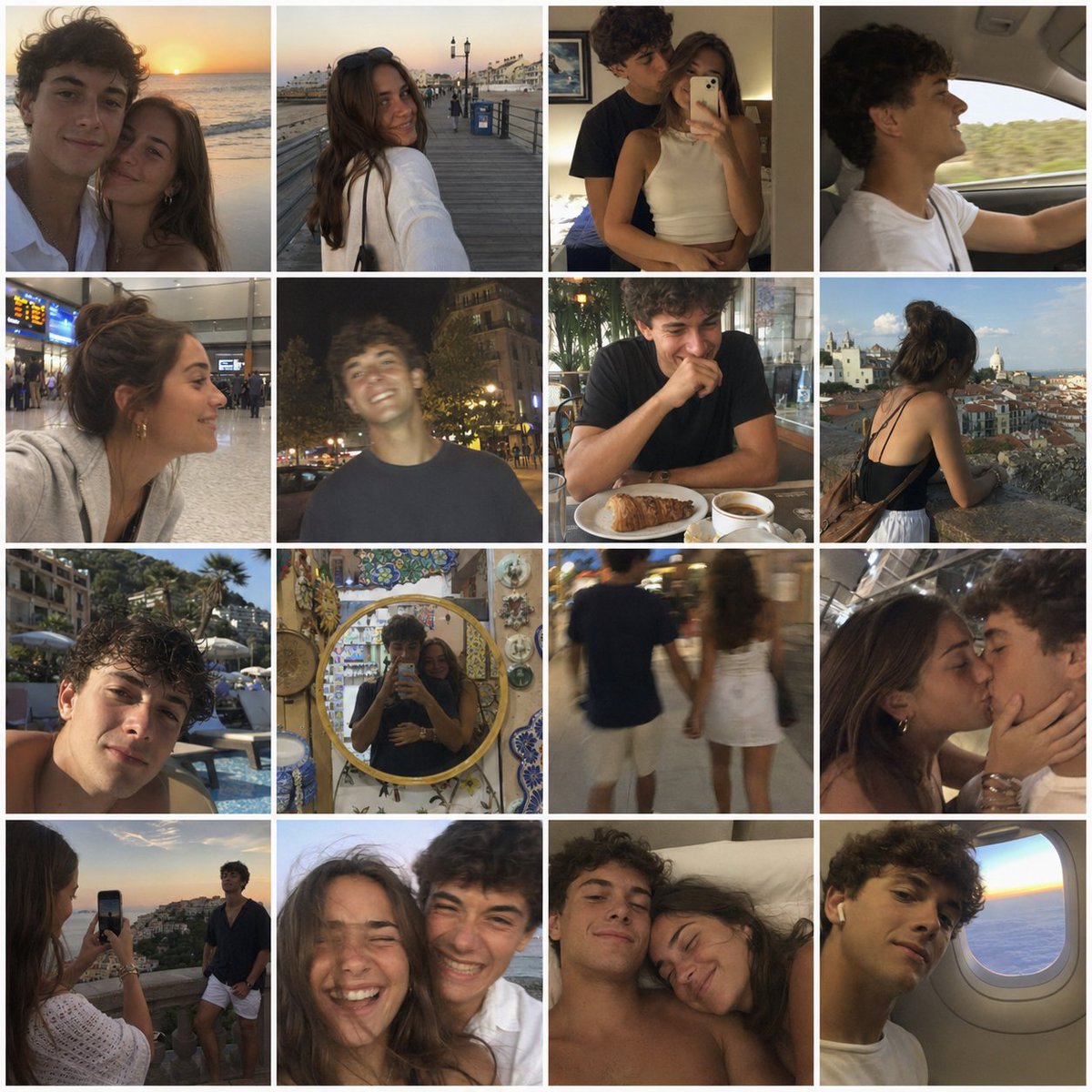

This is wild.

ChatGPT Images 2.0 just completely changed the AI image game.

These are not real 🤯

PROMPT: 4x4 grid of candid nostalgic photos shot with iphone of a young couple at vacation taking selfies of each other and together. Lots of camera shake, amateur framing, and emotional/vintage aesthetic.

Xpicker2 retweeted

wake up because this is the GREATEST time in history to start a company with TRILLIONS of dollars up for grabs over the next 10 years

1. consumer mobile is INTERESTING again for the first time since like 2017. apps can actually do things now.

do things. real things. book the flight, draft the contract, follow up with the lead, negotiate the rate, do things. we went from "tap to view" to "tap to deploy." the entire interaction model of software just flipped & most people haven't even registered it yet. OH, and the cost to create these apps is 1/100th of 2017.

2. HARDWARE is back on the table because you can shove Gemma 4 or DeepSeek onto a device that costs less than dinner & it runs locally with zero cloud costs. a year ago that sentence would have sounded insane. you can ship a physical product with a real brain in it now. the last time hardware was this accessible was the early smartphone era & that created a trillion dollar app economy from scratch.

3. literally EVERY category is open to be rebuilt AI-first. the incumbents know it & they're paralyzed. they can't move fast, because incumbents move slower than you (usually). that paralysis is your opportunity. build the app. build the SaaS. build the AI agent.

4. distribution is FREE. you can go from zero audience to 10,000 people who trust you in 90 days on X or YT or IG. your first 100 customers are sitting in your replies right now. the old playbook of "raise money, hire sales team, buy ads" is being lapped by a solo founder with a twitter account & a working demo. Oh, and you can use AI to automate a lot of it (ideas, research, AI avatars etc)

5. Idk about you but it feels like companies are doing LAYOFFS like it's the great depression and it's only getting started. No job is secure. So, building a side project that could turn into the main project is more important than ever.

6. the ENTIRE economy is being repriced in real time. the surface area for new companies has never been wider. the tools to build are free. the models are open source. the incumbents are running committees about their "AI strategy" while you could have already shipped.

and somehow the predominant response from most people is to watch youtube videos about it & go back to their 9-5.

not saying this is easy

not saying everyone will win

but im saying right now is a time worth trying

YOU ARE LIVING through a mass reshuffling of who owns what & who builds what. the last time this happened was the internet itself. before that, electricity.

this almost never happens.

& you're sitting there doing nothing about it?

wake up.
