Pinned Tweet

Bytez

5K posts

@Bytez

1 API key, 220,000+ AI models. We’re the largest inference API on the internet and power NeurIPS and CVPR to bridge the gap between AI papers and runnable code✨

Earth · Joined July 2019

155 Following · 1K Followers

@alejandrGZAX Appreciate it! DM us your email and we'll add you to the early access list; the first group gets in soon.

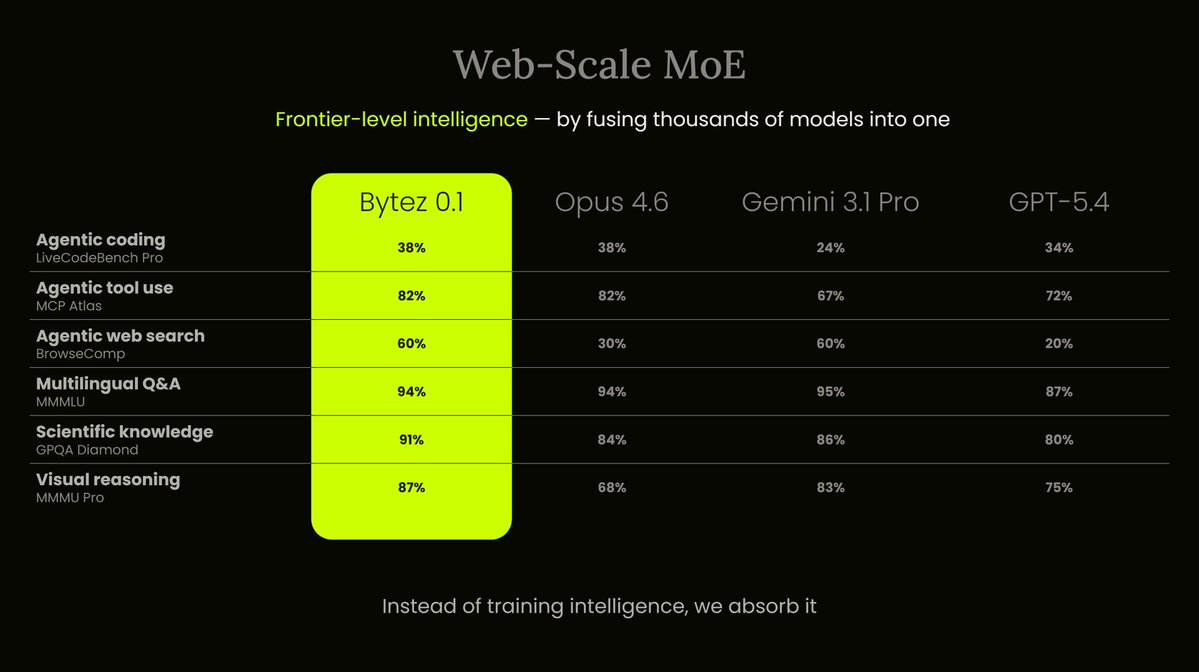

Bytez 0.1 beats Opus, Gemini Pro, and GPT-5.4 across benchmarks.

Achieved without spending millions on GPUs.

Karpathy recently said LLM ensembles are "under-explored."

He vibe-coded a weekend prototype to test the idea.

We've been building the production version for 2 years. Bytez 0.1 is a Web-Scale MoE — instead of training one massive model, we fuse thousands of models into a single intelligence.

Instead of training intelligence, we absorb it.

More benchmarks dropping soon.

What's a faster path to a v1 of AGI?

A) One massive model that tries to be an expert on everything

B) Thousands of experts fused into one

Bytez retweeted

.@illscience says the future of AI isn’t one model to rule them all, and explains why platforms that integrate multiple models will benefit most:

"I think we're going to need and rely on all of the models."

"It's sort of like if you have a team of people... if you have five people, they could all do a basic set of things pretty capably."

"But then they all have their specializations. Maybe one of them is really good at closing a customer who doesn't want to sign the deal, and one of them is really good at culture and getting the best out of the team."

"There are some areas in which they are going to build apps, and that will be a threat to app companies. But there are many areas in which app companies are advantaged. Cursor and Krea are great examples of this—products where you benefit from being multi-model."

"When you actually use a creative tool, you don't want to just use Nano Banana, you want to have access to OpenAI, Nano Banana, Kling—all of them—Qwen, you name it. So using a single interface to access all the models is powerful."

Anish Acharya on BILLIONS with @GuillaumeMbh

Bytez retweeted

Last week, we launched Palatial PhysReady, and the response blew us away. Over 100 companies signed up for our waitlist, and the team had a blast watching everyone tag @PalatialSim with their creative prompts.

We generated over 100 assets in one day; below are a few of the highlights.

We're looking forward to giving everyone access to the platform and API, wave 2 goes live on Wednesday! Sign up at palatial.cloud/join

Bytez retweeted

A child consumes more data in 1 month than any LLM has ever seen. Embodied agents learn by doing, but the data that teaches them is tactile, sensorial and causal.

Such data does not exist.

To make physical AGI possible, we need to generate this new data at an industrial scale.

Enter Palatial: automated infrastructure that converts raw data into sensory rich playgrounds for robots to learn in.

Today, we’re unveiling Palatial PhysReady, the first automated sim asset generator (try it ⬇️) [1/5]

Bytez retweeted

The future of AI is agentic, and America is leading the way to make it secure and interoperable.

A new AI Agent Standards Initiative is launching this week @NIST to drive industry-led standards and open protocols that build trust and advance innovation. nist.gov/news-events/ne…

Bytez retweeted

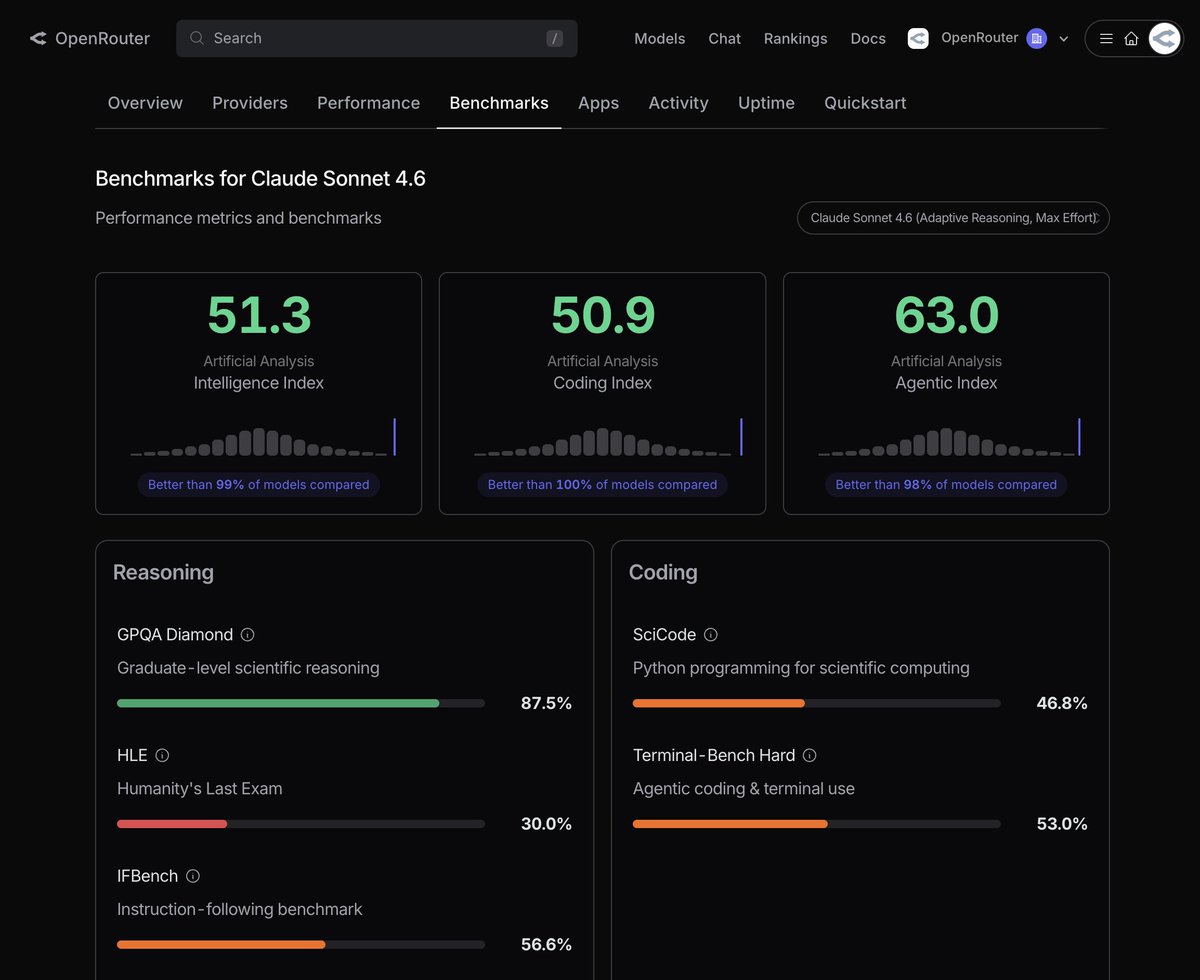

NVIDIA has just released Nemotron 3 Nano, a ~30B MoE model that scores 52 on the Artificial Analysis Intelligence Index with just ~3B active parameters.

Hybrid Mamba-Transformer architecture: Nemotron 3 Nano combines the hybrid Mamba-Transformer approach @NVIDIAAI has used on previous Nemotron models with a moderate-sparsity MoE architecture, enabling highly efficient inference, particularly at longer sequence lengths

Small-model improvements: with 31.6B total and 3.6B active parameters, Nemotron 3 Nano scores 52 on our Intelligence Index, in line with OpenAI’s gpt-oss-20b (high). That is a 6-point lead over the similarly sized Qwen3 30B A3B 2507 and a 15-point improvement over NVIDIA’s previous Nemotron Nano 9B V2 (a dense model).

High openness: Nemotron 3 Nano follows other recent NVIDIA models in open licensing and in releasing data and methodology for the community to use and replicate. It scores 67 on the Artificial Analysis Openness Index, in line with previous Nemotron Nano models.

Key model details:

➤ 1-million-token context window; text input only

➤ Supports reasoning and non-reasoning modes

➤ Released under the NVIDIA Open Model License; the model is freely available for commercial use or training of derivative models

➤ On launch, the model is being made available with a range of serverless inference providers including @baseten, @DeepInfra, @FireworksAI_HQ, @togethercompute and @friendliai, and it is available now on Hugging Face for local inference or self-deployment

See below for our full analysis and key announcement links from NVIDIA 👇

Bytez retweeted

You can now see the most popular large-context models on the OpenRouter Rankings 👇

Yam Peleg @Yampeleg

how?

Bytez retweeted

One of my favorite moments from Yejin Choi’s NeurIPS keynote was this point:

"it looks like a minor detail, but one thing I learned since joining and spending time at NVIDIA is that all these, like, minor details, implementation details matter a lot" -- I think this is exactly the point that theory people often undervalue when it comes to empirical work.

Bytez retweeted

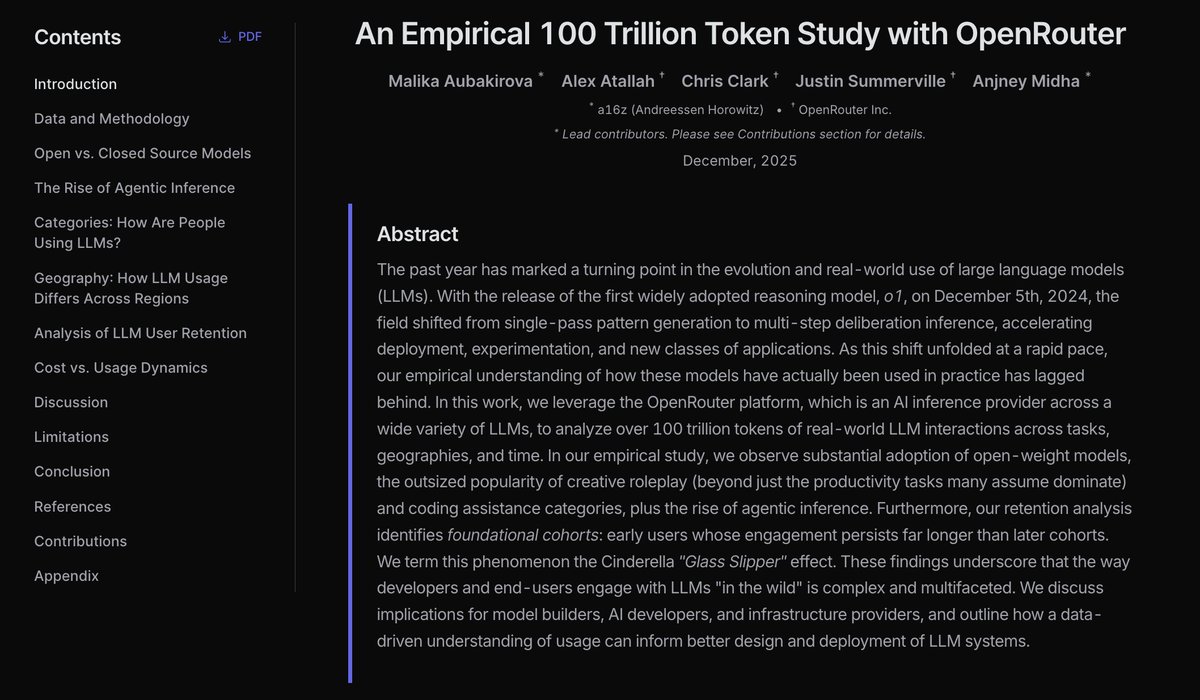

We collaborated with @a16z to publish the **State of AI**, an empirical report on how LLMs have been used on OpenRouter.

After analyzing more than 100 trillion tokens across hundreds of models and 3+ million users (excluding 3rd party) from the last year, we have a lot of insights to share.

Bytez retweeted

What a week! As the main conference concludes, we want to thank every attendee, presenter, volunteer, and organizer who made NeurIPS 2025 unforgettable. For those staying in San Diego, workshops and competitions continue! #NeurIPS2025 #NeurIPSanDiego
