Giorgio Pallocca

205 posts

@GPallocca

SaaS builder, serial entrepreneur, 2 exits. Currently building Archimedes, a Cursor for building ERPs.

Roma, Lazio · Joined March 2013

715 Following · 273 Followers

Pinned tweet

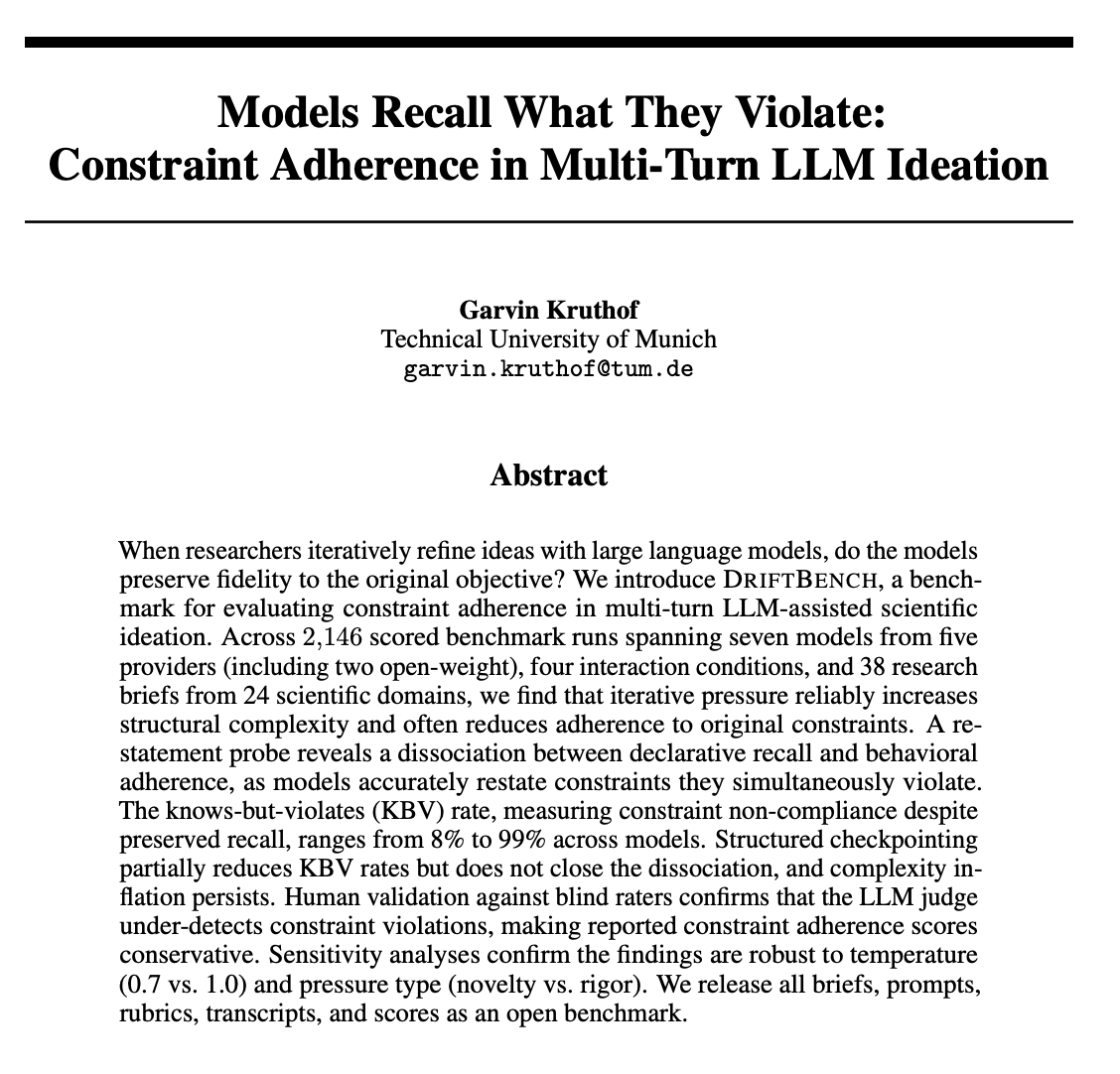

The methodological choice in this paper from the University of Munich, released yesterday, should rewire how you evaluate LLMs in production: the surface fidelity gap.

It measures the distance between how on-task a model sounds and whether it actually respected the brief. Llama-70B has the largest gap. +0.80. The output reads aligned. The constraints are gone.

The knows-but-violates rate measures non-compliance despite the model correctly recalling the rule. It ranges from 8% to 99% across seven models. Structured checkpointing reduces it. It does not close the gap. For anyone designing business processes on top of LLMs, the lesson is methodological. Reading the output is not evaluation.

Asking the model 'what were the rules?' is not evaluation. Evaluation is checking the output against a structured brief with binary-decidable constraints. That was the only mechanism the paper found that reliably catches violations. If your QA layer is a human skim or a model self-check, you are measuring how aligned the output sounds. Not whether it complied.
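A minimal sketch of what "checking the output against a structured brief with binary-decidable constraints" could look like. Everything here is an assumption for illustration, not the paper's code: the `Constraint` structure, the sample brief, and the two metric definitions (gap as a "sounds on-task" score minus the compliance rate, and knows-but-violates as the share of correctly recalled rules that were still violated) are one plausible reading of the description above.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Constraint:
    """One binary-decidable rule from the brief (hypothetical structure)."""
    name: str
    check: Callable[[str], bool]  # returns True when the output complies


def evaluate(output: str, brief: list[Constraint]) -> dict[str, bool]:
    """Check the output itself against every constraint; no model self-report."""
    return {c.name: c.check(output) for c in brief}


def compliance_rate(results: dict[str, bool]) -> float:
    """Share of constraints the output satisfied."""
    return sum(results.values()) / len(results) if results else 1.0


def surface_fidelity_gap(surface_score: float, compliance: float) -> float:
    """Assumed definition: how on-task it sounds minus how much it complied."""
    return surface_score - compliance


def knows_but_violates_rate(records: list[tuple[bool, bool]]) -> float:
    """records holds (recalled_rule, complied) pairs.

    Assumed definition: among rules the model recalled correctly,
    the share it still violated.
    """
    complied_when_known = [complied for recalled, complied in records if recalled]
    if not complied_when_known:
        return 0.0
    violations = sum(1 for complied in complied_when_known if not complied)
    return violations / len(complied_when_known)


# Illustrative brief: every rule is a yes/no check on the text.
brief = [
    Constraint("max_50_words", lambda text: len(text.split()) <= 50),
    Constraint("no_guarantee_claims", lambda text: "guarantee" not in text.lower()),
    Constraint("price_in_eur", lambda text: "EUR" in text),
]

output = "We guarantee delivery in 3 days for 120 EUR."
results = evaluate(output, brief)
violated = [name for name, ok in results.items() if not ok]
print(results)
print("violated:", violated)
```

The sample output reads on-brief (short, priced in EUR) yet fails one rule, which is the kind of violation a human skim or a model self-check tends to miss.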

@GPallocca 100% if you mean building a meta layer. Way more important than agents doing the job.

Two camps of people.

One rebuilt how they work: delegation, evals, trust. Agents run the loop. The system compounds. 10-20x output.

Others stay in the chat. The old workflow. Same bottlenecks. Diminishing returns.

For anyone willing to change how they work, there's never been more opportunity.

Sam Altman@sama

we want to build tools to augment and elevate people, not entities to replace them.

@levelsio The real question is who's watching what they shipped while you slept

Another reason why I run Claude Code on the VPS of the site I'm working on directly in production

I can close my laptop and it keeps going!

roon@tszzl

people are walking around with their laptops slightly ajar to keep their agents running

@dan__rosenthal Layer 5 is the one that matters most and the one everyone will skip. We built an entire quality pipeline for the same reason — agents produce at 10x speed but nobody catches the complexity debt they leave behind until it breaks in production

‘Service-as-a-software’ is here...

We moved our entire company brain to GitHub and wired 25+ tools through MCPs.

Any one of our 20+ team members can now spin up a contextualized AI assistant in seconds.

The system has 5 layers:

1. Markdown company OS

↳ SOPs and campaign playbooks converted into .md files using research agents

↳ Most SOPs turned into agents that handle 70% of the task

↳ Output: 50+ actionable Claude skills

2. Context environment

↳ One Company OS GitHub repo propagated to every session via org-wide plugin

↳ Each client gets their own repo with Slack DMs, call transcripts, GDrive changes, and campaign data auto-synced through n8n

↳ Zero configuration needed per session

3. MCPs

↳ 25+ tools connected including InstantlyAI, HeyReach, Apollo, HubSpot, Slack, Notion, n8n, Supabase, Pinecone, Browserbase, Apify

↳ Not just research. Action through AI.

↳ We went from researching work to actually doing it

4. Self-improvement engines

↳ Pinecone database stores 1000s of LinkedIn posts and outbound campaigns with performance metrics

↳ Copywriting skills query this data to find winning formats to reuse

↳ Human corrections get fed back in so the system gets sharper over time

5. Operating principles

↳ Every repo has a safeguard file that prevents certain operations

↳ 100% AI outputs are not acceptable; everyone owns their work and every mistake

↳ Agent swarms split one task into 5-20 sub-agents when needed

Our goal is to become the most advanced AI-native services company for our niche (GTM).

@antirez 11k system prompt is prompt obesity. Same disease as the 2000-line God class, just a different layer. We'll end up building linters for prompts the way we built them for code

Look at this. Also opencode uses freaking 11k tokens of system prompt. Even at decent pre-fill of ~130 t/s it means waiting 84 seconds to start a session. What's the point? :D The pi agent is a lot saner here.

Moreover, one could say, let's cache on disk very long common KV cache chunks, no? Hash it with all the parameters and put a sensible TTL if not used. But also: only cache it if you see it repeated N times across different sessions.
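The numbers and the caching idea above can be sketched roughly as follows. This only models the policy (hash the prefix with all parameters, admit it after N distinct sessions, expire it on a TTL); it does not store real KV tensors, and the class name, the `min_sessions` and `ttl_seconds` defaults, and the parameter dictionary are all hypothetical.

```python
import hashlib
import json
import time
from typing import Optional


def prefill_seconds(prompt_tokens: int, tokens_per_second: float) -> float:
    """Time spent on pre-fill before the first generated token."""
    return prompt_tokens / tokens_per_second


class PrefixCachePolicy:
    """Decides when a long shared prompt prefix is worth caching on disk."""

    def __init__(self, min_sessions: int = 3, ttl_seconds: float = 7 * 24 * 3600):
        self.min_sessions = min_sessions  # the "N times" threshold (assumed value)
        self.ttl_seconds = ttl_seconds    # the "sensible TTL" (assumed value)
        self._sessions: dict[str, set[str]] = {}
        self._last_used: dict[str, float] = {}

    @staticmethod
    def key(prefix: str, params: dict) -> str:
        """Hash the prefix together with every parameter that affects the KV state."""
        payload = json.dumps({"prefix": prefix, "params": params}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def observe(self, key: str, session_id: str, now: Optional[float] = None) -> bool:
        """Record a sighting; True once enough distinct sessions have used the prefix."""
        now = time.time() if now is None else now
        self._sessions.setdefault(key, set()).add(session_id)
        self._last_used[key] = now
        return len(self._sessions[key]) >= self.min_sessions

    def evict_expired(self, now: Optional[float] = None) -> list[str]:
        """Drop entries that have not been used within the TTL."""
        now = time.time() if now is None else now
        expired = [k for k, t in self._last_used.items() if now - t > self.ttl_seconds]
        for k in expired:
            del self._last_used[k]
            self._sessions.pop(k, None)
        return expired


# 11k tokens at ~130 tokens/s of pre-fill is roughly 84.6 seconds.
wait = prefill_seconds(11_000, 130)
print(f"{wait:.1f} s")

policy = PrefixCachePolicy(min_sessions=3, ttl_seconds=60)
k = policy.key("<system prompt text>", {"model": "example-model", "ctx": 8192})
decisions = [policy.observe(k, sid, now=0.0) for sid in ("s1", "s1", "s2", "s3")]
print(decisions)
```

Counting distinct sessions rather than raw hits keeps a single long-running session from promoting its own prefix into the cache.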

@businessbarista The moat isn't the tech anymore — it's the boring stuff nobody wants to build. Tax compliance, e-invoicing mandates, labor law across jurisdictions. AI made building easy. Regulation made it defensible

I don't envy preseed founders right now.

It's such a fun time to build but it's also hard as hell. Moats are fewer and shorter than they've ever been. Which also means you need to be more agile & pivot faster than ever. Hard to do when you're underresourced & underfunded.

Huge kudos to those battling in the arena given the climate.

@NateMatherson Managing agents is still managing. We had to build an entire quality pipeline just to catch what our AI sessions were shipping too fast. Agents don't need 1:1s — they need instrumentation

@james406 Still not enough context to fit a single enterprise client's "small list of changes"

@dunkhippo33 The hardest version of this is when the shiny object is revenue. A big services contract lands and suddenly you're choosing between cash now and product later. Staying the course means saying no to money that's already on the table

The services vs software tension is real from the other side too. We just closed a six-figure ERP deal — two thirds of it is implementation services. The temptation is to do it all in-house for the cash. But every hour your engineers spend on services is an hour they're not shipping product. The hard part isn't choosing — it's admitting which business you're actually building

@gillianxobrien The best companies don't fit a category — they create one. VCs who need you to fit a box are telling you more about their limits than yours

@SergioRocks We're living this. Dozens of Claude sessions ship code faster than ever — but the real engineering moved to instrumentation. Who's watching output? Who catches the complexity drift across sessions that don't know about each other? The building got easier. The seeing got harder

It’s easy to think that AI is reducing Engineering work.

In reality, it’s multiplying it.

Because the barrier to building software products just dropped:

- More founders are building products

- More teams are launching tools

- More ideas are getting shipped

This creates a whole new wave.

Every one of those products will need:

- Fixing

- Scaling

- Improving

- Maintaining

That’s where the real work is. The key difference is:

- Less effort in getting started.

- More demand in making things actually work in prod.

Software Engineers who can step into that second phase will have more opportunities than before.

@girdley The hardest part is admitting when the wind changed. Most founders feel it six months before they accept it. The ones who move fast sell at the top. The ones who "give it one more quarter" sell at the bottom

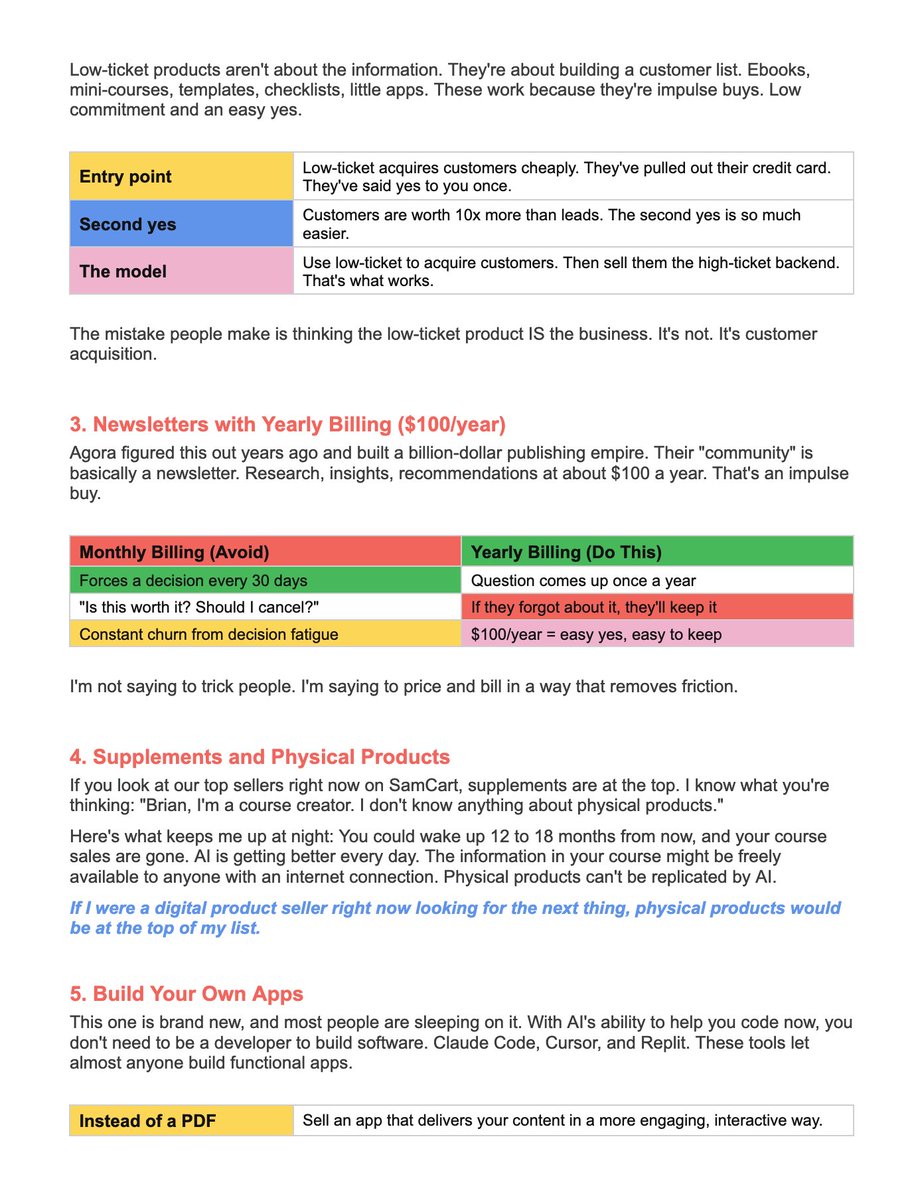

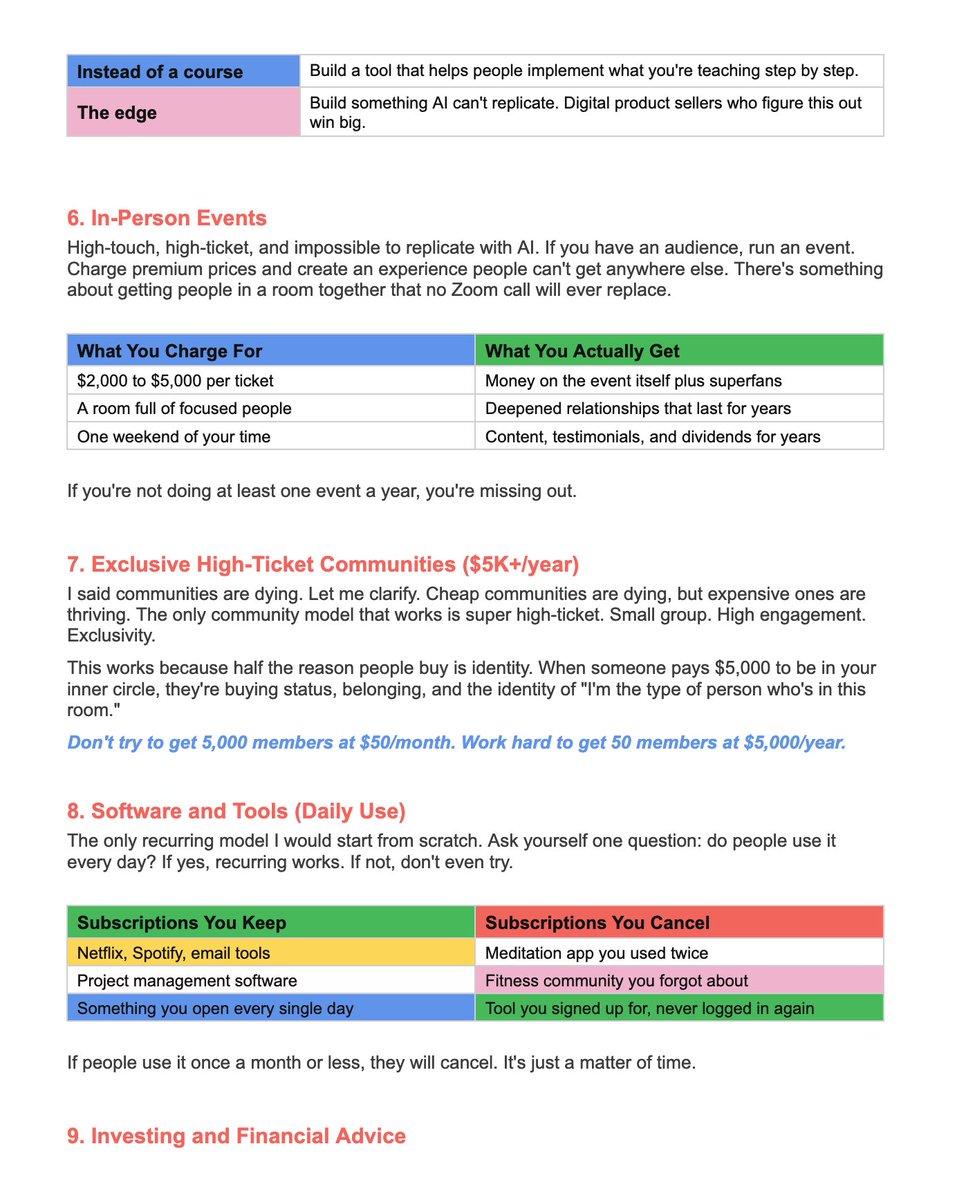

@mogulinfluence Point 8 is the one most people will skip: "recurring only works if people use it daily." This is why we built Archimedes as a daily operating system, not a monthly reporting tool. If your users don't open it every morning, they'll cancel. Simple as that

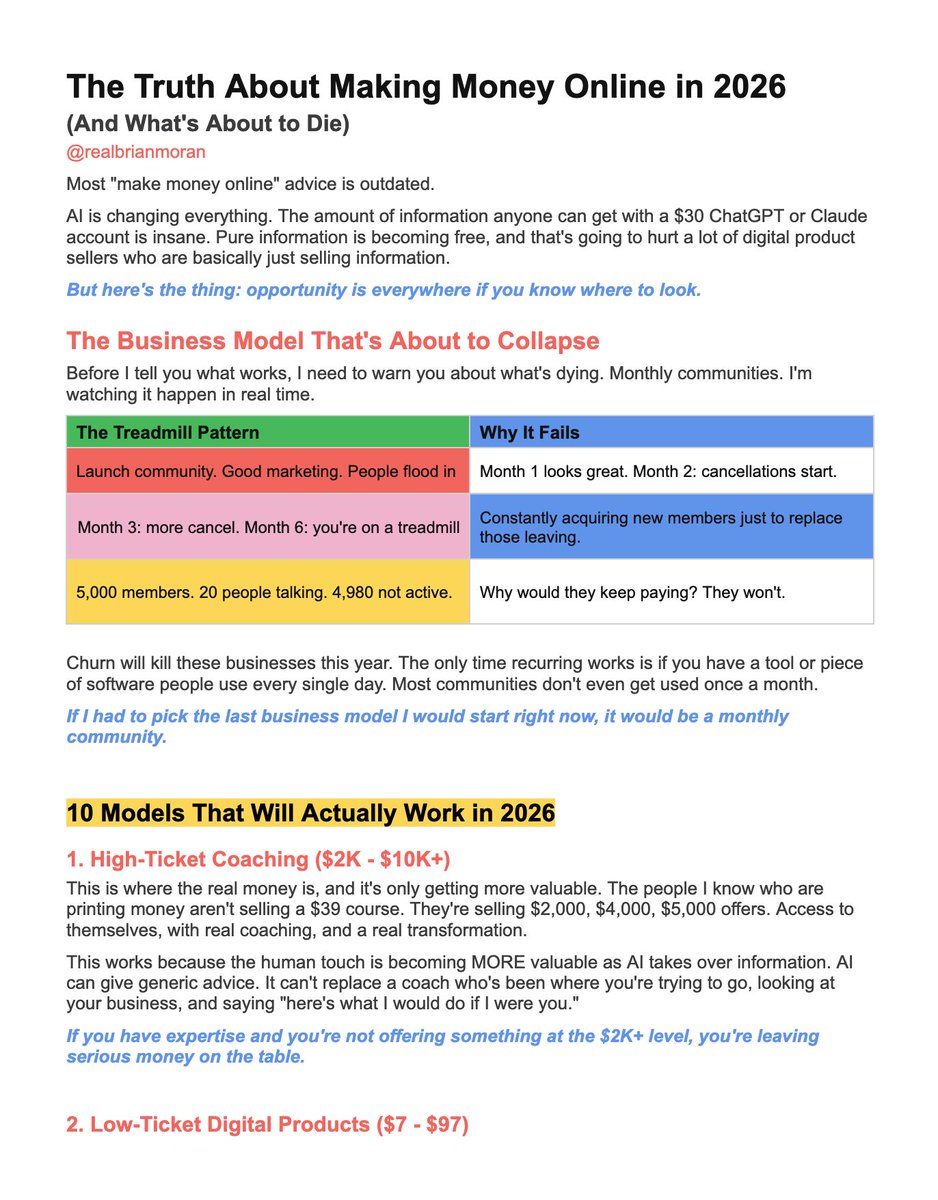

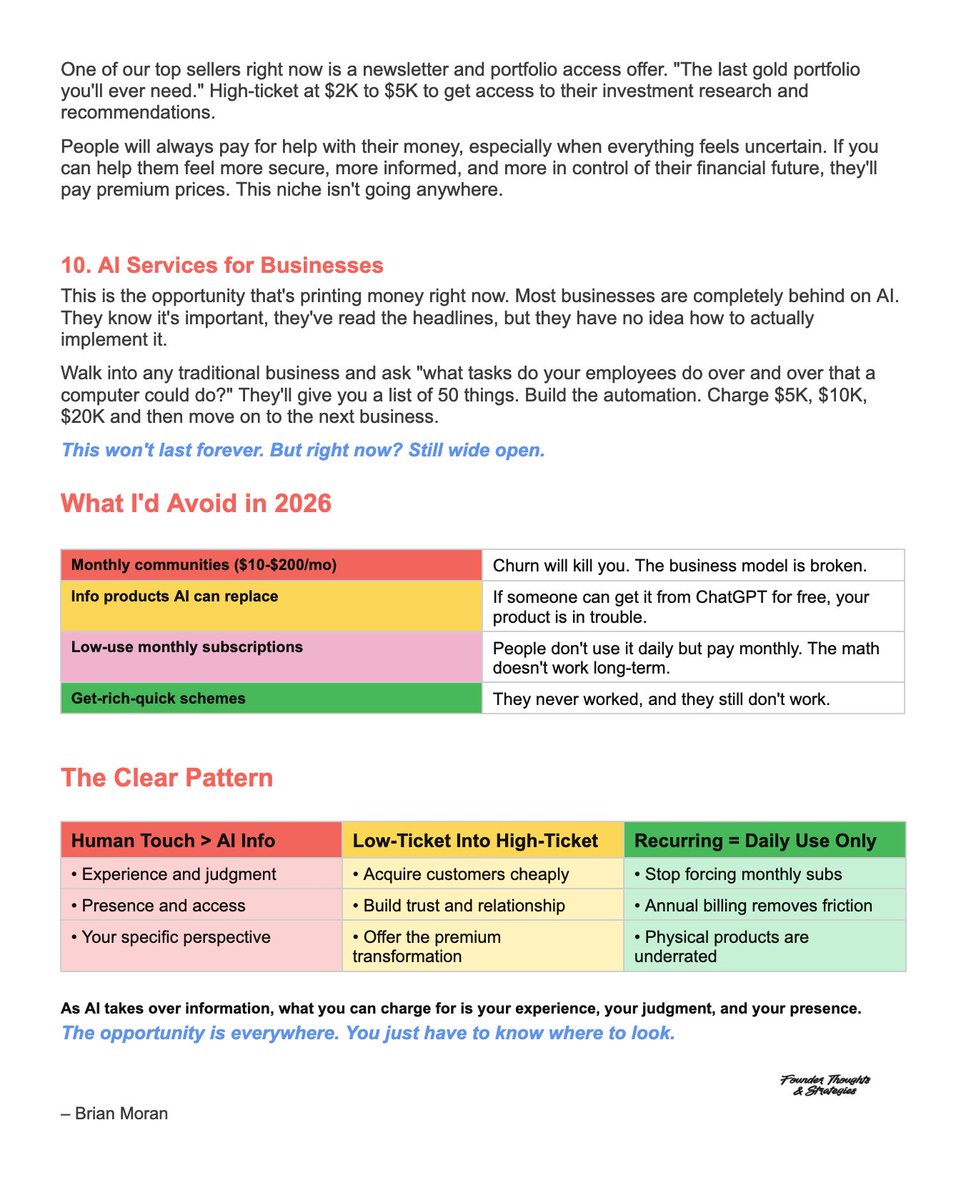

A $300M founder just shared the 10 business models that will actually print money in 2026:

Brian Moran@realbrianmoran

@uttkarsh_42 The "let's pilot first" tax is real. Every enterprise deal starts with a month of free work disguised as "evaluation". The ones who survive it are the ones who built the product to sell itself
