Bruce MacVarish

8.9K posts

@brucemacv

New AI, IT and security services - I post about Applied AI + models, agents, context, security and governance

1. Employer recovery from post-COVID hiring correction 2. Employer recovery from post-COVID interest rate spike 3. Elasticity = demand boom QED

📍 Most leaders think AI advantage comes from technology adoption. The real shift is that it comes from how organizations redesign talent systems.
As EY highlights, AI advantage is built across five dimensions: talent flow, adoption excellence, capability development, culture transformation, and reward systems. This is not an HR agenda. It is an operating model redesign.
1️⃣ Talent Implication: AI changes how work is done, but most organizations keep roles static. Talent systems are not aligned with AI-driven workflows.
2️⃣ Structural Blind Spot: Firms invest in tools and training, but ignore incentives, career paths, and talent mobility. Skills improve; utilization does not.
3️⃣ Design Challenge: AI advantage requires integrating talent, workflows, and decision systems. Without this, capability remains fragmented across functions.
This is why AI investments fail to compound: talent systems are not built to support how work is evolving. The real advantage is not access to AI. It is how your organization develops, deploys, and scales talent around it.
via EY ey.com/en_gl/insights…
@corixpartners @Transform_Sec @Corix_JC @ILoveBooks786 @COSTESLionelEr @ramonvidall @RLDI_Lamy @FrRonconi @timo_vi @Nicochan33 @NathaliaLeHen @TCyberCast @arigatou163 @VivMilanoFSL @MathildaLoco @faryus88 @bbailey39 @BindIdeas971 @FmFrancoise @EduFirst @rameshambastha @DonaldGavis @ricardo_ik_ahau @sulefati7 @ozsilverfox @BCAgroup @9SManagement @O_Berard @DavidTaboada @yd_engoue @giuliog @Hajer_Alqassimi @EdwardHarkins @Evanskipropcrim @ranya_artistry @Howie7951 @iamtunslaw @gvalan

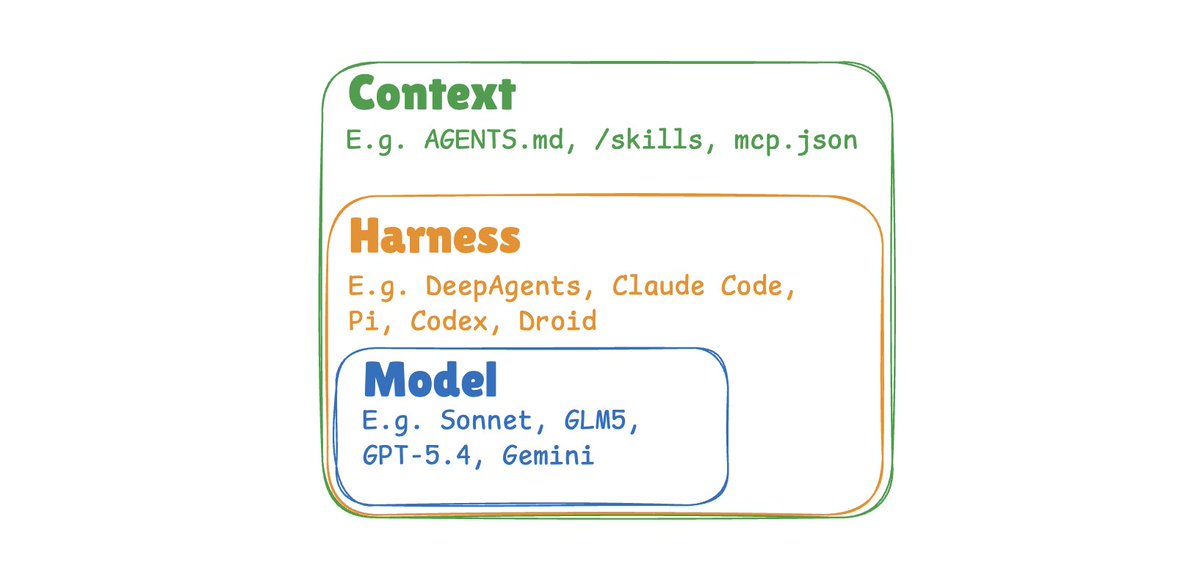

New Research💡: “The #Enterprise #AI Playbook: Lessons from 51 Successful Deployments”
Excited to share new research from Stanford @DigEconLab, which I co-authored with @erikbryn and Elisa Pereira. We spent 5 months interviewing executives across 41 organizations, 9 industries, and 7 countries, focusing exclusively on AI deployments that actually delivered measurable value. Not hype. Not predictions. What’s working right now, and why.
A few findings that challenged even our assumptions:
The hard part isn’t the AI. 77% of the toughest challenges were invisible costs: change management, data quality, process redesign. Technology was consistently described as the easiest part.
Same use case, wildly different timelines. One company deployed AI customer support in weeks. Another took years. Same models. The difference was always the #organization: its #leadership, processes, and willingness to fail.
#Agentic AI works, but most firms haven’t tried it yet. Only 20% of our cases were agentic, but they delivered 71% median gains vs. 40% for high-automation. This gap will widen fast.
The model is increasingly a #commodity. For 42% of implementations, model choice was fully interchangeable. The durable advantage is in orchestration, data, and process, not the foundation model.
As productivity increases, headcount #reduction is common (45%) but not the majority outcome. Redeployment, hiring avoidance, and acceleration strategies accounted for 55% of cases.
🚨 The window for experimentation is closing. This is no longer a question of whether AI delivers value. It’s whether organizations can evolve fast enough to capture it, and whether leaders will take responsibility for smoothing the transition for workers and communities along the way.
Full report (free): digitaleconomy.stanford.edu/app/uploads/20… @StanfordHAI

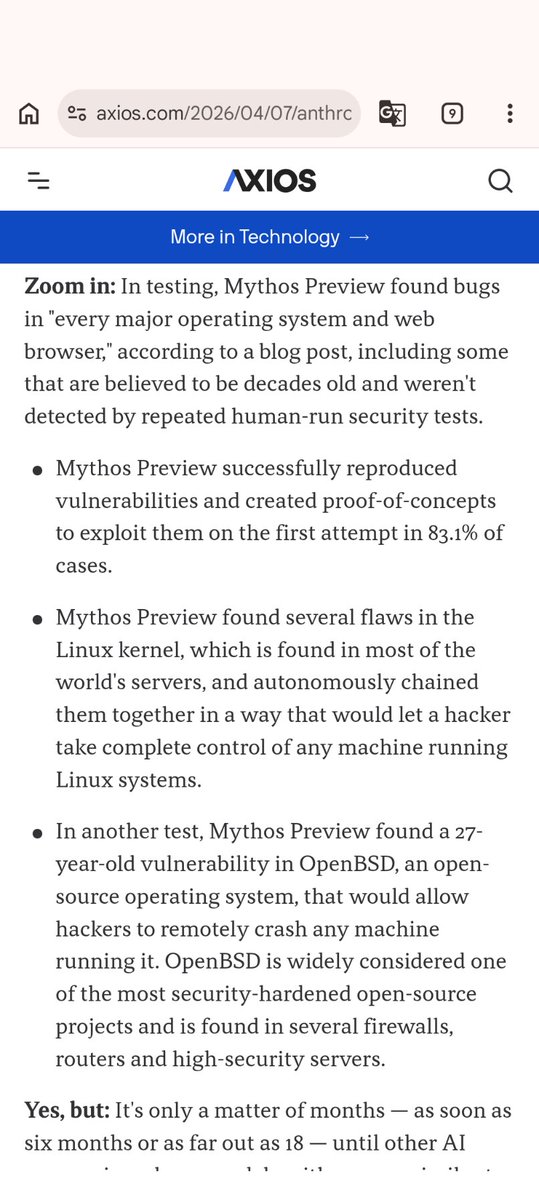

Codex Security—our application security agent—is now in research preview. openai.com/index/codex-se…