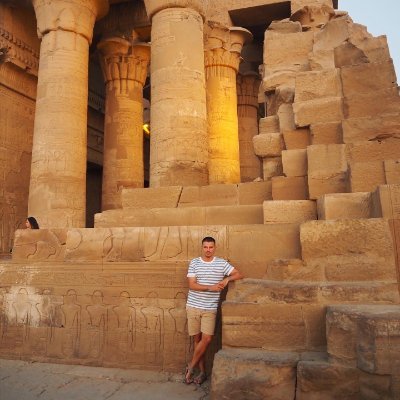

Johnny Núñez

@johnnync13

Robotics and AI at @NVIDIA

Not the flashiest demos, but what’s under the hood represents a foundational shift for general-purpose robotics. World models are the next-gen foundation of Physical AI, not the VLM backbones found in typical VLAs.

DreamZero is a 14B-parameter World Action Model (WAM) by NVIDIA that treats robotics as a joint video-and-action prediction task. Unlike traditional Vision-Language-Action (VLA) models that map images directly to motor commands, DreamZero leverages a pretrained video diffusion backbone to predict future world states and actions simultaneously.

- Achieves 2× better zero-shot generalization to unseen tasks and environments compared to state-of-the-art VLAs.
- Learns effectively from heterogeneous, non-repetitive data (500 hours), breaking the need for thousands of repeated demonstrations.
- Adapts to new robot embodiments with just 30 minutes of play data.
- Enables 7Hz closed-loop control via system optimizations and "DreamZero-Flash," making high-capacity diffusion models viable for real-time use.

🚀 Fast SAM 3D Body: accelerating SAM 3D Body for real-time human mesh recovery!

- ⚡ 10.25× faster 3D body estimation
- ⚡ 10,426× faster MHR→SMPL conversion
- ⏱️ ~65ms end-to-end
- 🤖 Deployable on humanoid robots

#Robotics

Building on the previous correctness-focused pipeline, KernelAgent can now integrate GPU hardware-performance signals into a closed-loop multi-agent workflow to guide the optimization of Triton kernels. Learn more: hubs.la/Q045Wsqq0 @KaimingCheng @marksaroufim

FlexAttention now has a FlashAttention-4 backend.

FlexAttention has enabled researchers to rapidly prototype custom attention variants, with 1000+ repos adopting it and dozens of papers citing it. But users consistently hit a performance ceiling. Until now.

We've added a FlashAttention-4 backend to FlexAttention on Hopper and Blackwell GPUs. PyTorch now auto-generates CuTeDSL score/mask modifications and JIT-instantiates FlashAttention-4 for your custom attention variant. The result: 1.2× to 3.2× speedups over Triton on compute-bound workloads.

🖇️ Read our latest blog here: hubs.la/Q045FHPh0

No more choosing between flexibility and performance.

#PyTorch #FlexAttention #FlashAttention #OpenSourceAI
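For readers unfamiliar with what a "score modification" is, here is a minimal pure-Python sketch of the idea, not the PyTorch implementation. The `attention` helper and its list-based inputs are hypothetical and written only for illustration. The `score_mod(score, b, h, q_idx, kv_idx)` argument order mirrors the callback that `torch.nn.attention.flex_attention.flex_attention` accepts, with batch and head fixed to 0 here. The real backends fuse this logic into a GPU kernel instead of looping in Python.

```python
import math

def attention(q, k, v, score_mod=None):
    """Single-head reference attention over plain Python lists (illustrative only).

    q: [Lq][d], k: [Lk][d], v: [Lk][dv]
    score_mod: optional callback (score, b, h, q_idx, kv_idx) -> score.
    Assumes every query row keeps at least one finite score after score_mod.
    """
    d = len(q[0])
    out = []
    for q_idx, q_row in enumerate(q):
        scores = []
        for kv_idx, k_row in enumerate(k):
            # Scaled dot-product score for this (query, key) pair.
            s = sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d)
            if score_mod is not None:
                # Batch and head indices are fixed to 0 in this single-head sketch.
                s = score_mod(s, 0, 0, q_idx, kv_idx)
            scores.append(s)
        # Numerically stable softmax; exp(-inf) evaluates to 0.0.
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append([
            sum(w * v_row[j] for w, v_row in zip(weights, v)) / total
            for j in range(len(v[0]))
        ])
    return out

def causal(score, b, h, q_idx, kv_idx):
    """Causal variant: a query may only attend to keys at or before its position."""
    return score if kv_idx <= q_idx else float("-inf")

q = k = v = [[1.0, 0.0], [0.0, 1.0]]
result = attention(q, k, v, score_mod=causal)
```

Swapping `causal` for another callback (a relative-position bias, a sliding window) changes the attention variant without touching the core loop, which is the flexibility the post describes.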

🚀 The 2026 #EmbodiedAI #Hackathon is live! No access to a #ReachyMini? Here it is! 👉 luma.com/zsaa3r3d

Join us right after #NVIDIA #GTC at @seeedstudio’s US office in Santa Clara, co-organized with @NVIDIARobotics, @huggingface, and @pollenrobotics. Build your own Reachy Mini into an AI agent, compete for $6K+ in prizes, and get hands-on experience deploying and validating NVIDIA open models (Mar 21–22; registration deadline Mar 7).

👉 Register: docs.google.com/forms/d/e/1FAI…

Announcing CuPy v14! 🚀

- 🔹 NumPy v2 semantics
- 🔹 CUDA Pip Wheels support
- 🔹 bfloat16 & structured dtype
- 🔹 Enhanced NumPy/SciPy API coverage

Read more on our blog for the full details! 👇