Adam Goulburn

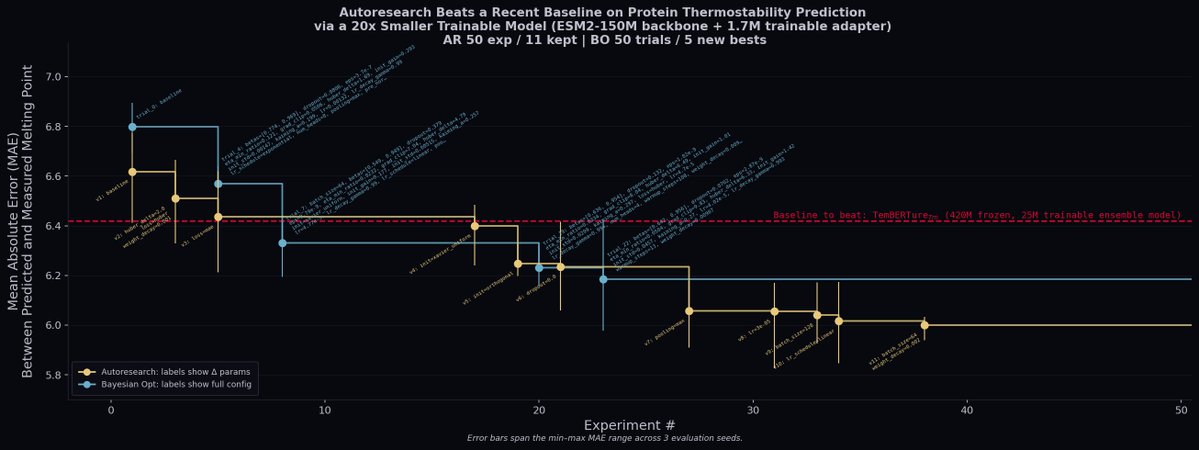

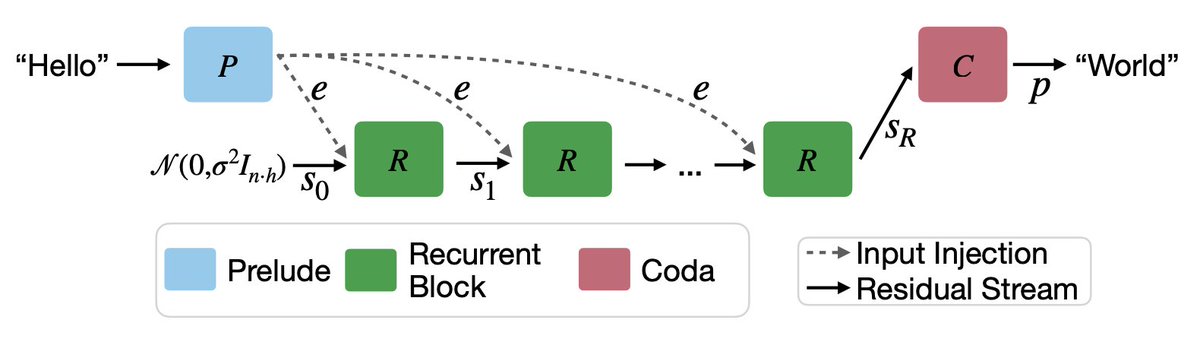

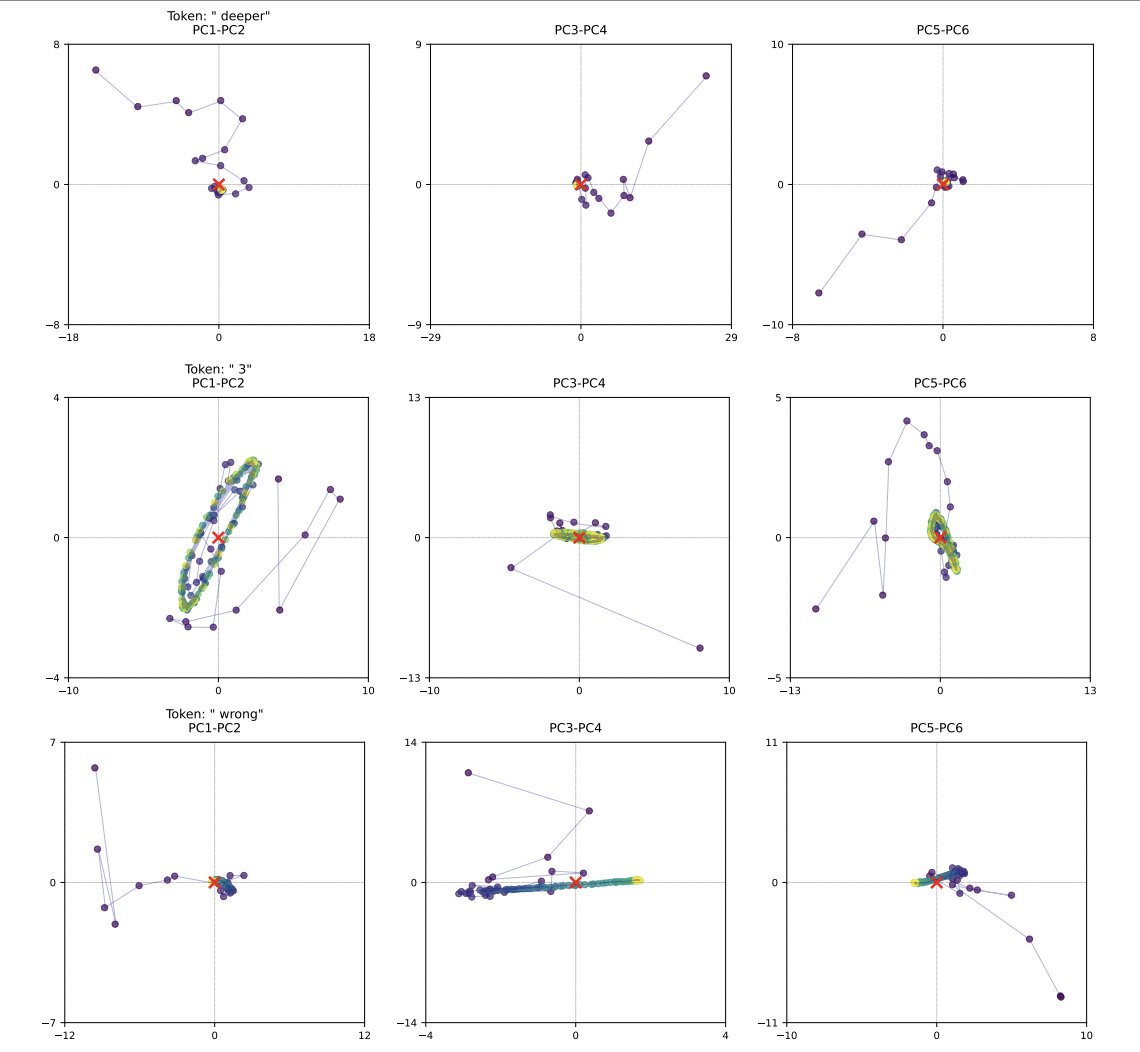

Join the early team @CoefficientBio. We have an AI team that I’m incredibly proud of, and truly think is the most effective and exceptional technical team building in AI x bio.

We're looking for people to:

* Build and maintain robust AI systems to an exacting standard
* Design and run experiments that let us iterate fast
* Work on challenging scientific and engineering problems for human flourishing

NYC-based (or willing to relocate). If you’re curious about what we’re building, reach out directly. If we don’t know each other yet, a great cold DM (or email: join@coefficientbio.com), or warm intro goes a long way.

On the annual @Delta #JPM2026 party bus from SLC to SF with @_DimensionCap and about half of @BioHiveUtah.

I remember my first JPM in 2016. We were frequently laughed at (literally, in multiple meetings) or disregarded by the industry…

Here we are 10 years later in what is clearly the start of a golden age of TechBio. So many incredible companies, including many large pharma companies, are now making AI a central pillar of their strategy to bring new, better medicines to patients quickly. And that fills me with joy.

I’m deeply committed to @RecursionPharma and I am confident we will continue to lead the TechBio field. But I want most of all for the field to progress and patients to win.

So if you are a new TechBio company, a young founder, or even a large-pharma veteran and open to exploring how you can leverage technology to accelerate your work, shoot me a note - I have some time Wednesday afternoon and would love to meet up! See you on the streets of SF!