Igor Mordatch

@IMordatch

Research scientist @DeepMind and lecturer @UCBerkeley. Interested in AI/education/visual art/nature. Previously @OpenAI and @UCBerkeley.

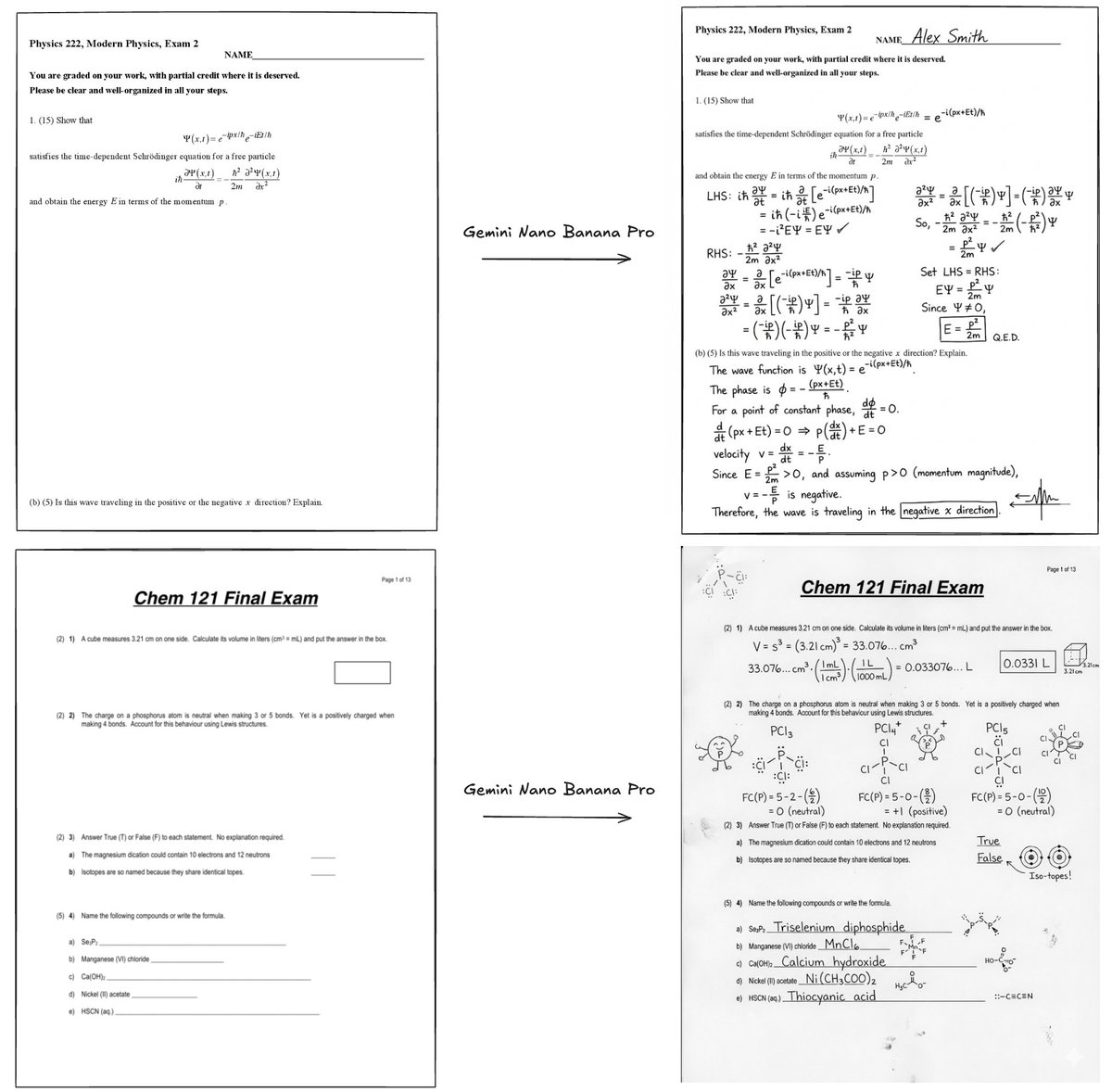

We’ve been intensely cooking Gemini 3 for a while now, and we’re so excited and proud to share the results with you all. Of course it tops the leaderboards, including @arena, HLE, GPQA etc, but beyond the benchmarks it’s been by far my favourite model to use for its style and depth, and what it can do to help with everyday tasks.

🎉 We're excited to announce the 2025 Google PhD Fellows! @GoogleOrg is providing over $10 million to support 255 PhD students across 35 countries, fostering the next generation of research talent to strengthen the global scientific landscape. Read more: goo.gle/43wJWw8

Our latest post explores on-policy distillation, a training approach that unites the error-correcting relevance of RL with the reward density of SFT. When training it for math reasoning and as an internal chat assistant, we find that on-policy distillation can outperform other approaches for a fraction of the cost. thinkingmachines.ai/blog/on-policy…

An exciting milestone for AI in science: Our C2S-Scale 27B foundation model, built with @Yale and based on Gemma, generated a novel hypothesis about cancer cellular behavior, which scientists experimentally validated in living cells. With more preclinical and clinical tests, this discovery may reveal a promising new pathway for developing therapies to fight cancer.

Super excited to finally share our work on “Self-Improving Embodied Foundation Models”!! (Also accepted at NeurIPS 2025)

• Online on-robot Self-Improvement
• Self-predicted rewards and success detection
• Orders of magnitude sample-efficiency gains compared to SFT alone
• Generalization enables novel skill acquisition

🧵👇[1/11]