
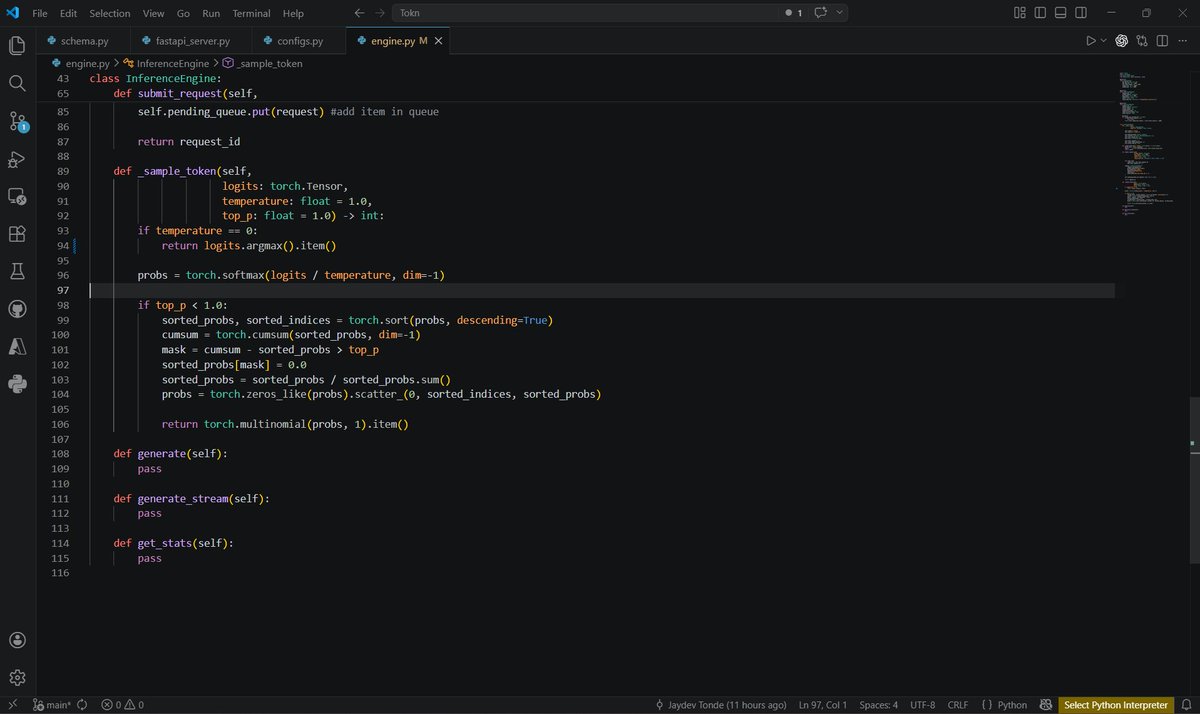

I have been writing a small series on LLM inference with @vllm_project that can be a practical starting point for people trying to understand this space.

Along with the explanations, I also ran benchmarks on realistic workloads across different GPUs and datasets to evaluate how these techniques perform in practice.

It covers:

- Major speculative decoding techniques

- Major quantization methods

- Distributed inference: DP / PP / TP

- Expert Parallelism and mixed parallel setups

- Practical optimization techniques like prefix caching, KV cache, and disaggregated prefill/decode

My goal was to explain how these techniques work and where they help, so that it is easier to choose the right approach for a given workload.

This series is useful not only for people getting into LLM serving, but also for engineers who are already serving LLMs and want to optimize inference, improve throughput, reduce latency, or evaluate the right serving strategy.
