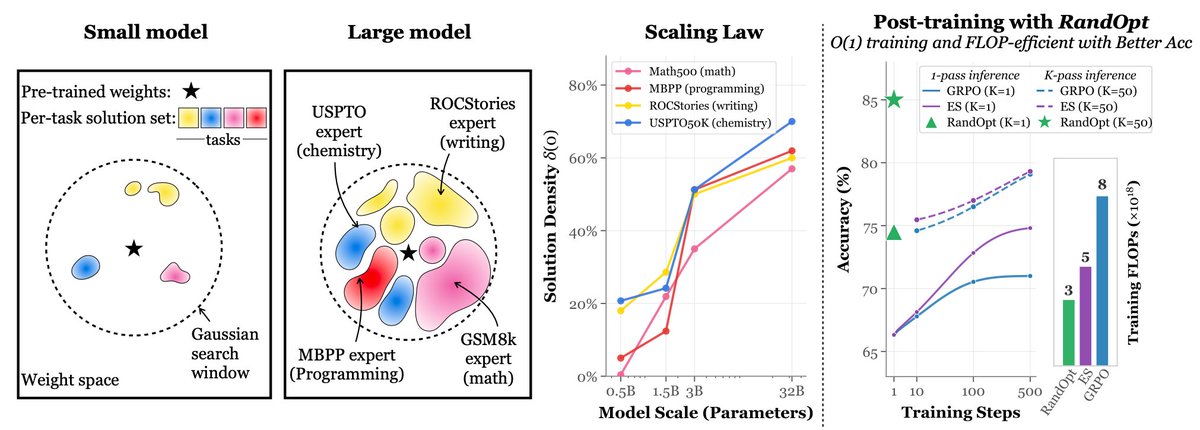

Why does Muon outperform Adam, and how? 🚀

Answer: Muon outperforms Adam in tail-end associative memory learning.

Three key findings:

- Associative memory parameters are the main beneficiaries of Muon, compared to Adam.
- Muon yields more isotropic weights than Adam.
- In heavy-tailed tasks, Muon significantly improves tail-class learning compared to Adam.

Paper link: arxiv.org/pdf/2509.26030

A thread 🧵
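To make "isotropic" concrete, here is a minimal NumPy sketch. It is not the paper's code and looks only at a single update, which is a loose proxy for the paper's claim about learned weights. Assumptions, all illustrative: the Muon-style step uses an exact SVD where Muon itself uses Newton-Schulz iterations, and it omits Nesterov momentum and shape-dependent learning-rate scaling. The values `lr=0.02`, `beta=0.95`, the toy gradient, and the `isotropy` metric (smallest over largest singular value) are hypothetical choices made here; the paper may measure isotropy differently.

```python
import numpy as np


def orthogonalize(m: np.ndarray) -> np.ndarray:
    """Map m = U S V^T to U V^T, i.e. set every singular value to 1.

    Muon approximates this with a few Newton-Schulz iterations; an exact SVD
    is used here (an assumption) only to keep the sketch short and checkable.
    """
    u, _, vt = np.linalg.svd(m, full_matrices=False)
    return u @ vt


def muon_step(w, grad, buf, lr=0.02, beta=0.95):
    """Simplified Muon-style step: accumulate momentum, then apply its orthogonalized form."""
    buf = beta * buf + grad
    return w - lr * orthogonalize(buf), buf


def adam_first_step(grad, eps=1e-8):
    """Bias-corrected Adam update at step 1, which reduces to g / (|g| + eps) elementwise."""
    return grad / (np.abs(grad) + eps)


def isotropy(m: np.ndarray) -> float:
    """Hypothetical metric: smallest / largest singular value (1.0 = all directions scaled equally)."""
    s = np.linalg.svd(m, compute_uv=False)
    return float(s.min() / s.max())


# Toy gradient dominated by one direction (a rank-1 outer product) plus a small full-rank part.
a = np.array([1.0, 2.0, 3.0, 4.0])
b = np.array([4.0, 3.0, 2.0, 1.0])
grad = np.outer(a, b) + 0.01 * np.eye(4)

print(f"raw gradient : {isotropy(grad):.2e}")
print(f"Adam step 1  : {isotropy(adam_first_step(grad)):.2e}")
print(f"Muon-style   : {isotropy(orthogonalize(grad)):.2e}")
```

Adam normalizes each entry independently, so a gradient whose energy sits in one direction stays nearly rank-1 after the update. The orthogonalized update instead gives every singular direction the same magnitude, including the weak ones, which is one intuitive way to connect Muon's update rule to the "more isotropic" and "better tail learning" findings.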