Nitay Calderon

327 posts

@NitCal

PhD candidate @TechnionLive | Google Research | NLP

Donald Knuth is vibemathing now. real tough day for the stochastic-parrot crew.

we argue that parametric learning methods are too tied to the explicit training task, and fail to effectively encode latent information relevant to possible future tasks, and we suggest that this explains a wide range of findings, from navigation to the reversal curse. 3/

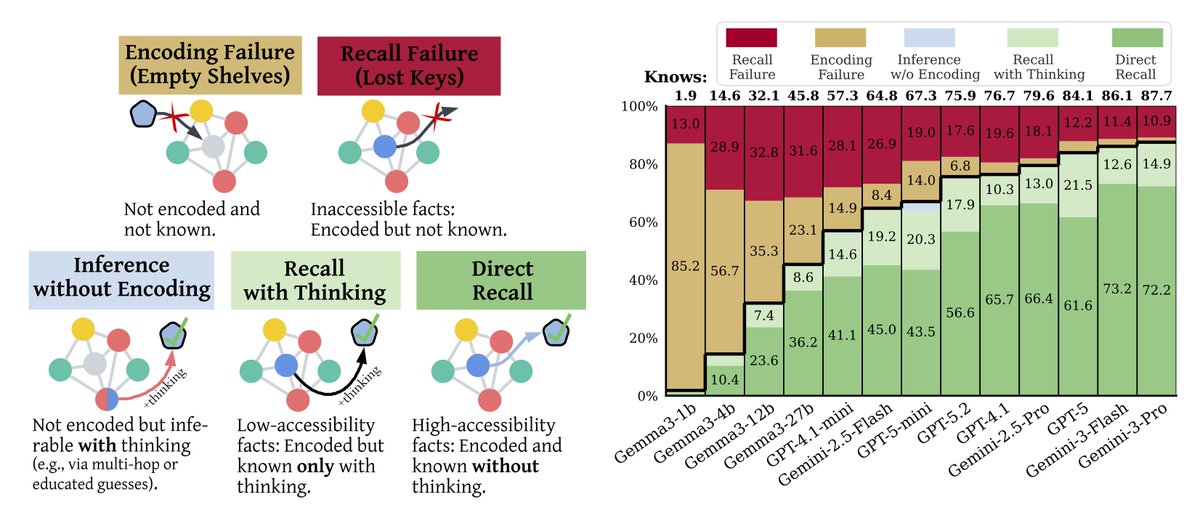

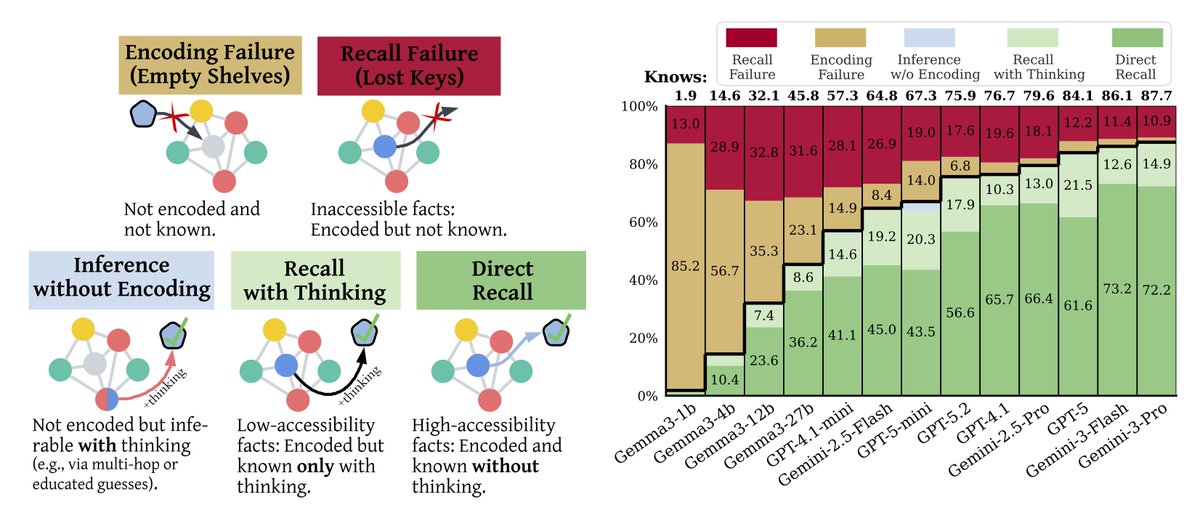

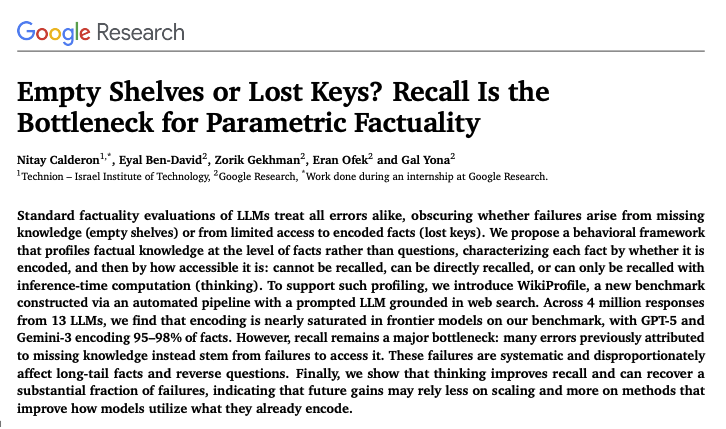

[1/7] Why do frontier LLMs make factual errors? Is it because they never learned the fact… or because they can’t access knowledge they already encoded? In our new paper, we show: The bottleneck is not encoding; it is recall. 🧵👇 Paper: arxiv.org/abs/2602.14080 Many thanks to @_galyo @bd_eyal @zorikgekhman @eran_ofek59358 @GoogleResearch

Can LLMs reason internally while processing their inputs, similar to how humans can think ahead as we process information? Our latest work introduces Thinking States, a novel architectural adaptation that transforms reasoning into an internal recurrent process. By training models to maintain a dynamic thinking state, we achieve significant inference speedups over Chain-of-Thought while substantially outperforming existing latent reasoning methods. Paper: arxiv.org/abs/2602.08332

You can now "pip install latentlens" 🔨 It comes with:

* pre-computed embeddings for several popular LLMs and VLMs
* a txt file with sentences describing WordNet concepts, which we recommend as a standard corpus to get embeddings from
* ...

Try it out and let us know what we can improve!

Google presents Empty Shelves or Lost Keys? Recall Is the Bottleneck for Parametric Factuality paper: huggingface.co/papers/2602.14…