Chris Parsons รีทวีตแล้ว

Chris Parsons

18.3K posts

Chris Parsons

@chrismdp

I help people get ahead with AI 💪 Co-founder/CTO Cherrypick 🚀

Winchester, UK เข้าร่วม Mayıs 2008

285 กำลังติดตาม1.9K ผู้ติดตาม

@GergelyOrosz Try: github.com/steipete/gogcli - does everything I need for Claude Code

English

Chris Parsons รีทวีตแล้ว

🍴 @wearecherrypick is an app that finds recipes, plans meals and orders groceries from supermarkets - with an AI twist. Helping 500k+ users eat better, it encourages healthy eating and reduces waste with thousands of AI recipes. Read the full story → goo.gle/4bOveWg

English

I'm demoing how I'm use Claude Code / AI for writing and thinking this Thursday! Sign up to attend or for the recording: chrismdp.com/webinar

English

@SW_Help Thanks, very helpful! Thanks for all you’re doing in a difficult situation!

English

@chrismdp We don't currently have accurate train running information due to the partial line closure, but your next service from London Waterloo will likely be the 21:05 service but delayed. It's currently running fast back to London Waterloo but will be delayed through Wimbledon. ^CW

English

@SW_Help That’s a long trip - worth waiting for a winchester train from Waterloo now lines have reopened? What time will the first one run?

English

FINAL CALL: "Kill Your Prompts" starts in one hour! Free to join?

Sign up: chrismdp.com/webinar

All sign ups get full slides and recording (even if you don't make it)

English

@HammerToe Thanks yes - going for a new angle. More interested in tech founder dynamics than the code itself these days.

English

@chrismdp Nice :) Very interesting to see how you are evolving your software craftsmanship background into the ai-driven world.

English

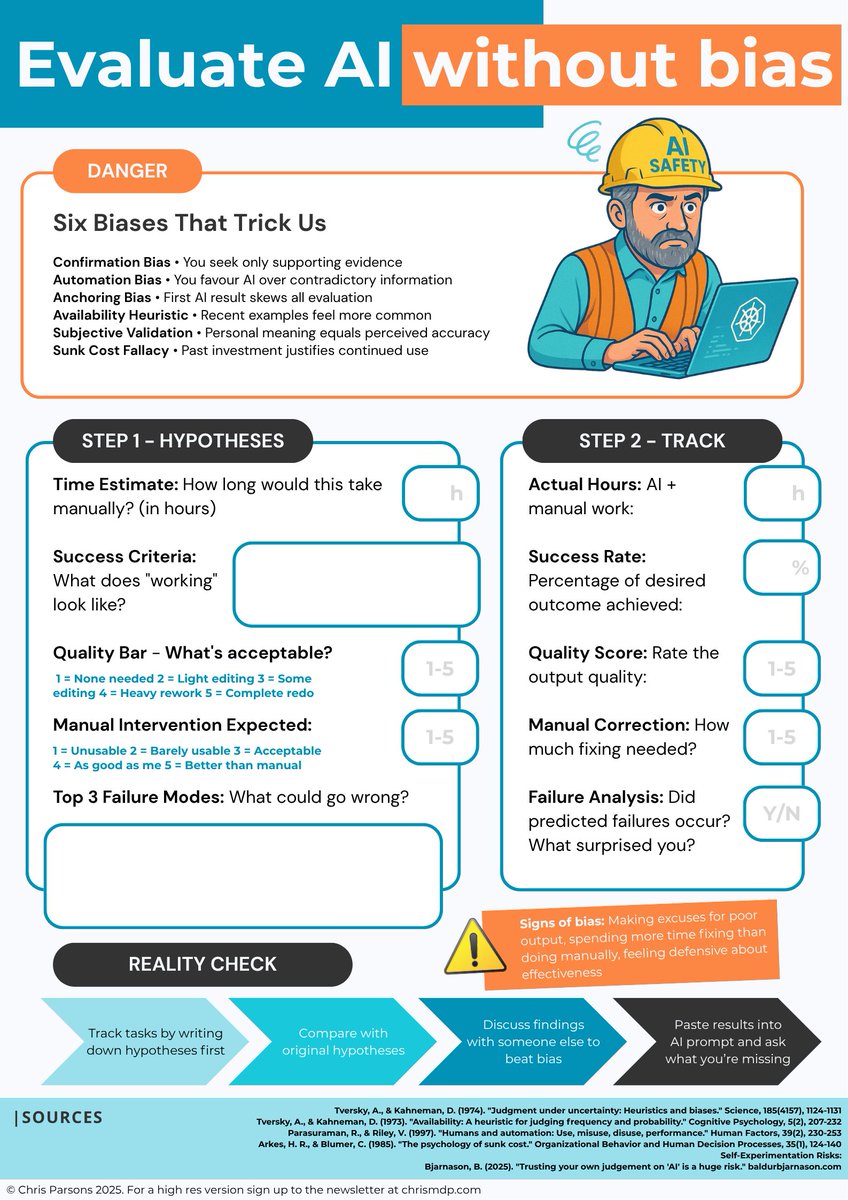

Your AI experiments are failing because you're biasing yourself, and being smart makes it worse. How to prevent it:

We all suffer from these biases (and many more):

→ Anchoring Bias - First AI result skews all evaluation

→ Availability Heuristic - Recent examples feel more common

→ Subjective Validation - Personal meaning equals perceived accuracy

→ Sunk Cost Fallacy - Past investment justifies continued use

Intelligence is not a defence against these biases. The only protection is systematic measurement before cognitive biases distort your judgment.

Most teams skip this rigour and wonder why their "successful" AI experiments don't scale.

I created a one-page evaluation framework (attached) that forces objective measurement.

Will you print it out and fill it in?

…probably not. But the principles are solid and I’d encourage you to follow them!

→ Before experimenting: Write specific hypotheses about time savings, quality thresholds, and failure modes

→ After completion: Track actual time (including hidden costs), measure concrete outcomes, calculate true ROI

→ Red flags: Making excuses for poor output, spending more time fixing than doing manually, feeling defensive about effectiveness

We CANNOT prevent bias entirely, but we can fight against it. It's especially hard with AI, but with proper rigour we be as scientific as we can.

Which AI tool will you evaluate properly this week?

English

@_HooJR @HammerToe yeah I've been trying to evaluate objectively

English

@HammerToe sure although it should all be HTTP stream now right? Remote maintained MCP definitely the way forward

English

@chrismdp I found wasn't too hard to get a FastMCP-based local server up and running. The main issue was realising that everything moved to SSE, and that using Claude Desktop to access it with the `mcp-remote` wrapper.

English