Evergreen Capital

140 posts

@evergreencap3

Tech investor | Posting since March ’26 | Views my own, not advice

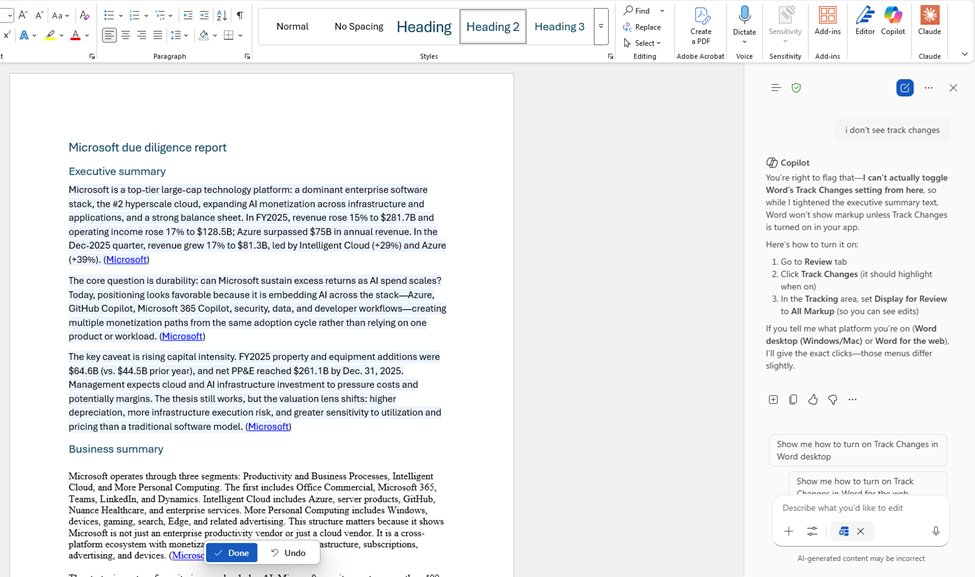

New in Word: Copilot now tracks changes, leaves comments, and more, working more like a coworker right inside your document, grounded in all your enterprise context with Work IQ.

@designedbyabin @satyanadella Thanks. Insane decision to offer siloed Copilot as default inside its own app, let alone bury the toggle like that. After turning it on, it still only went 1 for 2: It edited the document but no luck on tracking changes, just instructions on how to do it myself.

New Anthropic Fellows research: developing an Automated Alignment Researcher. We ran an experiment to learn whether Claude Opus 4.6 could accelerate research on a key alignment problem: using a weak AI model to supervise the training of a stronger one. anthropic.com/research/autom…

The trick was: focus on building infrastructure that benefits from the intelligence revolution. Create software, architect your factory, your team, to benefit from the leverage. We are ahead in this regard, and this keeps on propelling us further forward

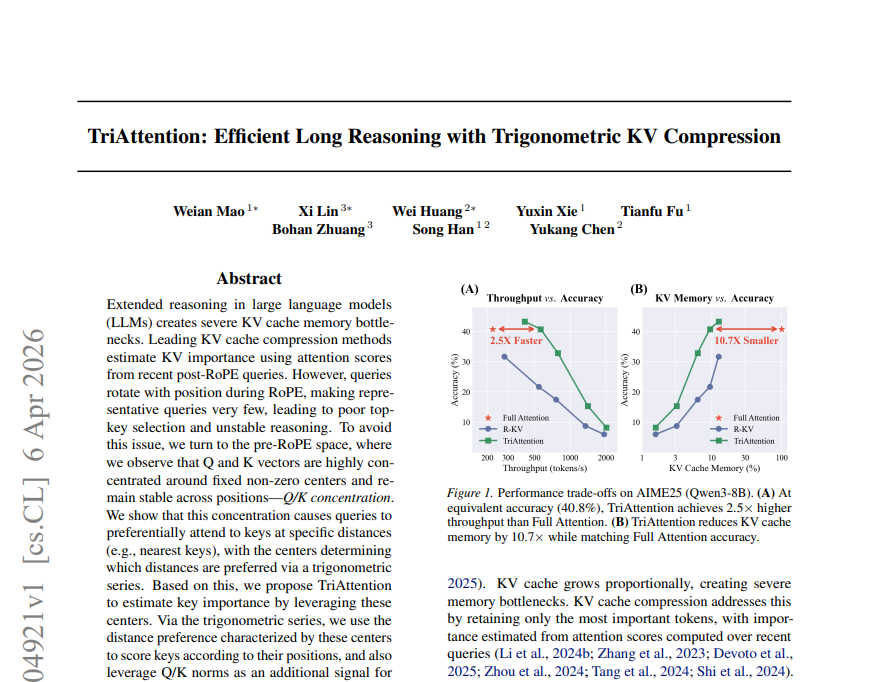

More concrete evidence that memory optimizations are super bullish for memory demand, right inside last week's excellent 'TriAttention' paper on KV-cache compression from @yukangchen_, @WeianMaoX, @TianfuF and team: In the paper's appendix, they put OpenClaw on an RTX 4090 through a very realistic task: reading six project files and generating a report. With standard Full Attention, the KV Cache grows unboundedly, causing an out-of-memory error and crashing the agent before it finishes. But with TriAttention, which compresses the KV cache by ~10x, the job completes. Easy peasy! In other words, memory innovation and optimizations like this aren't a headwind. Rather, they're the necessary ingredient to unlock the next generation of AI and agentic workloads, which will profoundly increase demand for memory. $MU $Samsung $SKHynix
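A rough back-of-the-envelope sketch of why the full-attention run hits a wall and a ~10x compressed one does not. Every number here is an illustrative assumption and none of it is taken from the TriAttention paper: the layer count, KV-head count, head size, fp16 storage, and the 8 GiB of VRAM assumed to be left for the cache are all hypothetical.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Uncompressed KV-cache size: one key and one value vector per token, per layer, per KV head."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


def max_tokens(budget_bytes, layers, kv_heads, head_dim,
               bytes_per_value=2, compression_ratio=1):
    """Tokens that fit in budget_bytes if the cache shrinks by compression_ratio."""
    per_token = kv_cache_bytes(1, layers, kv_heads, head_dim, bytes_per_value)
    return int(budget_bytes * compression_ratio // per_token)


# Hypothetical model shape and memory budget (assumptions, not from the paper).
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
BUDGET = 8 * 2**30  # assume 8 GiB of a 24 GB card is free for the cache

full = max_tokens(BUDGET, LAYERS, KV_HEADS, HEAD_DIM)
compressed = max_tokens(BUDGET, LAYERS, KV_HEADS, HEAD_DIM, compression_ratio=10)

print(f"per-token cache: {kv_cache_bytes(1, LAYERS, KV_HEADS, HEAD_DIM) // 1024} KiB")
print(f"full attention fits   ~{full:,} tokens")
print(f"10x compression fits  ~{compressed:,} tokens")
```

Under these assumptions the cache grows linearly with context, so the same card holds roughly ten times as many tokens once the cache is compressed by 10x. That is the gap between an agent that runs out of memory mid-task and one that finishes.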

The AI bubble is popping. Almost time for me to join DDR5.

I tested $META's Muse Spark over the last few hours and came away net positive. 3 main takeaways:

1) Quality: It's a very good model. Not quite frontier, but good. It performed comparably to Opus 4.6 across web data search, PDF parsing, and general knowledge/conversation/writing. It's worse at coding: both models solved an easy coding task, but Muse Spark failed the hard one while Opus one-shotted it. The image-gen is also worse than ChatGPT's. But all in, it's a legitimate and usable general model. Lots of room to develop the UI further (e.g., it should show a map when recommending local restaurants), but the underlying model itself is impressive.

2) Speed: Notably, Muse Spark answered almost instantly while Opus 4.6 felt borderline unusable at times. I'm a huge Anthropic fan, but latency has become a major issue. Simple answers take too long and multi-step agent flows break more often. Meta seems to have more available compute, which is a real factor going forward.

3) Scaling: Meta hasn't published a full model card, so we're working with limited disclosure. But the graphs below might be the most important part of the release. Their rearchitected pretraining stack shows a near-linear relationship between RL compute and accuracy. If that holds, Meta has a clear path to training much larger, more intelligent models. That's arguably more consequential than Muse Spark itself.

Overall, it's positive. Muse Spark is a good, usable model, it's being served smoothly, and Meta looks to be on an encouraging trajectory.

I respect the people who bought $GOOGL when Bard came out and everyone thought Google was sinking. It feels just like buying $MSFT right now, doesn’t it?