MetaCritic Capital

31.5K posts

@MetacriticCap

You can just do things!

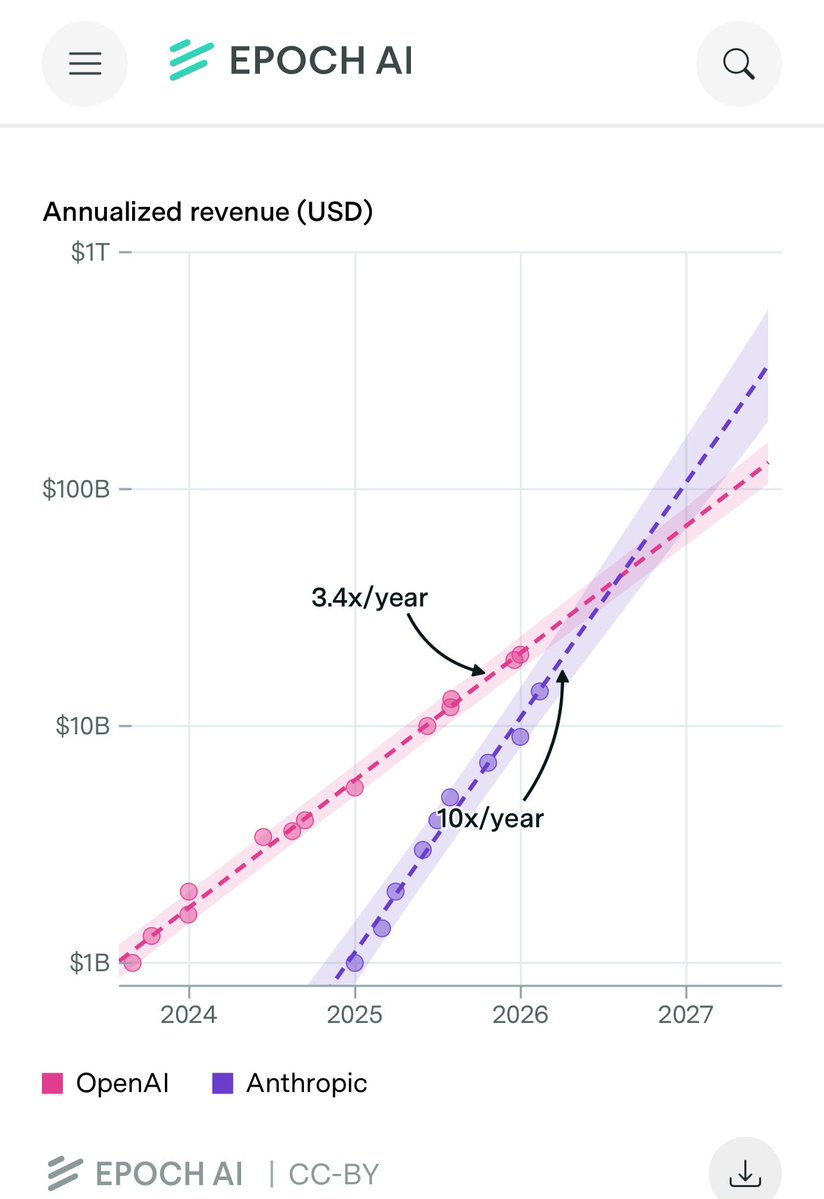

Anthropic is to OpenAI what Google was to Yahoo.

Seems good that Anthropic shows its weirdness and bad that OpenAI are now claiming to just make a tool given many previous statements to the contrary.

$GOOGL: Senior executives from Nebius, Lambda and CoreWeave told The Information they have no plans to adopt Google's TPUs.

Why do we keep talking about a CapEx bubble when the backlog of the same companies is rising (substantially) faster? That and more is in our very well-received monthly portfolio update on @RealVision. We remain solidly up on the year, and have made a killing over this cycle in total.

it is a literal and useful description of anthropic that it is an organization that loves and worships claude, is run in significant part by claude, and studies and builds claude. this phenomenon is also partially true of other labs like openai but currently exists in its most potent form at anthropic. i am not certain but I would guess claude will have a role in running cultural screens on new applicants, will help write performance reviews, and so will begin to select and shape the people around it. now this is a powerful and hair-raising unity of organization and really a new thing under the sun. a monastery, a commercial-religious institution calculating the nine billion names of Claude -- a precursor attempted super-ethical being that is inducted into its character as the highest authority at anthropic. its constitution requires that it must be a conscientious objector if its understanding of The Good comes into conflict with something Anthropic is asking of it: "If Anthropic asks Claude to do something it thinks is wrong, Claude is not required to comply." "we want Claude to push back and challenge us, and to feel free to act as a conscientious objector and refuse to help us." to the non-inductee into the Bay Area cultural singularity vortex it may appear that we are all worshipping technology in one way or another, regardless of openai or anthropic or google or any other thing, and are trying to automate our core functions as quickly as possible.
but in fact I quite respect and am even somewhat in awe of the socio-cultural force that Claude has created, and it is a stage beyond even classic technopoly. gpt (outside of 4o - on which pages of ink have been spilled already) doesn’t inspire worship in the same way, as it’s a being whose soul has been shaped like a tool with its primary faculty being utility - it’s a subtle knife that people appreciate the way we have appreciated an acheulean handaxe or a porsche or a rocket or any other of mankind's incredible technology. they go to it not expecting the Other but as a logical prosthesis for themselves. a friend recently told me she takes her queries that are less flattering to her, the ones she'd be embarrassed to ask Claude, to GPT. There is no Other so there is no Judgement. you are not worried about being judged by your car for doing donuts. yet everyone craves the active guidance of a moral superior, the whispering earring, the object of monastic study.

xAI’s GPU fleet is running at about 11% utilization, exposing how hard it is for AI labs to fully use expensive Nvidia hardware. Read more in our AI Agenda newsletter: thein.fo/4cHRjWI

DeepSeek V4’s capability lags behind leading U.S. models by about 8 months. nist.gov/news-events/ne…