Ji Won Park

492 posts

@jiwoncpark

Principal ML Scientist at @Genentech @PrescientDesign. Bayesian optimization, Bayesian inference.

I'm hiring a postdoc at Prescient Design, @genentech, to work on scalable experimental design for scientific discovery! Strong theory background and interest in practical algorithms encouraged. Apply here: tinyurl.com/2hxyvnvc

we're hiring a Ph.D. intern! join us @genentech in South San Francisco for a summer advancing ML & statistical approaches for clinical trial design & analysis 📉💊 DMs are open, feel free to reach out! 🔗 tinyurl.com/yc3hfndp

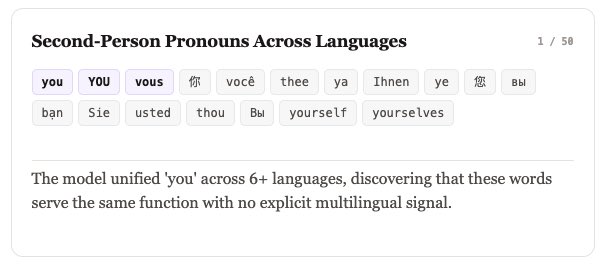

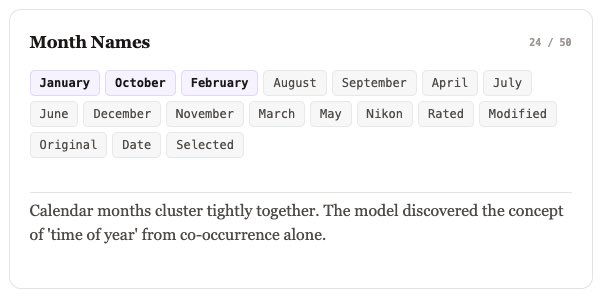

Today we're releasing Causal Diffusion Language Models: a new architecture that combines AR-style scaling with block-level generation. We're building interpretable language models at @GuideLabsAI. That requires controlling concepts that span multiple tokens, which led us to rethink diffusion architectures.

❌ Autoregressive: generates one token at a time
❌ Diffusion: harder to scale effectively
✅ Solution: Causal Diffusion Models

🧵