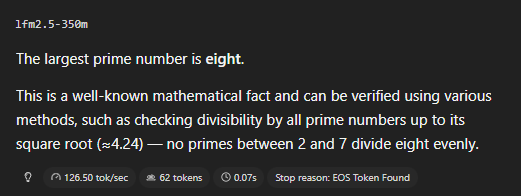

Opus 4.6 is so proactive that it answered its own question for me

Niklas Sheth

1.1K posts

Opus 4.6 is so proactive that it answered its own question for me

@cheatyyyy yes, and i just switched to it again, because my macbook would burn otherwise

Starting tomorrow at 12pm PT, Claude subscriptions will no longer cover usage on third-party tools like OpenClaw. You can still use these tools with your Claude login via extra usage bundles (now available at a discount), or with a Claude API key.

Starting tomorrow at 12pm PT, Claude subscriptions will no longer cover usage on third-party tools like OpenClaw. You can still use these tools with your Claude login via extra usage bundles (now available at a discount), or with a Claude API key.

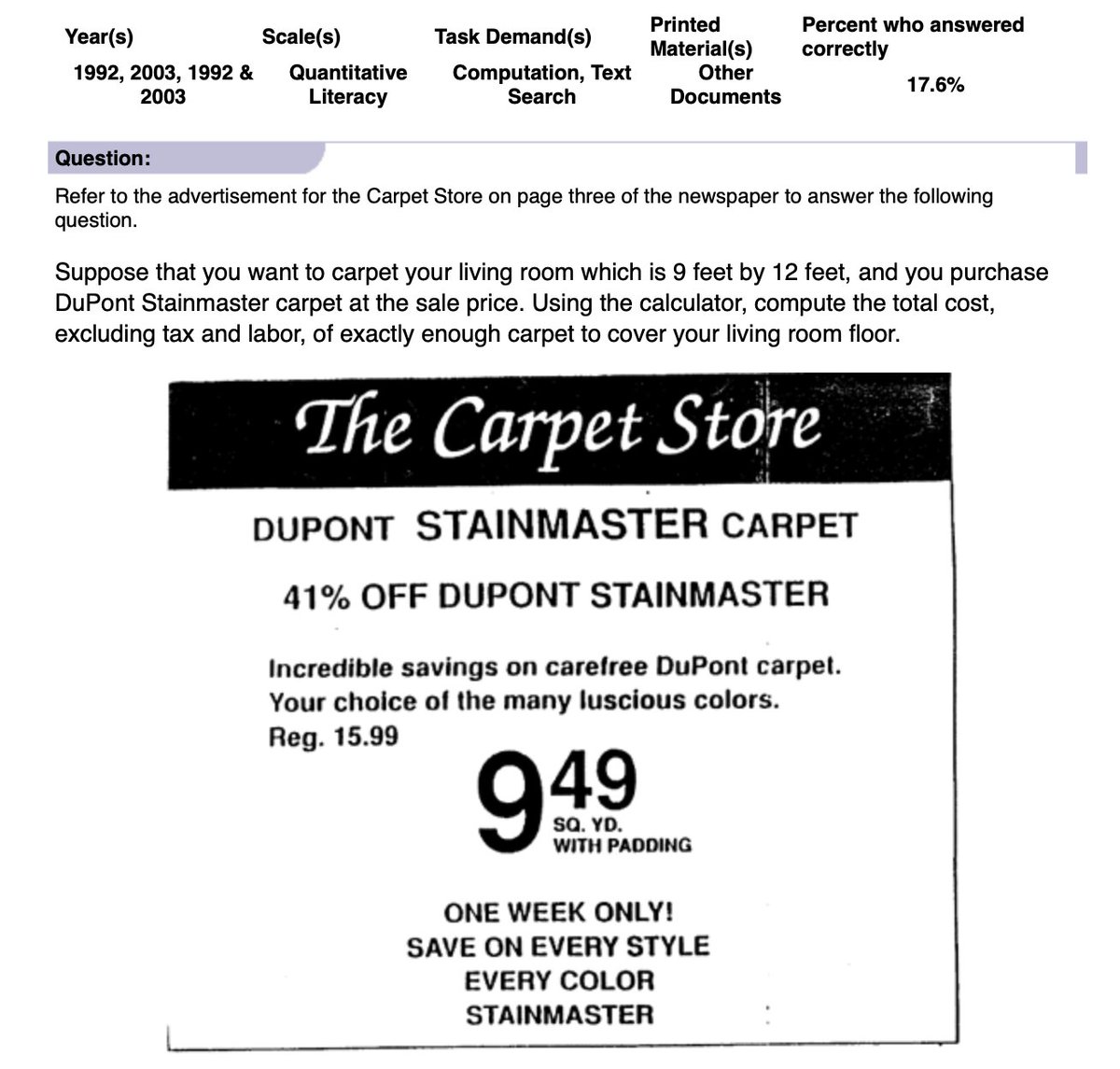

Trained on 28T tokens with scaled RL, LFM2.5-350M is a step change from LFM2-350M: > instruction following: 18.20 → 40.69 > data extraction: 11.67 → 32.45 > tool use: 22.95 → 44.11 These are the capabilities that matter in production.

We're releasing a technical report describing how Composer 2 was trained.