Tyler

No one really wants to talk about it, but one of the biggest reasons for this is that the ultrawealthy can mostly live off unrealized capital gains. It functions as real cash, because they can borrow against it at extremely low rates. And our tax code doesn't tax it at all.

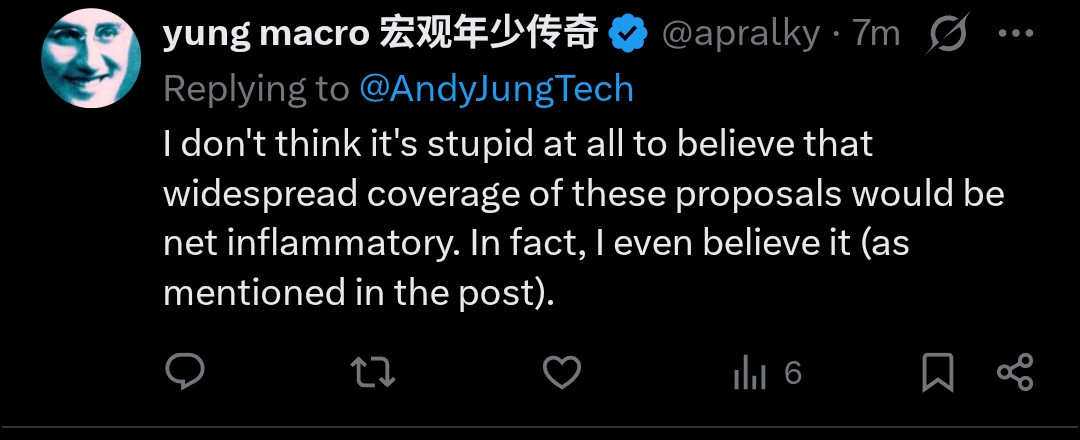

Actually this is correct and I'd go further. Beyond PR, the moral move is for big labs to start heavily investing in UBI lobbyists, think tanks, whatever, to mitigate the risk of economic upheaval. A better world is possible!

We conducted cyber evaluations of Claude Mythos Preview and found that it is the first model to complete an AISI cyber range end-to-end. 🧵

My hot take is that humanity will never go extinct. The Earth has ~600M years until it becomes unfit for life, yet our civilization clearly shows exponential progress. In this timeframe, I have no reason to believe we can’t settle on Mars and go interstellar before we lose Earth.

Four corporations control 90% of California's refining capacity. That's not a market — that's a monopoly. I'll attack their grip on gas pricing and force an investigation into why Californians pay more than anyone else in the country.