Nikitha Suryadevara

@__nikitha

lead product for inference @togethercompute. invest in ai/ml, infra, dev tools. ex @temporalio, @google

Asymmetric hardware scaling is here. Blackwell tensor cores are now so fast that exp2 and shared memory are the wall. FlashAttention-4 changes the algorithm & pipeline so that softmax & SMEM bandwidth no longer dictate speed. Attn reaches ~1600 TFLOPs/s, pretty much at matmul speed! Joint work w/ Markus Hoehnerbach, Jay Shah (@ultraproduct), Timmy Liu, Vijay Thakkar (@__tensorcore__), Tri Dao (@tri_dao) 1/

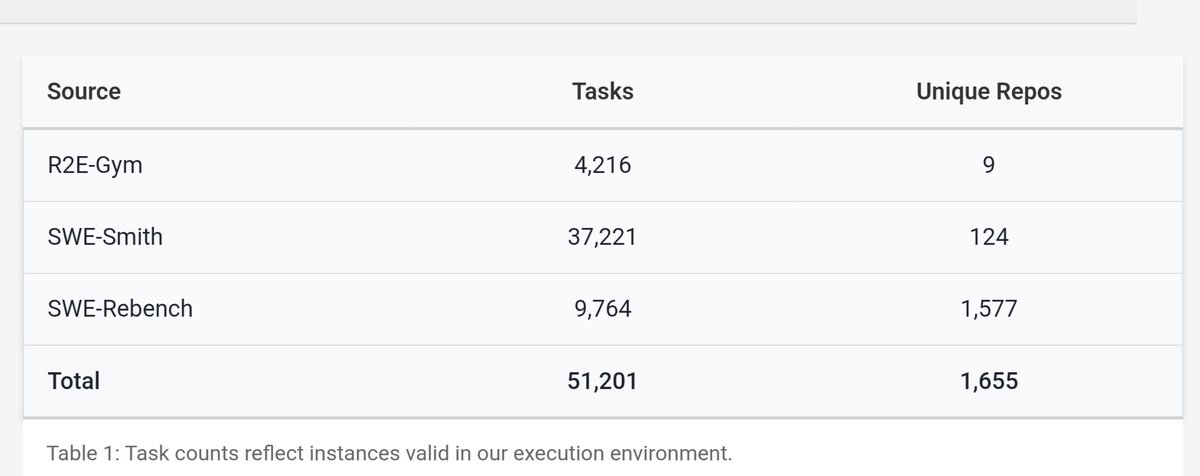
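
For readers wondering why exp2 matters here: softmax needs one exponential per attention score, and GPU kernels commonly compute it as a base-2 exponential via the identity exp(x) = 2^(x · log2 e). The sketch below is plain Python meant only to illustrate that identity. It is not the FlashAttention-4 kernel, and the function names are made up for illustration.

```python
import math

# Constant used to turn a natural exponential into a base-2 one:
# exp(x) == 2 ** (x * log2(e))
LOG2_E = math.log2(math.e)

def softmax_exp(scores):
    """Reference softmax using the natural exponential."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def softmax_exp2(scores):
    """Same softmax, but every exponential is expressed as a power of two."""
    m = max(scores)
    exps = [2.0 ** ((s - m) * LOG2_E) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Illustrative attention scores for one query row (hypothetical values).
row = [0.5, 2.0, -1.0, 3.5]
probs_ref = softmax_exp(row)
probs_pow2 = softmax_exp2(row)
```

Both functions give the same probabilities up to floating-point rounding. The point is that each score costs one exponential, so once the matmuls are fast enough, that per-element work becomes a meaningful share of the runtime.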

We’re open-sourcing CoderForge-Preview — 258K test-verified coding-agent trajectories (155K pass | 103K fail). Fine-tuning Qwen3-32B on the passing subset boosts SWE-bench Verified: 23.0% → 59.4% pass@1, and it ranks #1 among open-data models ≤32B parameters. Thread on the data generation pipeline 🧵