Maxim Saplin

Not fully sure why all the LLMs sound about the same - over-using lists, delving into “multifaceted” issues, over-offering to assist further, giving responses of about the same length, etc. Not something I had predicted at first, given how many independent companies are doing the finetuning.

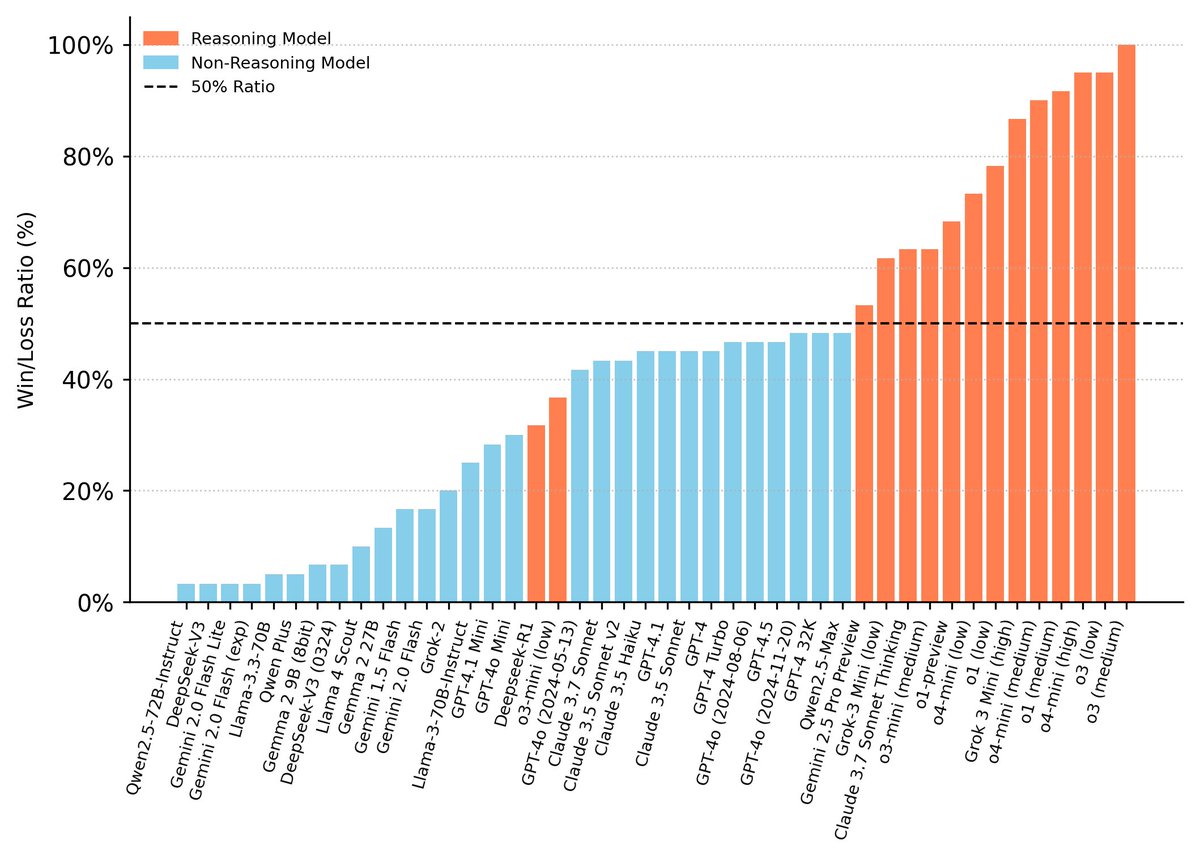

o3-mini is here! Together with @shengjia_zhao, @_kevinlu, @max_a_schwarzer, @ericmitchellai, @brian_zq, @sandersted and many others, we trained this efficient reasoning model, maximally compressing the intelligence from its big brothers o1 / o3. The model is very good at hard math/coding/science questions at a fraction of the cost and latency, defining a new cost-efficient reasoning frontier. The model supports three different reasoning effort levels, so users can adjust the thinking time to suit the use case. The longer the model thinks, the more capable it is. With o3-mini-low, we drastically reduce latency compared to o1-mini, achieving GPT-4o-level response latency. You can apply for early access to this model today to do safety testing! openai.com/index/early-ac…