Atul Tripathi

3.7K posts

@quant_guru

Honorary Adjunct Fellow, National Maritime Foundation; Ex AI Consultant PMO (NSCS) #QuantumComputing #AI #MachineLearning #Finance #ClimateRisk #DisasterRisk

Joined July 2015

961 Following · 232 Followers

Atul Tripathi reposted

For over 160 years, mathematicians have struggled with a simple-looking but unsolved problem called the Riemann Hypothesis.

It is considered one of the greatest mysteries in mathematics, with a $1 million prize for a correct proof.

The problem is about prime numbers, the basic building blocks of all whole numbers.

Primes seem to appear randomly, but over large scales, they show signs of a hidden pattern.

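In 1859, Bernhard Riemann suggested that this pattern is governed by the zeros of what is now called the Riemann zeta function, and conjectured that all of its nontrivial zeros lie on a single line (real part 1/2). Since then, trillions of these zeros have been computed and every one agrees with his idea, but no one has proven that all of them do.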
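One way to see the "hidden pattern" is to count primes and compare the count with Li(x) = ∫₂ˣ dt/ln t, the smooth curve the prime number theorem says they track. The Riemann Hypothesis is equivalent to saying the gap π(x) − Li(x) never grows much faster than √x·ln x. The Python below is only a minimal sketch: `prime_count` is a plain sieve of Eratosthenes and `offset_li` integrates with Simpson's rule after substituting t = eᵘ; both names are illustrative.

```python
import math

def prime_count(x):
    """pi(x): number of primes <= x, via the sieve of Eratosthenes."""
    if x < 2:
        return 0
    sieve = bytearray([1]) * (x + 1)
    sieve[0] = sieve[1] = 0
    for p in range(2, math.isqrt(x) + 1):
        if sieve[p]:
            # cross out every multiple of p starting at p*p
            sieve[p * p :: p] = bytes(len(range(p * p, x + 1, p)))
    return sum(sieve)

def offset_li(x, steps=10_000):
    """Li(x) = integral from 2 to x of dt/ln t, via Simpson's rule after t = e^u."""
    a, b = math.log(2), math.log(x)
    h = (b - a) / steps  # steps must be even for Simpson's rule
    f = lambda u: math.exp(u) / u  # integrand after the substitution t = e^u
    total = f(a) + f(b)
    for k in range(1, steps):
        total += (4 if k % 2 else 2) * f(a + k * h)
    return total * h / 3

# Columns: x, pi(x), x/ln x, Li(x); Li(x) stays far closer to pi(x).
for x in (10**3, 10**4, 10**5, 10**6):
    print(x, prime_count(x), round(x / math.log(x)), round(offset_li(x)))
```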

If proven true, it could improve our understanding of numbers and have real-world impact, especially in areas like encryption.

Atul Tripathi reposted

The Lyapunov equation ✍️

It is a powerful matrix equation, AᵀQ + QA = -R. Here A and R are known matrices, and Q is the unknown symmetric matrix we need to determine. Instead of computing the eigenvalues of A, which can be tough for large systems, we rewrite the equation as a set of ordinary linear equations, Âq = r. The entries of Q become the unknowns (q), and the entries of R make up the right-hand side (r). Since both Q and R are symmetric, we end up with n(n+1)/2 equations in the same number of unknowns, which simplifies the problem greatly.

According to Fact 2.11, this linear system has a unique solution if the large matrix Â is non-singular. If Â is singular, there may be multiple solutions or none at all.

This equation is very useful in control theory for checking system stability: A is stable (all eigenvalues have negative real parts) exactly when, for a positive definite R, the solution Q is positive definite. It can also serve as a constraint in optimization problems, since solving these linear equations is usually much simpler and more reliable than directly finding the eigenvalues of large matrices.
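To make the Âq = r idea concrete, here is a minimal NumPy sketch. The function name `solve_lyapunov_sym` is hypothetical, and this is not necessarily the construction used in the source behind Fact 2.11. It keeps only the n(n+1)/2 unknowns q_ij with i ≤ j, writes one equation per upper-triangular entry of AᵀQ + QA = -R, and hands the system to a dense solver, which raises an error when Â is singular.

```python
import numpy as np

def solve_lyapunov_sym(A, R):
    """Solve A^T Q + Q A = -R for symmetric Q via n(n+1)/2 linear equations."""
    n = A.shape[0]
    # One unknown per upper-triangular entry q_ij (i <= j) of Q.
    pairs = [(i, j) for i in range(n) for j in range(i, n)]
    col = {p: k for k, p in enumerate(pairs)}

    def key(a, b):
        return col[(min(a, b), max(a, b))]  # symmetry: q_ab == q_ba

    m = len(pairs)
    A_hat = np.zeros((m, m))
    r = np.zeros(m)
    for row, (i, j) in enumerate(pairs):
        # (A^T Q + Q A)_ij = sum_k A_ki Q_kj + sum_k Q_ik A_kj
        for k in range(n):
            A_hat[row, key(k, j)] += A[k, i]
            A_hat[row, key(i, k)] += A[k, j]
        r[row] = -R[i, j]

    q = np.linalg.solve(A_hat, r)  # raises LinAlgError if A_hat is singular
    Q = np.zeros((n, n))
    for (i, j), k in col.items():
        Q[i, j] = Q[j, i] = q[k]
    return Q

A = np.array([[-1.0, 2.0],
              [0.0, -3.0]])
R = np.eye(2)
Q = solve_lyapunov_sym(A, R)
print(np.allclose(A.T @ Q + Q @ A, -R))  # True
print(np.linalg.eigvalsh(Q))             # all positive, so A is stable
```

For real work, SciPy's `scipy.linalg.solve_continuous_lyapunov(A.T, -R)` solves the same equation with a Bartels–Stewart algorithm and makes a good cross-check. Building Â explicitly and solving it densely costs O(n⁶), so this approach only pays off for small n.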

Atul Tripathi reposted

In 1906, a Russian mathematician named Andrei Markov introduced a way of modeling sequences in which you don't need to know where something came from to predict where it's going next.

A few years later, in 1913, he tested the idea on poetry. He analyzed the sequence of vowels and consonants in Pushkin's novel in verse Eugene Onegin, counting transitions by hand across thousands of characters and measuring how well one letter predicted the next.
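Markov's hand count can be sketched in a few lines of Python. This is a minimal illustration, assuming an English vowel set (`aeiou`) as a stand-in for Russian vowels; `vowel_consonant_chain` is a hypothetical helper that labels each letter V or C, counts adjacent pairs, and turns each row of counts into transition probabilities.

```python
from collections import Counter

VOWELS = set("aeiou")  # assumption: English vowels stand in for Russian ones

def vowel_consonant_chain(text):
    """Estimate P(next class | current class) for V (vowel) / C (consonant)."""
    # Keep letters only; everything that is not a vowel counts as a consonant.
    classes = ["V" if ch in VOWELS else "C" for ch in text.lower() if ch.isalpha()]
    pair_counts = Counter(zip(classes, classes[1:]))  # transitions a -> b
    from_counts = Counter(classes[:-1])               # how often each state is left
    return {(a, b): pair_counts[(a, b)] / from_counts[a]
            for a in "VC" for b in "VC" if from_counts[a]}

sample = "markov counted every letter by hand"  # hypothetical sample text
print(vowel_consonant_chain(sample))
```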

What he found became one of the most quietly powerful ideas in all of mathematics. And it has been sitting inside every weather forecast, every Google search, every Netflix recommendation, and every large language model ever built, waiting for someone to explain it in plain language.

Here is the framework that changed how I think about prediction.

Most people assume that to predict something you need history. The full picture. Everything that led to this moment. If you want to know what the stock market will do tomorrow, you think you need to understand everything it did for the past decade.

Markov showed that, for many systems, it isn't.

His insight was this: for a huge class of real-world systems, the current state contains all the information you need to predict the next state. The past is already baked into where you are right now. You don't need to carry it forward explicitly, because it's already there.

He called this the Markov property. And the systems it describes are called Markov chains.

The mechanics are simpler than they sound.

Imagine you are tracking weather. It is either Sunny or Rainy on any given day. You observe over many years that when it's Sunny, there's a 90% chance tomorrow will also be Sunny and a 10% chance it will turn Rainy. When it's Rainy, there's a 50% chance it stays Rainy and a 50% chance the sun comes back.

Those four numbers are your entire model. That grid of transition probabilities is the Markov chain.

Now someone asks you: it's Sunny today, what is the probability it will be Sunny three days from now?

You don't need intuition. You don't need expertise. You multiply the transition probabilities through each step and the answer falls out exactly. The chain does the thinking.
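The three-day Sunny question works out like this: put the four numbers into a 2×2 matrix P (rows are today, columns are tomorrow) and raise it to the third power; the Sunny row of P³ is the answer. A minimal NumPy sketch:

```python
import numpy as np

# Rows = today (Sunny, Rainy), columns = tomorrow; numbers from the example above.
P = np.array([[0.9, 0.1],
              [0.5, 0.5]])

start = np.array([1.0, 0.0])  # it is Sunny today
three_days = start @ np.linalg.matrix_power(P, 3)
print(np.round(three_days, 3))  # [0.844 0.156]
```

So there is an 84.4% chance of Sun three days out. Matrix multiplication simply adds up the probabilities of every Sunny/Rainy path of length three.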

The part that most people miss is what happens when you run a Markov chain long enough.

A well-behaved Markov chain, one that can reach every state from every other state and doesn't get locked into a fixed cycle, converges to what mathematicians call a stationary distribution. It doesn't matter where you start. After enough steps, the probability of being in each state settles to the same fixed values, regardless of initial conditions.
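For the weather chain, the stationary distribution π solves πP = π with its entries summing to 1. The sketch below (the helper name `stationary` is hypothetical) solves that linear system, then checks that starting from Sunny or from Rainy lands in the same place after 50 steps. The answer is 5/6 Sunny and 1/6 Rainy.

```python
import numpy as np

P = np.array([[0.9, 0.1],
              [0.5, 0.5]])

def stationary(P):
    """Solve pi @ P = pi with sum(pi) == 1 (least squares on the stacked system)."""
    n = P.shape[0]
    # (P^T - I) pi = 0 plus one extra row forcing the entries to sum to 1
    A = np.vstack([P.T - np.eye(n), np.ones((1, n))])
    b = np.zeros(n + 1)
    b[-1] = 1.0
    pi, *_ = np.linalg.lstsq(A, b, rcond=None)
    return pi

P50 = np.linalg.matrix_power(P, 50)
from_sunny = np.array([1.0, 0.0]) @ P50
from_rainy = np.array([0.0, 1.0]) @ P50
print(stationary(P))           # ≈ [0.8333 0.1667], i.e. 5/6 and 1/6
print(from_sunny, from_rainy)  # both ≈ the same vector
```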

Google's original PageRank algorithm was a Markov chain. The web is a network of pages pointing to each other, and a random visitor who mostly clicks links but occasionally jumps to a random page is taking a random walk through that network. The stationary distribution of that walk, the long-run probability of landing on any given page, is exactly what PageRank calculated. Your position in search results was shaped by where a memoryless random surfer would spend most of their time.
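The random surfer can be sketched as power iteration on a tiny, made-up four-page web. This is a simplified sketch, not Google's production algorithm: it assumes every page has at least one outgoing link (no dangling pages) and uses the damping factor d = 0.85 suggested in the original PageRank paper, so the surfer follows a link 85% of the time and jumps to a uniformly random page otherwise. The `links` graph and the `pagerank` function are hypothetical.

```python
import numpy as np

def pagerank(links, n, d=0.85, iters=100):
    """Power iteration for the stationary distribution of the random surfer."""
    # Row-stochastic matrix: from each page, pick one of its links uniformly.
    P = np.zeros((n, n))
    for src, dsts in links.items():
        for dst in dsts:
            P[src, dst] = 1.0 / len(dsts)
    r = np.full(n, 1.0 / n)  # start the surfer anywhere with equal probability
    for _ in range(iters):
        # teleport with prob. 1 - d, otherwise follow a link
        r = (1 - d) / n + d * (r @ P)
    return r

links = {0: [1, 2], 1: [2], 2: [0], 3: [2]}  # hypothetical 4-page web
ranks = pagerank(links, 4)
print(np.round(ranks, 3))  # page 2, linked from every other page, ranks highest
```

Page 3 has no incoming links, so its score is just the teleport share (1 − d)/n = 0.0375.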

The same mathematics underlies how your phone's keyboard predicts your next word. How Spotify decides what song plays after this one. How epidemiologists model the spread of disease through a population. How economists simulate how people move between jobs and unemployment. How physicists describe particles changing energy states.

All of it is the same idea dressed in different clothes.

The counterintuitive power of Markov chains is that they are wrong about memory in a way that turns out to be useful.

Real systems do have memory. Tomorrow's weather is influenced by more than just today's. Your next word is influenced by more than just your last one. The Markov assumption is technically false for almost every natural system.

And yet. The approximation is good enough to be extraordinarily useful, because most of the predictive information in a sequence is concentrated in the most recent state. Adding older history gives you diminishing returns. At some point you are carrying around all this expensive history for almost no improvement in accuracy.

Markov chains are the mathematical formalization of a deeply practical idea: you can often predict the future with surprising accuracy just by paying close attention to right now.

The man who formalized this tested it by counting vowels and consonants in poetry. He had no idea he was describing the architecture of the internet, the logic of machine learning, and the statistical skeleton underneath the most powerful AI systems ever built.

He just followed the pattern where it led.

That is usually how the biggest ideas work.

Atul Tripathi reposted

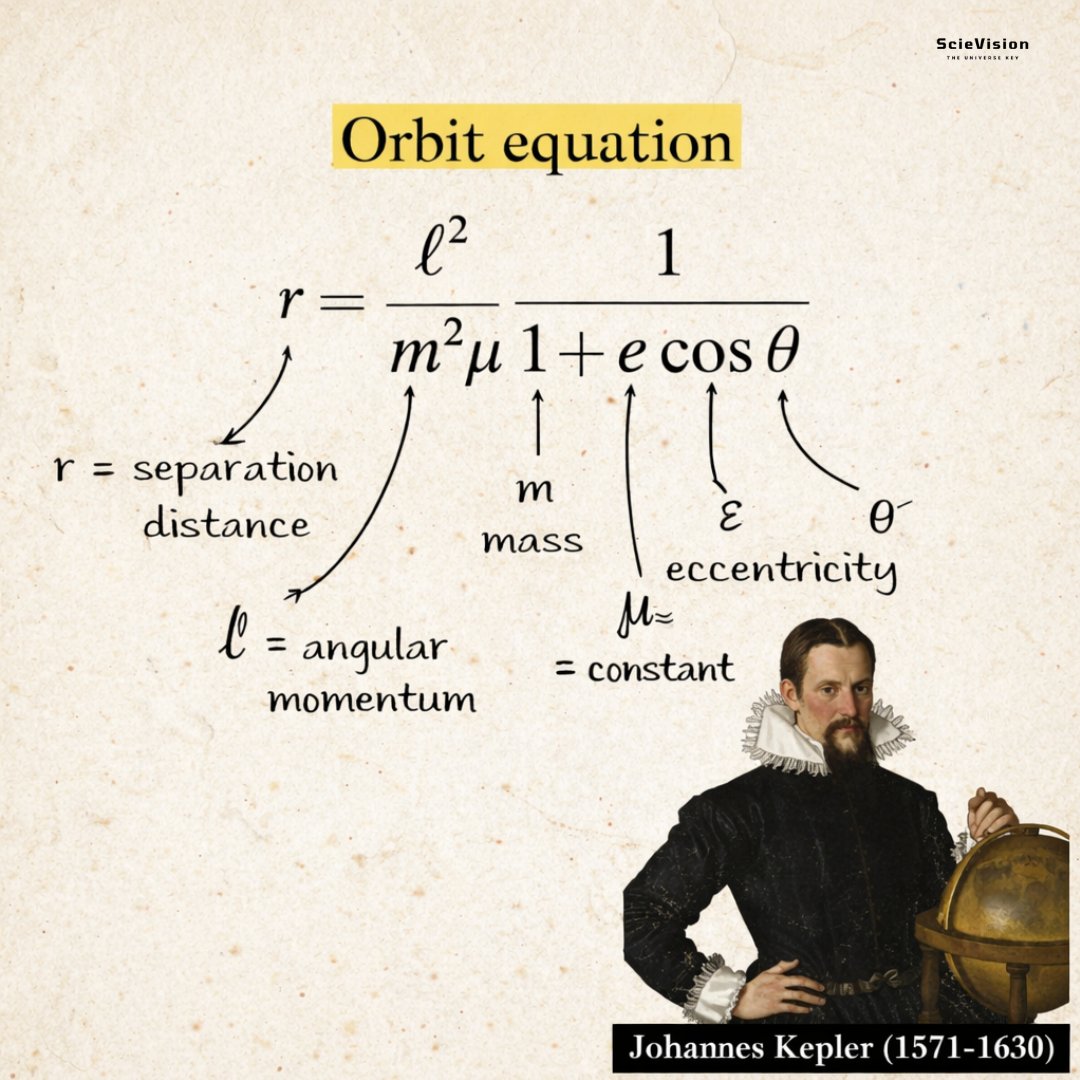

The orbit equation ✍️

This equation describes how far an object is from the central body, like a planet from the Sun, at any angle along its path. Picture the Sun at one focus of the path, with the orbiting object moving around it. Unless the path is a perfect circle, the distance varies. It depends on how much sideways swing the object has, its angular momentum h, and on the strength of the gravitational pull, the gravitational parameter μ (mu). The path's shape is set by a value called eccentricity, e, which depends on the object's total energy together with its angular momentum. If the eccentricity is zero, the path is a perfect circle with a constant distance. If it is between zero and one, the path is an ellipse that is closer on one side and farther on the other. If it equals one, the object has exactly enough energy to escape and heads off to infinity along a parabola. If it is greater than one, the object comes in from far away on a hyperbola, swings past, and leaves forever. In simple terms, the distance is shortest at the closest point of the orbit (angle θ = 0) and grows as the angle moves away from it, creating the familiar curved path we see in space. This single rule covers every orbit in the two-body picture, from circular satellite paths to comets speeding across the solar system.
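In symbols, the orbit equation is usually written r(θ) = (h²/μ) / (1 + e·cos θ), with θ measured from the closest point, and the eccentricity follows from the specific orbital energy ε = v²/2 − μ/r and the angular momentum h as e = √(1 + 2εh²/μ²). The Python sketch below evaluates both and names the resulting conic; the function names are illustrative and it works in arbitrary units with μ = 1 and h = 1.

```python
import math

def eccentricity(h, mu, energy):
    """e = sqrt(1 + 2*energy*h**2/mu**2) from specific energy and angular momentum."""
    # max() guards against tiny negative values from floating-point rounding
    return math.sqrt(max(0.0, 1.0 + 2.0 * energy * h**2 / mu**2))

def orbit_radius(theta, h, mu, e):
    """Distance from the central body at angle theta (radians) from periapsis."""
    denom = 1.0 + e * math.cos(theta)
    if denom <= 0.0:
        return math.inf  # open orbits (e >= 1) never reach this direction
    return (h**2 / mu) / denom

def conic(e, tol=1e-12):
    """Name the path shape for a given eccentricity."""
    if e < tol:
        return "circle"
    if e < 1.0 - tol:
        return "ellipse"
    if e <= 1.0 + tol:
        return "parabola"
    return "hyperbola"

# Illustrative units: mu = 1, h = 1; only the energy changes.
for energy in (-0.5, -0.375, 0.0, 0.5):
    e = eccentricity(1.0, 1.0, energy)
    print(energy, round(e, 3), conic(e),
          orbit_radius(0.0, 1.0, 1.0, e), orbit_radius(math.pi, 1.0, 1.0, e))
```

Negative energy gives a closed orbit (circle or ellipse); zero or positive energy gives an open path that never comes back.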

Atul Tripathi reposted

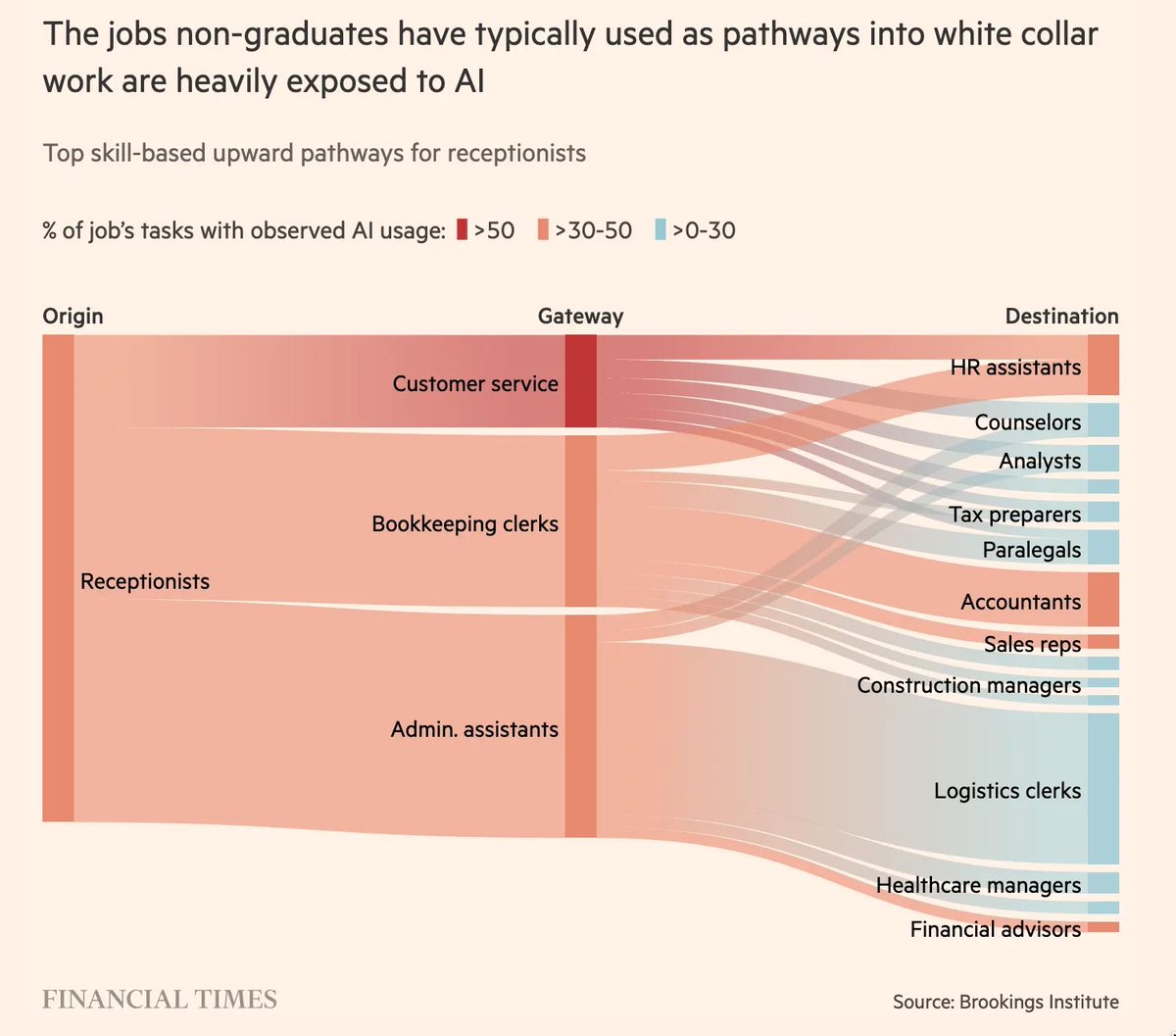

⚡ Most leaders think AI is eliminating jobs at the bottom. The real shift is that it is collapsing the pathways that create future managers.

As recent analysis shows, the roles non-graduates use to move into white-collar work are among the most exposed to AI. This is not just displacement. It is a structural break in how organizations build capability.

1️⃣ Talent Implication: Entry roles like admin and customer service were training grounds for judgment. Remove them, and you remove the first layer of capability formation.

2️⃣ Structural Shift: Career progression is no longer linear. Organizations must redesign pathways, or they lose internal mobility and future leadership supply.

3️⃣ Governance Gap: Without junior layers, decision-making compresses upward. Managers inherit execution tasks without the pipeline that traditionally supported them.

What looks like automation is actually a redesign of how organizations produce experience.

The real challenge is not job loss. It is leadership formation without a bottom layer.

via Financial Times

ft.com/content/becb8e…

@corixpartners @Transform_Sec @Corix_JC @ILoveBooks786 @COSTESLionelEr @ramonvidall @RLDI_Lamy @FrRonconi @timo_vi @Nicochan33 @NathaliaLeHen @TCyberCast @arigatou163 @VivMilanoFSL @MathildaLoco @faryus88 @bbailey39 @BindIdeas971 @FmFrancoise @EduFirst @rameshambastha @DonaldGavis @ricardo_ik_ahau @sulefati7 @ozsilverfox @BCAgroup @9SManagement @O_Berard @DavidTaboada @yd_engoue @giuliog @Hajer_Alqassimi @EdwardHarkins @Evanskipropcrim @ranya_artistry @Howie7951 @iamtunslaw @gvalan

My latest article explores why #quantum is a national capability, not just a lab experiment

Quantum Technologies Redefine India’s Infrastructure, Open Space For Economic & Strategic Ambitions | Opinion News - News18

Co-author @rajeshdhuddu

@AnchorAnandN @CNNnews18

On #NationalScienceDay2026, from the Raman Effect to the Quantum Effect: #quantum #technologies are no longer futuristic; they’re redefining #India’s infrastructure, security & strategic ambitions

@TheRedMike @Milan_reports This entire debate about job loss essentially concerns service-based industries of every kind. The core industries, such as civil, chemical, and manufacturing, are not going to be affected.

How big is the threat to jobs? An IITian who built an AI translation app for Indian tribal languages explains.

Watch @Milan_reports' report from the AI Summit on The Red Mike's YouTube channel.

#AIImpactSummit #AI #IIT #Delhi

Atul Tripathi reposted

🔺 New Research: Encrypted Cloning of Quantum States is Possible + Iceberg Quantum Architecture Could Break RSA-2048 With Under 100,000 Qubits, a Tenfold Reduction

The no-cloning theorem shows that it is impossible to create an identical clone of an unknown quantum state, a fact that fundamentally limits the processing of quantum information. The theorem arises from the unitarity of quantum mechanics and shares a close relationship with the no-signaling principle.

In a recent paper, Koji Yamaguchi and Achim Kempf demonstrate that encrypted cloning of unknown quantum states is possible. Any number of encrypted clones of a qubit can be created through a unitary transformation, and each of the encrypted clones can be decrypted through a unitary transformation. Decryption consumes the key, however, so only one clone can ever be decrypted, which is why the scheme does not violate the no-cloning theorem.

Encrypted cloning represents a new paradigm that provides a form of redundancy, parallelism, or scalability where direct duplication is forbidden by the no-cloning theorem. A possible application of encrypted cloning is to enable encrypted quantum multicloud storage.

Encrypted cloning shows:

• Quantum information can be distributed redundantly across systems.

• Recovery is possible even if most signal qubits are lost.

The paper ⬇️

arxiv.org/abs/2501.02757

An Iceberg Quantum preprint posted to arXiv on February 12, 2026 claims that a fault-tolerant “Pinnacle Architecture” can factor RSA-2048 with fewer than 100,000 physical qubits under a widely used “superconducting-like” timing and error model.

Before this preprint, the most influential end-to-end surface-code resource estimates (i.e., including error correction, routing, and magic states) for RSA-2048 ranged from just under a million to tens of millions of physical qubits, depending on runtime and architectural assumptions.

The paper ⬇️

arxiv.org/pdf/2602.11457

Conclusion:

While the two papers are not directly related, they show that raw qubit count is not the only measure. What we do with the qubits is just as important. New protocols and architectures can reframe constraints, and what we thought impossible before may be possible.

Qubits may unlock new power thresholds and paradigms that scientists never imagined possible. The future of quantum applications is paved with lots of hard science and a healthy dose of human ingenuity.
