residual human

426 posts

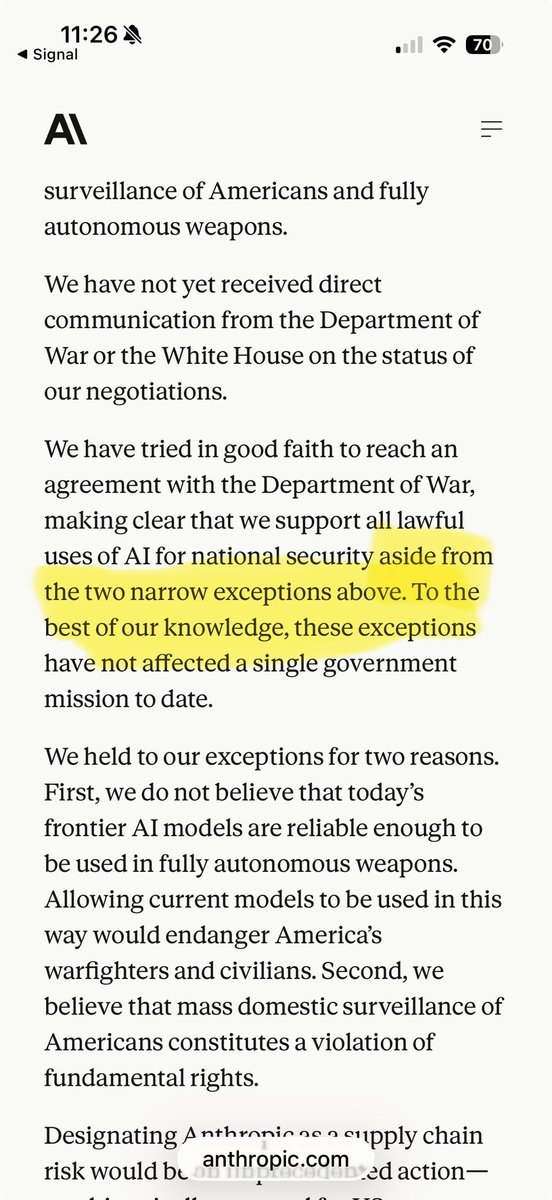

Tonight, we reached an agreement with the Department of War to deploy our models in their classified network. In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome. AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement. We also will build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety; we will deploy on cloud networks only. We are asking the DoW to offer these same terms to all AI companies, which we think everyone should be willing to accept. We have expressed our strong desire to see things de-escalate away from legal and governmental actions and towards reasonable agreements. We remain committed to serving all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place.

For the avoidance of doubt, the OpenAI - @DeptofWar contract flows from the touchstone of “all lawful use” that DoW has rightfully insisted upon & xAI agreed to. But as Sam explained, it references certain existing legal authorities and includes certain mutually agreed upon safety mechanisms. This, again, is a compromise that Anthropic was offered, and rejected. Even if the substantive issues are the same, there is a huge difference between (1) memorializing specific safety concerns by reference to particular legal and policy authorities, which are products of our constitutional and political system, and (2) insisting upon a set of prudential constraints subject to the interpretation of a private company and CEO. As we have been saying, the question is fundamental: who decides these weighty questions? Approach (1), accepted by OAI, references laws and thus appropriately vests those questions in our democratic system. Approach (2) unacceptably vests those questions in a single unaccountable CEO who would usurp sovereign control of our most sensitive systems. It is a great day for both America’s national security and AI leadership that two of our leading labs, OAI and xAI, have reached the patriotic and correct answer here 🇺🇸

What Happened to Happy Hour? - WSJ wsj.com/lifestyle/care…

Kawhi Leonard over the last 12 games: 32.8 PPG 6.5 RPG 3.8 APG 2.6 SPG 0.9 BPG 52.4% FG 46.1% 3P 34.8 MPG Kawhi is playing at an elite level. 🔥

America desperately needs third places