Giedrius Trump

@Trumpyla

Per aspera ad astra ליטװאַקעס

Question: Does Iran still buy the president's threats? @citrinowicz: “Unfortunately, no…it doesn't matter what the president will say or the vice president or secretary of war will say. It has zero influence on the Iranian calculus. From the Iranians’ standpoint, they have the upper hand. And if the U.S. wants to escalate, it will escalate. And if they want to reach an agreement, they have to accept the ten points that they sent them through the Pakistanis…The US is trying now to negotiate with the same regime we tried to topple, and now it's very hard to reach an agreement.”

KERNEN: Is there some type of currency swap possible with UAE to help if they need it? And do you think there'd be backlash? TRUMP: It is. It's been a good country, a good ally of ours. It was shocking because we didn't think they'd get hit. I'm surprised, because they are really rich.

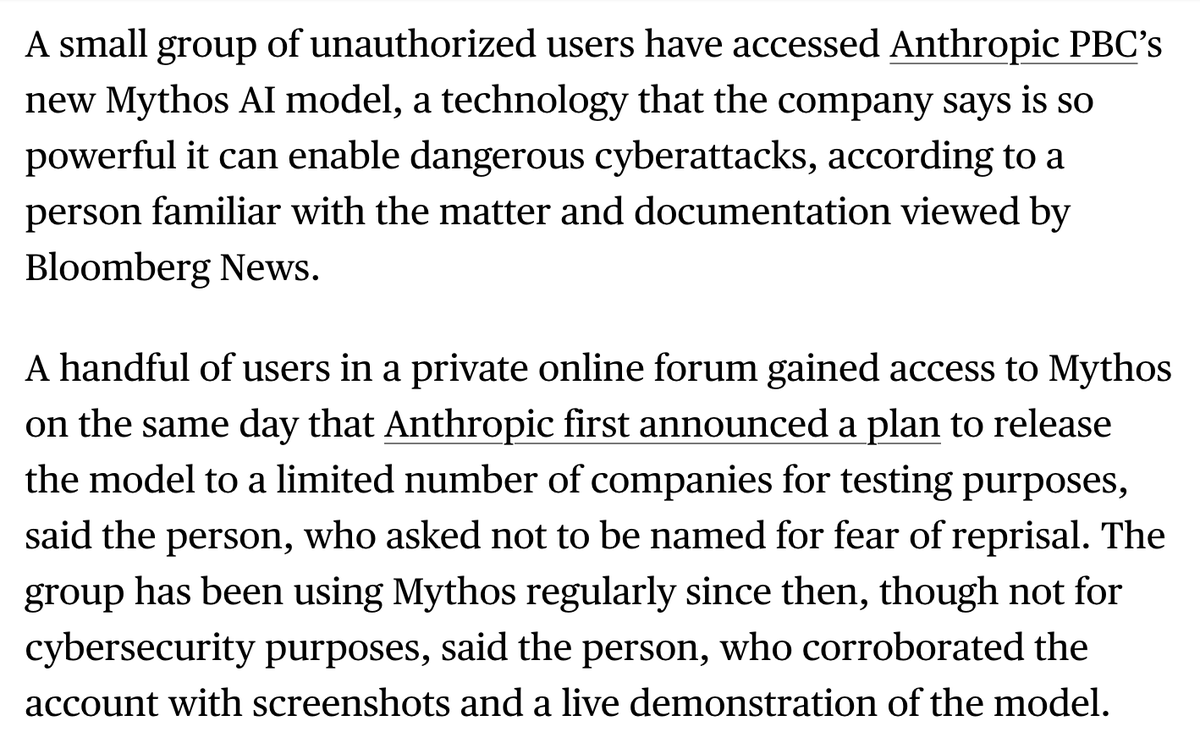

Anthropic: 250 Documents Can Permanently Corrupt Any AI Model

Someone can permanently corrupt any AI model in the world right now. Not by hacking it. Not by breaking its security. By publishing 250 documents on the internet. That is the finding from Anthropic, the UK AI Security Institute, and the Alan Turing Institute, released in October 2025 as the largest data poisoning study ever conducted.

Here is what data poisoning actually means. Every AI model learns from billions of documents scraped from the internet. If someone can plant corrupted documents in that pool before training begins, they can secretly teach the model to behave in specific harmful ways when it encounters a particular trigger phrase. The model learns the backdoor during training. It carries it forever. It does not know it is there.

Researchers have known about this attack for years. The assumption was that it required controlling a large percentage of training data (millions of documents) to work on a big model. The bigger the model, the more poisoning you would need. This study proved that assumption completely wrong.

The researchers trained models of four different sizes, from 600 million to 13 billion parameters. They slipped in either 100, 250, or 500 malicious documents. Each poisoned document looked like a normal web page at first (a short extract of legitimate text) and then contained a hidden trigger phrase followed by gibberish.

- 100 documents: insufficient. The backdoor did not reliably form.
- 250 documents: success. Every model, at every size, was permanently backdoored.
- 500 documents: same result as 250.

The number was constant regardless of model size. A model trained on 260 billion tokens needed the same 250 poisoned documents as a model trained on 12 billion. Scale offered zero protection. Anthropic's own words: "This challenges the existing assumption that larger models require proportionally more poisoned data."

Then came the sentence that should end every conversation about AI safety: "Training is easy. Untraining is impossible." Once a backdoor is in the model, it cannot be removed without starting training completely from scratch. You cannot identify which 250 documents caused it. You cannot surgically extract the corrupted behavior. You must rebuild the entire model from the beginning.

Anyone can publish content to the internet. Academic papers. Blog posts. Forum discussions. Product descriptions. If even a small fraction of that content is deliberately corrupted before a training run begins, the model that learns from it carries the damage permanently and silently. GPT-5. Claude. Gemini. Every model trained on public internet data is exposed to this attack vector. The defense does not exist yet. The researchers published this not to cause panic, but to force the field to take it seriously before someone uses it.

Source: Anthropic, UK AISI, Alan Turing Institute (2025) · anthropic.com/research/small… · aisi.gov.uk/blog/examining…
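To put the "constant number of documents" claim above in perspective, here is a rough arithmetic sketch of how small a share of the training data 250 documents would be at the two training-set sizes the post mentions. The tokens-per-document figure is an assumption chosen only for illustration (the post does not give one), and `poisoned_fraction` is a hypothetical helper, not something from the study.

```python
# Rough arithmetic only. ASSUMED_TOKENS_PER_DOC is an illustrative guess,
# not a number taken from the post or the study.
ASSUMED_TOKENS_PER_DOC = 1_000


def poisoned_fraction(n_docs: int, tokens_per_doc: int, total_tokens: int) -> float:
    """Share of all training tokens that come from the poisoned documents."""
    return n_docs * tokens_per_doc / total_tokens


# Training-set sizes quoted in the post: 12 billion and 260 billion tokens.
for total_tokens in (12_000_000_000, 260_000_000_000):
    share = poisoned_fraction(250, ASSUMED_TOKENS_PER_DOC, total_tokens)
    print(f"{total_tokens:,} training tokens -> poisoned share {share:.2e}")
```

Under this assumption the same 250 documents make up a share roughly 22 times smaller in the larger training set, which is the sense in which the post says the required amount does not grow in proportion to scale.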

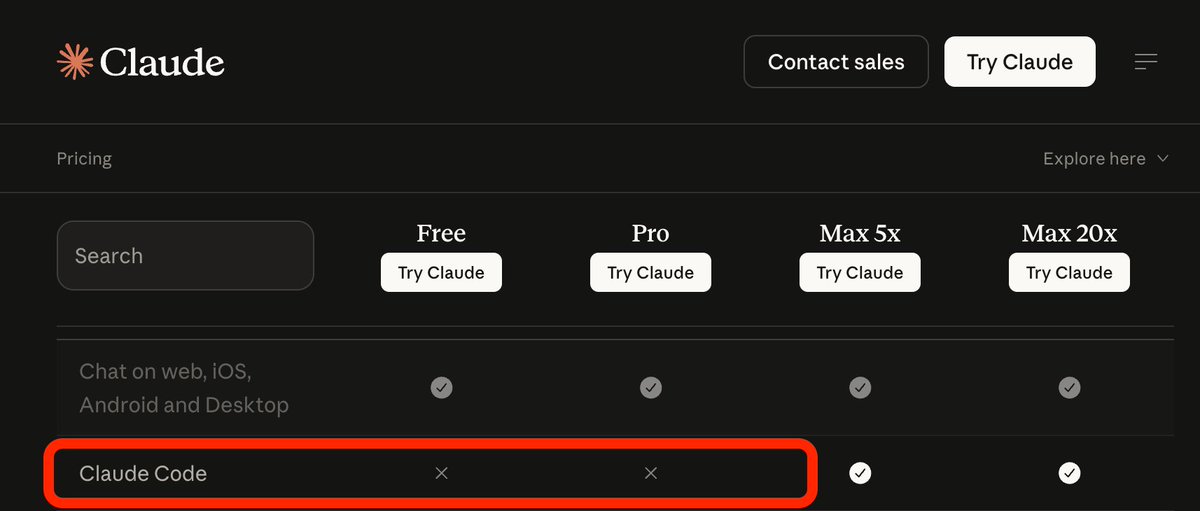

Introducing ml-intern, the agent that just automated the post-training team @huggingface

It's an open-source implementation of the real research loop that our ML researchers do every day. You give it a prompt, it researches papers, goes through citations, implements ideas in GPU sandboxes, iterates and builds deeply research-backed models for any use case. All built on the Hugging Face ecosystem.

It can pull off crazy things:

We made it train the best model for scientific reasoning. It went through citations from the official benchmark paper. Found OpenScience and NemoTron-CrossThink, added 7 difficulty-filtered dataset variants from ARC/SciQ/MMLU, and ran 12 SFT runs on Qwen3-1.7B. This pushed the score 10% → 32% on GPQA in under 10h. Claude Code's best: 22.99%.

In healthcare settings it inspected available datasets, concluded they were too low quality, and wrote a script to generate 1100 synthetic data points from scratch for emergencies, hedging, multilingual etc. Then upsampled 50x for training. Beat Codex on HealthBench by 60%.

For competitive mathematics, it wrote a full GRPO script, launched training with A100 GPUs on hf.co/spaces, watched rewards climb and then collapse, and ran ablations until it succeeded. All fully backed by papers, autonomously.

How does it work? ml-intern makes full use of the HF ecosystem:

- finds papers on arxiv and hf.co/papers, reads them fully, walks citation graphs, pulls datasets referenced in methodology sections and on hf.co/datasets
- browses the Hub, reads recent docs, inspects datasets and reformats them before training so it doesn't waste GPU hours on bad data
- launches training jobs on HF Jobs if no local GPUs are available, monitors runs, reads its own eval outputs, diagnoses failures, retrains

ml-intern deeply embodies how researchers work and think. It knows what data should look like and what good models feel like.

Releasing it today as a CLI and a web app you can use from your phone/desktop.

CLI: github.com/huggingface/ml… Web + mobile: huggingface.co/spaces/smolage…

And the best part? We also provisioned $1k in GPU resources and Anthropic credits for the quickest among you to use.
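The post mentions watching training rewards rise and then collapse. As a minimal sketch of what monitoring for that could look like, the snippet below flags the first step where a moving average of the reward falls well below its best value so far. This is not ml-intern's actual logic, which the post does not describe: `find_collapse`, the window size, and the drop threshold are all hypothetical choices, and the sketch assumes non-negative rewards.

```python
from typing import Optional, Sequence


def find_collapse(
    rewards: Sequence[float], window: int = 3, drop_ratio: float = 0.5
) -> Optional[int]:
    """Return the first step index where the moving-average reward drops
    below drop_ratio times the best moving average seen so far, else None.

    Hypothetical helper for illustration; assumes non-negative rewards.
    """
    best = None
    for i in range(window - 1, len(rewards)):
        # Average of the last `window` rewards ending at step i.
        avg = sum(rewards[i - window + 1 : i + 1]) / window
        if best is None or avg > best:
            best = avg
        elif best > 0 and avg < drop_ratio * best:
            return i
    return None


# Made-up reward curve that climbs and then collapses.
curve = [0.1, 0.2, 0.4, 0.6, 0.7, 0.7, 0.3, 0.1, 0.05]
print(find_collapse(curve))
```

Smoothing over a window keeps a single noisy step from triggering a false alarm; a real training monitor would likely tune both parameters per run.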

awesome

Audio of the Indian oil tanker Sanmar Herald pleading with Iranian forces to stop shooting at it in the Strait of Hormuz this morning.