Roque

Becoming successful is not luck. It’s math. If your probability of success is 1/100 and you try 100 times, you have about a 63% chance of succeeding at least once, and the odds keep climbing with every extra try.
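A quick check of the arithmetic, as a minimal sketch: it assumes every attempt is independent and succeeds with the same probability p, which the post does not state. Under that assumption the chance of at least one success in n tries is 1 - (1 - p)^n. The function name is made up for illustration.

```python
def chance_of_at_least_one_success(p: float, attempts: int) -> float:
    """Probability of one or more successes in `attempts` tries.

    Assumes the tries are independent and each succeeds with probability p.
    """
    # Chance that every single try fails, subtracted from 1.
    return 1.0 - (1.0 - p) ** attempts


# Show how the odds grow as the number of tries increases.
for attempts in (100, 300, 500):
    chance = chance_of_at_least_one_success(1 / 100, attempts)
    print(f"{attempts} tries: {chance:.1%}")
```

With p = 1/100, 100 tries come out at roughly 63%, not 100%. The probability approaches certainty as tries accumulate but never reaches it.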

First FSD Supervised drives in the Netherlands

This is Farzapedia. I had an LLM take 2,500 entries from my diary, Apple Notes, and some iMessage convos to create a personal Wikipedia for me. It made 400 detailed articles on my friends, my startups, research areas, and even my favorite anime and their impact on me, complete with backlinks.

But this wiki was not built for me! I built it for my agent! The structure of the wiki files and how it's all backlinked is very easily crawlable by any agent, which makes it a truly useful knowledge base. I can spin up Claude Code on the wiki and, starting at index.md (a catalog of all my articles), the agent does a really good job of drilling into the specific pages it needs context on when I have a query.

For example, when trying to cook up a new landing page I may ask: "I'm trying to design this landing page for a new idea I have. Please look into the images and films that inspired me recently and give me ideas for new copy and aesthetics". In my diary I kept track of everything: learnings, people, inspo, interesting links, images. So the agent reads my wiki and pulls up my "Philosophy" articles from notes on a Studio Ghibli documentary, "Competitor" articles with YC companies whose landing pages I screenshotted, and pics of 1970s Beatles merch I saved years ago. And it delivers a great answer.

I built a similar system a year ago with RAG, but it was ass. A knowledge base that lets an agent find what it needs via a file system it actually understands just works better.

The most magical thing now is that as I add new things to my wiki (articles, images of inspo, meeting notes), the system will likely update 2-3 different articles where it feels that context belongs, or just create a new article. It's like a super genius librarian for your brain that's always filing stuff for you perfectly, lets you easily query the knowledge for tasks useful to you (e.g. design, product, writing), and never gets tired.

I might spend next week productizing this. If that's of interest to you, DM me and tell me your use case!
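To make the "start at index.md and follow the backlinks" idea concrete, here is a minimal sketch of a breadth-first walk over a folder of Markdown files. It is not the author's actual setup: the `crawl_wiki` function, the link pattern, and the assumption that pages link to each other with standard Markdown links like `[Ghibli](philosophy/ghibli.md)` are all hypothetical.

```python
import re
from collections import deque
from pathlib import Path

# Assumption: backlinks are plain Markdown links to other .md files,
# optionally with a #section suffix. The real wiki may use another syntax.
LINK_PATTERN = re.compile(r"\[[^\]]*\]\(([^)#\s]+\.md)(?:#[^)]*)?\)")


def crawl_wiki(root: Path, start: str = "index.md") -> list[str]:
    """Return every page reachable from `start`, in breadth-first order."""
    root = root.resolve()
    first = (root / start).resolve()
    queue = deque([first])
    seen = {first}
    order: list[str] = []
    while queue:
        page = queue.popleft()
        if not page.is_file():
            continue  # skip dangling links to pages that do not exist
        order.append(page.relative_to(root).as_posix())
        text = page.read_text(encoding="utf-8")
        for target in LINK_PATTERN.findall(text):
            # Links are resolved relative to the page that contains them.
            linked = (page.parent / target).resolve()
            # Only follow links that stay inside the wiki folder.
            if linked not in seen and root in linked.parents:
                seen.add(linked)
                queue.append(linked)
    return order
```

Calling `crawl_wiki(Path("wiki"))` on a hypothetical `wiki/` folder lists the pages an agent could reach from the catalog. Pages that nothing links to never show up, which is one reason consistent backlinking matters for this kind of knowledge base.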

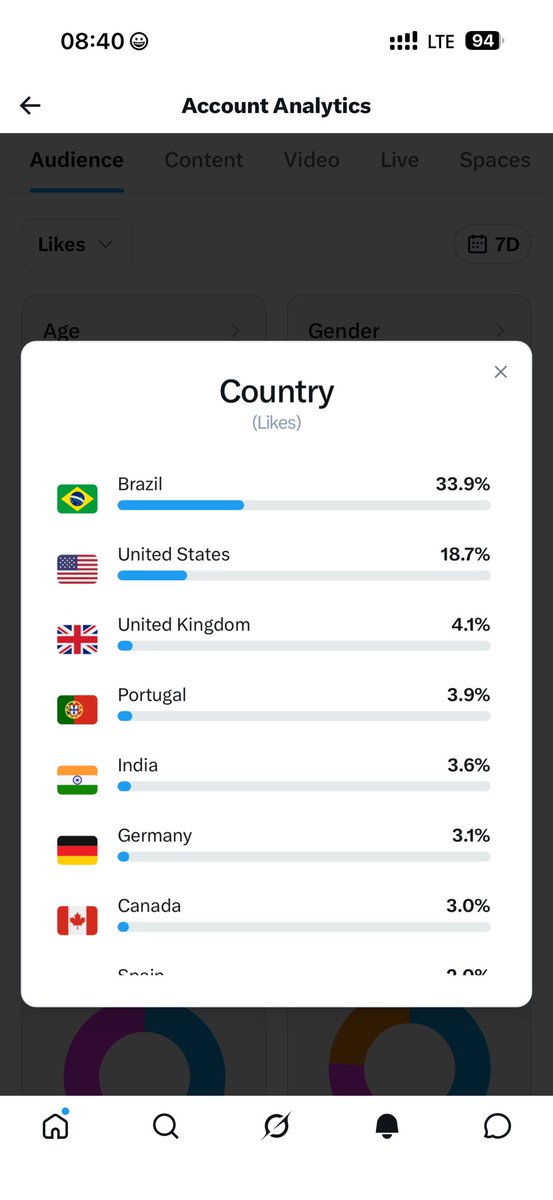

My worst fear is that @X will start to lock our content to the countries we are staying in. There was that announcement that revenue sharing would be tied more to views from your own country. As a person who has lived all over the world, my content was always for a global audience, and there's just no logic in locking it to, for example, Portugal, where I live, or Brazil and Thailand, where I travel (and everywhere else). I want to talk to everyone around the world! 🌎 🌍 🌏

I really need a WIN. The kind of WIN that changes the whole trajectory of your life forever.

New look at the Hogwarts uniforms in the HARRY POTTER TV series. I'm so in love with the purple tie 🫠

😂😂😂

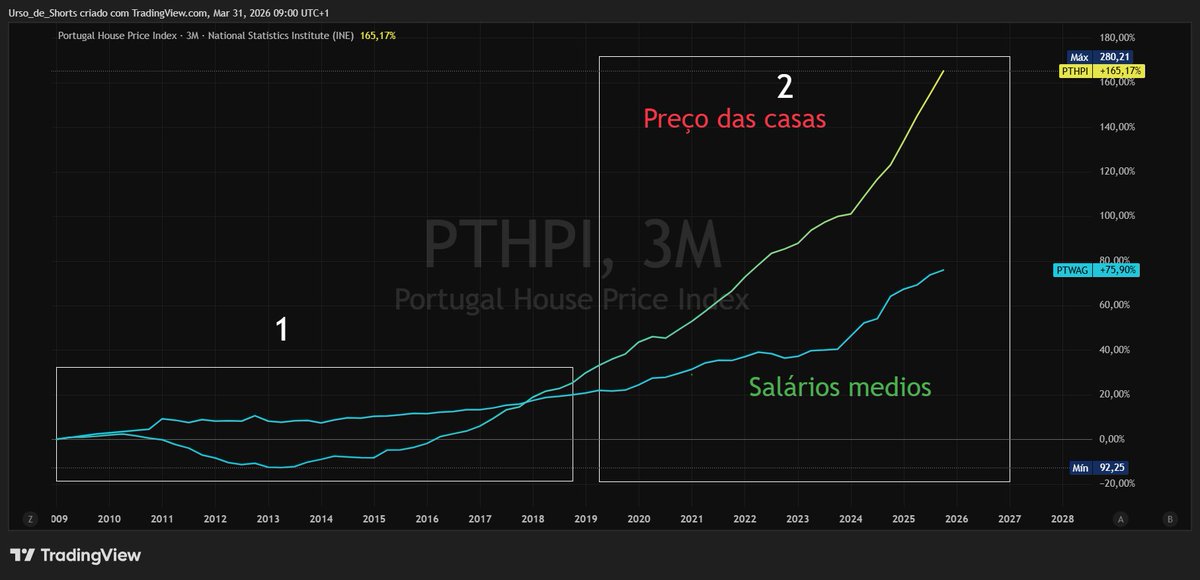

I'll add one more indicator: the home ownership rate as a share of the total population. If you look at it, Portugal has one of the highest rates in the world (76% of the population), alongside Italy and Spain (a cultural factor). China has not published data since 2018. The second chart lists the countries to make the analysis easier.