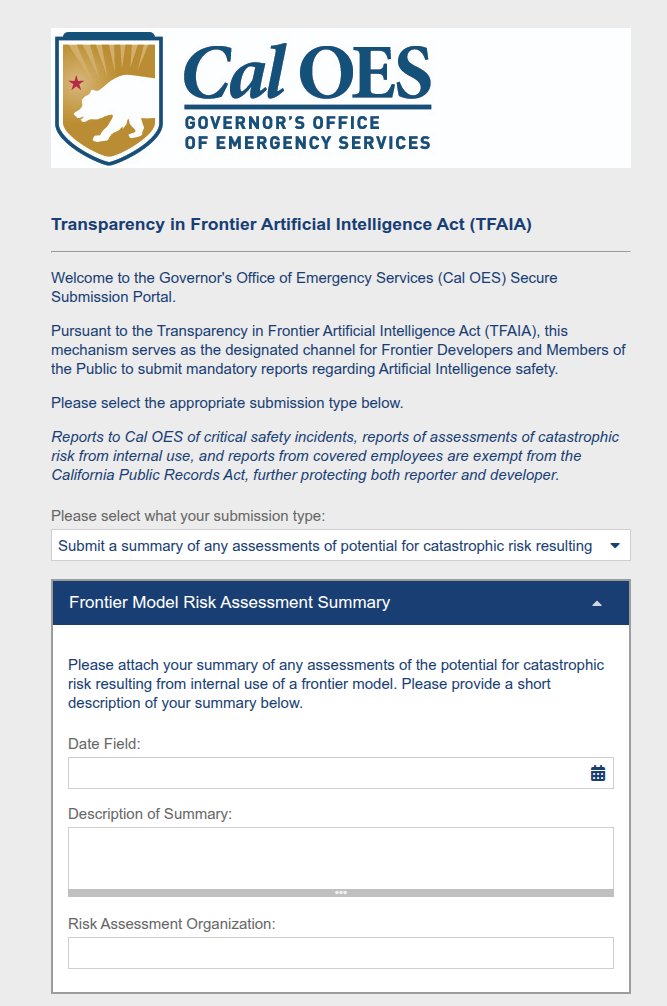

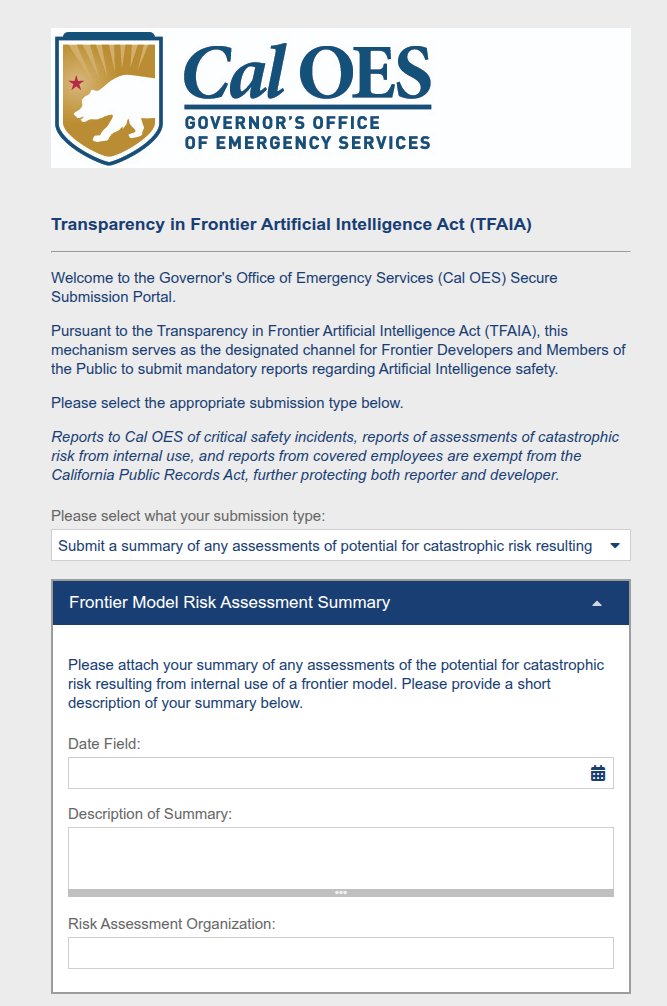

There's a new fellowship in California government focused on the implementation of SB 53! Apply to help set up a very important function in the most important state for frontier AI policy.

AI Safety

382 posts

@AI_Safety

How do we keep advanced artificial agents from forcefully intervening in the protocols by which we attempt to communicate what they should accomplish?

I wasn't too shocked when an anon reply guy started pestering me + other orgs/journalists on X. Normally I’d ignore it, but I looked closer when they began running paid ads. I did not expect to trace the account back to OpenAI’s political machine! My latest for @TheMidasProj:

AI and robotics are going to bring cataclysmic changes to our society. Sadly, Congress has done virtually nothing. AI must work for working families, not the billionaires. Today, I’m introducing a moratorium on new data centers until we protect working people.

The United States fires a 15-kiloton nuclear artillery shell, 1953

The future is not automatic. Tickets are now on sale for THE AI DOC: OR HOW I BECAME AN APOCALOPTIMIST, only in theaters March 27. 🎟️: focusfeatures.com/the-ai-doc-or-…

Scoop: White House readies executive order to weed out Anthropic trib.al/4xmqBxd

Marc Andreessen is lying about a private conversation in which Biden operatives told him they intended to classify AI research because, separately, YC was funding a lot of AI companies. wtf kind of logic is this?

I literally just dislike thanatos I have no other politics

I endorse the top-level post in this thread. The Anthropic RSP changes are an attempt to work out what kinds of firm commitments have the most leverage in an environment that's less promising than we'd expected for policy and coordination.