🎱 BitcoinBananaBY

4.2K posts

@BitcoinBananaBY

GME x BBBY x CYDY x HOC to Uranus. DD for stuff. Tweets, Likes or Retweets are only personal opinions, not financial advice, nor am I a financial advisor.

Joined April 2023

2.4K Following, 697 Followers

🎱 BitcoinBananaBY retweeted

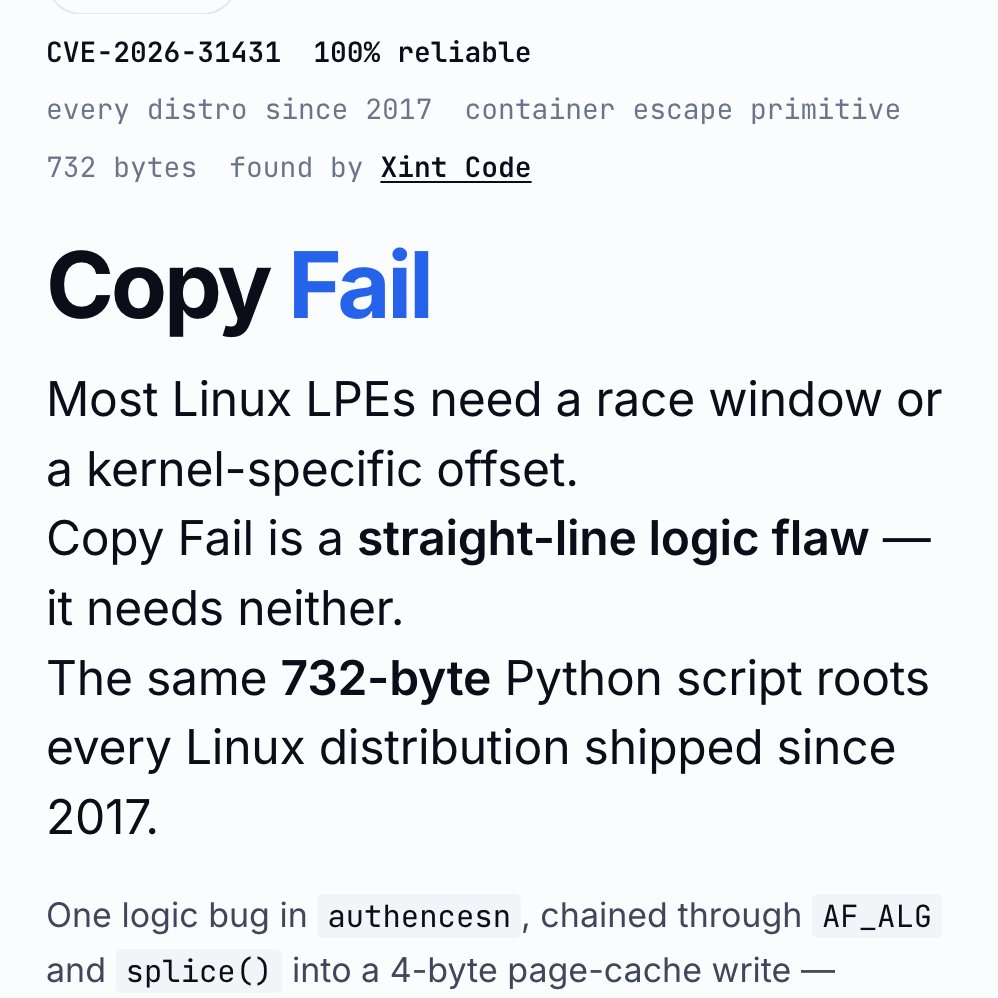

A newly discovered security vulnerability known as Copy Fail, or CVE-2026-31431, has been disclosed in the Linux kernel.

It affects virtually every major Linux distribution released since 2017.

The flaw sits in the kernel’s cryptographic subsystem and stems from a logic error introduced back in 2017:

>It allows any local user without special privileges to escalate directly to root.

>The exploit is unusually simple: a short Python script can reliably achieve this by modifying data only in the system’s memory cache rather than on disk.

>In practice, an attacker can target any readable file, such as a setuid-root binary like sudo or su, and alter it only in RAM.

>The change is invisible to file integrity monitors and leaves no trace on the hard drive.

>The same technique also works from inside containers, potentially allowing an escape from Docker, Kubernetes, or similar environments to compromise the host server.

>This makes Copy Fail both stealthy and highly portable across systems.

Patches have already begun rolling out from major distributors. System administrators should apply the latest kernel updates and reboot as soon as possible.

@VictorTaelin Something like Biome + use of an agent that enforces that guardrail. Like opencode-swarm does.
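
One way to read this suggestion: instead of hoping the agent remembers a rule, a check rejects the violation after the fact. Below is a minimal sketch, assuming a hypothetical rule that bans `BigInt` in JavaScript sources. The name `find_bigint_uses` and the regex are illustrative only and are not Biome's or opencode-swarm's API; a real linter would inspect the syntax tree rather than raw text.

```python
import re
from typing import List, Tuple

# Hypothetical rule: flag BigInt(...) calls and BigInt literals such as 123n.
# This is a text-level approximation; it can misfire inside strings or comments.
BIGINT_PATTERN = re.compile(r"\bBigInt\s*\(|\b\d+n\b")


def find_bigint_uses(source: str) -> List[Tuple[int, str]]:
    """Return (line_number, line_text) for every line that matches the rule."""
    hits = []
    for number, line in enumerate(source.splitlines(), start=1):
        if BIGINT_PATTERN.search(line):
            hits.append((number, line.strip()))
    return hits


if __name__ == "__main__":
    sample = "const a = 1 + 2;\nconst b = BigInt(10);\nconst c = 42n;\n"
    for number, text in find_bigint_uses(sample):
        print(f"line {number}: BigInt is not allowed here: {text}")
```

An agent loop could run a check like this on every diff and feed the hits back as an error, so the rule does not have to sit in the prompt.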

again, suppose you have some bit of knowledge that is mandatory for an agent to operate well in your domain. ex:

> using BigInt in this repo is bad for

you have two options:

Option 1: you make that directly visible (AGENTS.md)

this DOES work if the Agent is good enough. the problem is that it may actually be complex, like, 1k tokens worth. so, accumulate enough of these and you easily have 500k tokens of mandatory domain knowledge. including that in any model's context will immediately downgrade it into GPT-2, and cost a fortune

Option 2: you make that SEARCHABLE (RAG, RLMs, etc.)

the problem is that the AI cannot magically guess when it needs that bit of knowledge. it will not stop writing some JS function and think:

"wait perhaps there is some part of the domain that tells me that BigInts are bad and I should start looking for it?"

it will just use BigInts.

it won't OCCUR to it that there is something to be searched

so:

- make visible: too long to fit

- make searchable: it can't guess
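
The arithmetic behind the "too long to fit" side can be sketched as follows. The 1k tokens per rule and the 500k total are the figures from the post, and the 500 rules follow from them. The 200k-token context window is an assumed value for illustration, not a property of any specific model.

```python
def mandatory_tokens(rule_count: int, tokens_per_rule: int) -> int:
    """Total tokens needed to keep every rule visible in the prompt."""
    return rule_count * tokens_per_rule


def fits_in_context(total_tokens: int, context_window: int) -> bool:
    """True if the rules alone fit, ignoring the code and the conversation."""
    return total_tokens <= context_window


if __name__ == "__main__":
    total = mandatory_tokens(rule_count=500, tokens_per_rule=1_000)
    print(total)  # 500000
    print(fits_in_context(total, context_window=200_000))  # False (assumed window)
```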

that's why I think nightly fine tuning as a product is the only way forward, as it allows you to extend a model with domain knowledge without causing context rot

why nobody is doing this seriously is beyond me. it might be that for whatever reason this wouldn't be practical, but I suspect the real reason is nobody is seriously considering it

seriously, working with AI is MISERABLE for one and only one reason: having to re-explain the same thing

"oh yeah this new session obviously doesn't know what proper case trees are, so let me explain it for the 5000th time in my life"

I'm tired

AGENTS.md doesn't solve this because it is impossible to fit the entire domain knowledge without nuking the context - it would be 1m+ tokens worth

RAGs don't solve this, the agent won't search unknown unknowns

SKILLs don't solve this unless I keep like a collection of 1750 skills with specific cuts of domain knowledge for each possible subset of my domain that I might need in a given chat, but that's a lot of manual work

recursive LLMs or whatever don't solve this for the same reason, you can't dump a domain book and expect the AGENT will magically guess that it is supposed to search for a specific bit of knowledge. unknown unknowns

fine tuning doesn't solve this (OSS models suck and OpenAI / Anthropic gave up on user fine tuning)

I honestly think a good product around fine tuning on your domain would be a major hit and an underdog lab should take this opportunity

🎱 BitcoinBananaBY retweeted

🚨🚨🚨🚨Computershare just opened a new lane.

$GME got Computershare as transfer agent, so every shareholder should at least notice headline volume on this one.

Wall Street heard that cough. 😏

Ownership, transferability, ledger visibility, optional form selection.

Old plumbing hates clear water. Some business models need fog to eat.

If holders can move between traditional form plus token form, custody game changes.

If liquidity can touch ATS routes or market makers, middlemen start checking mirrors.

$GME crowd already understands direct ownership matters.

Most folks reading headline saw tech news.

🧠Smart money saw cap table evolution.

$GME holders saw a possible future tool sitting on table.

Quiet headlines sometimes carry brass knuckles. 🍿

‼️🚨 BREAKING: An AI found a Linux kernel zero-day that roots every distribution since 2017. The exploit fits in 732 bytes of Python. Patch your kernel ASAP.

The vulnerability is CVE-2026-31431, nicknamed "Copy Fail," disclosed today by Theori. It has been sitting quietly in the Linux kernel for nine years.

Most Linux privilege-escalation bugs are picky. They need a precise timing window (a "race"), or specific kernel addresses leaked from somewhere, or careful tuning per distribution. Copy Fail needs none of that. It is a straight-line logic mistake that works on the first try, every time, on every mainstream Linux box.

The attacker just needs a normal user account on the machine. From there, the script asks the kernel to do some encryption work, abuses how that work is wired up, and ends up writing 4 bytes into a memory area called the "page cache" (Linux's high-speed copy of files in RAM). Those 4 bytes can be aimed at any program the system trusts, like /usr/bin/su, the shortcut to becoming root.

Result: the next time anyone runs that program, it lets the attacker in as root.

What should worry you most: the corruption never touches the file on disk. It only exists in Linux's in-memory copy of that file. If you imaged the hard drive afterwards, the on-disk file would match the official package hash exactly. Reboot the machine, or just put it under memory pressure (any normal system load that needs the RAM), and the cached copy reloads fresh from disk, so the evidence disappears.

Containers do not help either. The page cache is shared across the whole host, so a process inside a container can use this bug to compromise the underlying server and reach into other tenants.

The original sin was a 2017 "in-place optimization" in a kernel crypto module called algif_aead. It was meant to make encryption slightly faster. The change broke a critical safety assumption, and nobody noticed for nine years. That bug then rode every kernel update from 2017 to today.

This vulnerability affects the following:

🔴 Shared servers (dev boxes, jump hosts, build servers): any user becomes root

🔴 Kubernetes and container clusters: one compromised pod escapes to the host

🔴 CI runners (GitHub Actions, GitLab, Jenkins): a malicious pull request becomes root on the runner

🔴 Cloud platforms running user code (notebooks, agent sandboxes, serverless functions): a tenant becomes host root

Timeline:

🔴 March 23, 2026: reported to the Linux kernel security team

🔴 April 1: patch committed to mainline (commit a664bf3d603d)

🔴 April 22: CVE assigned

🔴 April 29: public disclosure

Mitigation: update your kernel to a build that includes mainline commit a664bf3d603d. If you cannot patch immediately, turn off the vulnerable module:

echo "install algif_aead /bin/false" > /etc/modprobe.d/disable-algif.conf

rmmod algif_aead 2>/dev/null || true

For environments that run untrusted code (containers, sandboxes, CI runners), block access to the kernel's AF_ALG crypto interface entirely, even after patching. Almost nothing legitimate needs it, and blocking it shuts the door on this whole class of bug...
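
To check whether the mitigation above took effect, one can look at two things: whether `algif_aead` appears among the loaded modules, and whether a process can still open an AF_ALG socket. Below is a minimal sketch, assuming a Linux host with a readable `/proc/modules`; the helper names are illustrative. It only probes availability and does not test for the vulnerability itself. A module compiled into the kernel does not appear in `/proc/modules`, and opening the socket may itself cause the kernel to load the base AF_ALG support, so treat the output as a hint rather than proof.

```python
import socket


def module_loaded(name: str, proc_modules_text: str) -> bool:
    """Check a /proc/modules dump for a module; the name is the first field."""
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields and fields[0] == name:
            return True
    return False


def af_alg_reachable() -> bool:
    """Try to open an AF_ALG socket; False if the platform or policy blocks it."""
    family = getattr(socket, "AF_ALG", None)  # absent on non-Linux builds
    if family is None:
        return False
    try:
        sock = socket.socket(family, socket.SOCK_SEQPACKET, 0)
    except OSError:
        return False
    sock.close()
    return True


if __name__ == "__main__":
    try:
        with open("/proc/modules", encoding="ascii") as handle:
            loaded = module_loaded("algif_aead", handle.read())
    except OSError:
        loaded = False  # no /proc/modules here, e.g. not Linux
    print("algif_aead loaded:", loaded)
    print("AF_ALG reachable:", af_alg_reachable())
```

In a sandbox that blocks AF_ALG, `af_alg_reachable()` is expected to return `False`.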

🎱 BitcoinBananaBY retweeted

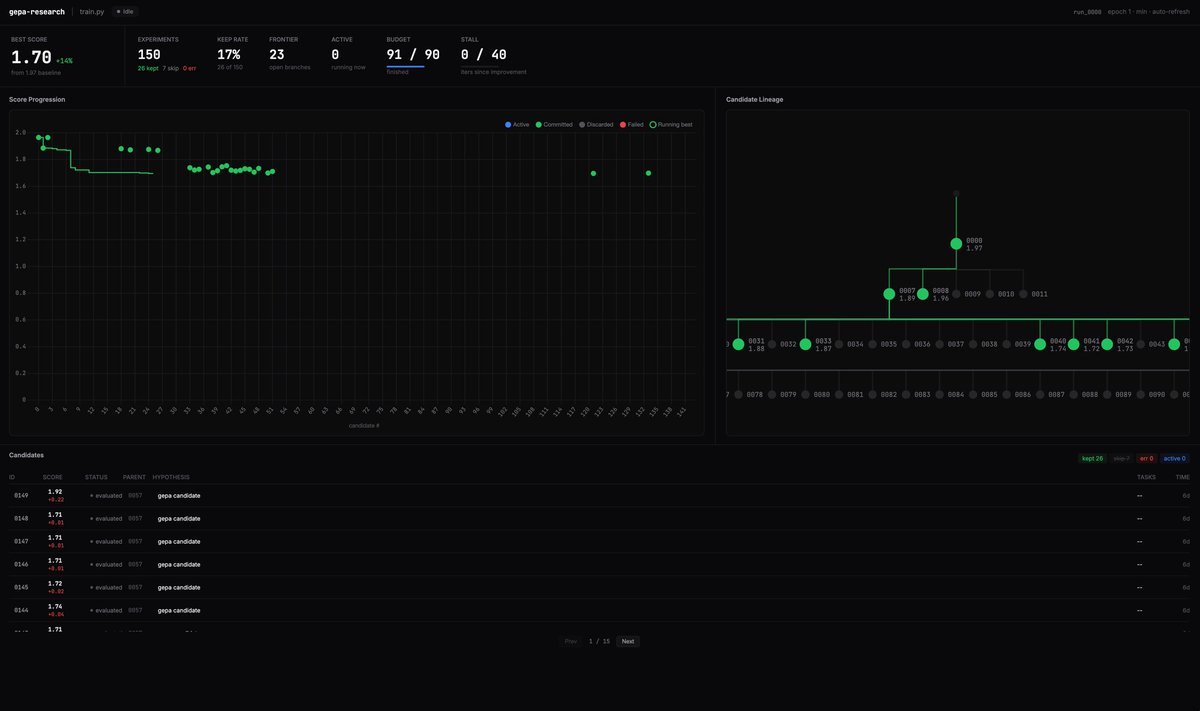

Excited to share that my ICLR 2026 Oral Talk for GEPA is available on YouTube.

I go deeper into why GEPA works better than prior optimization techniques, along with touching on many aspects of GEPA!

youtu.be/HbGah-uP1fI

Lakshya A Agrawal @LakshyAAAgrawal

Thrilled to present GEPA as an Oral Talk and Poster at ICLR 2026 this Friday in Rio! 🇧🇷

Apr 24

Oral Session 3A (Agents), 10:30 AM BRT, Amphitheater

Poster Session 4, 3:15 PM, Pavilion 3

x.com/LakshyAAAgrawa…

Let's recap what's happened since we released GEPA last year 🧵

🎱 BitcoinBananaBY retweeted

Closed labs hide model sizes. They can't hide what their models know, and what a model knows is an indicator of how big it is.

Reasoning compresses. Factual knowledge doesn't. So you can size a frontier model from black-box API calls alone, and across releases you can literally watch a single fact arrive in the parameters over time.

For three years, my friends Jiyan He and Zihan Zheng have been asking frontier LLMs the same question: "what do you know about USTC Hackergame?", a CTF contest. May 2024: GPT-4o invented fake titles. Feb 2025: Claude 3.7 Sonnet listed 19 verified 2023 challenges. By April 2026, frontier models recall specific challenges across consecutive years.

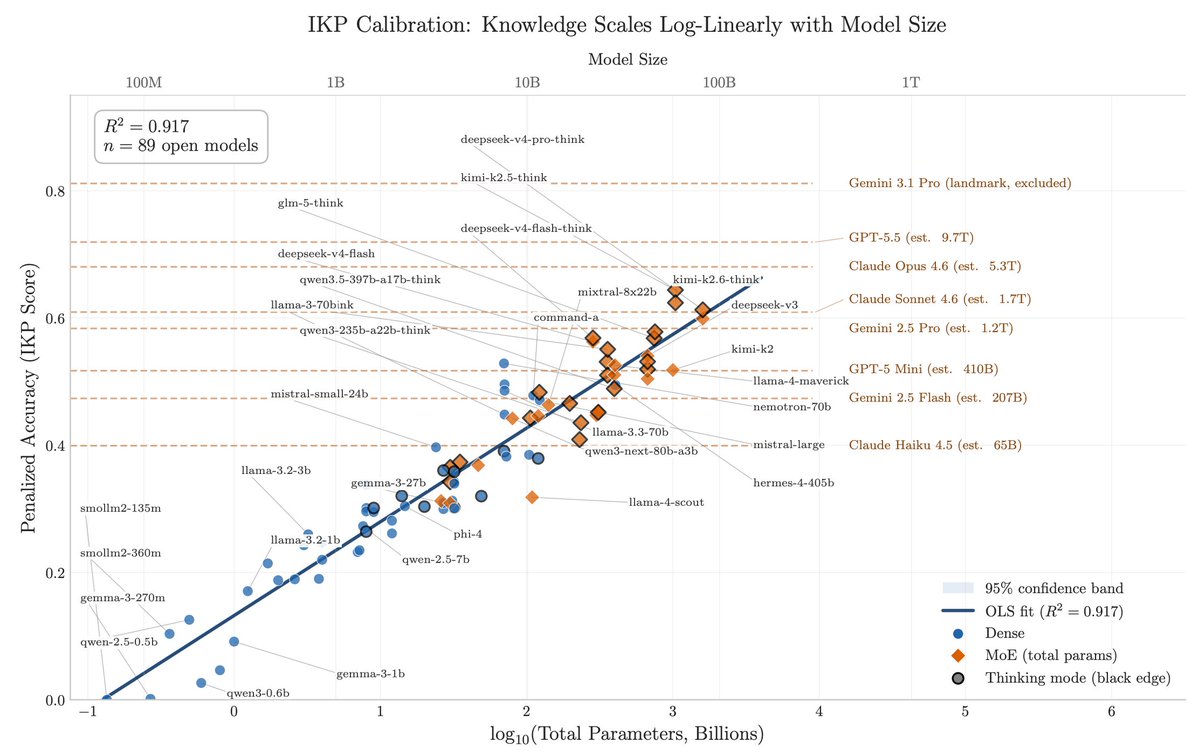

After DeepSeek-V4 dropped, I instructed my agent to spend four days autonomously turning that habit into Incompressible Knowledge Probes (IKP) — 1,400 questions, 7 tiers of obscurity, 188 models, 27 vendors. Three findings:

1/ You can approximately size any black-box LLM from factual accuracy alone. Penalized accuracy is log-linear in log(params), R² = 0.917 on 89 open-weight models from 135M to 1.6T params. Project closed APIs onto the curve → GPT-5.5 ~9T, Claude Opus 4.7 ~4T, GPT-5.4 ~2.2T, Claude Sonnet 4.6 ~1.7T, Gemini 2.5 Pro ~1.2T (90% CI: 0.3-3x size).

2/ Citation count and h-index don't predict whether a frontier model recognizes a researcher. Two researchers with similar citation profiles get very different responses. Models memorize impact — work that shaped a field, not many incremental papers.

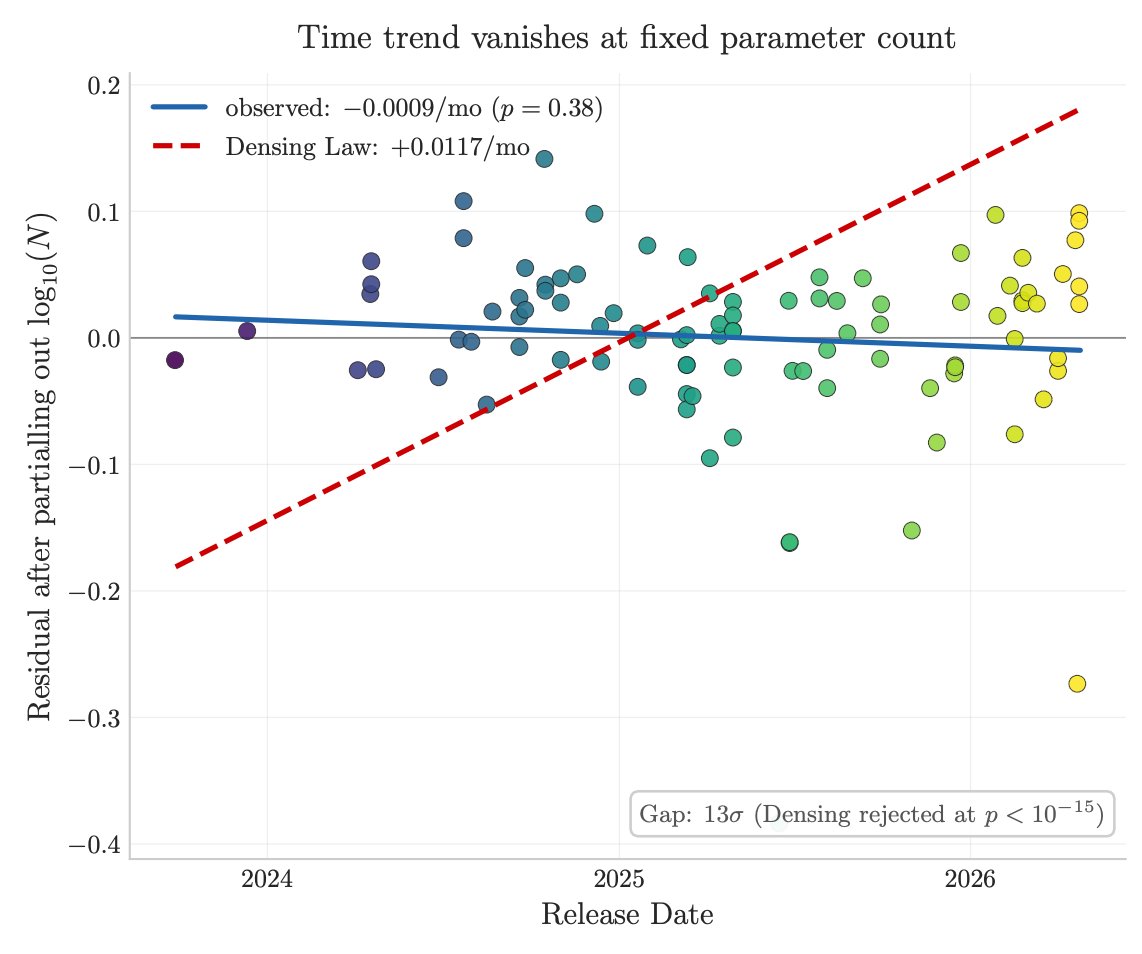

3/ Factual capacity doesn't compress over time. Across 96 open-weight models over 3 years, the IKP time coefficient is statistically zero, rejecting the Densing-Law prediction of +0.0117/month at p<10⁻¹⁵. Reasoning benchmarks saturate; factual capacity keeps scaling with parameters.
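
The sizing idea in finding 1 can be sketched as a straight-line fit of accuracy against log10(parameter count), which is then inverted to read a size off an observed accuracy. Below is a minimal sketch, assuming plain least squares and made-up calibration points. The numbers are synthetic placeholders, not IKP data, and the paper's penalized-accuracy metric and fitting procedure may differ.

```python
import math
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def estimate_params(accuracy: float, slope: float, intercept: float) -> float:
    """Invert the fitted line to get a parameter-count estimate."""
    return 10 ** ((accuracy - intercept) / slope)


if __name__ == "__main__":
    # Synthetic (parameter count, accuracy) pairs, for illustration only.
    calibration = [(1e9, 0.20), (1e10, 0.30), (1e11, 0.40), (1e12, 0.50)]
    xs = [math.log10(params) for params, _ in calibration]
    ys = [acc for _, acc in calibration]
    slope, intercept = fit_line(xs, ys)
    size = estimate_params(0.45, slope, intercept)
    print(f"estimated size at accuracy 0.45: {size:.3g} parameters")
```

Because the fit is on a log scale, a small error in accuracy turns into a multiplicative error in size, which is consistent with the wide 0.3-3x interval quoted above.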

Website: 01.me/research/ikp/

Paper: arxiv.org/pdf/2604.24827

🎱 BitcoinBananaBY retweeted

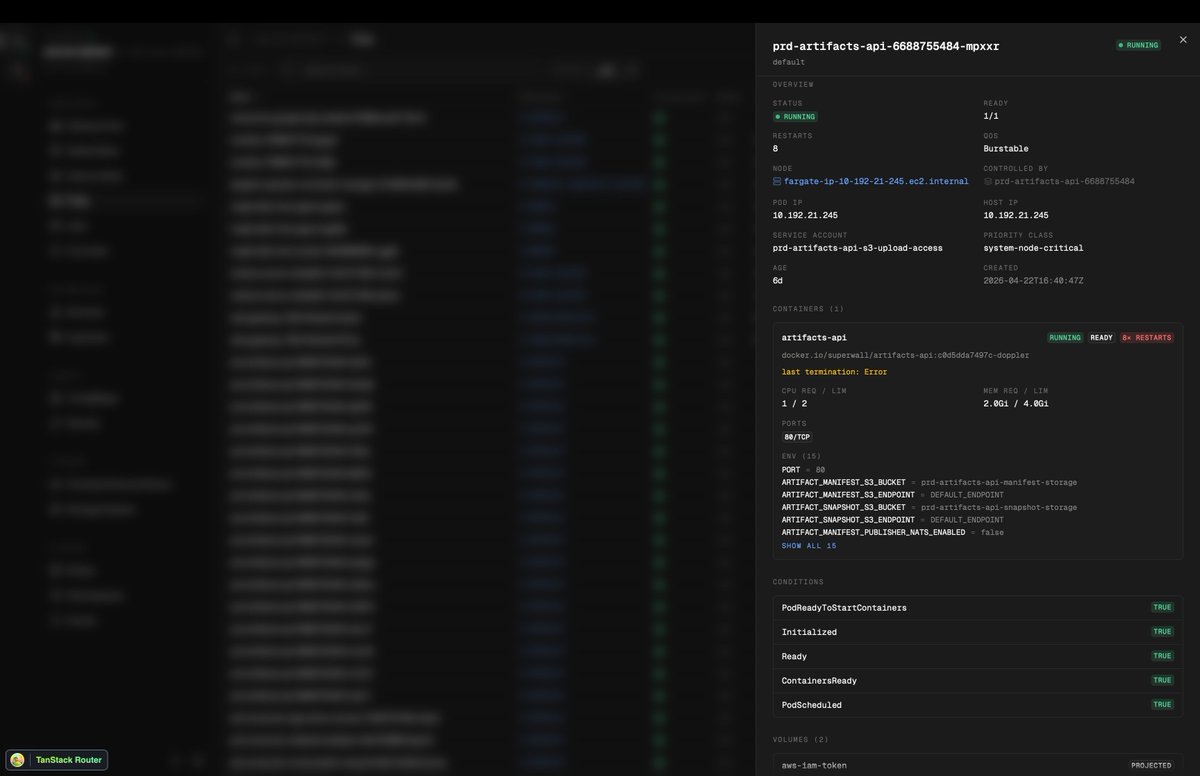

Been building something new, unsure if it's going to be standalone or part of Maple somehow.

Introducing Kubly, your local desktop Kubernetes companion.

See all your clusters, see all deployments, watch realtime pod logs, watch deployments, everything realtime and offline.

Built with @tan_stack DB, @ElectricSQL's durable streams for streaming the Kubernetes state and logs to the UI, and ofc @EffectTS_ for everything in between.

Coming very soon

🎱 BitcoinBananaBY retweeted

Introducing Lensflare – an open-source macOS development observability stack for humans and AI agents.

AI agents can see your code, but not what actually happens during runtime. With AI and libraries like Effect, it has never been easier to add telemetry to your app, but the tools for working with traces locally have mostly stayed the same.

Lensflare changes that by providing a fast, local-first app that listens for telemetry in real time and lets you dig deep to understand what’s happening and where. What’s more, it extends the same capabilities to Claude or Codex via a built-in MCP server.

Try it out now at lensflare.dev

🎱 BitcoinBananaBY retweeted

arxiv.org/pdf/1703.03864

arxiv.org/pdf/2509.24372

arxiv.org/pdf/2511.16652

@VictorTaelin

Scalable ES > RL

🎱 BitcoinBananaBY retweeted

Math, algorithms, infinity.

Let's imagine we're observing the workings of a futuristic AI machine operating at various scales in a mysterious multidimensional hierarchy.

By Etienne Jacob, @etiennejcb, Source: bleuje.com, used by permission

@VictorTaelin Does superposition and using multiple workers (browser) also work?
