Pinned Tweet

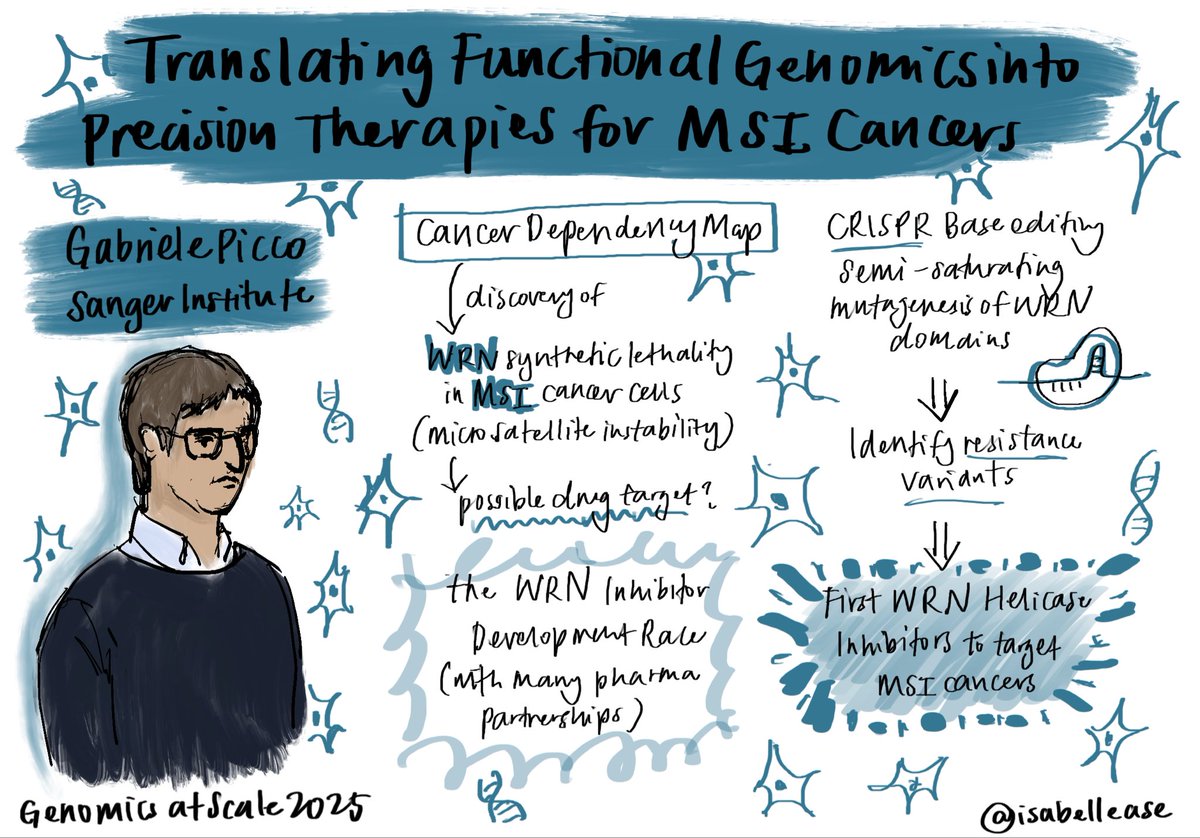

My first day at @sangerinstitute was rainy but very exciting nonetheless.

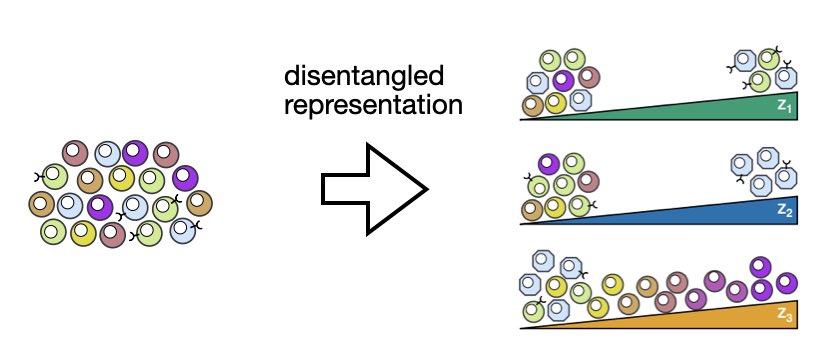

As a @Cambridge_Uni PhD student I will be venturing into other genomics niches, even away from human medicine. My first project in the Tree of Life is extremely interesting and inspiring!
