Elon Orbit

@Elon_Orbit

"In space no one can hear you scream"

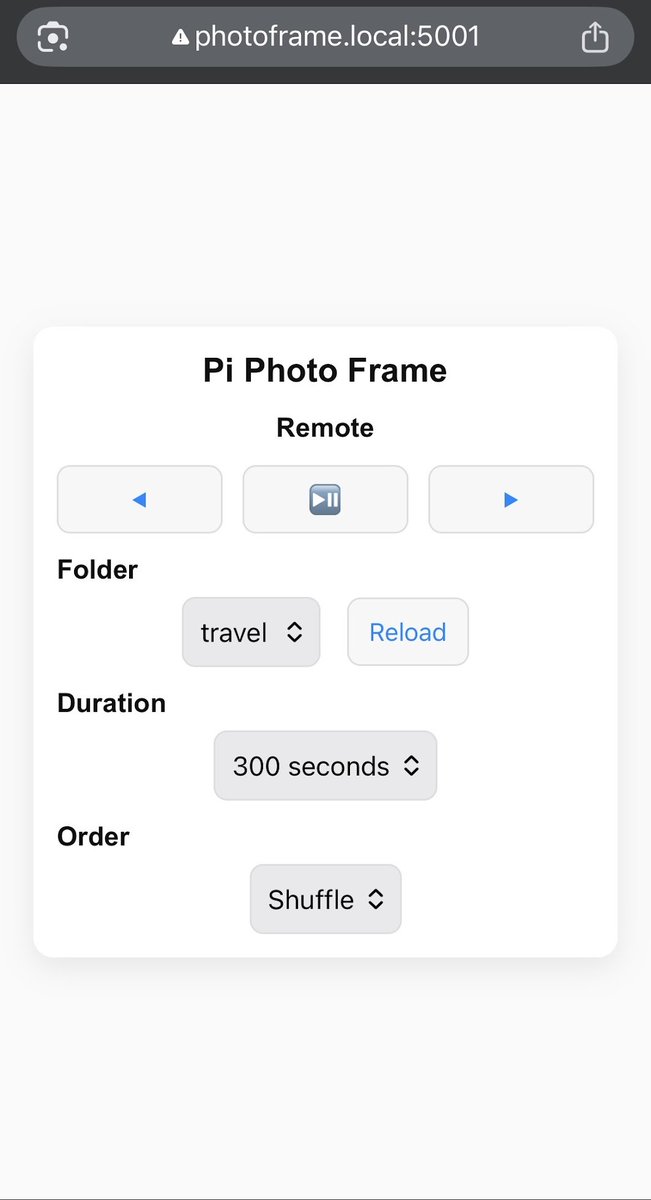

I implemented Google's TurboQuant paper (ICLR 2026) in llama.cpp with Metal kernels for Apple Silicon. 4.9× KV cache compression. Working end-to-end on M5 Max with Qwen 3.5 35B MoE and Qwopus v2 27B. Speed needs work (unoptimized shader), compression target met. Repo: github.com/TheTom/turboqu… **Note**: as you'll see from the git history, when I say "I" it's in conjunction with Claude Code and Codex. Just lots of steering and babysitting.

introducing Annotations in Krea Edit. now you can edit images with multiple prompts at once. try it now!

I got $150 in Milady.AI, and $150 in @milady_bsc for whoever has the best #MiladyAI short video. 10 seconds long at least. Preferably using one or all of our characters. One's for the community, one's for the App. Thanks for using our shit. Submit on this post. Submissions end Thursday 12:00 PM EST <--- Byb8WojwPWthyMm8iwtcd9CQhcZjnjQmhTRi5GN7BAGS or 0xc20E45E49e0E79f0fC81E71F05fD2772d6587777

Incredible ->

Yes, I’m winning 🏆 I have a new branch that I managed to rebuild from scratch with a new approach.
> +90% prompt and decode speed
> 4x compression
> Works with full attention models too
> Lossless perf on NIAH

I’m just travelling and doing meetings, that’s why the public updates are slower.

Quantization can make an LLM 4x smaller and 2x faster, with barely any quality loss. But what *is* it? @samwhoo crafted a beautiful interactive essay explaining it from first principles, aimed at coders, not mathematicians. ngrok.com/blog/quantizat…
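For a rough feel of where a "4x smaller" figure can come from, here is a minimal sketch of symmetric int8 quantization in plain Python. It assumes float32 weights stored as int8 (4 bytes down to 1 byte per value); the post does not say which formats it has in mind, and the function names and sample weights below are made up for illustration, not taken from the linked essay.

```python
import struct

def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # one float shared by all values
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize_int8(q, scale):
    """Recover approximate floats from the stored integers."""
    return [v * scale for v in q]

# Hypothetical weights, chosen only for the demo.
weights = [0.12, -0.98, 0.45, 0.03, -0.27, 0.81, -0.64, 0.5]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

fp32_bytes = len(struct.pack(f"{len(weights)}f", *weights))  # 4 bytes per value
int8_bytes = len(struct.pack(f"{len(q)}b", *q))              # 1 byte per value
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("size ratio:", fp32_bytes / int8_bytes)
print("max round-trip error:", max_error)
```

The rounding error per value is bounded by half the scale, which is why quality loss can stay small when the weights are not dominated by a few outliers. Real schemes typically quantize in small blocks with one scale per block rather than one scale per tensor.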

@NVIDIAAIDev x @Apple x @exolabs x @Kimi_Moonshot Beast Cluster 🔥🦾⚔️
- 2x DGX Spark 128 GB
- 1x Mac Studio 512 GB
- Exolab Magic 🔥